Olusiji Medaiyese

Magnification-Invariant Image Classification via Domain Generalization and Stable Sparse Embedding Signatures

Apr 28, 2026

Abstract: Magnification shift is a major obstacle to robust histopathology classification, because models trained at one imaging scale often generalize poorly to another. We evaluated this problem on the BreaKHis dataset using a strict patient-disjoint leave-one-magnification-out protocol, comparing a supervised baseline, the same baseline augmented with DCGAN-generated patches, and a gradient-reversal domain-general model designed to preserve discriminative information while suppressing magnification-specific variation. Across held-out magnifications, the domain-general model achieved the strongest overall discrimination, with its clearest gain when 200X was held out. By contrast, GAN augmentation produced inconsistent effects, improving some folds but degrading others, particularly at 400X. The domain-general model also yielded the lowest Brier score (0.063 versus 0.089 for the baseline). Sparse embedding analysis further revealed that domain-general training reduced average signature size more than three-fold (306 versus 1,074 dimensions) while preserving equivalent predictive performance (AUC: 0.967 vs 0.965; F1: 0.930 vs 0.931). It also increased cross-fold signature reproducibility from near-zero Jaccard overlap in the baseline to 0.99 between the 100X and 200X folds. These findings show that calibrated, compact, and transferable representations can be learned without added architectural complexity, with clear implications for the reliable deployment of computational pathology models across heterogeneous acquisition settings.
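The abstract reports calibration as a Brier score and signature reproducibility as a Jaccard overlap. As a reading aid, here is a minimal Python sketch of the standard definitions of both metrics. The function names `brier_score` and `jaccard_overlap` are hypothetical, the inputs are toy values, and treating a signature as a set of embedding-dimension indices is an assumption; the abstract does not describe the authors' implementation.

```python
def brier_score(probs, labels):
    """Mean squared difference between predicted probabilities and 0/1 labels.

    Lower is better; 0.0 means every prediction was both confident and correct.
    """
    if len(probs) != len(labels) or not probs:
        raise ValueError("probs and labels must be non-empty and equally long")
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(probs)


def jaccard_overlap(signature_a, signature_b):
    """Size of the intersection divided by size of the union of two index sets.

    Assumption: a 'signature' is the set of embedding dimensions kept by a
    sparse model in one fold. Two empty signatures return 0.0 by convention.
    """
    a, b = set(signature_a), set(signature_b)
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


if __name__ == "__main__":
    # Toy predicted probabilities for the positive class and true labels.
    print(brier_score([0.9, 0.2, 0.7], [1, 0, 1]))
    # Toy signatures from two hypothetical folds.
    print(jaccard_overlap([3, 17, 42, 88], [3, 17, 42, 91]))
```

Under these definitions, a Jaccard overlap of 0.99 between two folds means the selected dimension sets are almost identical, while a value near zero means they share almost nothing.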

Wavelet Transform Analytics for RF-Based UAV Detection and Identification System Using Machine Learning

Feb 23, 2021

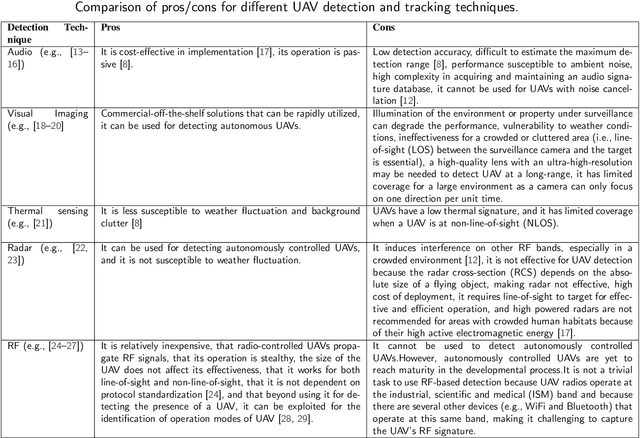

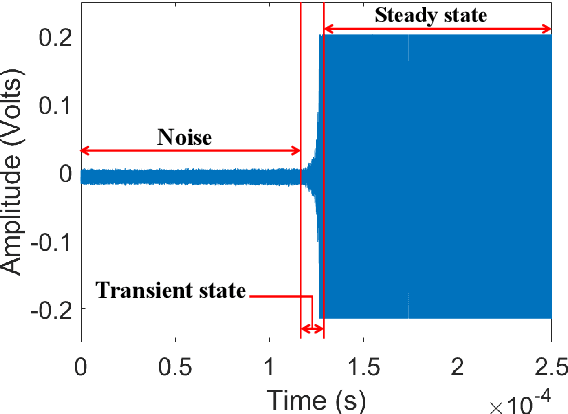

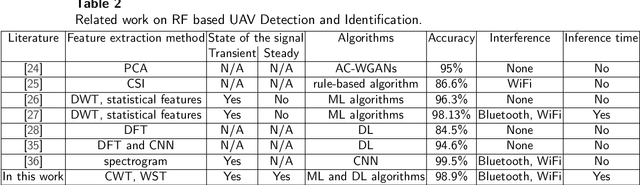

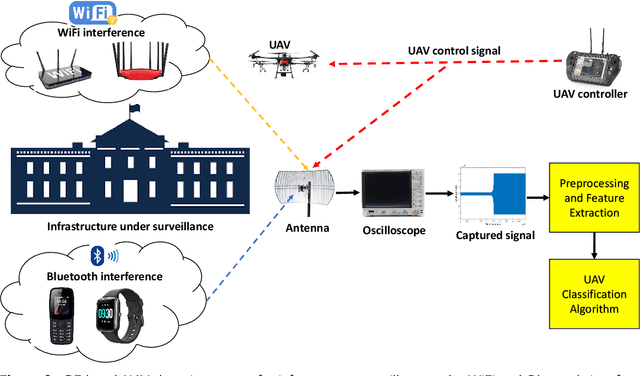

Abstract: In this work, we performed a thorough comparative analysis of a radio frequency (RF) based drone detection and identification (DDI) system under wireless interference, such as WiFi and Bluetooth, using machine learning algorithms and a pre-trained convolutional neural network called SqueezeNet as classifiers. In RF signal fingerprinting research, the transient and steady states of a signal can be used to extract a unique signature from it. By exploiting the RF control signals from unmanned aerial vehicles (UAVs) for DDI, we considered each state of the signals separately for feature extraction and compared their pros and cons for drone detection and identification. We extracted features from the signals using several categories of wavelet transforms (discrete wavelet transform, continuous wavelet transform, and wavelet scattering transform) and built different models from these features. We studied the performance of these models under different signal-to-noise ratio (SNR) levels. By using the wavelet scattering transform to extract signatures (scattergrams) from the steady state of the RF signals at 30 dB SNR, and using these scattergrams to train SqueezeNet, we achieved an accuracy of 98.9% at 10 dB SNR.
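To make the two recurring ideas in this abstract concrete (evaluating at a chosen SNR and extracting wavelet features), the following is a minimal Python sketch. It assumes additive white Gaussian noise and a single-level Haar discrete wavelet transform; the abstract specifies neither the noise model nor the wavelet family, and the function names `add_awgn` and `haar_dwt` are hypothetical.

```python
import math
import random


def add_awgn(signal, snr_db, rng=None):
    """Return the signal plus white Gaussian noise scaled to a target SNR in dB.

    Assumption: noise power = signal power / 10^(snr_db / 10).
    """
    if rng is None:
        rng = random.Random(0)  # fixed seed so the sketch is repeatable
    signal_power = sum(x * x for x in signal) / len(signal)
    noise_power = signal_power / (10 ** (snr_db / 10))
    sigma = math.sqrt(noise_power)
    return [x + rng.gauss(0.0, sigma) for x in signal]


def haar_dwt(signal):
    """One level of the orthonormal Haar DWT on an even-length sequence.

    Returns (approximation, detail) coefficient lists, each half as long.
    """
    if len(signal) % 2 != 0:
        raise ValueError("signal length must be even")
    scale = math.sqrt(2.0)
    approx = [(signal[i] + signal[i + 1]) / scale for i in range(0, len(signal), 2)]
    detail = [(signal[i] - signal[i + 1]) / scale for i in range(0, len(signal), 2)]
    return approx, detail


if __name__ == "__main__":
    # Toy sinusoid standing in for a captured RF segment.
    clean = [math.sin(2 * math.pi * 5 * n / 64) for n in range(64)]
    noisy = add_awgn(clean, snr_db=10)
    approx, detail = haar_dwt(noisy)
    print(len(approx), len(detail))
```

In practice a wavelet library would supply the continuous and scattering transforms named in the abstract; the Haar step here only illustrates how a signal is split into coarse and fine coefficients that can serve as features.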
