Oliver Giudice

Applied Research Team, IT dept., Banca d'Italia, Rome, Italy

In-Depth DCT Coefficient Distribution Analysis for First Quantization Estimation

Aug 07, 2020

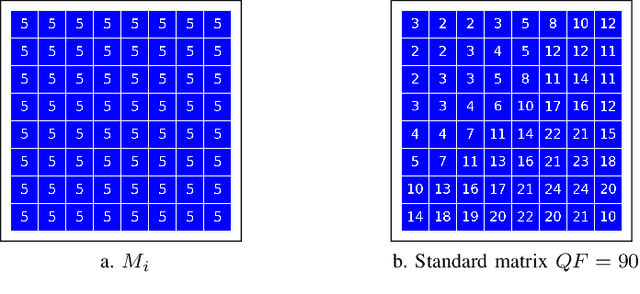

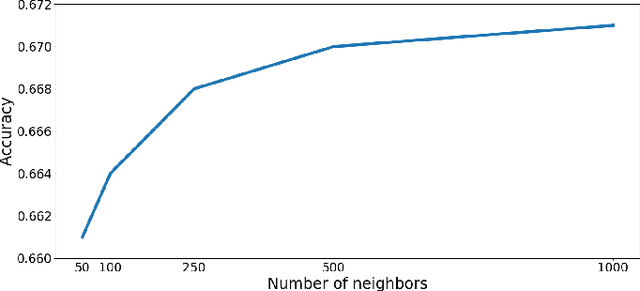

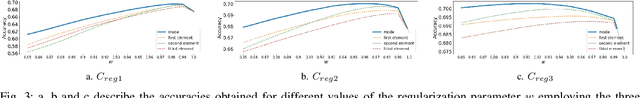

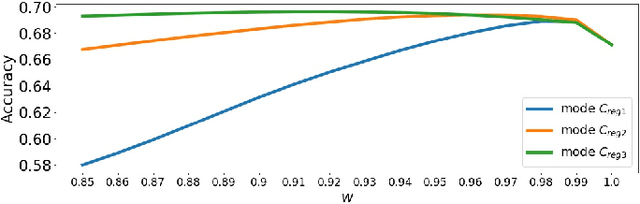

Abstract:The exploitation of traces in JPEG double compressed images is of utter importance for investigations. Properly exploiting such insights, First Quantization Estimation (FQE) could be performed in order to obtain source camera model identification (CMI) and therefore reconstruct the history of a digital image. In this paper, a method able to estimate the first quantization factors for JPEG double compressed images is presented, employing a mixed statistical and Machine Learning approach. The presented solution is demonstrated to work without any a-priori assumptions about the quantization matrices. Experimental results and comparisons with the state-of-the-art show the goodness of the proposed technique.

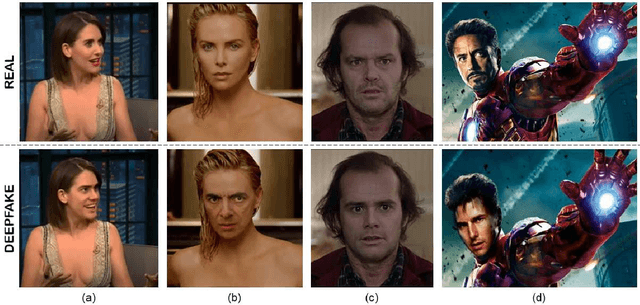

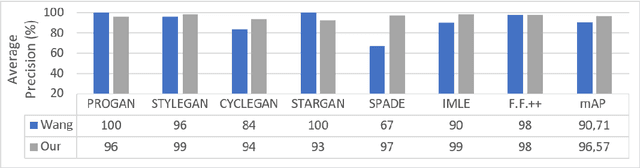

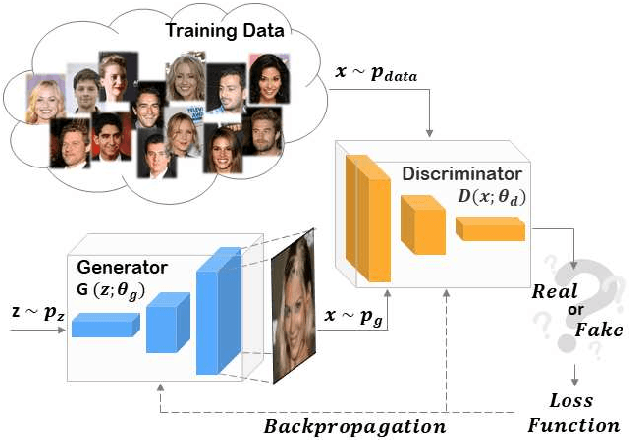

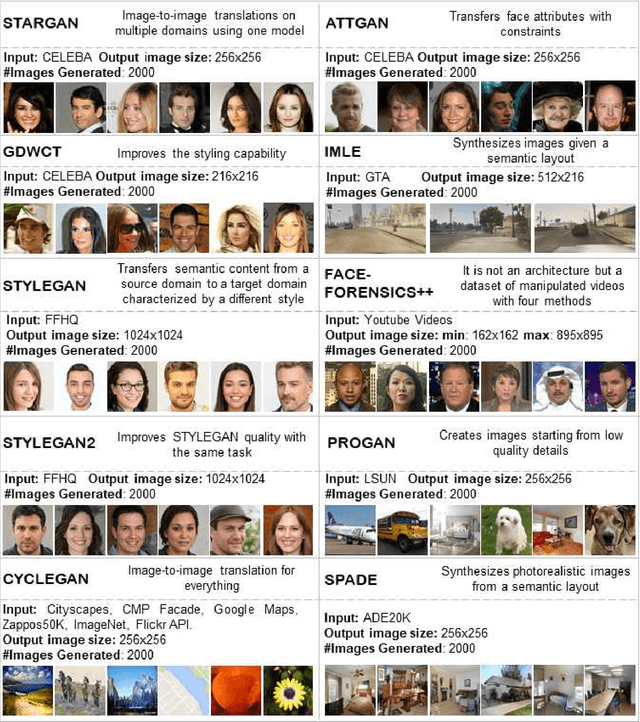

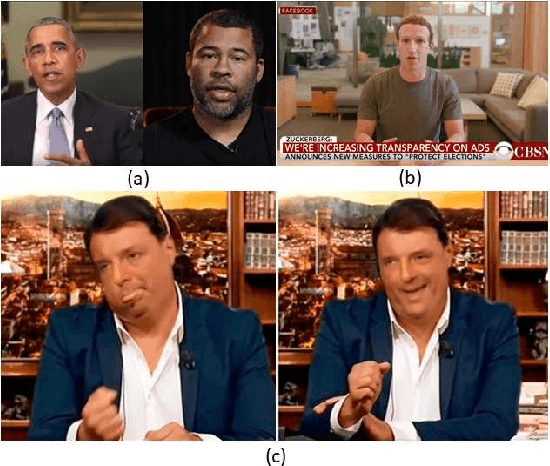

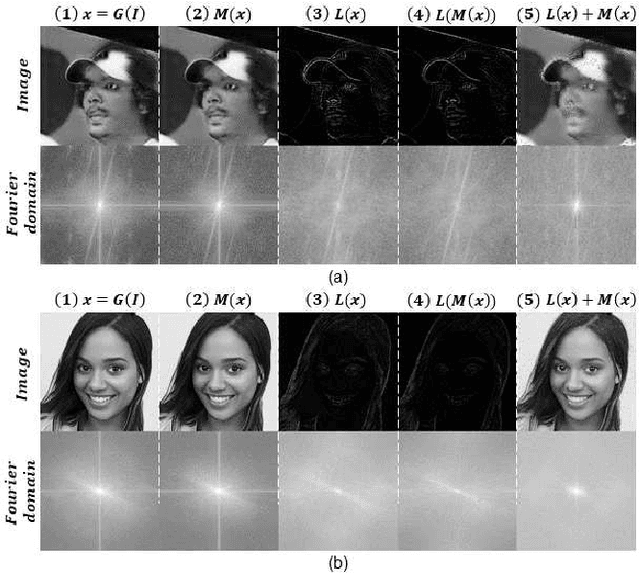

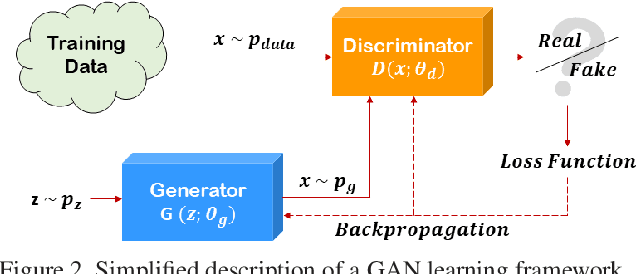

Fighting Deepfake by Exposing the Convolutional Traces on Images

Aug 07, 2020

Abstract:Advances in Artificial Intelligence and Image Processing are changing the way people interacts with digital images and video. Widespread mobile apps like FACEAPP make use of the most advanced Generative Adversarial Networks (GAN) to produce extreme transformations on human face photos such gender swap, aging, etc. The results are utterly realistic and extremely easy to be exploited even for non-experienced users. This kind of media object took the name of Deepfake and raised a new challenge in the multimedia forensics field: the Deepfake detection challenge. Indeed, discriminating a Deepfake from a real image could be a difficult task even for human eyes but recent works are trying to apply the same technology used for generating images for discriminating them with preliminary good results but with many limitations: employed Convolutional Neural Networks are not so robust, demonstrate to be specific to the context and tend to extract semantics from images. In this paper, a new approach aimed to extract a Deepfake fingerprint from images is proposed. The method is based on the Expectation-Maximization algorithm trained to detect and extract a fingerprint that represents the Convolutional Traces (CT) left by GANs during image generation. The CT demonstrates to have high discriminative power achieving better results than state-of-the-art in the Deepfake detection task also proving to be robust to different attacks. Achieving an overall classification accuracy of over 98%, considering Deepfakes from 10 different GAN architectures not only involved in images of faces, the CT demonstrates to be reliable and without any dependence on image semantic. Finally, tests carried out on Deepfakes generated by FACEAPP achieving 93% of accuracy in the fake detection task, demonstrated the effectiveness of the proposed technique on a real-case scenario.

Single architecture and multiple task deep neural network for altered fingerprint analysis

Jul 09, 2020

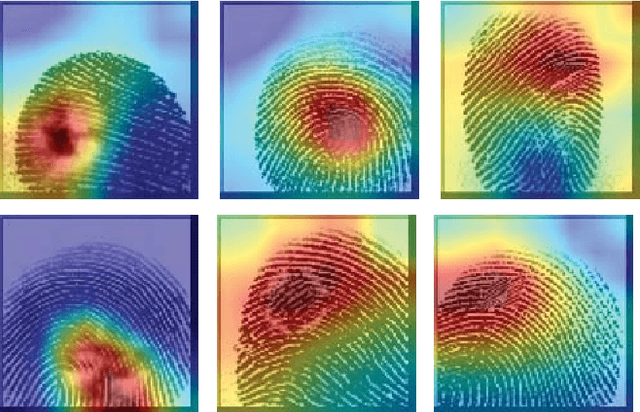

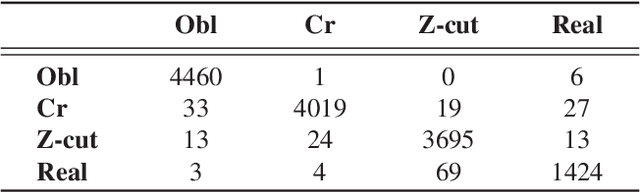

Abstract:Fingerprints are one of the most copious evidence in a crime scene and, for this reason, they are frequently used by law enforcement for identification of individuals. But fingerprints can be altered. "Altered fingerprints", refers to intentionally damage of the friction ridge pattern and they are often used by smart criminals in hope to evade law enforcement. We use a deep neural network approach training an Inception-v3 architecture. This paper proposes a method for detection of altered fingerprints, identification of types of alterations and recognition of gender, hand and fingers. We also produce activation maps that show which part of a fingerprint the neural network has focused on, in order to detect where alterations are positioned. The proposed approach achieves an accuracy of 98.21%, 98.46%, 92.52%, 97.53% and 92,18% for the classification of fakeness, alterations, gender, hand and fingers, respectively on the SO.CO.FING. dataset.

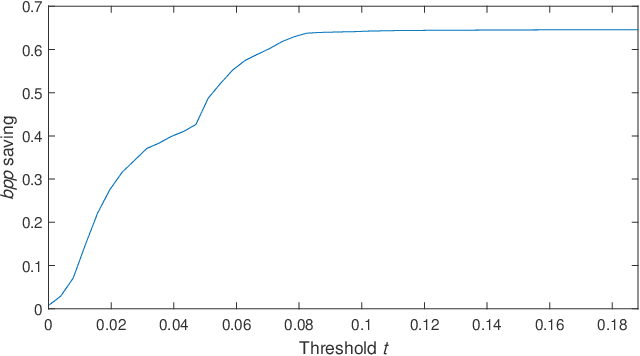

Animated GIF optimization by adaptive color local table management

Jul 09, 2020

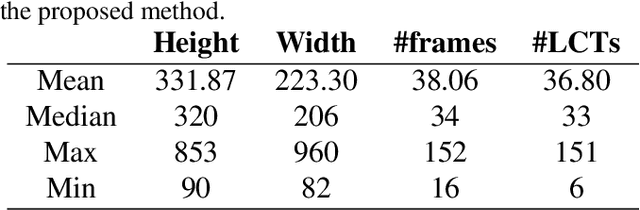

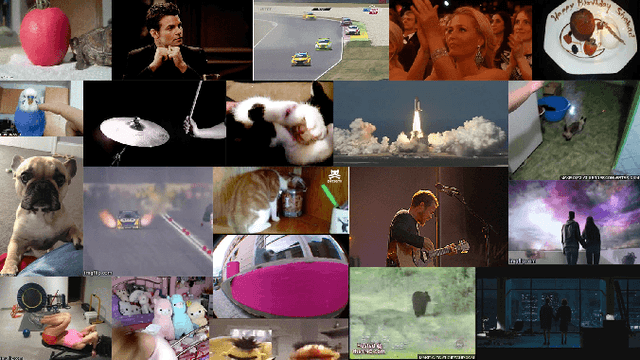

Abstract:After thirty years of the GIF file format, today is becoming more popular than ever: being a great way of communication for friends and communities on Instant Messengers and Social Networks. While being so popular, the original compression method to encode GIF images have not changed a bit. On the other hand popularity means that storage saving becomes an issue for hosting platforms. In this paper a parametric optimization technique for animated GIFs will be presented. The proposed technique is based on Local Color Table selection and color remapping in order to create optimized animated GIFs while preserving the original format. The technique achieves good results in terms of byte reduction with limited or no loss of perceived color quality. Tests carried out on 1000 GIF files demonstrate the effectiveness of the proposed optimization strategy.

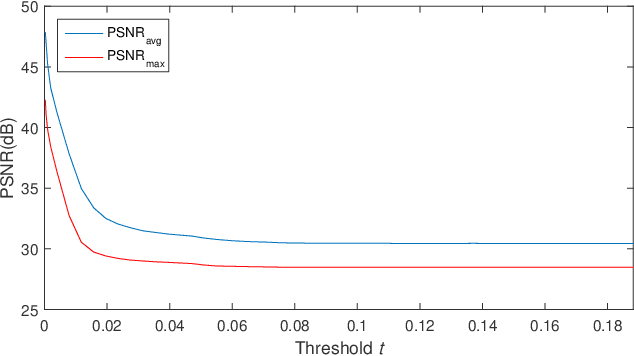

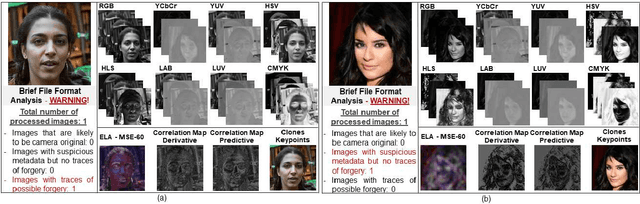

Preliminary Forensics Analysis of DeepFake Images

Jun 04, 2020

Abstract:One of the most terrifying phenomenon nowadays is the DeepFake: the possibility to automatically replace a person's face in images and videos by exploiting algorithms based on deep learning. This paper will present a brief overview of technologies able to produce DeepFake images of faces. A forensics analysis of those images with standard methods will be presented: not surprisingly state of the art techniques are not completely able to detect the fakeness. To solve this, a preliminary idea on how to fight DeepFake images of faces will be presented by analysing anomalies in the frequency domain.

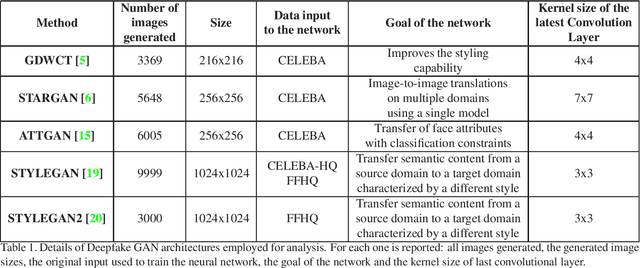

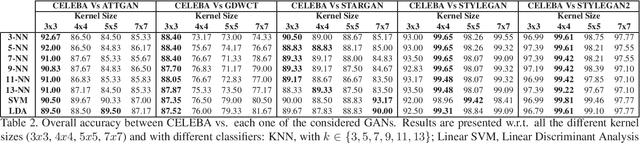

DeepFake Detection by Analyzing Convolutional Traces

Apr 22, 2020

Abstract:The Deepfake phenomenon has become very popular nowadays thanks to the possibility to create incredibly realistic images using deep learning tools, based mainly on ad-hoc Generative Adversarial Networks (GAN). In this work we focus on the analysis of Deepfakes of human faces with the objective of creating a new detection method able to detect a forensics trace hidden in images: a sort of fingerprint left in the image generation process. The proposed technique, by means of an Expectation Maximization (EM) algorithm, extracts a set of local features specifically addressed to model the underlying convolutional generative process. Ad-hoc validation has been employed through experimental tests with naive classifiers on five different architectures (GDWCT, STARGAN, ATTGAN, STYLEGAN, STYLEGAN2) against the CELEBA dataset as ground-truth for non-fakes. Results demonstrated the effectiveness of the technique in distinguishing the different architectures and the corresponding generation process.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge