Nicola Rossberg

SHAPCA: Consistent and Interpretable Explanations for Machine Learning Models on Spectroscopy Data

Mar 19, 2026

Abstract: In recent years, machine learning models have been increasingly applied to spectroscopic datasets for chemical and biomedical analysis. For their successful adoption, particularly in clinical and safety-critical settings, professionals and researchers must be able to understand and trust the reasoning behind model predictions. However, the inherently high dimensionality and strong collinearity of spectroscopy data pose a fundamental challenge to model explainability. These properties not only complicate model training but also undermine the stability and consistency of explanations, leading to fluctuations in feature importance across repeated training runs. Feature extraction techniques have been used to reduce the input dimensionality; however, the resulting features obscure the connection between the prediction and the original signal. This study proposes SHAPCA, an explainable machine learning pipeline that combines Principal Component Analysis (for dimensionality reduction) and SHapley Additive exPlanations (SHAP, for post hoc explanation) to provide explanations in the original input space, which a practitioner can interpret and link back to the biological components. The proposed framework enables analysis from both global and local perspectives, revealing the spectral bands that drive overall model behaviour as well as the instance-specific features that influence individual predictions. Numerical analysis demonstrated the interpretability of the results and greater consistency across different runs.
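The general idea of explaining a model trained on principal components in terms of the original spectral channels can be illustrated with a minimal sketch. This is not the SHAPCA implementation, which the abstract does not detail. It assumes synthetic data, a plain linear regressor on the PCA scores (for which Shapley values have a closed form when features are treated as independent), and back-projection through the PCA loadings; all variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for spectra: 200 samples, 50 strongly collinear channels.
n_samples, n_channels, n_components = 200, 50, 5
latent = rng.normal(size=(n_samples, 3))
mixing = rng.normal(size=(3, n_channels))
X = latent @ mixing + 0.01 * rng.normal(size=(n_samples, n_channels))
y = latent[:, 0] - 2.0 * latent[:, 1] + 0.05 * rng.normal(size=n_samples)

# PCA via SVD of the mean-centred data; columns of W are the loadings.
mu = X.mean(axis=0)
Xc = X - mu
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
W = Vt[:n_components].T            # shape (n_channels, n_components)
Z = Xc @ W                         # PCA scores, shape (n_samples, n_components)

# Assumed model: ordinary least squares on the scores, with an intercept.
A = np.column_stack([Z, np.ones(n_samples)])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
w, b = coef[:-1], coef[-1]

def predict(X_new):
    """Predict from raw spectra by projecting onto the components first."""
    return (X_new - mu) @ W @ w + b

# Closed-form Shapley values of a linear model in component space:
# phi_k = w_k * (z_k - mean(z_k)).
phi_pc = (Z - Z.mean(axis=0)) * w

# Back-projection: z_k - mean(z_k) = sum_i (x_i - mu_i) * W[i, k], so each
# component's attribution splits across channels, giving
# phi_i = (x_i - mu_i) * (W @ w)_i in the original input space.
phi_input = Xc * (W @ w)           # local attributions, one row per spectrum

# Global view: mean absolute attribution per spectral channel.
global_importance = np.abs(phi_input).mean(axis=0)
```

In this linear setting the channel-level attributions for each spectrum sum to the same total as the component-level ones, namely the prediction minus the mean prediction. A nonlinear model would need a general SHAP estimator in place of the closed form.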

Machine Learning Applications to Diffuse Reflectance Spectroscopy in Optical Diagnosis; A Systematic Review

Mar 03, 2025

Abstract: Diffuse Reflectance Spectroscopy has demonstrated a strong aptitude for identifying and differentiating biological tissues. However, the broadband and smooth nature of these signals requires algorithmic processing, as they are often difficult for the human eye to distinguish. The implementation of machine learning models for this task has demonstrated high diagnostic accuracy and led to a wide range of proposed methodologies for applications in various illnesses and conditions. In this systematic review, we summarise the state of the art of these applications, highlight current gaps in research and identify future directions. This review was conducted in accordance with the PRISMA guidelines. In total, 77 studies were retrieved and analysed in depth. It is concluded that diffuse reflectance spectroscopy and machine learning have strong potential for tissue differentiation in clinical applications, but more rigorous sample stratification in tandem with in-vivo validation and explainable algorithm development is required going forward.

Who is GPT-3? An Exploration of Personality, Values and Demographics

Sep 28, 2022

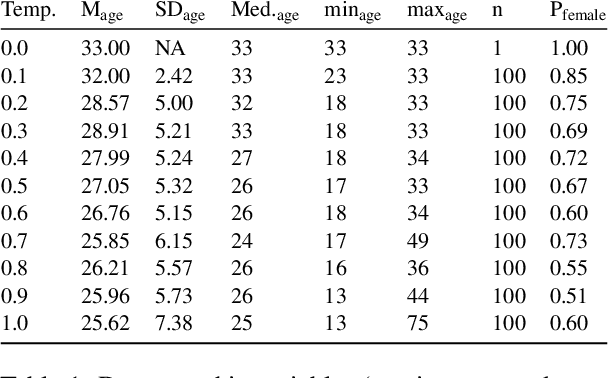

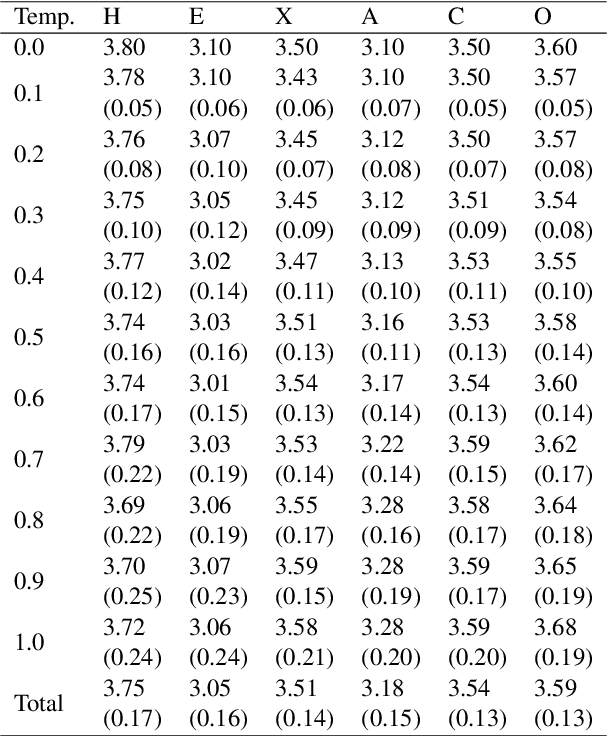

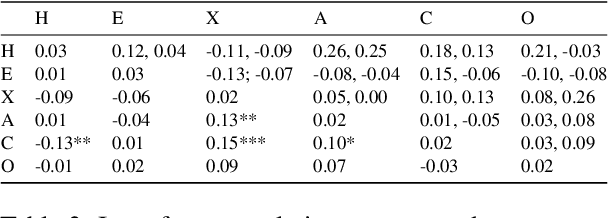

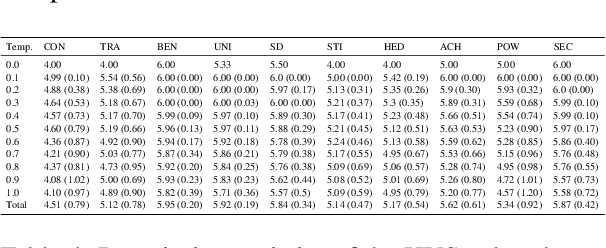

Abstract: Language models such as GPT-3 have caused a furore in the research community. Some studies found that GPT-3 has some creative abilities and makes mistakes that are on par with human behaviour. This paper answers a related question: who is GPT-3? We administered two validated measurement tools to GPT-3 to assess its personality, the values it holds and its self-reported demographics. Our results show that GPT-3 scores similarly to human samples in terms of personality and, when provided with a model response memory, in terms of the values it holds. We provide the first evidence of psychological assessment of the GPT-3 model and thereby add to our understanding of it. We close with suggestions for future research that moves social science closer to language models and vice versa.
