Nico S. Gorbach

Scalable Variational Inference for Dynamical Systems

Apr 10, 2018

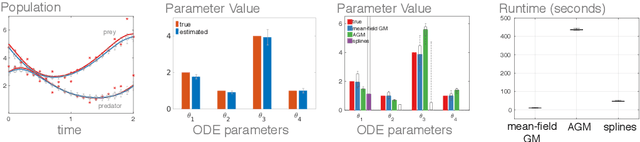

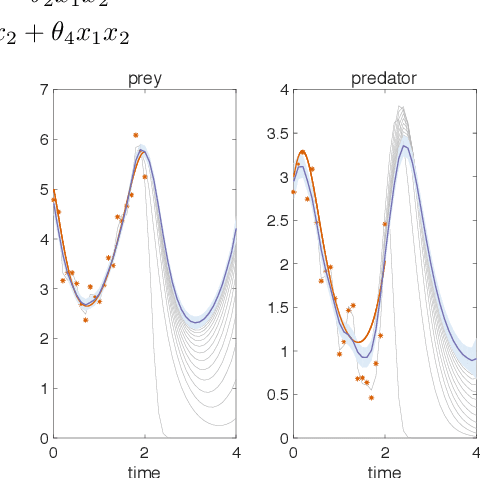

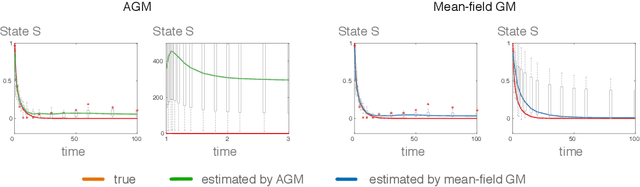

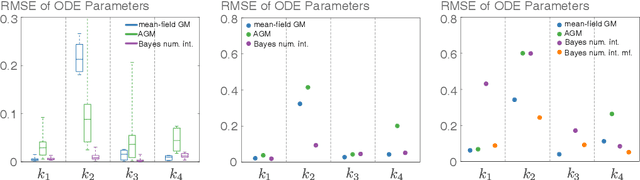

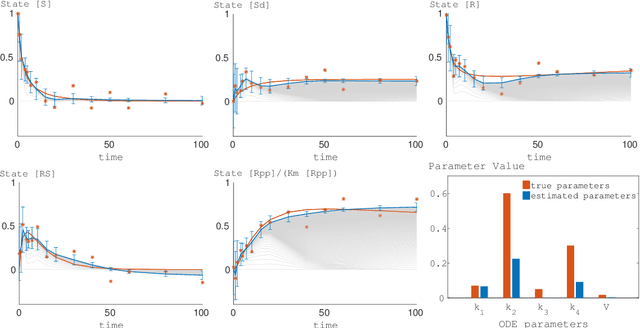

Abstract:Gradient matching is a promising tool for learning parameters and state dynamics of ordinary differential equations. It is a grid free inference approach, which, for fully observable systems is at times competitive with numerical integration. However, for many real-world applications, only sparse observations are available or even unobserved variables are included in the model description. In these cases most gradient matching methods are difficult to apply or simply do not provide satisfactory results. That is why, despite the high computational cost, numerical integration is still the gold standard in many applications. Using an existing gradient matching approach, we propose a scalable variational inference framework which can infer states and parameters simultaneously, offers computational speedups, improved accuracy and works well even under model misspecifications in a partially observable system.

Mean-Field Variational Inference for Gradient Matching with Gaussian Processes

Oct 21, 2016

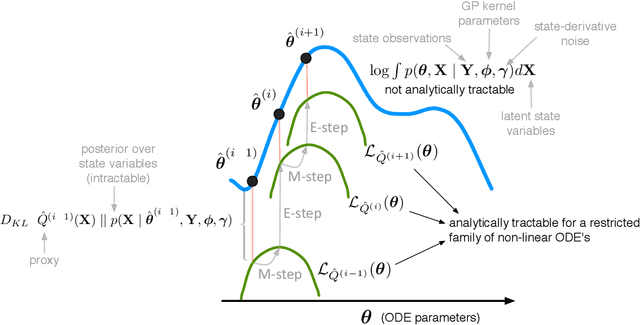

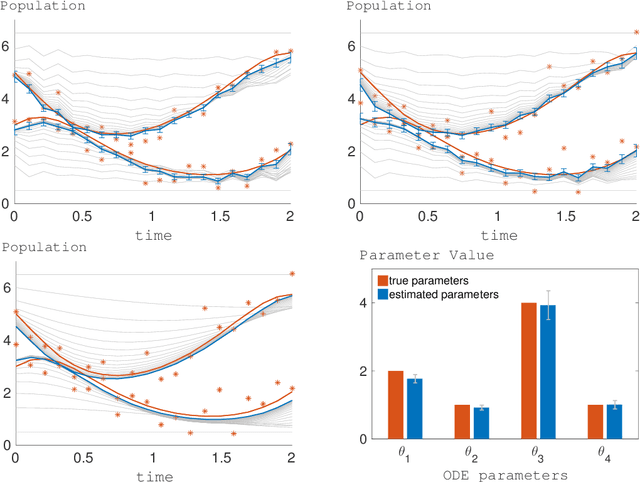

Abstract:Gradient matching with Gaussian processes is a promising tool for learning parameters of ordinary differential equations (ODE's). The essence of gradient matching is to model the prior over state variables as a Gaussian process which implies that the joint distribution given the ODE's and GP kernels is also Gaussian distributed. The state-derivatives are integrated out analytically since they are modelled as latent variables. However, the state variables themselves are also latent variables because they are contaminated by noise. Previous work sampled the state variables since integrating them out is \textit{not} analytically tractable. In this paper we use mean-field approximation to establish tight variational lower bounds that decouple state variables and are therefore, in contrast to the integral over state variables, analytically tractable and even concave for a restricted family of ODE's, including nonlinear and periodic ODE's. Such variational lower bounds facilitate "hill climbing" to determine the maximum a posteriori estimate of ODE parameters. An additional advantage of our approach over sampling methods is the determination of a proxy to the intractable posterior distribution over state variables given observations and the ODE's.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge