Navid Alemi Koohbanani

Context-Aware Learning using Transferable Features for Classification of Breast Cancer Histology Images

Mar 06, 2018

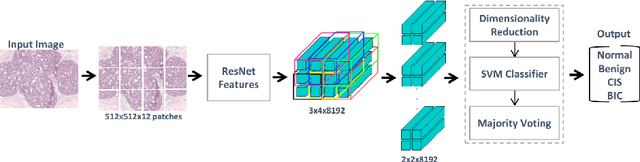

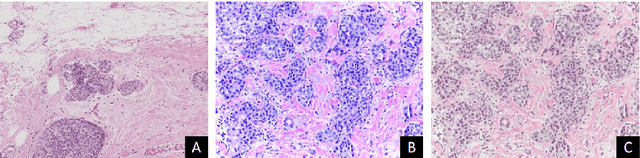

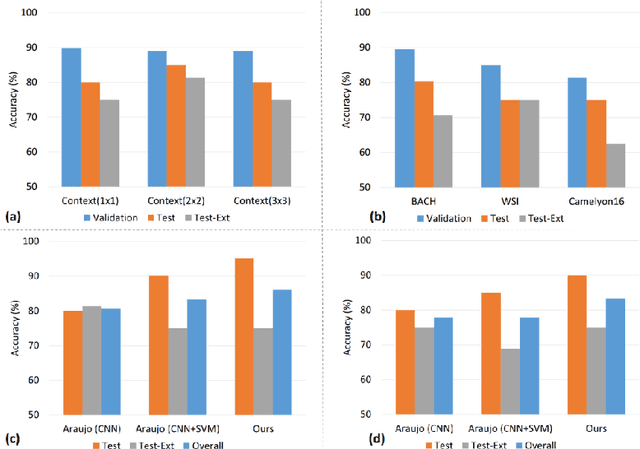

Abstract:Convolutional neural networks (CNNs) have been recently used for a variety of histology image analysis. However, availability of a large dataset is a major prerequisite for training a CNN which limits its use by the computational pathology community. In previous studies, CNNs have demonstrated their potential in terms of feature generalizability and transferability accompanied with better performance. Considering these traits of CNN, we propose a simple yet effective method which leverages the strengths of CNN combined with the advantages of including contextual information, particularly designed for a small dataset. Our method consists of two main steps: first it uses the activation features of CNN trained for a patch-based classification and then it trains a separate classifier using features of overlapping patches to perform image-based classification using the contextual information. The proposed framework outperformed the state-of-the-art method for breast cancer classification.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge