Najmeh Nazari

Is Mamba Reliable for Medical Imaging?

Feb 16, 2026

Abstract: State-space models like Mamba offer linear-time sequence processing and low memory use, making them attractive for medical imaging. However, their robustness under realistic software and hardware threat models remains underexplored. This paper evaluates Mamba on multiple MedMNIST classification benchmarks under input-level attacks, including white-box adversarial perturbations (FGSM/PGD), occlusion-based PatchDrop, and common acquisition corruptions (Gaussian noise and defocus blur), as well as hardware-inspired fault attacks emulated in software via targeted and random bit-flip injections into weights and activations. We profile vulnerabilities and quantify their impact on accuracy; the results indicate that defenses are needed for deployment.
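The software-emulated bit-flip faults mentioned in the abstract can be illustrated with a small sketch. It assumes weights stored as IEEE 754 float32 values and picks fault locations uniformly at random; the names `flip_bit` and `inject_random_bit_flips` are hypothetical and are not taken from the paper, whose actual injection procedure is not described here.

```python
import random
import struct


def flip_bit(value: float, bit: int) -> float:
    """Flip one bit of a float32 value (bit 0 = mantissa LSB, bit 31 = sign)."""
    if not 0 <= bit <= 31:
        raise ValueError("bit must be in [0, 31]")
    # Reinterpret the float32 bit pattern as an unsigned 32-bit integer.
    (as_int,) = struct.unpack("<I", struct.pack("<f", value))
    # XOR toggles the chosen bit; reinterpret the result as float32 again.
    (flipped,) = struct.unpack("<f", struct.pack("<I", as_int ^ (1 << bit)))
    return flipped


def inject_random_bit_flips(weights, n_flips, seed=0):
    """Return a copy of `weights` with `n_flips` random single-bit faults.

    Assumption: faults are independent and uniform over weights and bit
    positions. A targeted attack would choose (index, bit) deliberately.
    """
    rng = random.Random(seed)
    faulty = list(weights)
    for _ in range(n_flips):
        idx = rng.randrange(len(faulty))
        bit = rng.randrange(32)
        faulty[idx] = flip_bit(faulty[idx], bit)
    return faulty
```

Under these assumptions, flipping the sign bit of `1.0` gives `-1.0`, while flipping the most significant exponent bit turns `1.0` into infinity, which suggests why a single exponent fault can do far more damage than a mantissa fault.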

Multi-level Binarized LSTM in EEG Classification for Wearable Devices

Apr 19, 2020

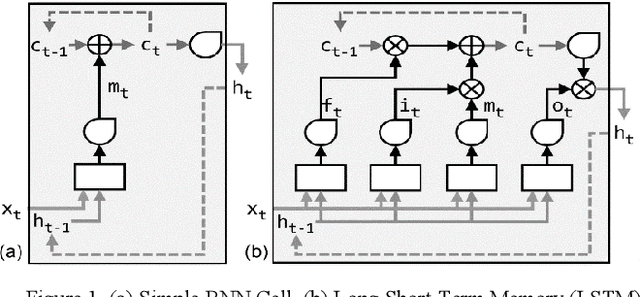

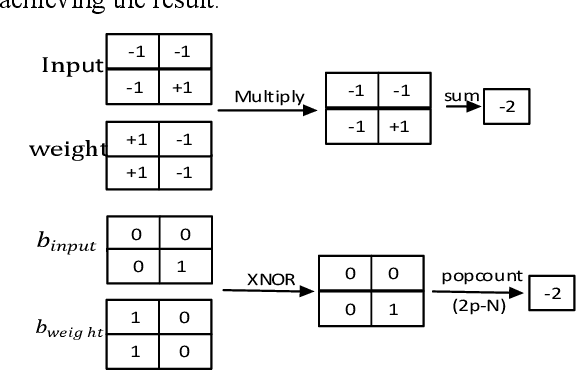

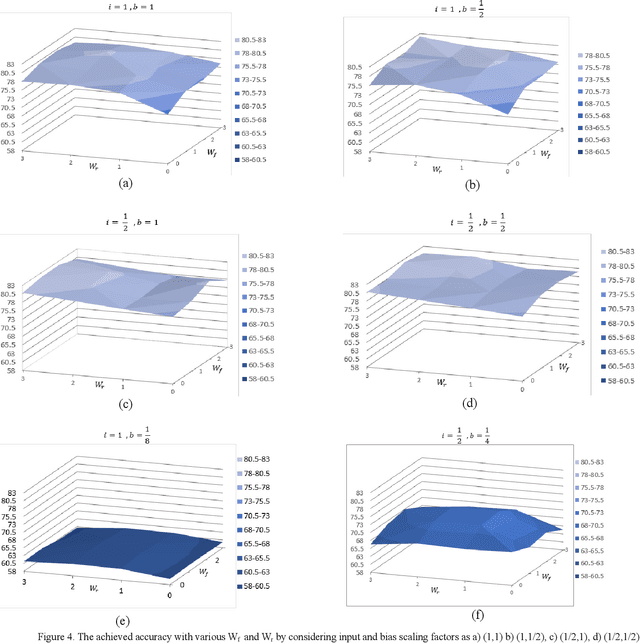

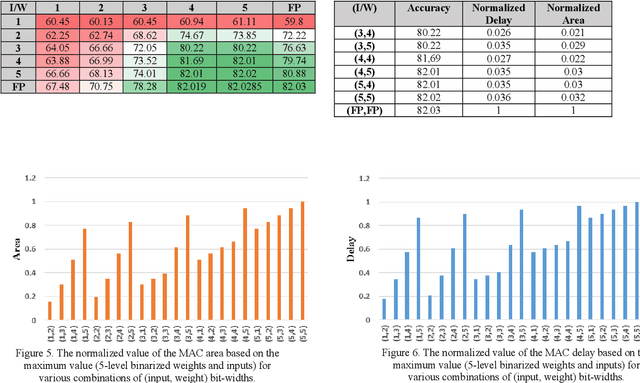

Abstract: Long Short-Term Memory (LSTM) is widely used in sequential applications. Complex LSTMs can hardly be deployed on wearable and resource-limited devices because of their heavy computation and memory requirements. Binary LSTMs were introduced to cope with this problem; however, they lead to significant accuracy loss in some applications, such as EEG classification, which is essential to deploy on wearable devices. In this paper, we propose an efficient multi-level binarized LSTM that significantly reduces computation while keeping accuracy very close to that of a full-precision LSTM. By deploying 5-level binarized weights and inputs, our method reduces the area and delay of the MAC operation by about 31× and 27×, respectively, in 65 nm technology, with less than 0.01% accuracy loss. In contrast to many compute-intensive deep-learning approaches, the proposed algorithm is lightweight and therefore brings efficient, accurate LSTM-based EEG classification to real-time wearable devices.
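To make the idea of multi-level binarization concrete, here is a minimal sketch of one common scheme, greedy residual binarization, in which a real-valued vector is approximated by a sum of scaled sign vectors. This is an assumption for illustration only: the abstract does not specify how its 5 levels are computed, and the function names `multilevel_binarize` and `reconstruct` are hypothetical.

```python
def multilevel_binarize(values, levels):
    """Approximate `values` as sum_k scales[k] * signs[k], signs in {-1, +1}.

    Greedy residual scheme (an assumption, not the paper's stated method):
    each level binarizes whatever error the previous levels left behind.
    """
    if not values:
        raise ValueError("values must be non-empty")
    residual = list(values)
    scales, signs = [], []
    for _ in range(levels):
        # The mean absolute residual is the least-squares optimal scale
        # for a sign vector that matches the residual's signs.
        alpha = sum(abs(r) for r in residual) / len(residual)
        b = [1 if r >= 0 else -1 for r in residual]
        scales.append(alpha)
        signs.append(b)
        residual = [r - alpha * s for r, s in zip(residual, b)]
    return scales, signs


def reconstruct(scales, signs):
    """Rebuild the approximation from per-level scales and sign vectors."""
    n = len(signs[0])
    return [sum(a * b[i] for a, b in zip(scales, signs)) for i in range(n)]


def squared_error(values, approx):
    """Sum of squared differences between the original and the approximation."""
    return sum((v - a) ** 2 for v, a in zip(values, approx))
```

With this scheme each extra level cannot increase the squared approximation error, so comparing `squared_error` for `levels=1` and `levels=5` on the same vector shows the accuracy gained by adding levels, while each level still needs only sign flips and one scale factor per multiply-accumulate.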
