Nadir Bengana

Improving land cover segmentation across satellites using domain adaptation

Nov 25, 2019

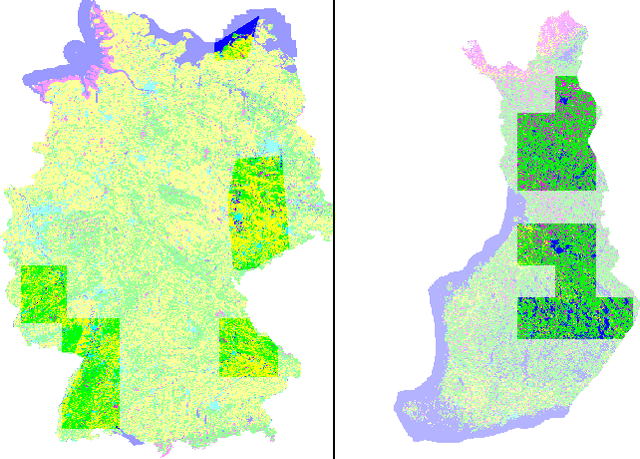

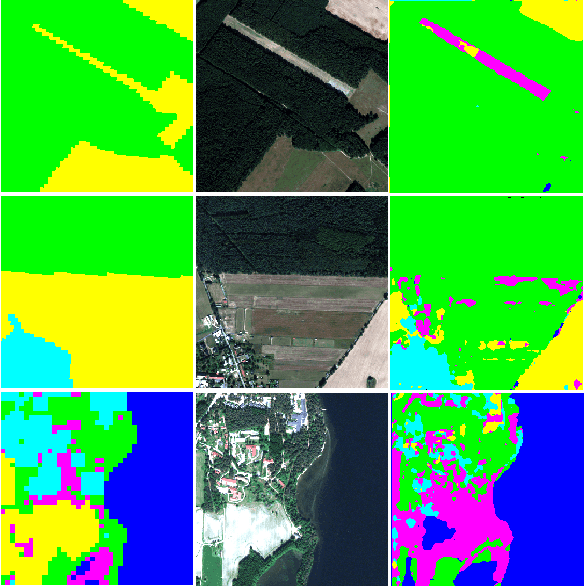

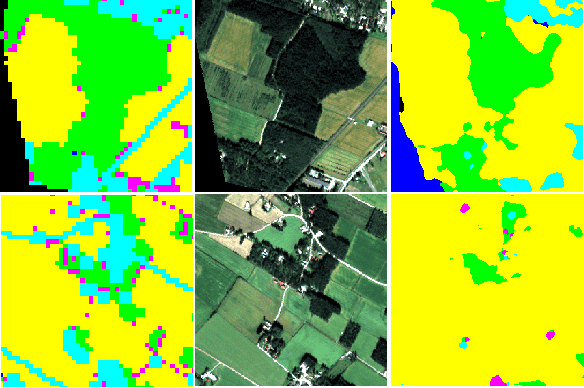

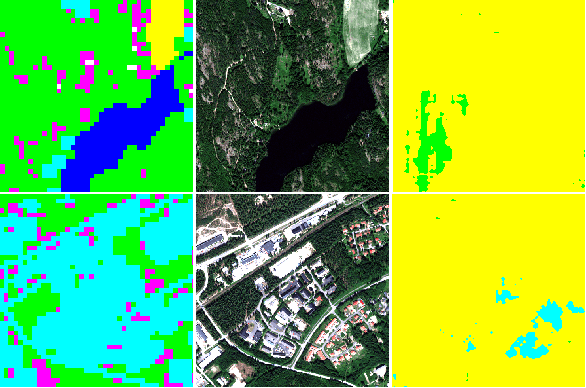

Abstract: Image segmentation for Land Use and Land Cover (LULC) mapping is a valuable asset that saves considerable time and effort compared with manual annotation. However, labeled datasets large enough to train a model that covers a variety of locations on the planet do not exist. Domain adaptation, which has proved quite useful in segmenting street-view images, can be used to address the scarcity of labeled land cover datasets. In this paper, we build a few labeled datasets based on multispectral imagery from the Sentinel-2, Worldview-2 and Pleiades-1 satellites and test domain adaptation between those datasets. Experiments show that domain adaptation makes it possible to semantically segment images from different areas of the planet with a limited amount of labeled data.
