Nadia Magnenat-Thalmann

SHANDS: A Multi-View Dataset and Benchmark for Surgical Hand-Gesture and Error Recognition Toward Medical Training

Mar 27, 2026

Abstract: In surgical training for medical students, proficiency development relies on expert-led skill assessment, which is costly, time-limited, and difficult to scale, and whose expertise remains confined to institutions with available specialists. Automated AI-based assessment offers a viable alternative, but progress is constrained by the lack of datasets containing realistic trainee errors and the multi-view variability needed to train robust computer vision models. To address this gap, we present Surgical-Hands (SHands), a large-scale multi-view video dataset for surgical hand-gesture and error recognition for medical training. SHands records linear incision and suturing with five RGB cameras placed at complementary viewpoints; 52 participants (20 experts and 32 trainees) each completed three standardized trials per procedure. The videos are annotated at the frame level with 15 gesture primitives and include a validated taxonomy of 8 trainee error types, enabling both gesture recognition and error detection. We further define standardized evaluation protocols for single-view, multi-view, and cross-view generalization, and benchmark state-of-the-art deep learning models on the dataset. SHands is publicly released to support the development of robust and scalable AI systems for surgical training grounded in clinically curated domain knowledge.
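
The counts in the abstract imply simple bookkeeping for the cross-view protocol. The sketch below is a hypothetical illustration, not the released SHands tooling. It assumes one video per (participant, procedure, trial, camera) with no missing recordings (52 × 2 × 3 × 5 = 1,560 videos) and builds a leave-one-view-out split, which is one plausible reading of "cross-view generalization". The function names, procedure labels, and camera indices are assumptions.

```python
from itertools import product

# Counts from the SHands abstract. ASSUMPTION: every participant completed every
# trial and every camera captured every trial (no missing videos).
NUM_PARTICIPANTS = 52                 # 20 experts + 32 trainees
PROCEDURES = ("incision", "suturing")  # hypothetical labels for the two procedures
TRIALS_PER_PROCEDURE = 3
CAMERAS = tuple(range(5))             # five RGB viewpoints, hypothetical IDs 0..4


def all_clips():
    """Enumerate (participant, procedure, trial, camera) tuples, one per video."""
    return list(product(range(NUM_PARTICIPANTS), PROCEDURES,
                        range(TRIALS_PER_PROCEDURE), CAMERAS))


def cross_view_split(held_out_camera):
    """Hypothetical leave-one-view-out split: train on four views, test on the fifth."""
    clips = all_clips()
    train = [c for c in clips if c[3] != held_out_camera]
    test = [c for c in clips if c[3] == held_out_camera]
    return train, test


if __name__ == "__main__":
    print(len(all_clips()))  # 52 * 2 * 3 * 5 = 1560
    for cam in CAMERAS:
        train, test = cross_view_split(cam)
        print(cam, len(train), len(test))  # 1248 training and 312 test videos per fold
```

Holding out a whole camera, rather than random clips, ensures that the test viewpoint is never seen during training. This is the property a cross-view protocol is meant to probe.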

Nadine: An LLM-driven Intelligent Social Robot with Affective Capabilities and Human-like Memory

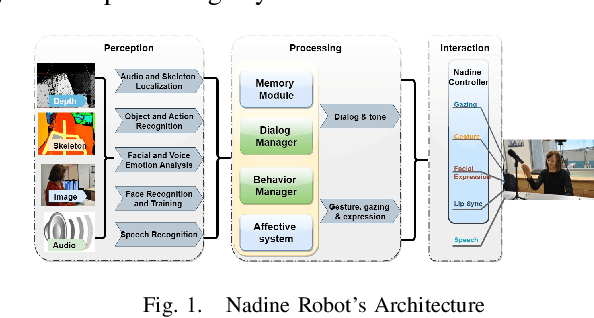

May 30, 2024

Abstract: In this work, we describe our approach to developing an intelligent and robust social robotic system for the Nadine social robot platform. We achieve this by integrating Large Language Models (LLMs) and leveraging their reasoning and instruction-following capabilities to realize advanced, human-like affective and cognitive abilities. This approach is novel compared to current state-of-the-art LLM-based agents, which do not implement human-like long-term memory or sophisticated emotional appraisal. The naturalness of a social robot, which consists of multiple modules, depends heavily on the performance and capabilities of each component and on how seamlessly the components are integrated. We built a social robot system that generates appropriate behaviours through multimodal input processing, retrieves episodic memories associated with the recognised user, and simulates the emotional states induced in the robot by its interaction with the human partner. In particular, we introduce SoR-ReAct, an LLM-agent framework for social robots that serves as the core component of the interaction module in our system. This design aims to advance social robotics and improve the quality of human-robot interaction.

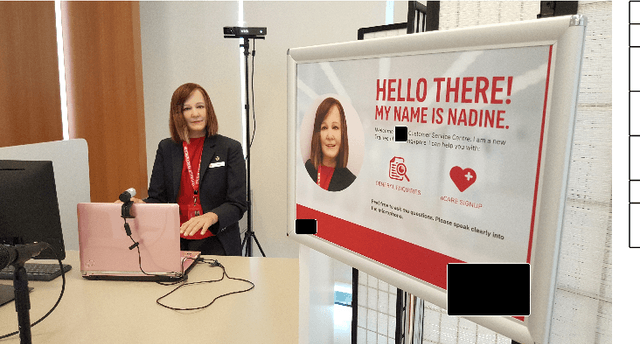

Can a Humanoid Robot be part of the Organizational Workforce? A User Study Leveraging Sentiment Analysis

May 30, 2019

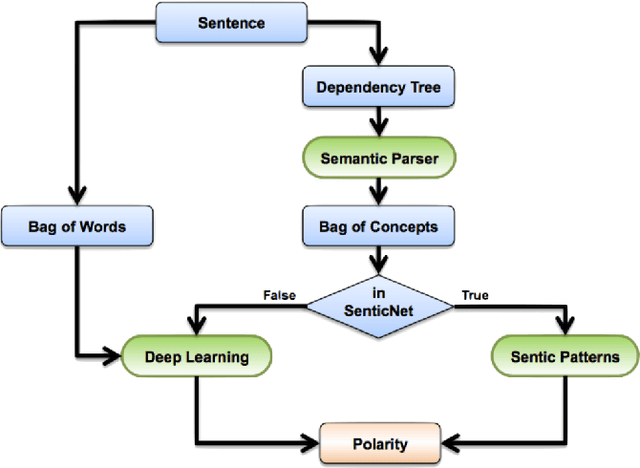

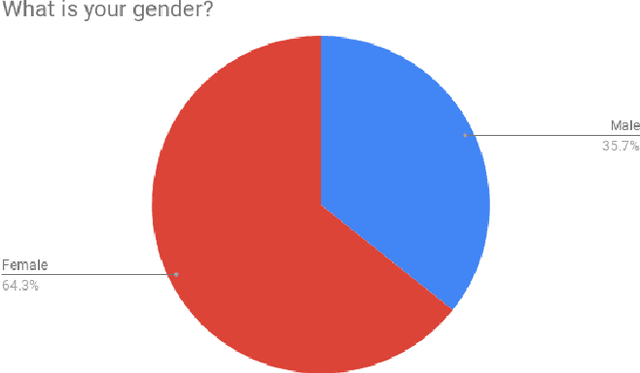

Abstract: Hiring robots for the workplace is challenging because robots have to cater to customer demands, follow organizational protocols, and behave with social etiquette. In this study, we propose to deploy a humanoid social robot, Nadine, as a customer service agent in an open social work environment. The objective of this study is to analyze the effects of humanoid robots on customers in a work environment and to assess whether the robot can handle social scenarios. We propose to evaluate these objectives in two modes: a survey questionnaire and customer feedback. We also propose a novel approach to analyzing customer feedback data (text) using sentic computing methods. Specifically, we employ aspect extraction and sentiment analysis to analyze the data. With our framework, we detect the sentiment associated with the aspects that mainly concerned customers during their interaction. This allows us to understand customers' expectations and the current limitations of robots as employees.