Moi Hoon Yap

Fully Convolutional Networks for Diabetic Foot Ulcer Segmentation

Aug 06, 2017

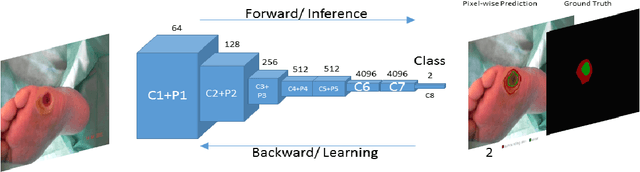

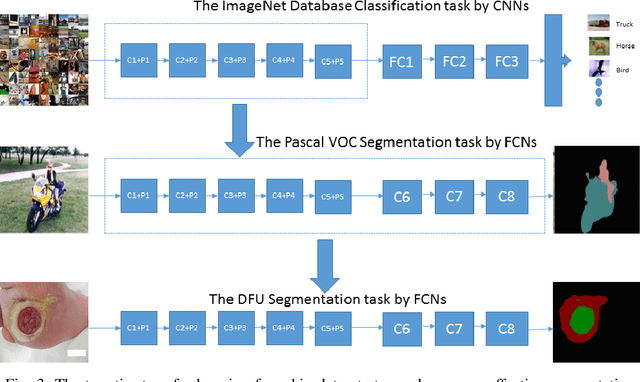

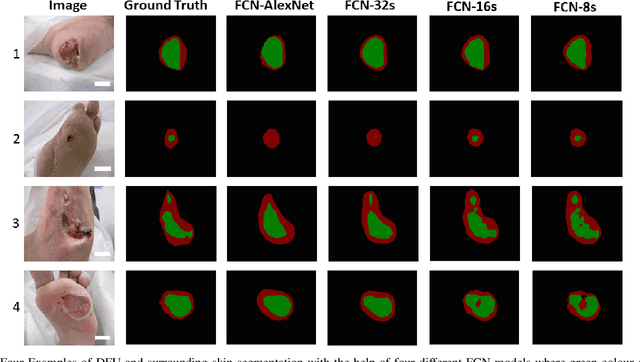

Abstract: Diabetic Foot Ulcer (DFU) is a major complication of diabetes which, if not managed properly, can lead to amputation. DFU can appear anywhere on the foot and can vary in size, colour, and contrast depending on various pathologies. Current clinical approaches to DFU treatment rely on patient and clinician vigilance, which has significant limitations such as the high cost involved in the diagnosis, treatment and lengthy care of the DFU. We introduce a dataset of 705 foot images. We provide the ground truth of the ulcer region and the surrounding skin, which is an important indicator for clinicians to assess the progress of the ulcer. Then, we propose a two-tier transfer learning from bigger datasets to train Fully Convolutional Networks (FCNs) to automatically segment the ulcer and surrounding skin. Using 5-fold cross-validation, the proposed two-tier transfer learning FCN models achieve a Dice Similarity Coefficient of 0.794 ($\pm$0.104) for the ulcer region, 0.851 ($\pm$0.148) for the surrounding skin region, and 0.899 ($\pm$0.072) for the combination of both regions. This demonstrates the potential of FCNs in DFU segmentation, which can be further improved with a larger dataset.
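For readers unfamiliar with the metric reported above, the Dice Similarity Coefficient between a predicted mask $A$ and a ground-truth mask $B$ is $2|A \cap B| / (|A| + |B|)$. The snippet below is a minimal sketch of that standard definition on binary masks; the function name `dice_coefficient`, the toy masks, and the convention of returning 1.0 for two empty masks are illustrative assumptions, not details taken from the paper.

```python
import numpy as np


def dice_coefficient(pred, truth):
    """Dice Similarity Coefficient between two binary masks.

    Hypothetical helper for illustration; not the authors' evaluation code.
    """
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    total = pred.sum() + truth.sum()
    if total == 0:
        # Assumption: two empty masks are treated as a perfect match.
        return 1.0
    intersection = np.logical_and(pred, truth).sum()
    return 2.0 * intersection / total


# Toy 2x3 masks (made up for the example): 3 pixels each, 2 overlapping.
pred = np.array([[1, 1, 0],
                 [0, 1, 0]])
truth = np.array([[1, 0, 0],
                  [0, 1, 1]])
score = dice_coefficient(pred, truth)  # 2 * 2 / (3 + 3)
```

In a multi-class setting such as ulcer versus surrounding skin, the same function could be applied to one binary mask per class.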

A Comparative Study of the Clinical use of Motion Analysis from Kinect Skeleton Data

Jul 31, 2017

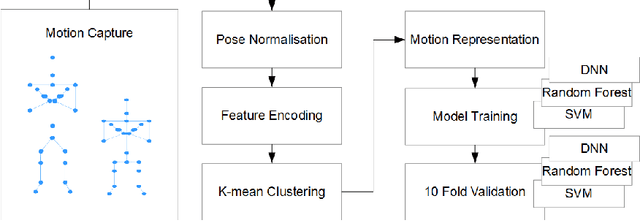

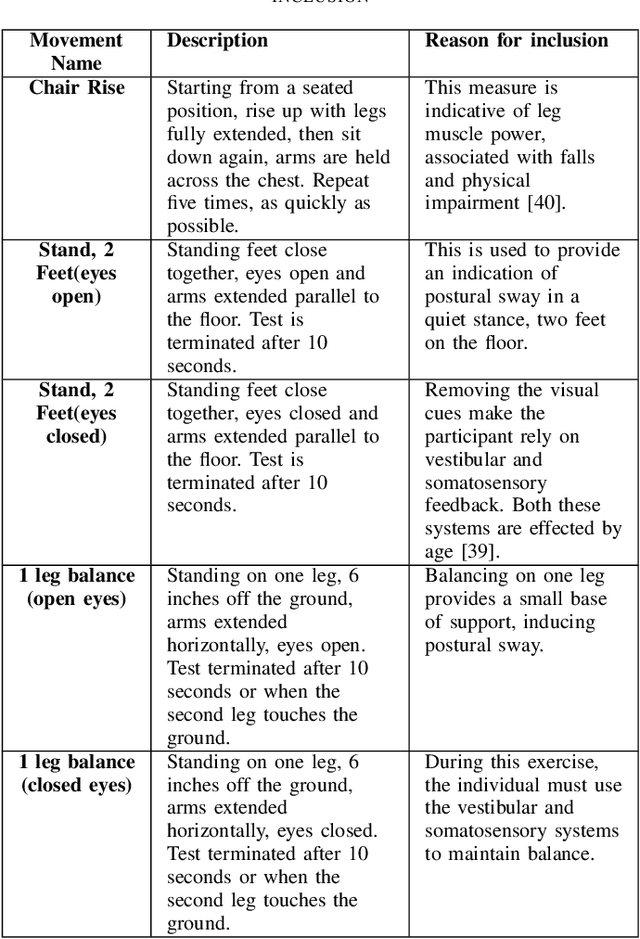

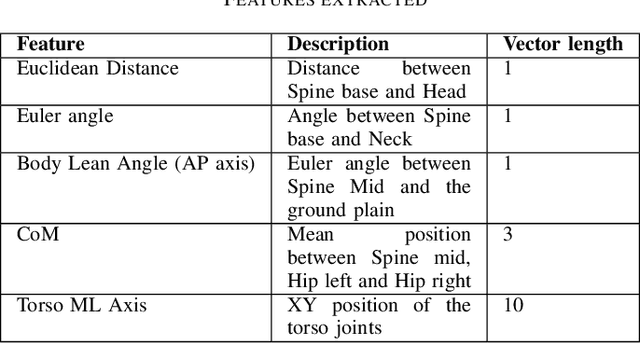

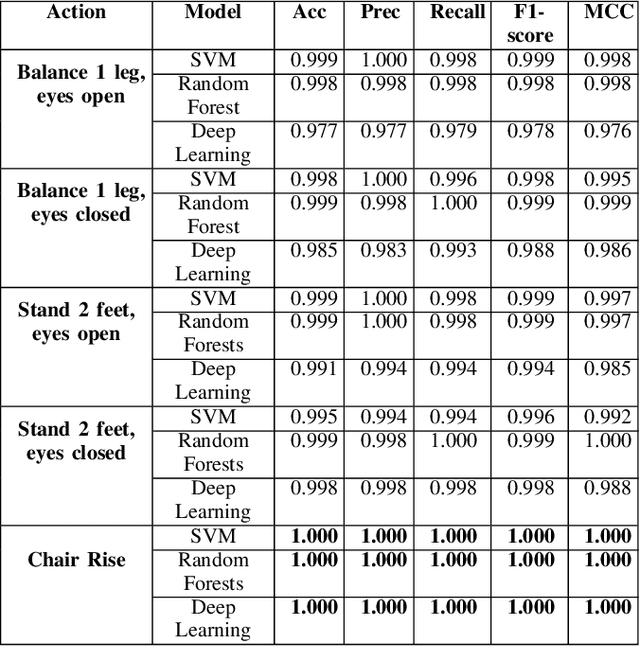

Abstract: The analysis of human motion as a clinical tool can bring many benefits, such as the early detection of disease and the monitoring of recovery, in turn helping people to lead independent lives. However, it is currently underused. Developments in depth cameras, such as Kinect, have opened up the use of motion analysis in settings such as GP surgeries, care homes and private homes. To provide an insight into the use of Kinect in the healthcare domain, we present a review of the current state of the art. We then propose a method that can represent human motions from time-series data of arbitrary length as a single vector. Finally, we demonstrate the utility of this method by extracting a set of clinically significant features and using them to detect the age-related changes in the motions of a set of 54 individuals with a high degree of certainty (F1-score between 0.9 and 1.0), indicating its potential application in the detection of a range of age-related motion impairments.

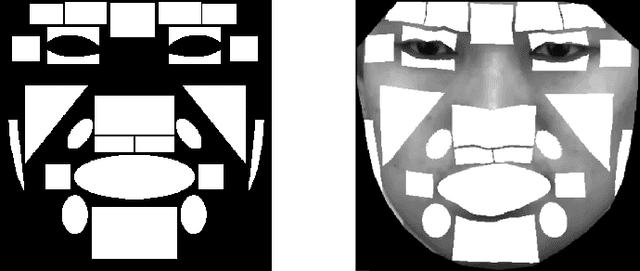

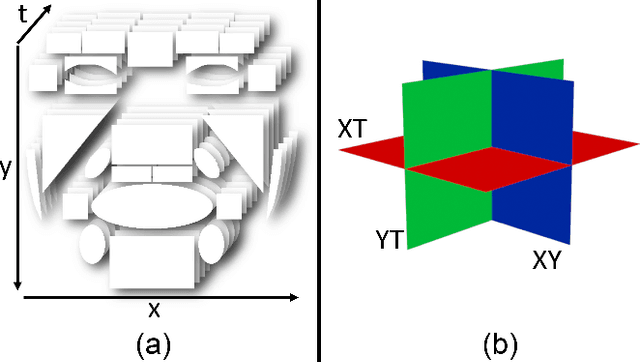

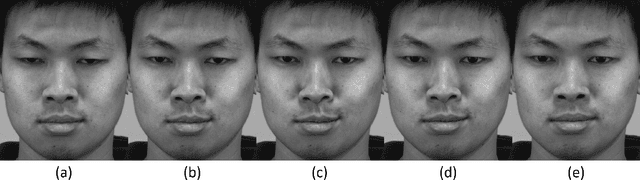

Objective Micro-Facial Movement Detection Using FACS-Based Regions and Baseline Evaluation

Dec 15, 2016

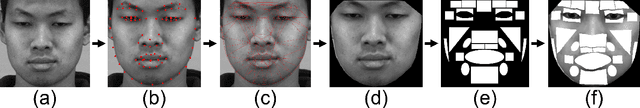

Abstract: Micro-facial expressions are regarded as an important human behavioural event that can highlight emotional deception. Spotting these movements is difficult for humans and machines; however, research into using computer vision to detect subtle facial expressions is growing in popularity. This paper proposes an individualised baseline micro-movement detection method using a 3D Histogram of Oriented Gradients (3D HOG) temporal difference method. We define a face template consisting of 26 regions based on the Facial Action Coding System (FACS). We extract the temporal features of each region using 3D HOG. Then, we use Chi-square distance to find subtle facial motion in the local regions. Finally, an automatic peak detector is used to detect micro-movements above the newly proposed adaptive baseline threshold. The performance is validated on two FACS-coded datasets: SAMM and CASME II. This objective method focuses on the movement of the 26 face regions. When compared with the ground truth, the best results were an AUC of 0.7512 on SAMM and 0.7261 on CASME II. The results show that, for micro-movement detection, 3D HOG outperformed the state-of-the-art feature representations Local Binary Patterns in Three Orthogonal Planes and Histograms of Oriented Optical Flow.
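To illustrate the Chi-square distance step mentioned above, the sketch below compares two feature histograms using one common form, $\chi^2(p, q) = \sum_i (p_i - q_i)^2 / (p_i + q_i)$. Which variant the paper uses (for example, with or without a factor of 1/2) is not stated in the abstract, so the exact formula, the function name `chi_square_distance`, the `eps` guard, and the toy histograms are all assumptions made for illustration.

```python
import numpy as np


def chi_square_distance(p, q, eps=1e-10):
    """Chi-square distance between two histograms (one common variant).

    Hypothetical helper; `eps` avoids division by zero in empty bins.
    """
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    return float(np.sum((p - q) ** 2 / (p + q + eps)))


# Made-up normalised histograms standing in for region features
# at two points in time.
hist_a = np.array([0.5, 0.3, 0.2])
hist_b = np.array([0.5, 0.3, 0.2])
hist_c = np.array([0.2, 0.3, 0.5])

same = chi_square_distance(hist_a, hist_b)     # identical histograms
changed = chi_square_distance(hist_a, hist_c)  # shifted mass between bins
```

A larger distance between the features of a region at different times would suggest more movement in that region; the thresholding and peak detection described in the abstract are not reproduced here.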
