Moi Hoon Yap

Automatic Lesion Boundary Segmentation in Dermoscopic Images with Ensemble Deep Learning Methods

Feb 02, 2019

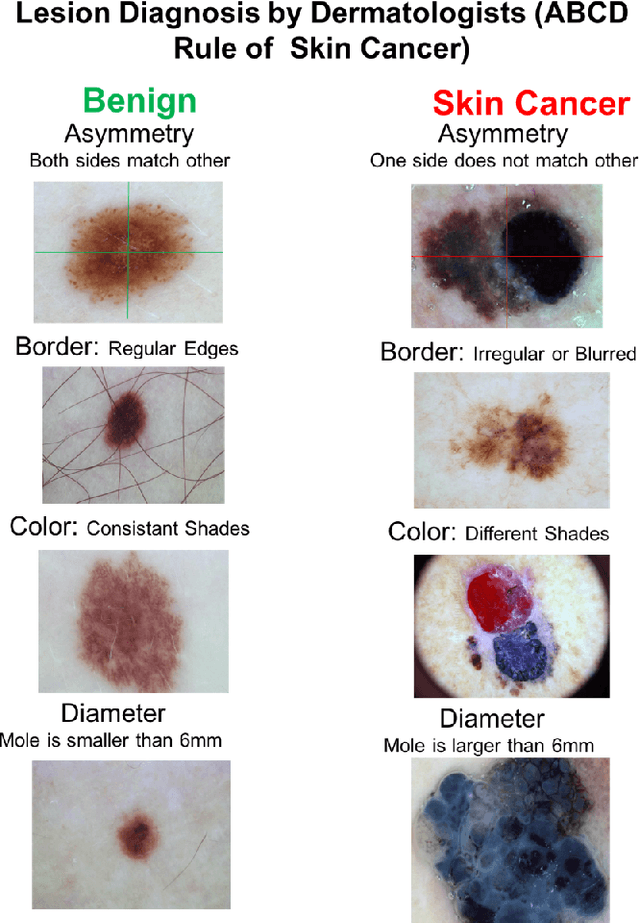

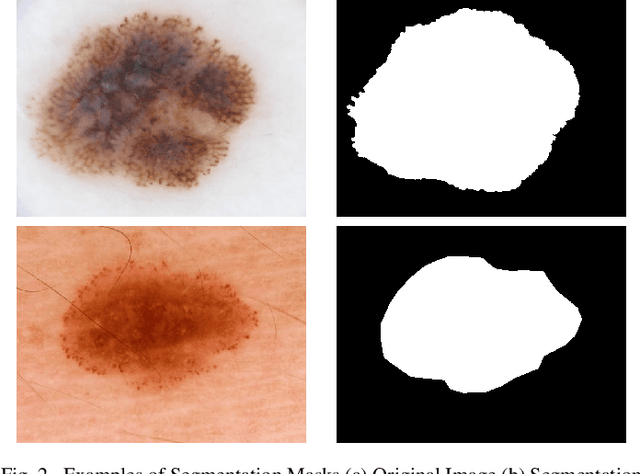

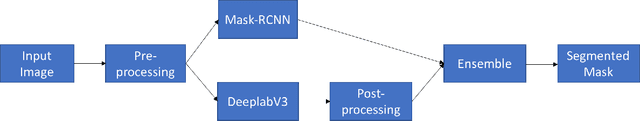

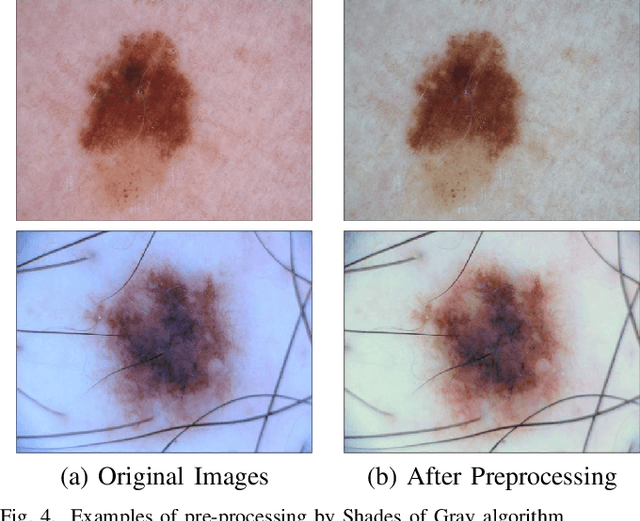

Abstract: Early detection of skin cancer, particularly melanoma, is crucial to enable advanced treatment. Due to the rapid growth of skin cancers, there is a growing need for computerized analysis of skin lesions. These processes include detection, classification, and segmentation. The state-of-the-art publicly available datasets for skin lesions are often accompanied by a very limited amount of segmentation ground truth labeling, as it is laborious and expensive. Lesion boundary segmentation is vital to locate the lesion accurately in dermoscopic images and for the diagnosis of different skin lesion types. In this work, we propose the use of fully automated deep learning ensemble methods for accurate lesion boundary segmentation in dermoscopic images. We trained the Mask-RCNN and DeeplabV3+ methods on the ISIC-2017 segmentation training dataset and evaluated the performance of various ensembles of both networks on the ISIC-2017 testing set and the PH2 dataset. Our results showed that the proposed ensemble method segmented the skin lesions with a Jaccard index of 79.58% for the ISBI 2017 test dataset. In comparison to FrCN, FCN, U-Net, and SegNet, the proposed ensemble method outperformed them by 2.48%, 7.42%, 17.95%, and 9.96% for the Jaccard index, respectively. Furthermore, the proposed ensemble method achieved a segmentation accuracy of 95.6% for some representative clinical benign cases, 90.78% for the melanoma cases, and 91.29% for the seborrheic keratosis cases in the ISBI 2017 test dataset, exhibiting better performance than those of FrCN, FCN, U-Net, and SegNet.
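The Jaccard index reported above is the ratio of the intersection to the union of the predicted and ground-truth lesion masks. The following is a minimal sketch of that metric, together with two simple ways of combining the binary masks of two networks. The abstract does not state how the ensembles are formed, so the `union` and `intersection` modes, and the names `jaccard_index` and `combine_masks`, are illustrative assumptions rather than the authors' method.

```python
from typing import List

Mask = List[List[int]]  # binary mask: 1 = lesion pixel, 0 = background


def jaccard_index(pred: Mask, truth: Mask) -> float:
    """Intersection over union of two binary masks of equal shape."""
    inter = 0
    union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            inter += 1 if (p and t) else 0
            union += 1 if (p or t) else 0
    # Two empty masks agree perfectly; this convention is an assumption.
    return inter / union if union else 1.0


def combine_masks(a: Mask, b: Mask, mode: str = "union") -> Mask:
    """Combine two binary masks pixel by pixel (hypothetical ensemble rules)."""
    if mode == "union":
        return [[int(bool(x) or bool(y)) for x, y in zip(ra, rb)]
                for ra, rb in zip(a, b)]
    if mode == "intersection":
        return [[int(bool(x) and bool(y)) for x, y in zip(ra, rb)]
                for ra, rb in zip(a, b)]
    raise ValueError(f"unknown mode: {mode}")


# Toy masks standing in for the outputs of two segmentation networks.
mask_a = [[1, 1, 0], [0, 0, 0]]
mask_b = [[0, 1, 1], [0, 0, 0]]
truth = [[1, 1, 1], [0, 0, 0]]

print(jaccard_index(mask_a, truth))
print(jaccard_index(combine_masks(mask_a, mask_b, "union"), truth))
print(jaccard_index(combine_masks(mask_a, mask_b, "intersection"), truth))
```

In this toy case the union recovers the full lesion while the intersection keeps only the pixels both masks agree on, which illustrates why different combination rules can trade sensitivity against specificity.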

Spotting Micro-Expressions on Long Videos Sequences

Dec 26, 2018

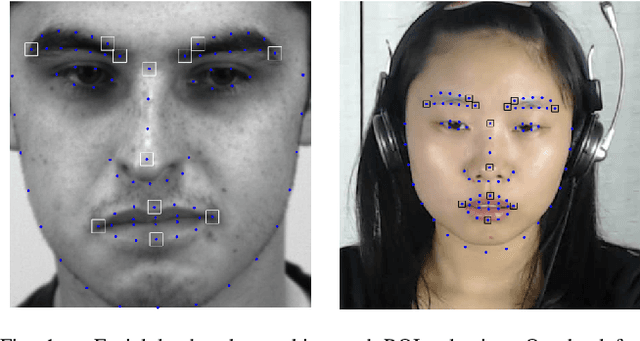

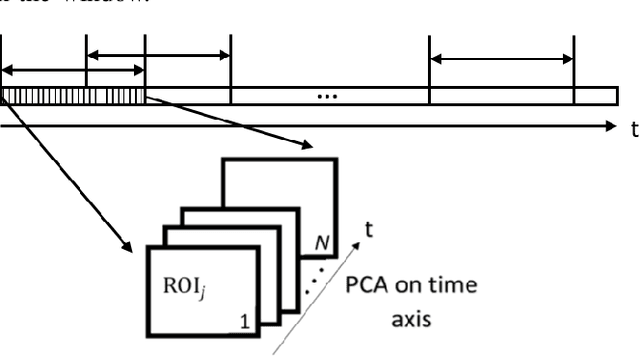

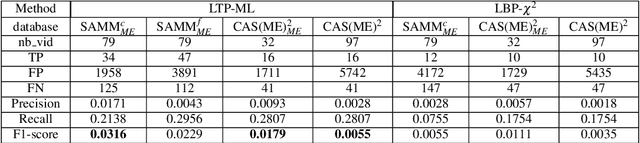

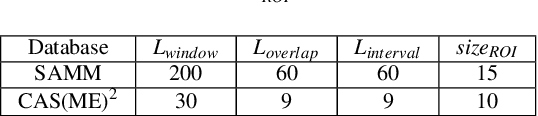

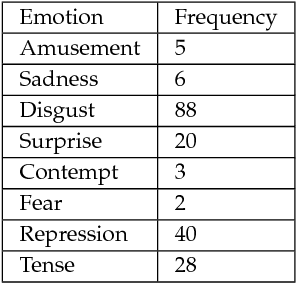

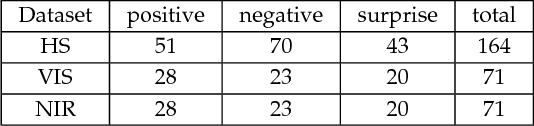

Abstract: This paper presents baseline results for the first Micro-Expression Spotting Challenge 2019 by evaluating local temporal patterns (LTP) on SAMM and CAS(ME)2. The proposed LTP patterns are extracted by applying PCA in a temporal window on several local facial regions. The micro-expression sequences are then spotted by a local classification of LTP and a global fusion. The performance is evaluated by Leave-One-Subject-Out cross-validation. Furthermore, we define the criteria for determining true positives in one video by overlap rate and use the F1-score as the metric for spotting performance over the whole database. The baseline F1-scores for SAMM and CAS(ME)2 are 0.0316 and 0.0179, respectively.
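The evaluation described above counts a spotted interval as a true positive when it overlaps a ground-truth interval sufficiently, and then summarises performance with the F1-score. The sketch below shows one way to compute this. The abstract does not give the exact overlap rule, so the interval intersection-over-union measure, the `threshold=0.5` default, and the function names are assumptions for illustration only.

```python
from typing import List, Tuple

Interval = Tuple[int, int]  # (onset_frame, offset_frame), both inclusive


def interval_iou(a: Interval, b: Interval) -> float:
    """Overlap rate of two frame intervals as intersection over union."""
    inter = max(0, min(a[1], b[1]) - max(a[0], b[0]) + 1)
    union = (a[1] - a[0] + 1) + (b[1] - b[0] + 1) - inter
    return inter / union


def spotting_f1(spotted: List[Interval],
                ground_truth: List[Interval],
                threshold: float = 0.5) -> float:
    """F1-score where a spotted interval is a true positive if it overlaps
    an unmatched ground-truth interval by at least `threshold` (assumed)."""
    matched = set()
    tp = 0
    for s in spotted:
        for i, g in enumerate(ground_truth):
            if i not in matched and interval_iou(s, g) >= threshold:
                matched.add(i)  # each ground-truth interval matches once
                tp += 1
                break
    fp = len(spotted) - tp
    fn = len(ground_truth) - tp
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Toy example: three spotted intervals, two annotated micro-expressions.
spotted = [(10, 20), (50, 60), (100, 110)]
annotated = [(12, 22), (200, 210)]
print(spotting_f1(spotted, annotated))
```

To score a whole database in this spirit, the true positives, false positives, and false negatives would be accumulated over all videos before computing a single F1-score.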

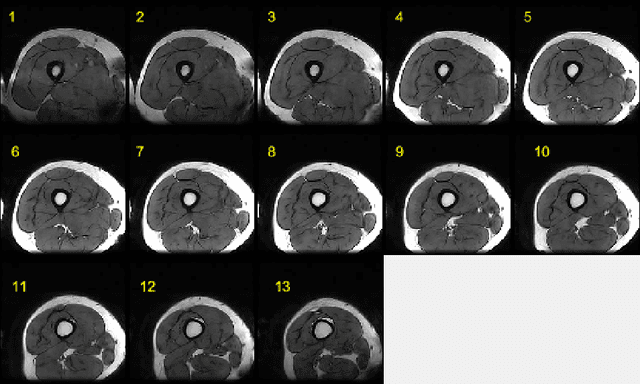

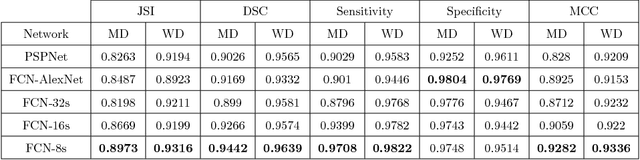

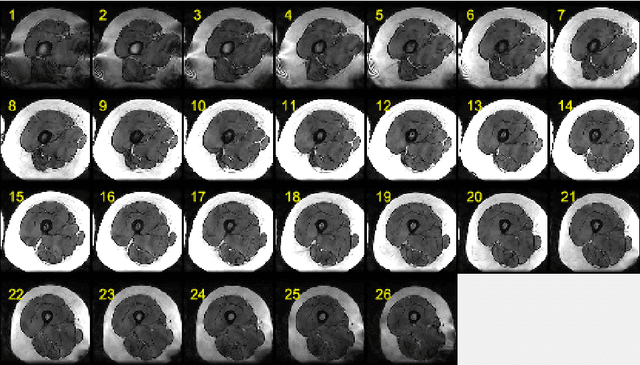

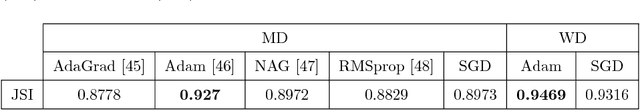

Semantic Segmentation of Human Thigh Quadriceps Muscle in Magnetic Resonance Images

Aug 09, 2018

Abstract: This paper presents an end-to-end solution for MRI thigh quadriceps segmentation. This is the first attempt to use deep learning methods for the MRI thigh segmentation task. We use state-of-the-art Fully Convolutional Networks with a transfer learning approach for the semantic segmentation of regions of interest in MRI thigh scans. To further improve the performance of the segmentation, we propose a post-processing technique using basic image processing methods. With our proposed method, we have established a new benchmark for MRI thigh quadriceps segmentation with a mean Jaccard Similarity Index of 0.9502 and a processing time of 0.117 seconds per image.

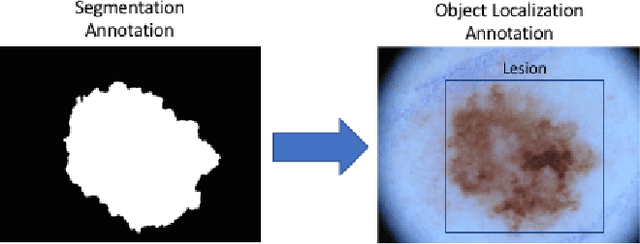

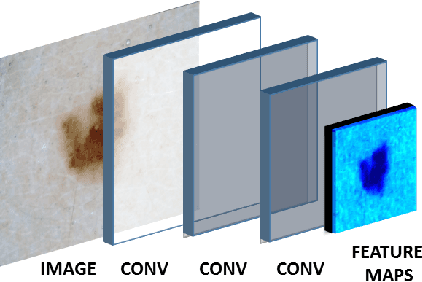

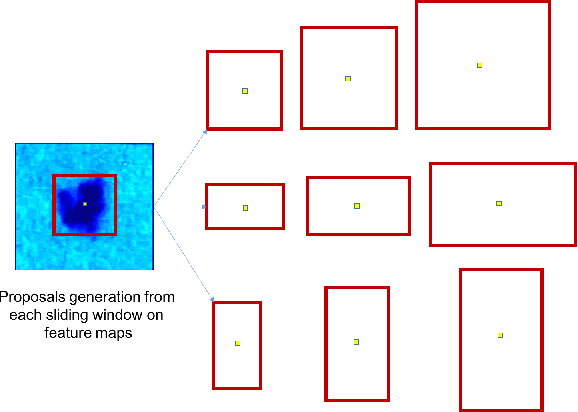

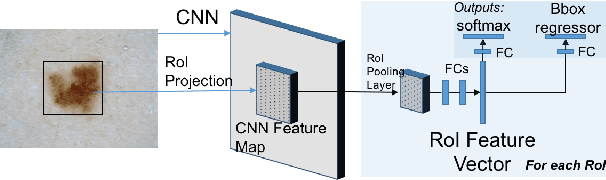

Region of Interest Detection in Dermoscopic Images for Natural Data-augmentation

Jul 27, 2018

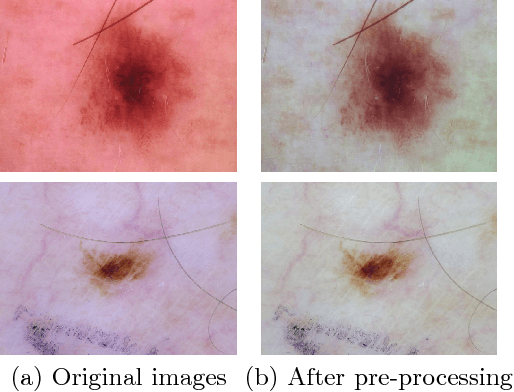

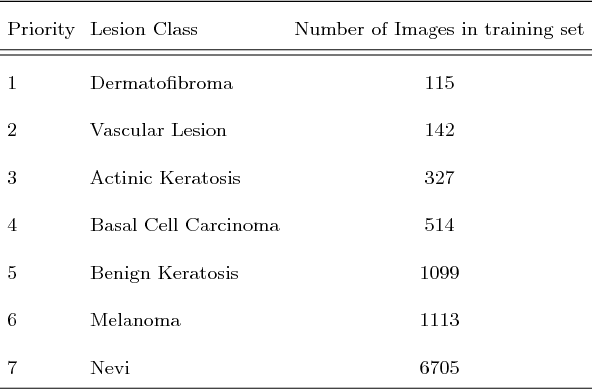

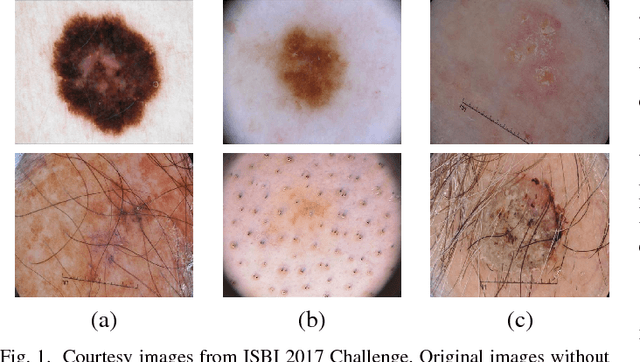

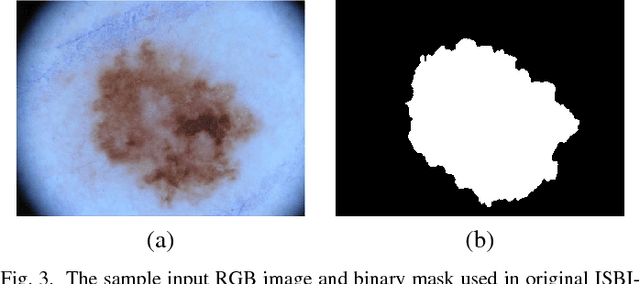

Abstract: With the rapid growth of medical imaging research, there is great interest in the automated detection of skin lesions with computer algorithms. The state-of-the-art datasets for skin lesions are often accompanied by a very limited amount of ground truth labeling, as it is laborious and expensive. Region of interest (ROI) detection is vital to locate the lesion accurately and must be robust to subtle features of different skin lesion types. In this work, we propose the use of two object localization meta-architectures for end-to-end ROI skin lesion detection in dermoscopic images. We trained Faster-RCNN-InceptionV2 and SSD-InceptionV2 on the ISBI-2017 training dataset and evaluated their performance on the ISBI-2017 testing set and the PH2 and HAM10000 datasets. Since there was no earlier work on ROI detection for skin lesions with CNNs, we compare the performance of the skin localization methods with the state-of-the-art segmentation method. The localization methods proved superior to the segmentation method in ROI detection on skin lesion datasets. In addition, based on the detected ROI, an automated natural data-augmentation method is proposed. To demonstrate the potential of our work, we developed a real-time mobile application for automated skin lesion detection. The code and mobile application will be made available for further research purposes.

Multi-Class Lesion Diagnosis with Pixel-wise Classification Network

Jul 24, 2018

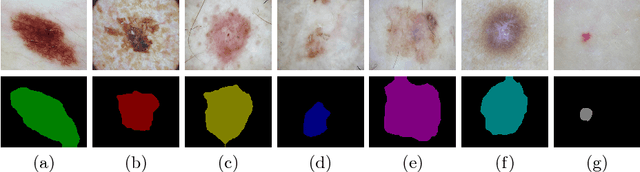

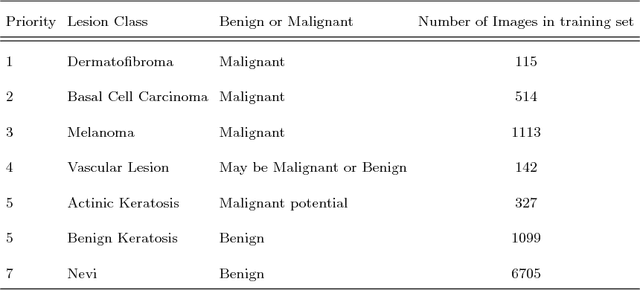

Abstract: Diagnosis of skin lesions is a very challenging task due to high inter-class similarities and intra-class variations in terms of color, size, site and appearance among different skin lesions. With the emergence of computer vision, especially deep learning algorithms, lesion diagnosis is made possible using these algorithms trained on dermoscopic images. Usually, deep classification networks are used for lesion diagnosis to determine different types of skin lesions. In this work, we used a pixel-wise classification network to provide lesion diagnosis rather than a classification network. We propose to use DeeplabV3+ for multi-class lesion diagnosis in dermoscopic images of Task 3 of the ISIC Challenge 2018. We used various post-processing methods with DeeplabV3+ to determine the lesion diagnosis in this challenge and submitted the test results.

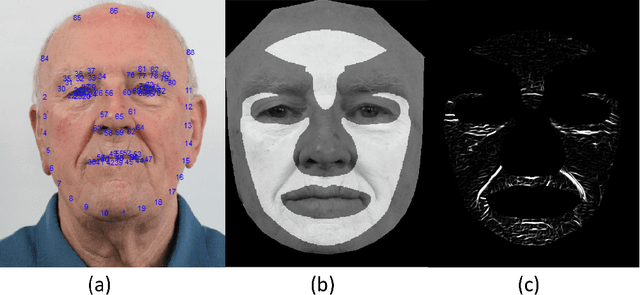

A Review on Facial Micro-Expressions Analysis: Datasets, Features and Metrics

May 07, 2018

Abstract: Facial micro-expressions are very brief, spontaneous facial expressions that appear on the face of humans when they either deliberately or unconsciously conceal an emotion. A micro-expression has a shorter duration than a macro-expression, which makes it more challenging for both humans and machines. Over the past ten years, automatic micro-expression recognition has attracted increasing attention from researchers in psychology, computer science, security, neuroscience and other related disciplines. The aim of this paper is to provide insights into automatic micro-expression analysis and recommendations for future research. Many datasets have been released over the last decade, facilitating the rapid growth of this field. However, comparison across different datasets is difficult due to inconsistency in experimental protocols, features used and evaluation methods. To address these issues, we review the datasets, features and performance metrics deployed in the literature. Relevant challenges such as the spatial temporal settings during data collection, emotional classes versus objective classes in data labelling, face regions in data analysis, standardisation of metrics and the requirements for real-world implementation are discussed. We conclude by proposing some promising future directions for advancing micro-expression research.

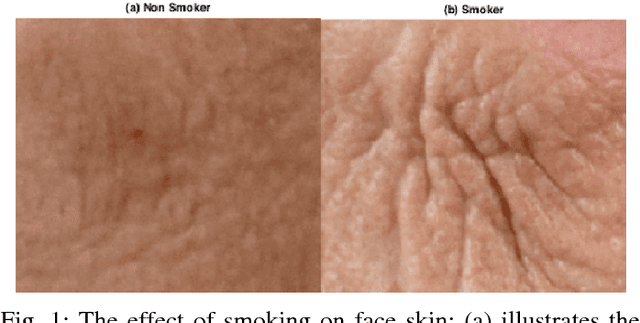

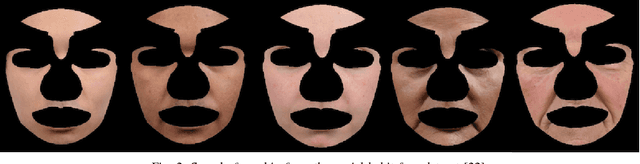

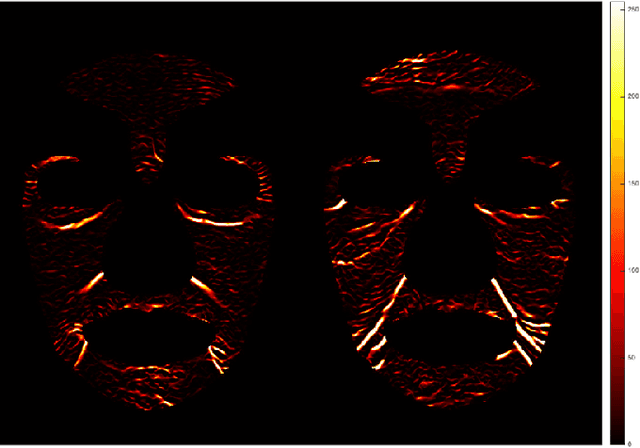

Automated Assessment of Facial Wrinkling: a case study on the effect of smoking

Dec 31, 2017

Abstract: Facial wrinkling is one of the most prominent biological changes accompanying the natural aging process. However, there are some external factors contributing to premature wrinkle development, such as sun exposure and smoking. Clinical studies have shown that heavy smoking causes premature wrinkle development. However, there is no computerised system that can automatically assess the facial wrinkles on the whole face. This study investigates the effect of smoking on facial wrinkling using a social habit face dataset and an automated computer vision algorithm. The wrinkle pattern, represented in the intensity range 0-255, was first extracted using a modified Hybrid Hessian Filter. The face was divided into ten predefined regions, and the wrinkles in each region were extracted. Statistical analysis was then performed to determine which regions are mainly affected by smoking. The result showed that the density of wrinkles for smokers in two regions around the mouth was significantly higher than for non-smokers, at a p-value of 0.05. Other regions are inconclusive due to the lack of a large-scale dataset. Finally, the wrinkles were visually compared between smoker and non-smoker faces by generating a generic 3D face model.

* 6 pages, 8 figures, Accepted in 2017 IEEE SMC International Conference

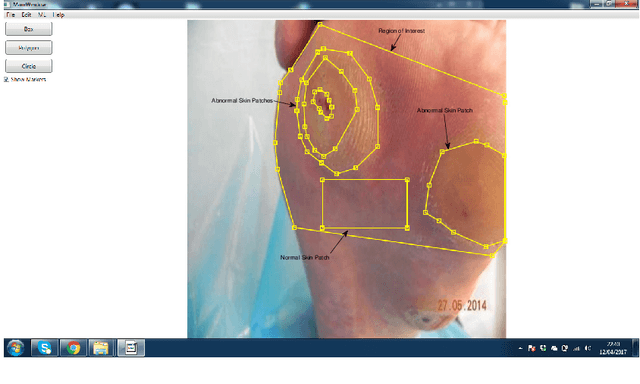

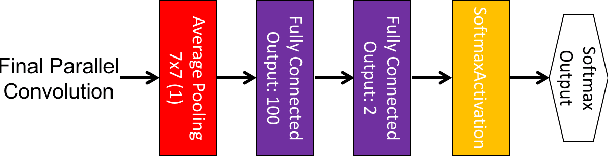

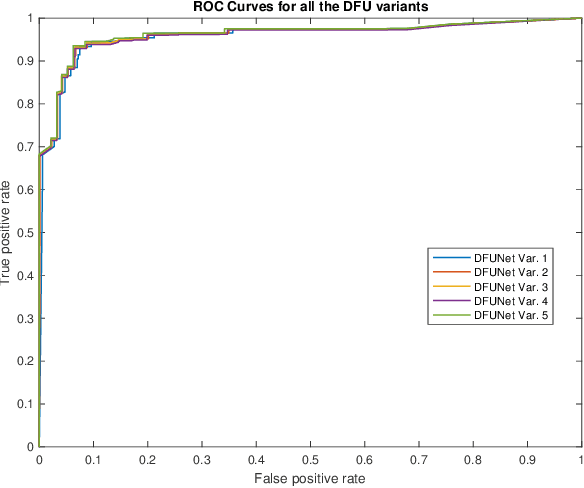

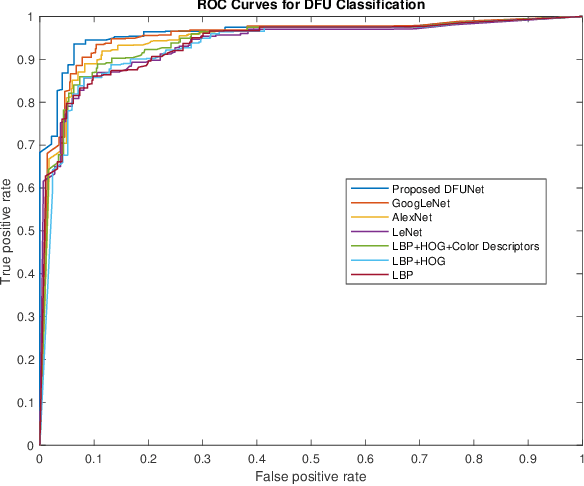

DFUNet: Convolutional Neural Networks for Diabetic Foot Ulcer Classification

Dec 10, 2017

Abstract: Globally, in 2016, one out of eleven adults suffered from Diabetes Mellitus. Diabetic Foot Ulcers (DFU) are a major complication of this disease, which if not managed properly can lead to amputation. Current clinical approaches to DFU treatment rely on patient and clinician vigilance, which has significant limitations such as the high cost involved in the diagnosis, treatment and lengthy care of the DFU. We collected an extensive dataset of foot images containing DFU from different patients. In this paper, we propose the use of traditional computer vision features for detecting foot ulcers among diabetic patients, which represents a cost-effective, remote and convenient healthcare solution. Furthermore, we used Convolutional Neural Networks (CNNs) for the first time in DFU classification. We propose a novel convolutional neural network architecture, DFUNet, with better feature extraction to identify the feature differences between healthy skin and the DFU. Using 10-fold cross-validation, DFUNet achieved an AUC score of 0.962. This outperformed both the machine learning and deep learning classifiers we tested. Here we present the development of a novel and highly sensitive DFUNet for objectively detecting the presence of DFUs. This novel approach has the potential to deliver a paradigm shift in diabetic foot care.

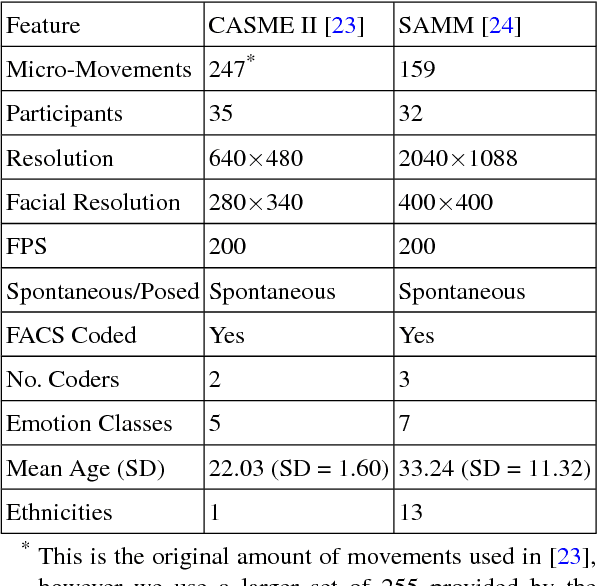

Objective Classes for Micro-Facial Expression Recognition

Dec 03, 2017

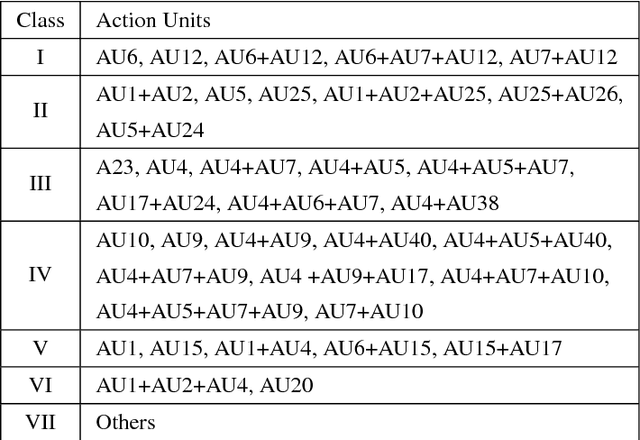

Abstract: Micro-expressions are brief spontaneous facial expressions that appear on a face when a person conceals an emotion, making them different to normal facial expressions in subtlety and duration. Currently, emotion classes within the CASME II dataset are based on Action Units and self-reports, creating conflicts during machine learning training. We will show that classifying expressions using Action Units, instead of predicted emotion, removes the potential bias of human reporting. The proposed classes are tested using LBP-TOP, HOOF and HOG 3D feature descriptors. The experiments are evaluated on two benchmark FACS coded datasets: CASME II and SAMM. The best result achieves 86.35% accuracy when classifying the proposed 5 classes on CASME II using HOG 3D, outperforming the result of the state-of-the-art 5-class emotional-based classification in CASME II. Results indicate that classification based on Action Units provides an objective method to improve micro-expression recognition.

Multi-class Semantic Segmentation of Skin Lesions via Fully Convolutional Networks

Nov 28, 2017

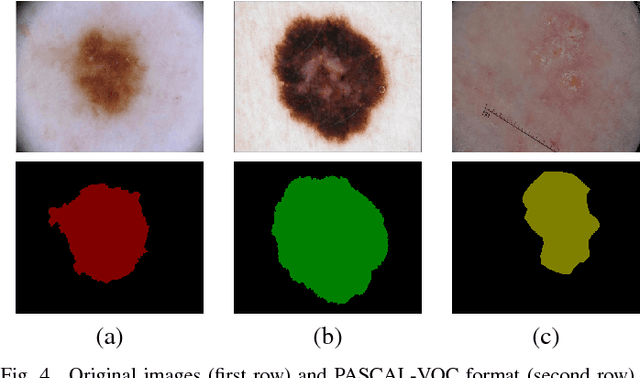

Abstract: Early detection of skin cancer, particularly melanoma, is crucial to enable advanced treatment. Due to the rapid growth of skin cancers, there is a growing need for computerized analysis of skin lesions. These processes include detection, classification, and segmentation. There are three main common types of skin lesions: benign nevi, melanoma, and seborrhoeic keratoses, which have huge intra-class variations in terms of color, size, place and appearance for each class and high inter-class visual similarities in dermoscopic images. The majority of current research focuses on melanoma segmentation, but it is also very important to segment the seborrhoeic keratoses and benign nevi lesions, as these regions potentially indicate the pre-cancer stage. We propose a multi-class semantic segmentation for these three classes from the publicly available ISBI-2017 challenge dataset, which consists of 2750 dermoscopic images. We propose an end-to-end solution using fully convolutional networks (FCNs) for multi-class semantic segmentation, which will automatically segment the melanoma, keratoses and benign lesions. To overcome the issue of data deficiency, we propose a transfer learning approach which uses both partial transfer learning and full transfer learning to train FCNs for multi-class semantic segmentation of skin lesions. The results are presented as the Dice Similarity Coefficient (Dice) to compare the performance of the deep learning segmentation methods on the dataset with 5-fold cross-validation. The results showed that the two-tier level transfer learning FCN-8s achieved the overall best result, with a Dice score of 0.785 in the benign category, 0.653 in melanoma segmentation, and 0.557 in seborrhoeic keratoses.
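The per-class Dice scores quoted above can be computed from a predicted label map and a ground-truth label map as twice the overlap divided by the total size of both regions. The following is a minimal sketch of that calculation. The class ids (0 = background, 1 = benign, 2 = melanoma, 3 = seborrhoeic keratosis) and the function name `dice_per_class` are hypothetical choices for illustration and are not taken from the paper.

```python
from typing import Dict, List

LabelMap = List[List[int]]  # one integer class id per pixel


def dice_per_class(pred: LabelMap, truth: LabelMap,
                   classes: List[int]) -> Dict[int, float]:
    """Dice = 2 * |P ∩ T| / (|P| + |T|), computed separately per class."""
    scores = {}
    for c in classes:
        inter = pred_count = truth_count = 0
        for pred_row, truth_row in zip(pred, truth):
            for p, t in zip(pred_row, truth_row):
                pred_count += p == c
                truth_count += t == c
                inter += (p == c) and (t == c)
        total = pred_count + truth_count
        # A class absent from both maps scores 1.0; this is an assumption.
        scores[c] = 2 * inter / total if total else 1.0
    return scores


# Toy 2x3 label maps using the hypothetical class ids 0, 1 and 2.
pred = [[0, 1, 1], [2, 2, 0]]
truth = [[0, 1, 0], [2, 2, 2]]
print(dice_per_class(pred, truth, classes=[0, 1, 2]))
```

Under k-fold cross-validation, scores like these would typically be averaged over the held-out images of each fold, although the abstract does not spell out the aggregation.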
