Mkhuseli Ngxande

Multi-Frame Quality Enhancement On Compressed Video Using Quantised Data of Deep Belief Networks

Jan 27, 2022

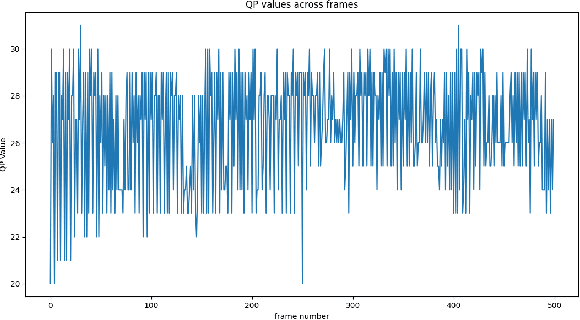

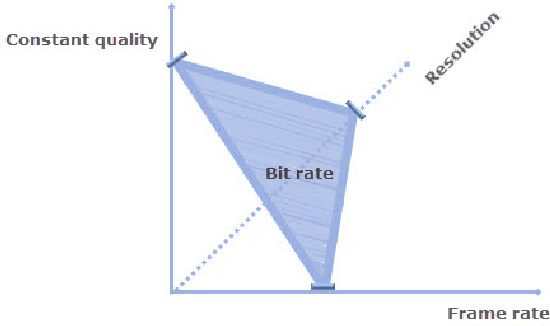

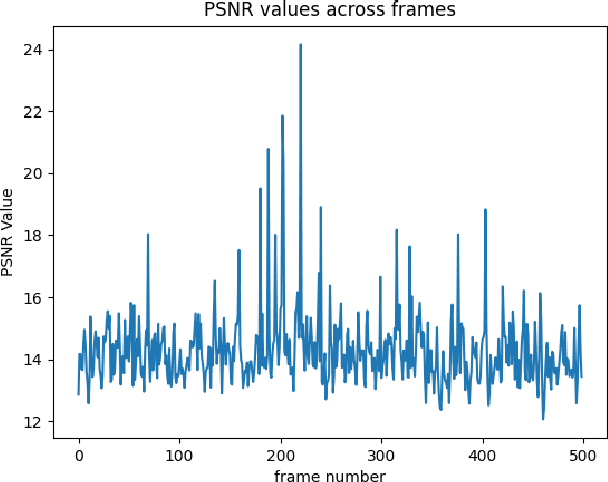

Abstract: In the age of streaming and surveillance, compressed video enhancement has become a problem in need of constant improvement. Here, we investigate a way of improving the Multi-Frame Quality Enhancement (MFQE) approach. MFQE uses the frames with peak quality in a given region to improve frames whose quality is lower in that region. Our approach obtains quantized data from the videos using a deep belief network. The quantized data is then fed into the MF-CNN architecture to enhance the compressed video. We further investigate the impact of using a Bi-LSTM for detecting the peak quality frames (PQFs). Our approach obtains better results than the original MFQE approach, which uses an SVM for PQF detection. However, it does not outperform the latest version of MFQE, which uses a Bi-LSTM for PQF detection.
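In the MFQE literature, a peak quality frame is a frame whose quality is higher than that of its neighbours. Each non-PQF is enhanced using its nearest preceding and following PQFs. The sketch below illustrates ground-truth labelling, of the kind a PQF detector such as an SVM or Bi-LSTM would be trained to predict. It assumes per-frame PSNR is available. It also assumes that the first and last frames are compared only with the one neighbour they have. `label_pqfs` and `nearest_pqfs` are hypothetical names, not code from the paper.

```python
from typing import List, Optional, Tuple


def label_pqfs(psnr: List[float]) -> List[bool]:
    """Mark frame i as a PQF if its PSNR exceeds that of every existing neighbour.

    Assumption: boundary frames are compared with their single neighbour only.
    """
    n = len(psnr)
    labels = []
    for i in range(n):
        higher_than_prev = i == 0 or psnr[i] > psnr[i - 1]
        higher_than_next = i == n - 1 or psnr[i] > psnr[i + 1]
        labels.append(higher_than_prev and higher_than_next)
    return labels


def nearest_pqfs(labels: List[bool], i: int) -> Tuple[Optional[int], Optional[int]]:
    """Return the indices of the closest PQF before and after frame i (None if absent)."""
    prev_idx = next((j for j in range(i - 1, -1, -1) if labels[j]), None)
    next_idx = next((j for j in range(i + 1, len(labels)) if labels[j]), None)
    return prev_idx, next_idx


if __name__ == "__main__":
    psnr = [32.0, 33.5, 32.8, 32.1, 34.0, 33.2]  # made-up per-frame PSNR values
    labels = label_pqfs(psnr)
    print(labels)                   # [False, True, False, False, True, False]
    print(nearest_pqfs(labels, 2))  # (1, 4)
```

In an MF-CNN-style pipeline, frame 2 would be enhanced together with frames 1 and 4 in this example.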

Bias Remediation in Driver Drowsiness Detection systems using Generative Adversarial Networks

Dec 10, 2019

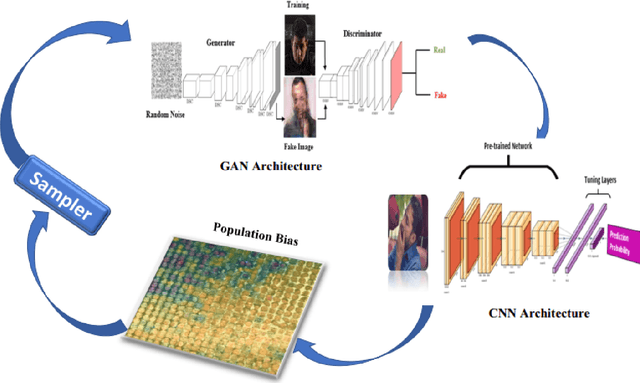

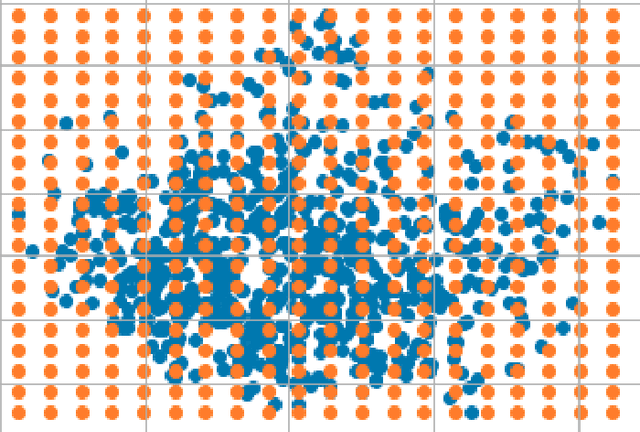

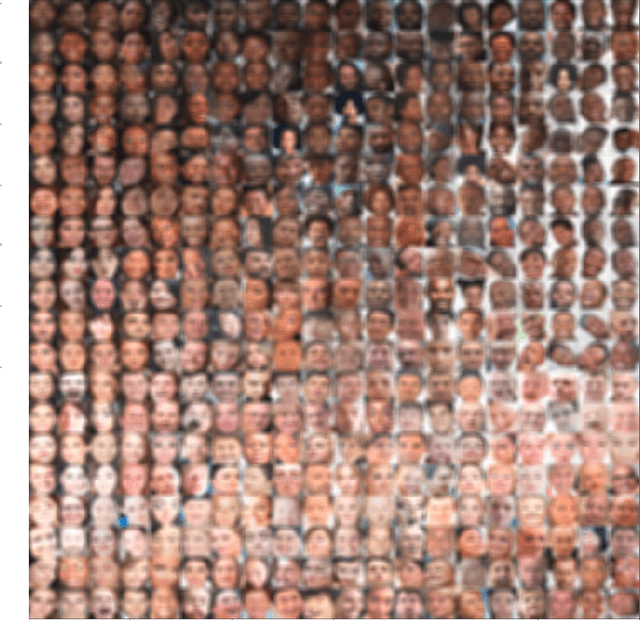

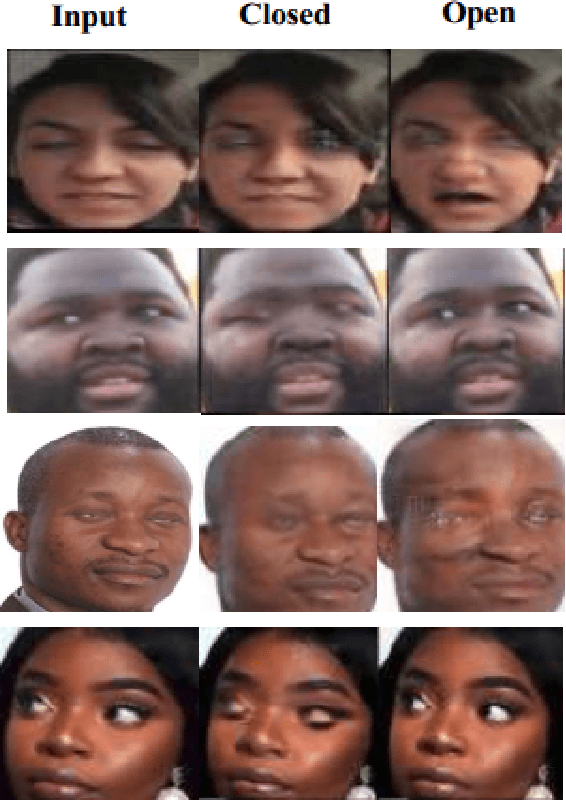

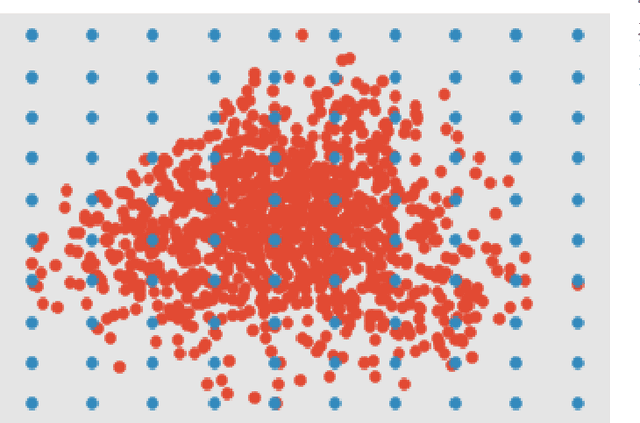

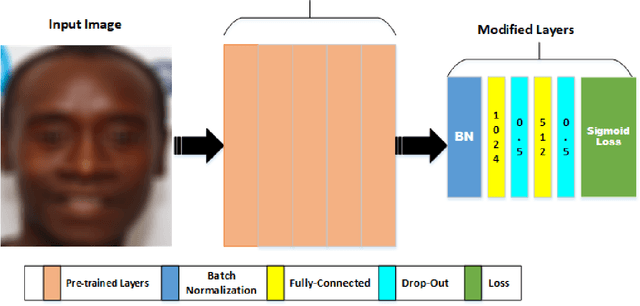

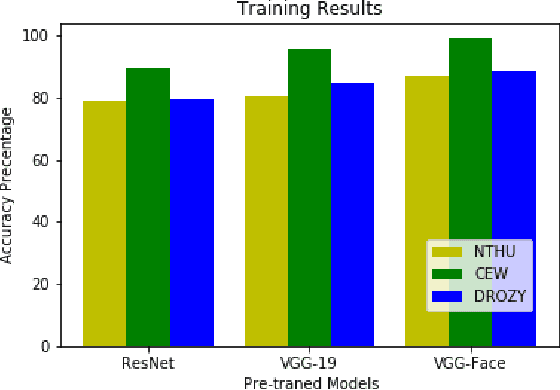

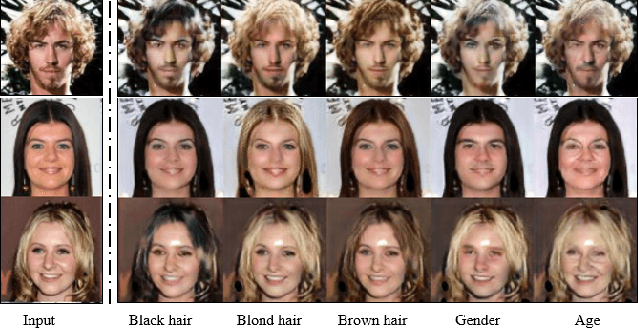

Abstract: Datasets are crucial when training a deep neural network. When datasets are unrepresentative, trained models are prone to bias because they are unable to generalise to real-world settings. This is particularly problematic for models trained in specific cultural contexts, which may not represent a wide range of races and thus fail to generalise. This is a particular challenge for driver drowsiness detection, where many publicly available datasets are unrepresentative because they cover only certain ethnic groups. Traditional augmentation methods are unable to improve a model's performance when tested on other groups with different facial attributes, and it is often challenging to build new, more representative datasets. In this paper, we introduce a novel framework that boosts drowsiness detection performance across different ethnic groups. Our framework improves a Convolutional Neural Network (CNN) trained for prediction by using Generative Adversarial Networks (GANs) for targeted data augmentation. The augmentation is guided by a population bias visualisation strategy that groups faces with similar facial attributes and highlights where the model is failing. A sampling method selects faces where the model is not performing well, and these are used to fine-tune the CNN. Experiments show the efficacy of our approach in improving driver drowsiness detection for under-represented ethnic groups: models trained on publicly available datasets are compared with a model trained using the proposed data augmentation strategy. Although developed in the context of driver drowsiness detection, the proposed framework is not limited to this task and can be applied to other applications.
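The abstract does not spell out the sampling rule, so here is one minimal reading. It assumes each face has already been assigned to a group of similar faces (for example, a cell of the bias visualisation) and that the model's correctness on each face is known. Under those assumptions, the rule keeps every face from groups whose accuracy falls below a threshold. `group_accuracy`, `select_underperforming` and the 0.8 default threshold are hypothetical choices for illustration.

```python
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence


def group_accuracy(groups: Sequence[Hashable], correct: Sequence[bool]) -> Dict[Hashable, float]:
    """Fraction of correctly classified faces in each group."""
    totals: Dict[Hashable, int] = defaultdict(int)
    hits: Dict[Hashable, int] = defaultdict(int)
    for group, ok in zip(groups, correct):
        totals[group] += 1
        hits[group] += int(ok)
    return {group: hits[group] / totals[group] for group in totals}


def select_underperforming(
    groups: Sequence[Hashable], correct: Sequence[bool], threshold: float = 0.8
) -> List[int]:
    """Indices of faces whose group accuracy is below the (assumed) threshold."""
    accuracy = group_accuracy(groups, correct)
    weak = {group for group, acc in accuracy.items() if acc < threshold}
    return [i for i, group in enumerate(groups) if group in weak]


if __name__ == "__main__":
    groups = ["A", "A", "B", "B", "B", "C"]  # toy group labels
    correct = [True, True, True, False, False, True]
    print(select_underperforming(groups, correct))  # [2, 3, 4]
```

In a pipeline like the one described, the selected faces would seed the GAN-based augmentation used to fine-tune the CNN.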

Detecting inter-sectional accuracy differences in driver drowsiness detection algorithms

Apr 23, 2019

Abstract: Convolutional Neural Networks (CNNs) have been used successfully across a broad range of areas, including data mining, object detection, and business. The dominance of CNNs follows a breakthrough by Alex Krizhevsky, which dramatically reduced the error rate on a general image classification task from 26.2% to 15.4%. In road safety, CNNs have been applied widely to traffic sign detection, obstacle detection, and lane departure checking. In addition, CNNs have been used in data mining systems that monitor driving patterns and recommend rest breaks when appropriate. This paper presents a driver drowsiness detection system and, by highlighting problems in detecting dark-skinned drivers' faces, shows that there are potential social challenges in applying these techniques. This is a particularly important challenge in African contexts, where there are more dark-skinned drivers. Unfortunately, publicly available datasets are often captured in different cultural contexts and therefore do not cover all ethnicities, which can lead to false detections or racially biased models. This work evaluates the performance obtained when training convolutional neural network models on commonly used driver drowsiness detection datasets and testing on datasets specifically chosen for broader representation. Results show that models trained using publicly available datasets suffer extensively from over-fitting and can exhibit racial bias, as shown by testing on a more representative dataset. We propose a novel visualisation technique that can help identify groups of people for whom there is potential for discrimination. It uses Principal Component Analysis (PCA) to produce a grid of faces sorted by similarity, combined with a model accuracy overlay.
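The PCA-based visualisation can be approximated roughly as follows. This is a sketch under stated assumptions, not the authors' implementation. It assumes faces are available as flattened feature vectors and uses only the first two principal components. Each principal axis is split into equal-width bins, so every grid cell collects similar faces and reports the model's accuracy on them. Function names are hypothetical, and the code requires NumPy.

```python
import numpy as np


def pca_project(features: np.ndarray, n_components: int = 2) -> np.ndarray:
    """Project row vectors onto their first principal components."""
    centred = features - features.mean(axis=0)
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(centred, full_matrices=False)
    return centred @ vt[:n_components].T


def accuracy_grid(features: np.ndarray, correct: np.ndarray, bins: int = 4):
    """Bin faces by their first two principal components and average accuracy per cell.

    Returns (accuracy, counts); accuracy is NaN for empty cells.
    """
    coords = pca_project(features, 2)
    lo = coords.min(axis=0)
    span = coords.max(axis=0) - lo
    span[span == 0] = 1.0  # avoid division by zero on a degenerate axis
    # Cell index in [0, bins - 1] along each principal axis (equal-width bins).
    cells = np.minimum(((coords - lo) / span * bins).astype(int), bins - 1)
    counts = np.zeros((bins, bins))
    hits = np.zeros((bins, bins))
    np.add.at(counts, (cells[:, 0], cells[:, 1]), 1)
    np.add.at(hits, (cells[:, 0], cells[:, 1]), correct.astype(float))
    accuracy = np.divide(hits, counts, out=np.full_like(hits, np.nan), where=counts > 0)
    return accuracy, counts


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    features = rng.normal(size=(200, 64))  # stand-in for flattened face crops
    correct = rng.random(200) > 0.2        # stand-in for per-face model correctness
    accuracy, counts = accuracy_grid(features, correct, bins=4)
    print(np.round(accuracy, 2))
```

Cells with low accuracy and non-trivial counts are the ones the visualisation would flag. Overlaying those values on representative face thumbnails gives the grid the abstract describes.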

DepthwiseGANs: Fast Training Generative Adversarial Networks for Realistic Image Synthesis

Mar 06, 2019

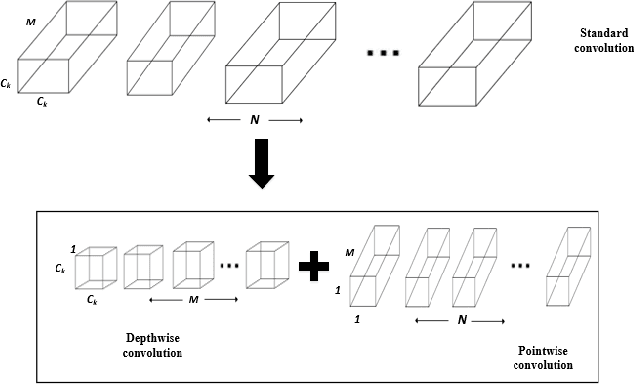

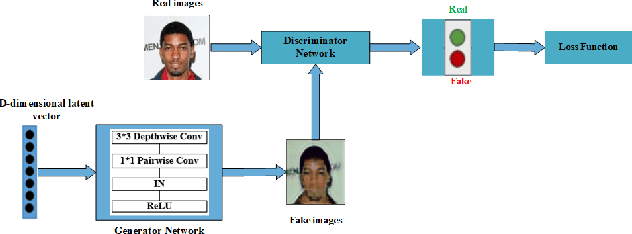

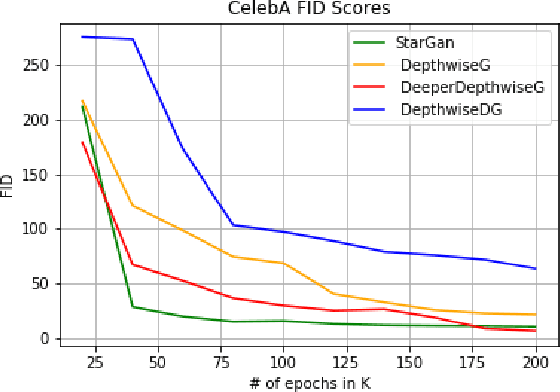

Abstract: Recent work has shown significant progress in synthetic data generation using Generative Adversarial Networks (GANs). GANs have been applied in many fields of computer vision, including text-to-image conversion, domain transfer, super-resolution, and image-to-video applications. In computer vision, traditional GANs are based on deep convolutional neural networks. However, deep convolutional neural networks can require extensive computational resources because they rely on many operations performed by convolutional layers, which can contain millions of trainable parameters. Training a GAN model can be difficult, and it takes a significant amount of time to reach an equilibrium point. In this paper, we investigate the use of depthwise separable convolutions to reduce training time while maintaining data generation performance. Our results show that a DepthwiseGAN architecture can generate realistic images in shorter training periods than a StarGAN architecture, but that model capacity still plays a significant role in generative modelling. In addition, we show that depthwise separable convolutions perform best when applied only to the generator. To evaluate the quality of generated images, we use the Fréchet Inception Distance (FID), which compares the distribution of generated images with that of the training dataset.
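A depthwise separable convolution factorises a standard k×k convolution into two steps. The first is a per-channel (depthwise) k×k convolution. The second is a 1×1 (pointwise) convolution that mixes channels. The sketch below is illustrative rather than the DepthwiseGAN code. It counts weights for both layer types, ignoring biases, to show where the parameter savings mentioned in the abstract come from. The function names are hypothetical.

```python
def standard_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a k x k convolution mapping c_in to c_out channels (biases ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a depthwise k x k convolution followed by a 1 x 1 pointwise convolution."""
    depthwise = k * k * c_in  # one k x k filter per input channel
    pointwise = c_in * c_out  # 1 x 1 convolution that mixes channels
    return depthwise + pointwise


if __name__ == "__main__":
    std = standard_conv_params(3, 256, 256)
    sep = depthwise_separable_params(3, 256, 256)
    print(std, sep, round(std / sep, 2))  # 589824 67840 8.69
```

In general, the separable layer needs a fraction 1/c_out + 1/k² of the standard layer's weights. For a 3×3 layer with 256 channels in and out, that is roughly an 8.7× reduction.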
