Mia-Katrin Kvalsund

Stability and Geometry of Attractors in Neural Cellular Automata

Apr 14, 2026

Abstract: Throughout the literature on Neural Cellular Automata (NCAs), it is often taken for granted that the systems learn attractors. This is shown through evolving the system for many timesteps and noting visual similarity to the goal state. There remain many questions after such an analysis. Namely, what kind of attractors do we have? Is their behavior ordered or chaotic? Can we estimate stability over very long time horizons? What really happens in the attractor when perturbations are applied? In this paper, we present a case study to help answer these questions, with methods drawn from the literature on dynamical systems theory. We use the growing gecko NCA of Mordvintsev et al. (2020) with deterministic cell updates as a case study. To the best of the authors' knowledge, we present the first visualizations of NCA attractor dynamics. We also analyze them using the Lyapunov and Fourier spectra, to reveal that the NCA displays oscillatory, periodic and quasi-periodic behavior, and that these behaviors arise early during training. This challenges the belief that NCAs learn fixed point attractors. Finally, we show that large perturbations to the attractor states can throw the NCAs into a secondary mode separate from the original attractor. We hope that this initial foray into NCA attractor dynamics expands the toolkit for NCA researchers to analyze the robustness and stability of their systems.

Visualising the Attractor Landscape of Neural Cellular Automata

Apr 12, 2026

Abstract: As Neural Cellular Automata (NCAs) are increasingly applied outside of the toy models in Artificial Life, there is a pressing need to understand how they behave and to build appropriate routes to interpret what they have learnt. By their very nature, the benefits of training NCAs are balanced with a lack of interpretability: we can engineer emergent behaviour, but have limited ability to understand what has been learnt. In this paper, we apply a variety of techniques to pry open the NCA black box and glean some understanding of what it has learnt to do. We apply techniques from manifold learning (principal components analysis and both dense and sparse autoencoders) along with techniques from topological data analysis (persistent homology) to capture the NCA's underlying behavioural manifold, with varying success. Results show that when analysis is performed at a macroscopic level (i.e. taking the entire NCA state as a single data point), the underlying manifold is often quite simple and can be captured and analysed quite well. When analysis is performed at a microscopic level (i.e. taking the state of individual cells as a single data point), the manifold is highly complex and more complicated techniques are required in order to make sense of it.

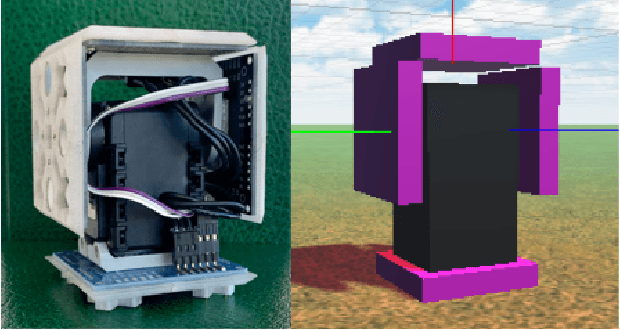

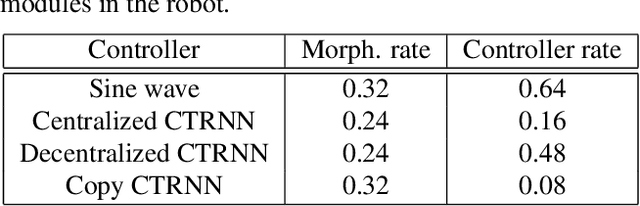

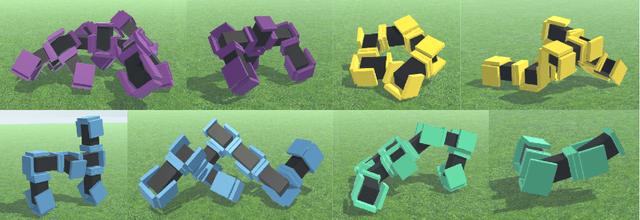

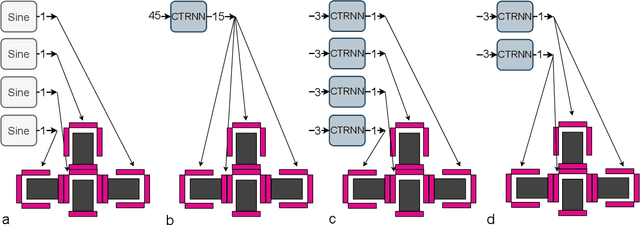

Centralized and Decentralized Control in Modular Robots and Their Effect on Morphology

Jun 27, 2022

Abstract: In Evolutionary Robotics, evolutionary algorithms are used to co-optimize morphology and control. However, co-optimizing leads to different challenges: How do you optimize a controller for a body that often changes its number of inputs and outputs? Researchers must then make some choice between centralized or decentralized control. In this article, we study the effects of centralized and decentralized controllers on modular robot performance and morphologies. This is done by implementing one centralized and two decentralized continuous time recurrent neural network controllers, as well as a sine wave controller for a baseline. We found that a decentralized approach that was more independent of morphology size performed significantly better than the other approaches. It also worked well in a larger variety of morphology sizes. In addition, we highlighted the difficulties of implementing centralized control for a changing morphology, and saw that our centralized controller struggled more with early convergence than the other approaches. Our findings indicate that duplicated decentralized networks are beneficial when evolving both the morphology and control of modular robots. Overall, if these findings translate to other robot systems, our results and issues encountered can help future researchers make a choice of control method when co-optimizing morphology and control.
