Maya R. Gupta

Multi-Task Averaging

Aug 24, 2012

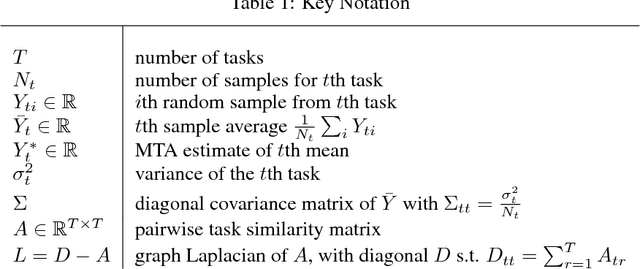

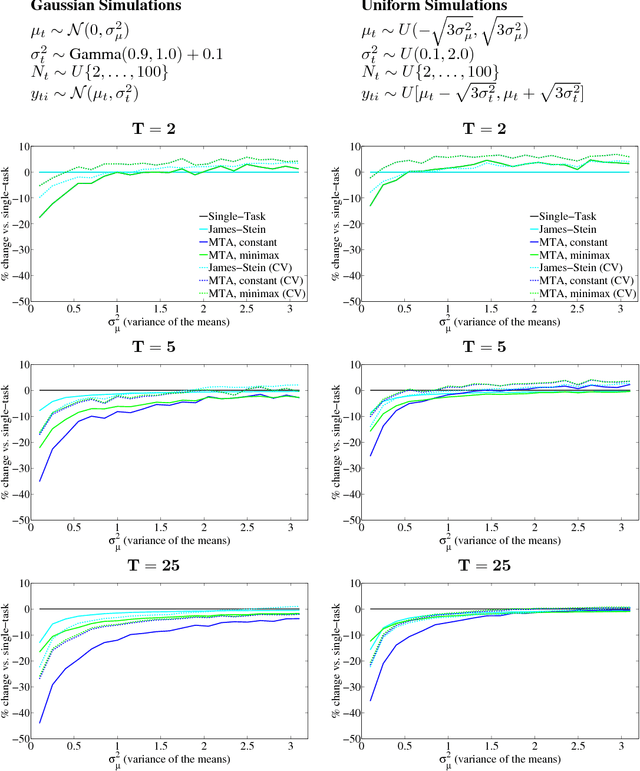

Abstract: We present a multi-task learning approach to jointly estimate the means of multiple independent data sets. The proposed multi-task averaging (MTA) algorithm results in a convex combination of the single-task maximum likelihood estimates. We derive the optimal minimum risk estimator and the minimax estimator, and show that these estimators can be efficiently estimated. Simulations and real data experiments demonstrate that MTA estimators often outperform both single-task and James-Stein estimators.
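
The abstract does not state the estimator's formula, so the following is only a minimal sketch of how a convex combination of single-task sample means could be computed. It assumes a graph-Laplacian regularized closed form, with a task-similarity matrix and a regularization weight `gamma` supplied by the user. The function name `mta_estimate` and all of its parameters are hypothetical, chosen for illustration.

```python
import numpy as np

def mta_estimate(sample_means, variances, counts, similarity, gamma=1.0):
    """Hypothetical sketch: shrink per-task sample means toward each other.

    Assumed form (not taken from the abstract):
        estimates = (I + (gamma / T) * Sigma @ L)^{-1} @ sample_means
    where Sigma = diag(variance_t / count_t) and L is the graph Laplacian
    of a nonnegative task-similarity matrix.
    """
    y = np.asarray(sample_means, dtype=float)
    T = y.shape[0]
    A = np.asarray(similarity, dtype=float)
    # Symmetrize the similarities, then build the graph Laplacian D - A.
    A_sym = 0.5 * (A + A.T)
    L = np.diag(A_sym.sum(axis=1)) - A_sym
    # Variance of each single-task sample mean.
    Sigma = np.diag(np.asarray(variances, dtype=float) /
                    np.asarray(counts, dtype=float))
    # Weight matrix; each row holds one task's combination weights.
    W = np.linalg.inv(np.eye(T) + (gamma / T) * Sigma @ L)
    return W @ y, W

# Illustrative inputs only (made up for this sketch).
means = [1.0, 2.0, 3.0]
estimates, weights = mta_estimate(
    means, variances=[1.0, 1.0, 1.0], counts=[10, 10, 10],
    similarity=np.ones((3, 3)), gamma=1.0)
```

Under these assumptions each row of `weights` is nonnegative and sums to one, so every estimate is a convex combination of the single-task sample means. Setting `gamma=0` recovers the single-task estimates unchanged.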

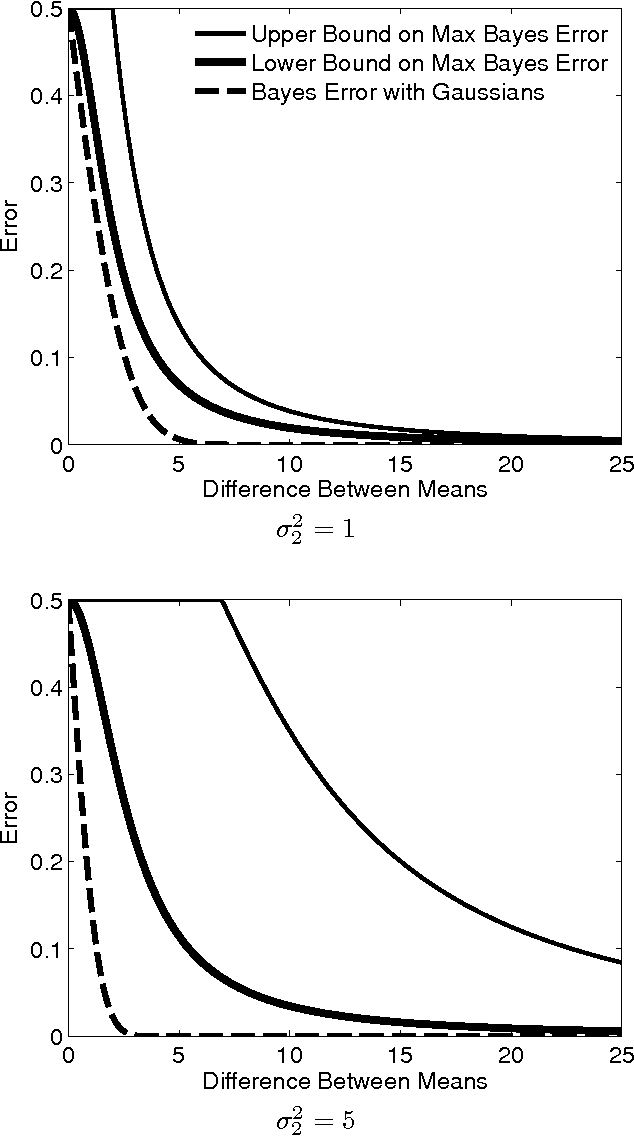

Bounds on the Bayes Error Given Moments

Jan 30, 2012

Abstract: We show how to compute lower bounds for the supremum Bayes error if the class-conditional distributions must satisfy moment constraints, where the supremum is with respect to the unknown class-conditional distributions. Our approach makes use of Curto and Fialkow's solutions for the truncated moment problem. The lower bound shows that the popular Gaussian assumption is not robust in this regard. We also construct an upper bound for the supremum Bayes error by constraining the decision boundary to be linear.
