Matteo Pantano

A Position Statement on Endovascular Models and Effectiveness Metrics for Mechanical Thrombectomy Navigation, on behalf of the Stakeholder Taskforce for AI-assisted Robotic Thrombectomy (START)

Mar 30, 2026

Abstract:While we are making progress in overcoming infectious diseases and cancer, one of the major medical challenges of the mid-21st century will be the rising prevalence of stroke. Large vessel occlusions are especially debilitating, yet effective treatment (needed within hours to achieve best outcomes) remains limited due to geography. One solution for improving timely access to mechanical thrombectomy in geographically diverse populations is the deployment of robotic surgical systems. Artificial intelligence (AI) assistance may enable the upskilling of operators in this emerging therapeutic delivery approach. Our aim was to establish consensus frameworks for developing and validating AI-assisted robots for thrombectomy. Objectives included standardizing effectiveness metrics and defining reference testbeds across in silico, in vitro, ex vivo, and in vivo environments. To achieve this, we convened experts in neurointervention, robotics, data science, health economics, policy, statistics, and patient advocacy. Consensus was built through an incubator day, a Delphi process, and a final Position Statement. We identified that the four essential testbed environments each had distinct validation roles. Realism requirements vary: simpler testbeds should include realistic vessel anatomy compatible with guidewire and catheter use, while standard testbeds should incorporate deformable vessels. More advanced testbeds should include blood flow, pulsatility, and disease features. There are two macro-classes of effectiveness metrics: one for in silico, in vitro, and ex vivo stages, focused on technical navigation, and another for in vivo stages, focused on clinical outcomes. Patient safety is central to this technology's development. One patient safety task needed now is to correlate in vitro measurements with in vivo complications.

* Published in Journal of the American Heart Association

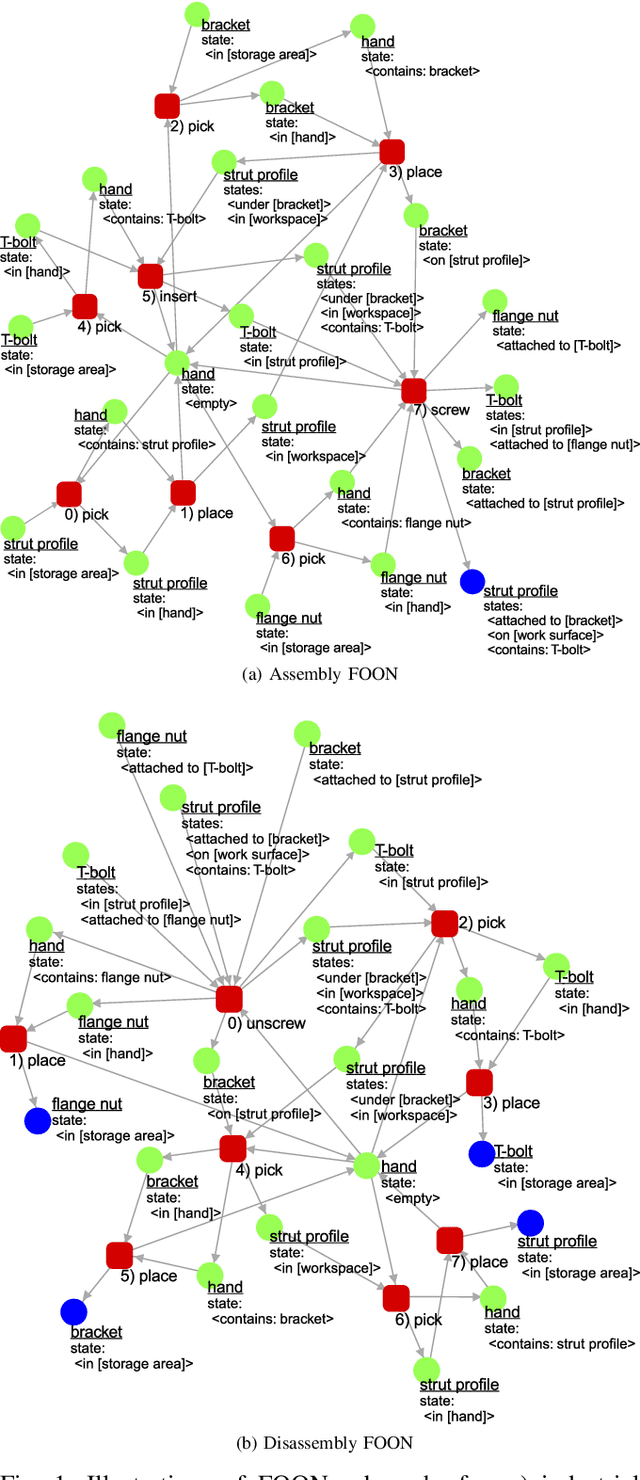

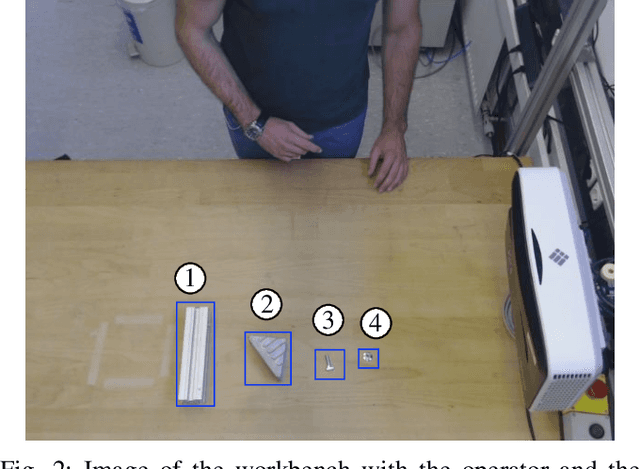

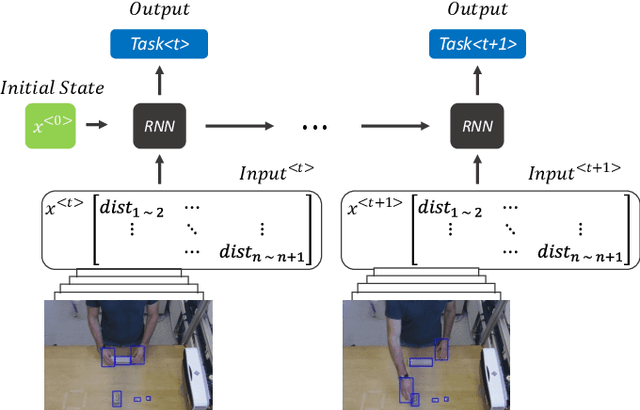

Grounding of the Functional Object-Oriented Network in Industrial Tasks

Apr 05, 2022

Abstract:In this preliminary work, we propose to design an activity recognition system that is suitable for Industrie 4.0 (I4.0) applications, especially focusing on Learning from Demonstration (LfD) in collaborative robot tasks. More precisely, we focus on the issue of data exchange between an activity recognition system and a collaborative robotic system. We propose an activity recognition system with linked data using functional object-oriented network (FOON) to facilitate industrial use cases. Initially, we drafted a FOON for our use case. Afterwards, an action is estimated by using object and hand recognition systems coupled with a recurrent neural network, which refers to FOON objects and states. Finally, the detected action is shared via a context broker using an existing linked data model, thus enabling the robotic system to interpret the action and execute it afterwards. Our initial results show that FOON can be used for an industrial use case and that we can use existing linked data models in LfD applications.

Capability-based Frameworks for Industrial Robot Skills: a Survey

Mar 01, 2022

Abstract:The research community is puzzled by terms such as skill, action, atomic unit, and others when trying to describe robot capabilities. However, integrating such capabilities into industrial scenarios requires a standardized description. This work, through a structured review approach, tries to identify commonalities in the research community. The review indicates that most industrially focused research targets simple capabilities like pick and place; that a large number of authors agree on a structure consisting of task, skill, and primitive; that the Robot Operating System is a common framework in both industrial and non-industrial domains; and that skills are a main enabler for high-mix, low-volume production.
