Massimiliano Ronzani

Graph-based Event Log Repair

Aug 07, 2025

Abstract:The quality of event logs in Process Mining is crucial when applying any form of analysis to them. In real-world event logs, the acquisition of data can be non-trivial (e.g., due to the execution of manual activities and related manual recording or to issues in collecting, for each event, all its attributes), and often may end up with events recorded with some missing information. Standard approaches to the problem of trace (or log) reconstruction either require the availability of a process model that is used to fill missing values by leveraging different reasoning techniques or employ a Machine Learning/Deep Learning model to restore the missing values by learning from similar cases. In recent years, a new type of Deep Learning model that is capable of handling input data encoded as graphs has emerged, namely Graph Neural Networks. Graph Neural Network models, and even more so Heterogeneous Graph Neural Networks, offer the advantage of working with a more natural representation of complex multi-modal sequences like the execution traces in Process Mining, allowing for more expressive and semantically rich encodings. In this work, we focus on the development of a Heterogeneous Graph Neural Network model that, given a trace containing some incomplete events, will return the full set of attributes missing from those events. We evaluate our work against a state-of-the-art approach leveraging autoencoders on two synthetic logs and four real event logs, on different types of missing values. Different from state-of-the-art model-free approaches, which mainly focus on repairing a subset of event attributes, the proposed approach shows very good performance in reconstructing all different event attributes.

Generating Counterfactual Explanations Under Temporal Constraints

Mar 03, 2025

Abstract:Counterfactual explanations are one of the prominent eXplainable Artificial Intelligence (XAI) techniques, and suggest changes to input data that could alter predictions, leading to more favourable outcomes. Existing counterfactual methods do not readily apply to temporal domains, such as that of process mining, where data take the form of traces of activities that must obey to temporal background knowledge expressing which dynamics are possible and which not. Specifically, counterfactuals generated off-the-shelf may violate the background knowledge, leading to inconsistent explanations. This work tackles this challenge by introducing a novel approach for generating temporally constrained counterfactuals, guaranteed to comply by design with background knowledge expressed in Linear Temporal Logic on process traces (LTLp). We do so by infusing automata-theoretic techniques for LTLp inside a genetic algorithm for counterfactual generation. The empirical evaluation shows that the generated counterfactuals are temporally meaningful and more interpretable for applications involving temporal dependencies.

Generating the Traces You Need: A Conditional Generative Model for Process Mining Data

Nov 04, 2024Abstract:In recent years, trace generation has emerged as a significant challenge within the Process Mining community. Deep Learning (DL) models have demonstrated accuracy in reproducing the features of the selected processes. However, current DL generative models are limited in their ability to adapt the learned distributions to generate data samples based on specific conditions or attributes. This limitation is particularly significant because the ability to control the type of generated data can be beneficial in various contexts, enabling a focus on specific behaviours, exploration of infrequent patterns, or simulation of alternative 'what-if' scenarios. In this work, we address this challenge by introducing a conditional model for process data generation based on a conditional variational autoencoder (CVAE). Conditional models offer control over the generation process by tuning input conditional variables, enabling more targeted and controlled data generation. Unlike other domains, CVAE for process mining faces specific challenges due to the multiperspective nature of the data and the need to adhere to control-flow rules while ensuring data variability. Specifically, we focus on generating process executions conditioned on control flow and temporal features of the trace, allowing us to produce traces for specific, identified sub-processes. The generated traces are then evaluated using common metrics for generative model assessment, along with additional metrics to evaluate the quality of the conditional generation

Recommending the optimal policy by learning to act from temporal data

Mar 16, 2023

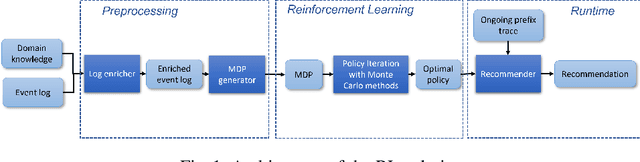

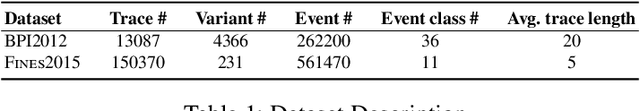

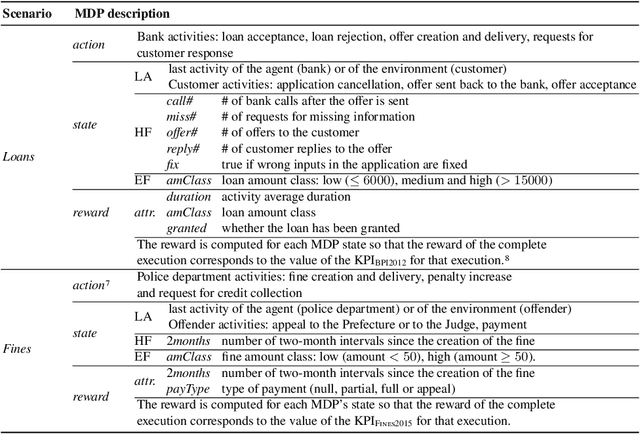

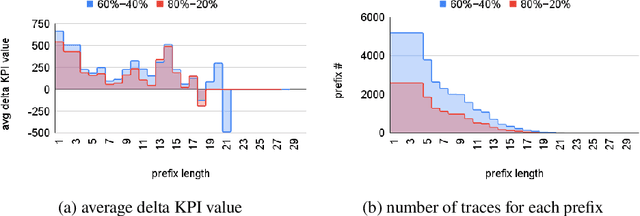

Abstract:Prescriptive Process Monitoring is a prominent problem in Process Mining, which consists in identifying a set of actions to be recommended with the goal of optimising a target measure of interest or Key Performance Indicator (KPI). One challenge that makes this problem difficult is the need to provide Prescriptive Process Monitoring techniques only based on temporally annotated (process) execution data, stored in, so-called execution logs, due to the lack of well crafted and human validated explicit models. In this paper we aim at proposing an AI based approach that learns, by means of Reinforcement Learning (RL), an optimal policy (almost) only from the observation of past executions and recommends the best activities to carry on for optimizing a KPI of interest. This is achieved first by learning a Markov Decision Process for the specific KPIs from data, and then by using RL training to learn the optimal policy. The approach is validated on real and synthetic datasets and compared with off-policy Deep RL approaches. The ability of our approach to compare with, and often overcome, Deep RL approaches provides a contribution towards the exploitation of white box RL techniques in scenarios where only temporal execution data are available.

Learning to act: a Reinforcement Learning approach to recommend the best next activities

Mar 29, 2022

Abstract:The rise of process data availability has led in the last decade to the development of several data-driven learning approaches. However, most of these approaches limit themselves to use the learned model to predict the future of ongoing process executions. The goal of this paper is moving a step forward and leveraging data with the purpose of learning to act by supporting users with recommendations for the best strategy to follow, in order to optimize a measure of performance. In this paper, we take the (optimization) perspective of one process actor and we recommend the best activities to execute next, in response to what happens in a complex external environment, where there is no control on exogenous factors. To this aim, we investigate an approach that learns, by means of Reinforcement Learning, an optimal policy from the observation of past executions and recommends the best activities to carry on for optimizing a Key Performance Indicator of interest. The potentiality of the approach has been demonstrated on two scenarios taken from real-life data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge