Martin Fleming

Crashing Waves vs. Rising Tides: Preliminary Findings on AI Automation from Thousands of Worker Evaluations of Labor Market Tasks

Apr 01, 2026

Abstract: We propose that AI automation is a continuum between: (i) crashing waves where AI capabilities surge abruptly over small sets of tasks, and (ii) rising tides where the increase in AI capabilities is more continuous and broad-based. We test for these effects in preliminary evidence from an ongoing evaluation of AI capabilities across over 3,000 broad-based tasks derived from the U.S. Department of Labor O*NET categorization that are text-based and thus LLM-addressable. Based on more than 17,000 evaluations by workers from these jobs, we find little evidence of crashing waves (in contrast to recent work by METR), but substantial evidence that rising tides are the primary form of AI automation. AI performance is high and improving rapidly across a wide range of tasks. We estimate that, in 2024-Q2, AI models successfully complete tasks that take humans approximately 3-4 hours with about a 50% success rate, increasing to about 65% by 2025-Q3. If recent trends in AI capability growth persist, this pace of AI improvement implies that LLMs will be able to complete most text-related tasks with success rates of, on average, 80%-95% by 2029 at a minimally sufficient quality level. Achieving near-perfect success rates at this quality level or comparable success rates at superior quality would require several additional years. These AI capability improvements would impact the economy and labor market as organizations adopt AI, which could have a substantially longer timeline.

Economics of Human and AI Collaboration: When is Partial Automation More Attractive than Full Automation?

Mar 31, 2026

Abstract: This paper develops a unified framework for evaluating the optimal degree of task automation. Moving beyond binary automate-or-not assessments, we model automation intensity as a continuous choice in which firms minimize costs by selecting an AI accuracy level, from no automation through partial human-AI collaboration to full automation. On the supply side, we estimate an AI production function via scaling-law experiments linking performance to data, compute, and model size. Because AI systems exhibit predictable but diminishing returns to these inputs, the cost of higher accuracy is convex: good performance may be inexpensive, but near-perfect accuracy is disproportionately costly. Full automation is therefore often not cost-minimizing; partial automation, where firms retain human workers for residual tasks, frequently emerges as the equilibrium. On the demand side, we introduce an entropy-based measure of task complexity that maps model accuracy into a labor substitution ratio, quantifying human labor displacement at each accuracy level. We calibrate the framework with O*NET task data, a survey of 3,778 domain experts, and GPT-4o-derived task decompositions, implementing it in computer vision. Task complexity shapes substitution: low-complexity tasks see high substitution, while high-complexity tasks favor limited partial automation. Scale of deployment is a key determinant: AI-as-a-Service and AI agents spread fixed costs across users, sharply expanding economically viable tasks. At the firm level, cost-effective automation captures approximately 11% of computer-vision-exposed labor compensation; under economy-wide deployment, this share rises sharply. Since other AI systems exhibit similar scaling-law economics, our mechanisms extend beyond computer vision, reinforcing that partial automation is often the economically rational long-run outcome, not merely a transitional phase.
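The convexity claim in this abstract can be illustrated with a minimal sketch. It assumes a hypothetical power-law relation between error rate and resource spend, error = a * cost^(-b), which is a common scaling-law form but is not confirmed as the paper's estimated production function. The function name and the constants `a` and `b` are illustrative only.

```python
def cost_for_accuracy(accuracy: float, a: float = 1.0, b: float = 0.5) -> float:
    """Resource cost needed to reach a target accuracy.

    Assumes (hypothetically) error = a * cost**(-b), so
    cost = (a / error)**(1 / b). The constants a and b are
    illustrative, not values taken from the paper.
    """
    if not 0.0 < accuracy < 1.0:
        raise ValueError("accuracy must be strictly between 0 and 1")
    error = 1.0 - accuracy
    return (a / error) ** (1.0 / b)


if __name__ == "__main__":
    # Each step removes 90% of the remaining error, yet costs far more
    # than the step before it: the cost curve is convex in accuracy.
    for target in (0.90, 0.99, 0.999):
        print(f"accuracy {target:.3f}: relative cost {cost_for_accuracy(target):,.0f}")
```

Under these assumed constants, each further reduction of the remaining error by a factor of ten multiplies the required cost by roughly one hundred, which is the kind of disproportionate expense that can make stopping short of full automation the cheaper choice.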

How Much Progress Has There Been in NVIDIA Datacenter GPUs?

Jan 27, 2026

Abstract: Graphics Processing Units (GPUs) are the state-of-the-art architecture for essential tasks, ranging from rendering 2D/3D graphics to accelerating workloads in supercomputing centers and, of course, Artificial Intelligence (AI). As GPUs continue improving to satisfy ever-increasing performance demands, analyzing past and current progress becomes paramount in determining future constraints on scientific research. This is particularly compelling in the AI domain, where rapid technological advancements and fierce global competition have led the United States to recently implement export control regulations limiting international access to advanced AI chips. For this reason, this paper studies technical progress in NVIDIA datacenter GPUs released from the mid-2000s until today. Specifically, we compile a comprehensive dataset of datacenter NVIDIA GPUs comprising several features, ranging from computational performance to release price. Then, we examine trends in main GPU features and estimate progress indicators for per-memory bandwidth, per-dollar, and per-watt increase rates. Our main results identify doubling times of 1.44 and 1.69 years for FP16 and FP32 operations (without accounting for sparsity benefits), while FP64 doubling times range from 2.06 to 3.79 years. Off-chip memory size and bandwidth grew at slower rates than computing performance, doubling every 3.32 to 3.53 years. The release prices of datacenter GPUs have roughly doubled every 5.1 years, while their power consumption has approximately doubled every 16 years. Finally, we quantify the potential implications of current U.S. export control regulations in terms of the potential performance gaps that would result if implementation were assumed to be complete and successful. We find that recently proposed changes to export controls would shrink the potential performance gap from 23.6x to 3.54x.
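For readers who prefer annual growth rates, the doubling times quoted in this abstract can be converted with the standard identity: annual growth factor = 2^(1 / doubling time in years). The sketch below is a convenience calculation, not part of the paper's method, and assumes smooth exponential growth; the function and dictionary names are illustrative.

```python
def annual_growth_factor(doubling_time_years: float) -> float:
    """Yearly multiplicative growth implied by a doubling time,
    assuming smooth exponential growth."""
    if doubling_time_years <= 0:
        raise ValueError("doubling time must be positive")
    return 2.0 ** (1.0 / doubling_time_years)


# Doubling times (in years) as quoted in the abstract.
DOUBLING_TIMES = {
    "FP16 operations": 1.44,
    "FP32 operations": 1.69,
    "release price": 5.1,
    "power consumption": 16.0,
}

if __name__ == "__main__":
    for name, years in DOUBLING_TIMES.items():
        factor = annual_growth_factor(years)
        print(f"{name}: x{factor:.3f} per year ({(factor - 1) * 100:.1f}% annual growth)")
```

A shorter doubling time corresponds to a larger yearly factor, so the conversion makes it easier to compare compute growth against the much slower growth in price and power.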

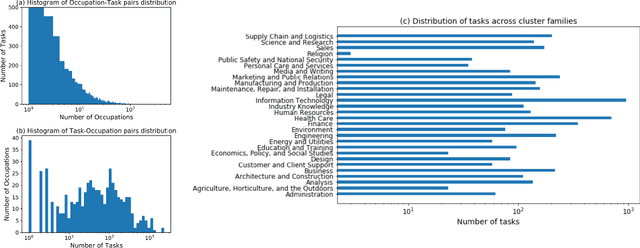

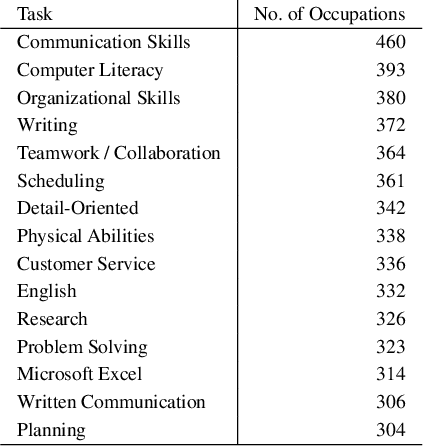

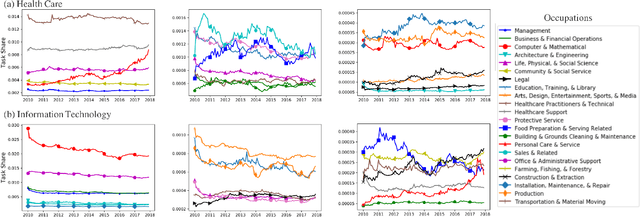

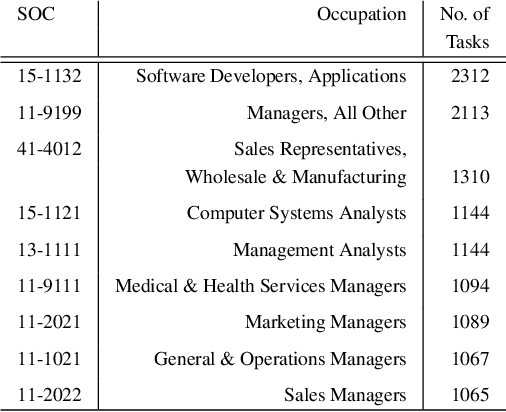

Learning Occupational Task-Shares Dynamics for the Future of Work

Jan 28, 2020

Abstract: The recent wave of AI and automation has been argued to differ from previous General Purpose Technologies (GPTs), in that it may lead to rapid change in occupations' underlying task requirements and persistent technological unemployment. In this paper, we apply a novel methodology of dynamic task shares to a large dataset of online job postings to explore how exactly occupational task demands have changed over the past decade of AI innovation, especially across high-, mid-, and low-wage occupations. Notably, big data and AI have risen significantly among high-wage occupations since 2012 and 2016, respectively. We built an ARIMA model to predict future occupational task demands and showcase several relevant examples in Healthcare, Administration, and IT. Such task-demand predictions across occupations will play a pivotal role in retraining the workforce of the future.
