Martin Biehl

Araya Inc., Tokyo, Japan

Formal approaches to a definition of agents

Apr 10, 2017

Abstract: This thesis contributes to the formalisation of the notion of an agent within the class of finite multivariate Markov chains. Agents are seen as entities that act, perceive, and are goal-directed. We present a new measure that can be used to identify entities (called $\iota$-entities), some general requirements for entities in multivariate Markov chains, as well as formal definitions of actions and perceptions suitable for such entities. The intuition behind $\iota$-entities is that entities are spatiotemporal patterns for which every part makes every other part more probable. The measure, complete local integration (CLI), is formally investigated in general Bayesian networks. It is based on the specific local integration (SLI), which is measured with respect to a partition. CLI is the minimum value of SLI over all partitions. We prove that $\iota$-entities are blocks in specific partitions of the global trajectory. These partitions are the finest partitions that achieve a given SLI value. We also establish the transformation behaviour of SLI under permutations of nodes in the network. We go on to present three conditions on general definitions of entities. These are not fulfilled by sets of random variables, i.e. the perception-action loop, which is often used to model agents, is too restrictive. We propose that any general entity definition should in effect specify a subset (called an entity-set) of the set of all spatiotemporal patterns of a given multivariate Markov chain. The set of $\iota$-entities is such a set. Importantly, the perception-action loop also induces an entity-set. We then propose formal definitions of actions and perceptions for arbitrary entity-sets. These specialise to standard notions in the case of the perception-action loop entity-set. Finally, we look at some very simple examples.
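
The relation between SLI and CLI described in this abstract can be illustrated with a minimal Python sketch. The abstract does not state the formula, so the sketch assumes that the SLI of a pattern with respect to a partition is the log-ratio of the pattern's probability to the product of the probabilities of its blocks, and that the minimum for CLI is taken over partitions with at least two blocks. The function names (`marginal`, `partitions`, `sli`, `cli`) and the toy joint distribution are hypothetical, and a generic joint distribution stands in for the Bayesian network treated in the thesis.

```python
import math

def marginal(joint, assignment):
    """Probability that the variables in `assignment` (index -> value) take those values."""
    return sum(p for state, p in joint.items()
               if all(state[i] == v for i, v in assignment.items()))

def partitions(items):
    """Yield every partition of the list `items` as a list of blocks."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for part in partitions(rest):
        # Put `first` into each existing block in turn ...
        for k in range(len(part)):
            yield part[:k] + [[first] + part[k]] + part[k + 1:]
        # ... or give `first` a block of its own.
        yield [[first]] + part

def sli(joint, pattern, partition):
    """Assumed form of SLI: log2 p(pattern) minus the sum of log2 p(block) over blocks.
    The pattern is assumed to have positive probability."""
    whole = marginal(joint, pattern)
    parts = [marginal(joint, {i: pattern[i] for i in block}) for block in partition]
    return math.log2(whole) - sum(math.log2(q) for q in parts)

def cli(joint, pattern):
    """CLI as the minimum SLI over all partitions with at least two blocks (assumption)."""
    nodes = sorted(pattern)
    return min(sli(joint, pattern, part)
               for part in partitions(nodes) if len(part) > 1)

# Toy distributions over two binary variables (illustrative only).
copied = {(0, 0): 0.5, (1, 1): 0.5}                      # second variable copies the first
independent = {(a, b): 0.25 for a in (0, 1) for b in (0, 1)}

pattern = {0: 0, 1: 0}  # both variables take value 0
cli_copied = cli(copied, pattern)            # 1.0 bit: each part makes the other more probable
cli_independent = cli(independent, pattern)  # 0.0 bits: the parts are unrelated
```

Under these assumptions, a pattern with positive CLI matches the intuition in the abstract that every part makes every other part more probable. The enumeration of all partitions grows very quickly with pattern size, so this sketch is only practical for very small patterns.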

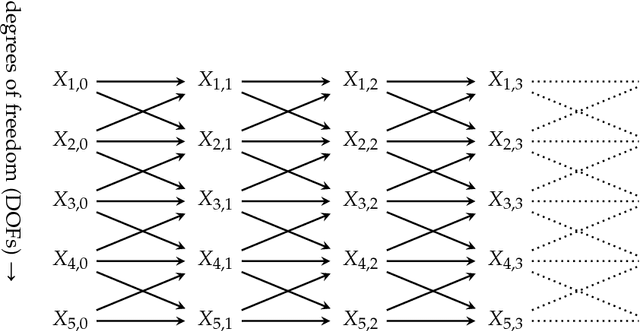

Neural Coarse-Graining: Extracting slowly-varying latent degrees of freedom with neural networks

Sep 01, 2016

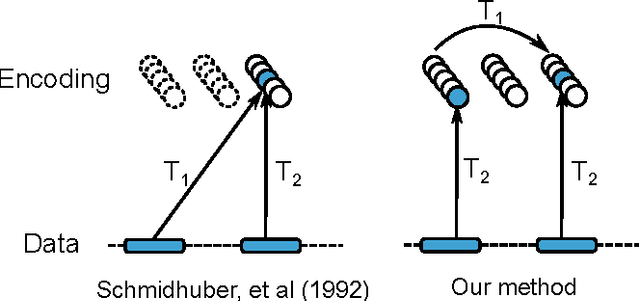

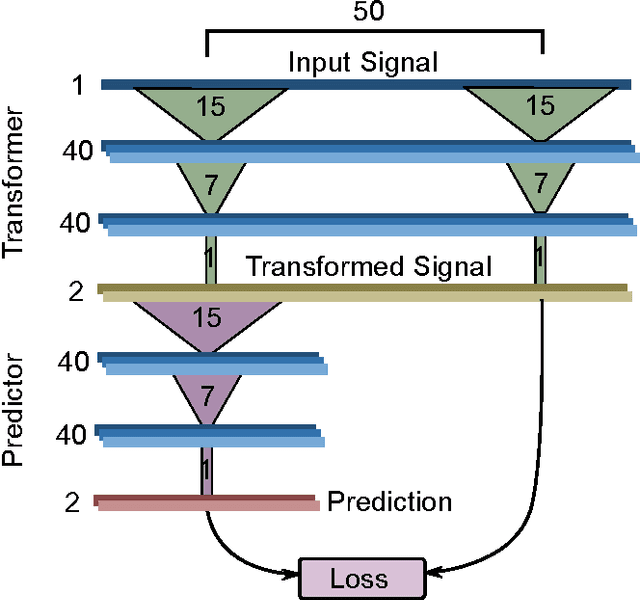

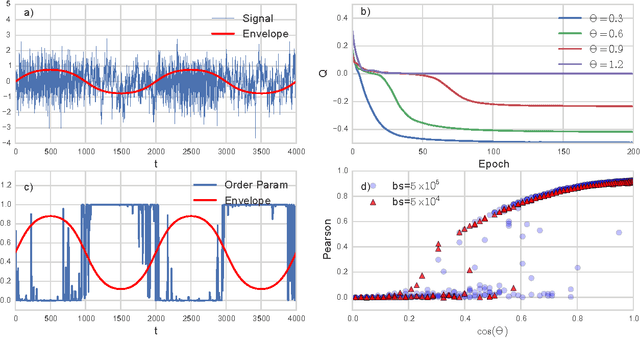

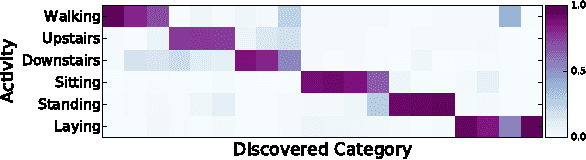

Abstract: We present a loss function for neural networks that encompasses an idea of trivial versus non-trivial predictions, such that the network jointly determines its own prediction goals and learns to satisfy them. This permits the network to focus on the subsets of a problem that are most amenable to its abilities, while discarding 'distracting' elements that interfere with its learning. To do this, the network first transforms the raw data into a higher-level categorical representation, and then trains a predictor from that new time series to its future. To prevent a trivial solution of mapping the signal to zero, we introduce a measure of non-triviality via a contrast between the prediction error of the learned model and that of a naive model of the overall signal statistics. The transform can learn to discard uninformative and unpredictable components of the signal in favor of the features which are both highly predictive and highly predictable. This creates a coarse-grained model of the time-series dynamics, focusing on predicting the slowly varying latent parameters which control the statistics of the time series, rather than predicting the fast details directly. The result is a semi-supervised algorithm which is capable of extracting latent parameters, segmenting sections of time series with differing statistics, and building a higher-level representation of the underlying dynamics from unlabeled data.
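
The non-triviality contrast mentioned in this abstract can be sketched as follows. This is one possible reading, not the loss from the paper: it assumes the categorical representation is given as rows of class probabilities, measures prediction error by cross-entropy, and takes the naive model to be the average class distribution of the targets. The names `cross_entropy` and `contrast_loss` are hypothetical.

```python
import math

def cross_entropy(targets, predictions, eps=1e-12):
    """Mean cross-entropy between target and predicted class distributions."""
    total = 0.0
    for t, q in zip(targets, predictions):
        total -= sum(ti * math.log(qi + eps) for ti, qi in zip(t, q))
    return total / len(targets)

def contrast_loss(targets, predictions):
    """Predictor error minus the error of a naive model that always outputs
    the overall class frequencies of the targets (assumed form of the contrast)."""
    n = len(targets)
    k = len(targets[0])
    marginal = [sum(t[j] for t in targets) / n for j in range(k)]
    naive = [marginal] * n
    return cross_entropy(targets, predictions) - cross_entropy(targets, naive)

# A constant representation is perfectly predictable but earns no advantage
# over the naive model, so the contrast is zero.
constant = [[1.0, 0.0]] * 4
trivial_loss = contrast_loss(constant, constant)

# An alternating representation that is predicted perfectly beats the naive
# model by log(2) nats, so the contrast is negative.
alternating = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]
informative_loss = contrast_loss(alternating, alternating)
```

In this reading, minimising the contrast rewards representations whose future is predicted better than the overall statistics alone would allow, which is why collapsing the signal to a constant gains nothing.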

Towards information based spatiotemporal patterns as a foundation for agent representation in dynamical systems

May 18, 2016

Abstract: We present some arguments for why existing methods for representing agents fall short in applications crucial to artificial life. Using a thought experiment involving a fictitious dynamical systems model of the biosphere, we argue that metabolism, motility, and the concept of counterfactual variation should be compatible with any agent representation in dynamical systems. We then propose an information-theoretic notion of *integrated spatiotemporal patterns* which we believe can serve as the basic building block of an agent definition. We argue that these patterns are capable of solving the problems mentioned before. We also test this in some preliminary experiments.

* 8 pages, 3 figures
