Mario Campos-Soberanis

Prototype of a robotic system to assist the learning process of English language with text-generation through DNN

Sep 20, 2023

Abstract: In recent years, there has been significant growth in the field of Natural Language Processing (NLP) for performing multiple tasks, including English Language Teaching (ELT). An effective strategy to support the learning process uses interactive devices to engage learners in self-learning. In this work, we present a working prototype of a humanoid robotic system to assist English language self-learners through text generation using Long Short-Term Memory (LSTM) neural networks. The learners interact with the system through a graphical user interface that generates text according to the user's English level. The experiments were conducted with English learners, and the results were measured according to the International English Language Testing System (IELTS) rubric. Preliminary results show an increase in the Grammatical Range of learners who interacted with the system.

Evolutionary optimization of contexts for phonetic correction in speech recognition systems

Feb 23, 2021

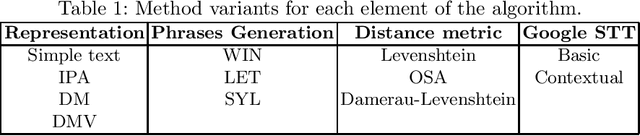

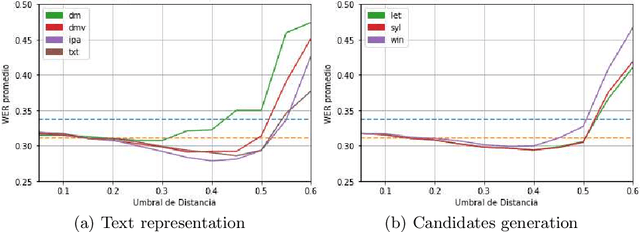

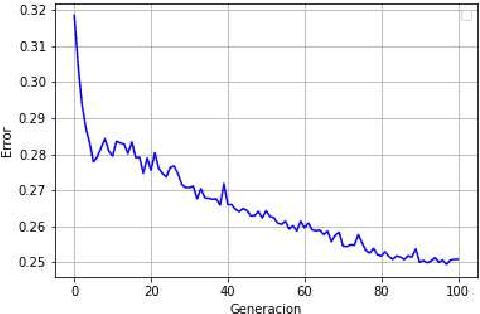

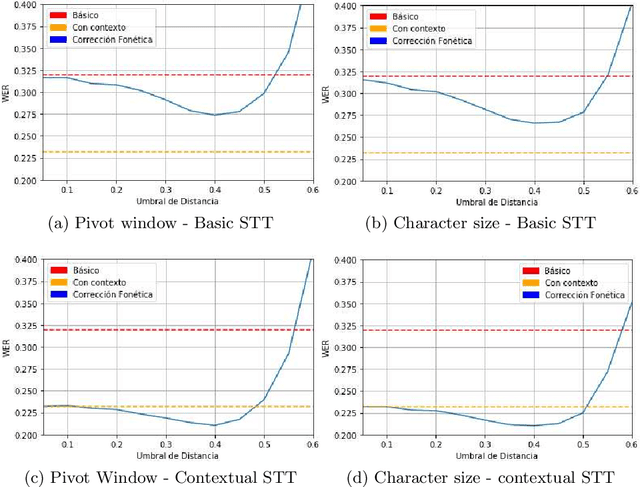

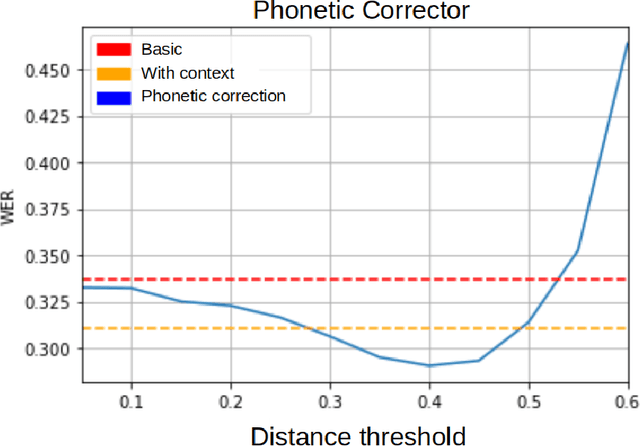

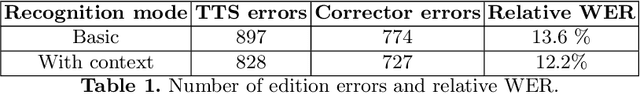

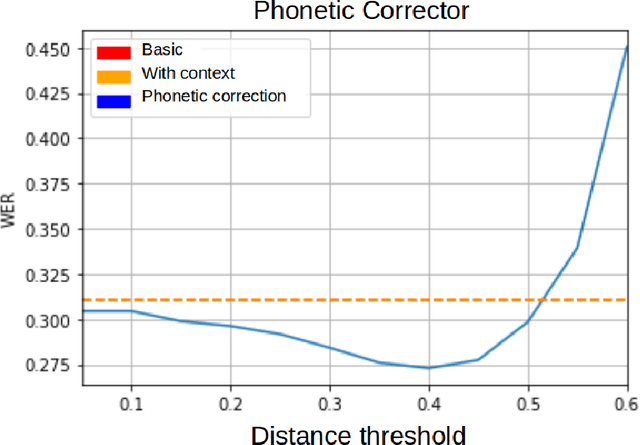

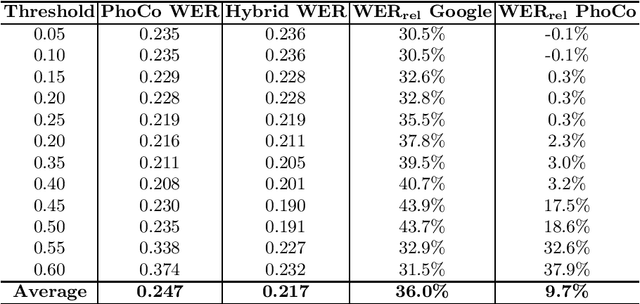

Abstract: Automatic Speech Recognition (ASR) is an area of growing academic and commercial interest due to the high demand for applications that use it to provide a natural communication method. General-purpose ASR systems commonly fail in applications that use a domain-specific language. Various strategies have been used to reduce the error, such as providing a context that modifies the language model and applying post-processing correction methods. This article explores the use of an evolutionary process to generate an optimized context for a specific application domain, as well as different correction techniques based on phonetic distance metrics. The results show the viability of a genetic algorithm as a tool for context optimization, which, combined with a post-processing correction based on phonetic representations, can reduce the errors in the recognized speech.

* 13 pages, 4 figures. This article is a translation of the paper "Optimización evolutiva de contextos para la corrección fonética en sistemas de reconocimiento del habla" presented at COMIA 2019

Fixing Errors of the Google Voice Recognizer through Phonetic Distance Metrics

Feb 18, 2021

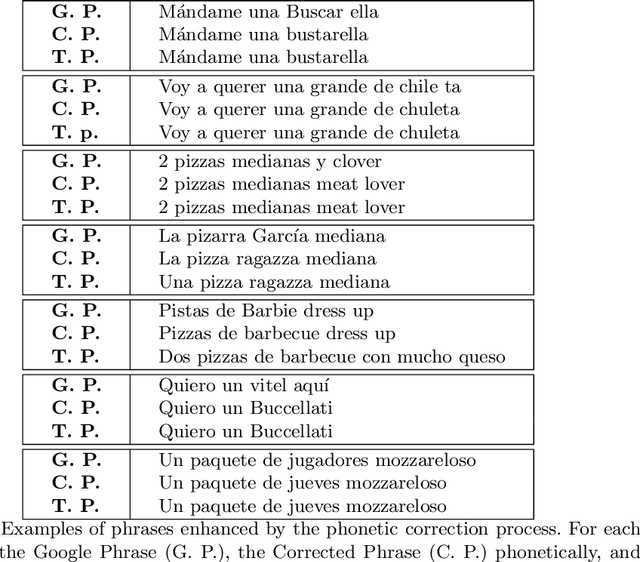

Abstract: Speech recognition systems for the Spanish language, such as Google's, produce errors quite frequently when used in applications of a specific domain. These errors mostly occur when recognizing words new to the recognizer's language model or ad hoc to the domain. This article presents an algorithm that uses Levenshtein distance on phonemes to reduce the speech recognizer's errors. The preliminary results show that it is possible to correct the recognizer's errors significantly by using this metric and using a dictionary of specific phrases from the domain of the application. Despite being designed for particular domains, the algorithm proposed here is of general application. The phrases that must be recognized can be explicitly defined for each application, without the algorithm having to be modified. It is enough to indicate to the algorithm the set of sentences on which it must work. The algorithm's complexity is $O(tn)$, where $t$ is the number of words in the transcript to be corrected, and $n$ is the number of phrases specific to the domain.

* 13 pages, 4 figures. This article is a translation of the paper "Corrección de errores del reconocedor de voz de Google usando métricas de distancia fonética" presented at COMIA 2018
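
The abstract above describes correcting a transcript by comparing it, at the phoneme level, against a dictionary of domain phrases. The following is only a minimal sketch of that general idea, not the paper's algorithm: the spelling-to-phoneme rules in `_RULES`, the function names `to_phonemes` and `correct`, the sliding-window strategy, and the `threshold` value are all assumptions made for illustration. Each transcript position is compared against each domain phrase, so the number of comparisons grows with $t \cdot n$.

```python
def levenshtein(a, b):
    """Edit distance between two sequences (here, lists of phoneme symbols)."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            cost = 0 if x == y else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


# Toy spelling-to-phoneme rules (an assumption, NOT a real Spanish G2P model).
# Order matters: "ch" must be rewritten before the silent "h" is dropped.
_RULES = [("qu", "k"), ("ll", "y"), ("ch", "C"), ("h", ""), ("v", "b"), ("z", "s")]


def to_phonemes(text):
    """Map text to a rough list of phoneme-like symbols, ignoring spaces."""
    s = text.lower()
    for src, dst in _RULES:
        s = s.replace(src, dst)
    return [ch for ch in s if not ch.isspace()]


def correct(transcript, phrases, threshold=0.34):
    """Replace word windows that sound close to a domain phrase.

    `threshold` is a hypothetical cutoff on the length-normalized distance.
    """
    words = transcript.split()
    targets = [(p, to_phonemes(p), len(p.split())) for p in phrases]
    out, i = [], 0
    while i < len(words):
        best = None  # (normalized distance, phrase, window length in words)
        for phrase, phones, k in targets:
            window = words[i:i + k]
            if len(window) < k:
                continue
            cand = to_phonemes(" ".join(window))
            d = levenshtein(cand, phones) / max(len(cand), len(phones), 1)
            if d <= threshold and (best is None or d < best[0]):
                best = (d, phrase, k)
        if best is not None:
            out.append(best[1])
            i += best[2]
        else:
            out.append(words[i])
            i += 1
    return " ".join(out)


if __name__ == "__main__":
    print(correct("quiero una pisa ahuayana por favor", ["pizza hawaiana"]))
```

Normalizing the distance by the longer phoneme sequence keeps the threshold comparable across short and long phrases; a real system would need a proper phonetic transcription step in place of the toy rules.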

Hybrid phonetic-neural model for correction in speech recognition systems

Feb 12, 2021

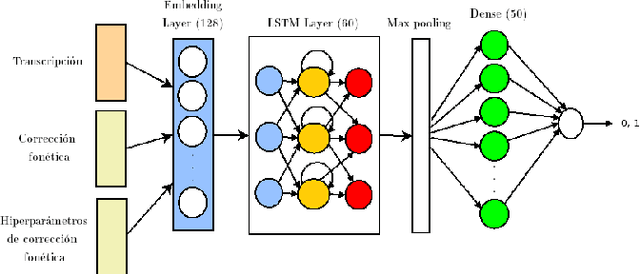

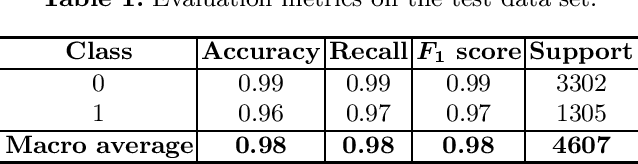

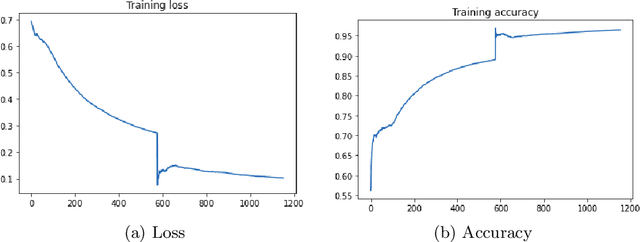

Abstract: Automatic speech recognition (ASR) is a relevant area in multiple settings because it provides a natural communication mechanism between applications and users. ASRs often fail in environments that use language specific to particular application domains. Some strategies have been explored to reduce errors in closed ASRs through post-processing, particularly automatic spell checking, and deep learning approaches. In this article, we explore using a deep neural network to refine the results of a phonetic correction algorithm applied to a telesales audio database. The results exhibit a reduction in the word error rate (WER), both in the original transcription and in the phonetic correction, which shows the viability of deep learning models together with post-processing correction strategies to reduce errors made by closed ASRs in specific language domains.
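
For readers unfamiliar with the metric reported above, word error rate is conventionally computed as the word-level edit distance (substitutions, deletions, and insertions) between a reference and a hypothesis transcript, divided by the number of reference words. The sketch below shows that standard definition; the function name `wer` and the example sentences are illustrative assumptions and are not taken from the paper's data.

```python
def wer(reference, hypothesis):
    """Word error rate: word-level edit distance divided by reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion of a reference word
                            curr[j - 1] + 1,      # insertion of an extra word
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # Illustrative sentences only: one substitution and one deletion
    # against a six-word reference.
    print(wer("the cat sat on the mat", "the cat sit on mat"))
```

Because insertions count as errors, the value can exceed 1.0 when the hypothesis is much longer than the reference.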
