Marina Paolanti

EEG2Vision: A Multimodal EEG-Based Framework for 2D Visual Reconstruction in Cognitive Neuroscience

Apr 09, 2026

Abstract: Reconstructing visual stimuli from non-invasive electroencephalography (EEG) remains challenging due to its low spatial resolution and high noise, particularly under realistic low-density electrode configurations. To address this, we present EEG2Vision, a modular, end-to-end EEG-to-image framework that systematically evaluates reconstruction performance across different EEG resolutions (128, 64, 32, and 24 channels) and enhances visual quality through a prompt-guided post-reconstruction boosting mechanism. Starting from EEG-conditioned diffusion reconstruction, the boosting stage uses a multimodal large language model to extract semantic descriptions and leverages image-to-image diffusion to refine geometry and perceptual coherence while preserving EEG-grounded structure. Our experiments show that semantic decoding accuracy degrades significantly with channel reduction (e.g., 50-way Top-1 accuracy from 89% to 38%), while reconstruction quality decreases only slightly (e.g., FID from 76.77 to 80.51). The proposed boosting consistently improves perceptual metrics across all configurations, achieving up to 9.71% IS gains in low-channel settings. A user study confirms a clear perceptual preference for boosted reconstructions. The proposed approach significantly improves the feasibility of real-time brain-to-image applications using low-resolution EEG devices, potentially unlocking this type of application outside laboratory settings.

Brain3D: EEG-to-3D Decoding of Visual Representations via Multimodal Reasoning

Apr 09, 2026

Abstract: Decoding visual information from electroencephalography (EEG) has recently achieved promising results, primarily focusing on reconstructing two-dimensional (2D) images from brain activity. However, the reconstruction of three-dimensional (3D) representations remains largely unexplored. This limits the geometric understanding and reduces the applicability of neural decoding in different contexts. To address this gap, we propose Brain3D, a multimodal architecture for EEG-to-3D reconstruction based on EEG-to-image decoding. It progressively transforms neural representations into the 3D domain using geometry-aware generative reasoning. Our pipeline first produces visually grounded images from EEG signals, then employs a multimodal large language model to extract structured 3D-aware descriptions, which guide a diffusion-based generation stage whose outputs are finally converted into coherent 3D meshes via a single-image-to-3D model. By decomposing the problem into structured stages, the proposed approach avoids direct EEG-to-3D mappings and enables scalable brain-driven 3D generation. We conduct a comprehensive evaluation comparing the reconstructed 3D outputs against the original visual stimuli, assessing both semantic alignment and geometric fidelity. Experimental results demonstrate strong performance of the proposed architecture, achieving up to 85.4% 10-way Top-1 EEG decoding accuracy and 0.648 CLIPScore, supporting the feasibility of multimodal EEG-driven 3D reconstruction.

Trusted Data Forever: Is AI the Answer?

Mar 14, 2022

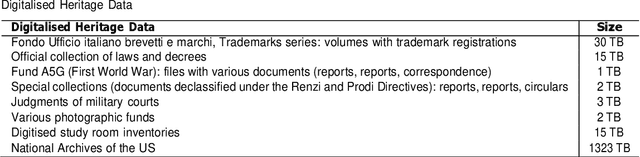

Abstract: Archival institutions and programs worldwide work to ensure that the records of governments, organizations, communities, and individuals are preserved for future generations as cultural heritage, as sources of rights, and as vehicles for holding the past accountable and informing the future. This commitment is guaranteed through the adoption of strategic and technical measures for the long-term preservation of digital assets in any medium and form - textual, visual, or aural. Public and private archives are the largest providers of data big and small in the world and collectively host yottabytes of trusted data, to be preserved forever. Several aspects of retention and preservation, arrangement and description, management and administration, and access and use are still open to improvement. In particular, recent advances in Artificial Intelligence (AI) open the discussion as to whether AI can support the ongoing availability and accessibility of trustworthy public records. This paper presents preliminary results of the InterPARES Trust AI (I Trust AI) international research partnership, which aims to (1) identify and develop specific AI technologies to address critical records and archives challenges; (2) determine the benefits and risks of employing AI technologies on records and archives; (3) ensure that archival concepts and principles inform the development of responsible AI; and (4) validate outcomes through a conglomerate of case studies and demonstrations.
