Marco Alì

A Systematic Benchmark of GAN Architectures for MRI-to-CT Synthesis

Mar 13, 2026

Abstract: The translation from magnetic resonance imaging (MRI) to computed tomography (CT) has been proposed as an effective solution to facilitate MRI-only clinical workflows while limiting exposure to ionizing radiation. Although numerous Generative Adversarial Network (GAN) architectures have been proposed for MRI-to-CT translation, systematic and fair comparisons across heterogeneous models remain limited. We present a comprehensive benchmark of ten GAN architectures evaluated on the SynthRAD2025 dataset across three anatomical districts (abdomen, thorax, head-and-neck). All models were trained under a unified validation protocol with identical preprocessing and optimization settings. Performance was assessed using complementary metrics capturing voxel-wise accuracy, structural fidelity, perceptual quality, and distribution-level realism, alongside an analysis of computational complexity. Supervised Paired models consistently outperformed Unpaired approaches, confirming the importance of voxel-wise supervision. Pix2Pix achieved the most balanced performance across districts while maintaining a favorable quality-to-complexity trade-off. Multi-district training improved structural robustness, whereas intra-district training maximized voxel-wise fidelity. This benchmark provides quantitative and computational guidance for model selection in MRI-only radiotherapy workflows and establishes a reproducible framework for future comparative studies. To ensure the reproducibility of our experiments, we make our code public, together with the overall results, at the following link: https://github.com/arco-group/MRI_TO_CT.git

AIforCOVID: predicting the clinical outcomes in patients with COVID-19 applying AI to chest-X-rays. An Italian multicentre study

Dec 11, 2020

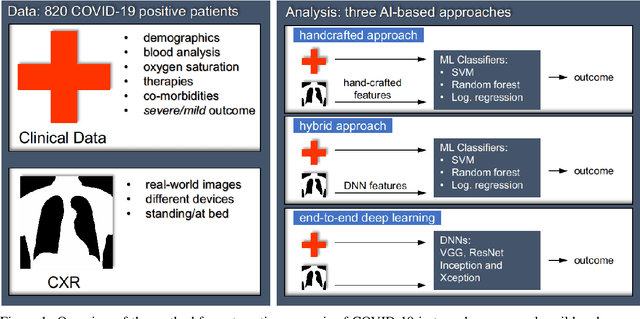

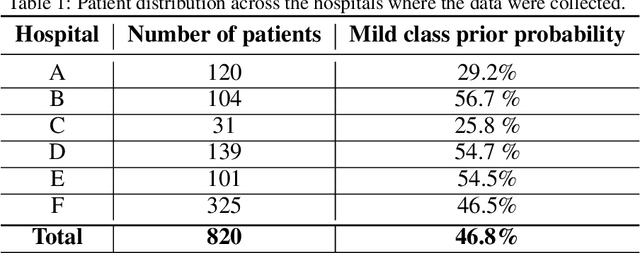

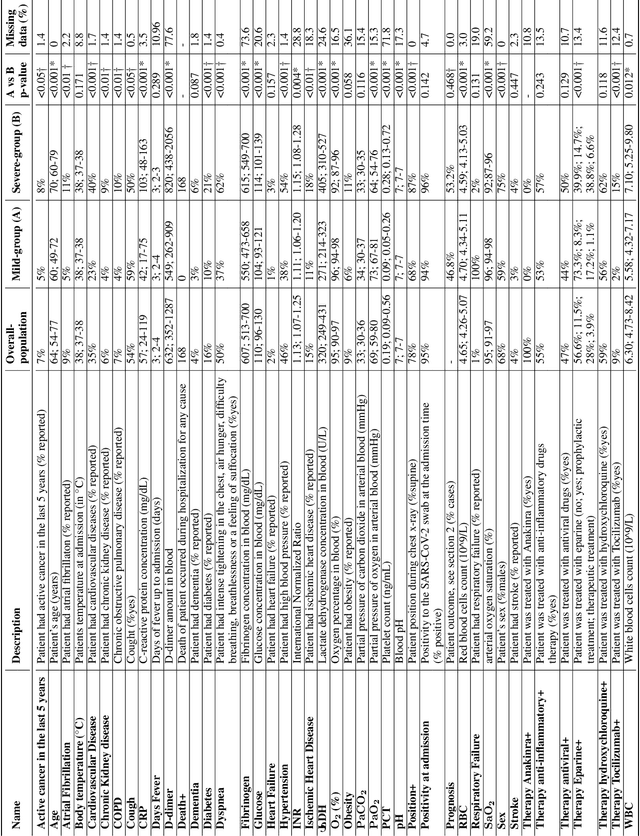

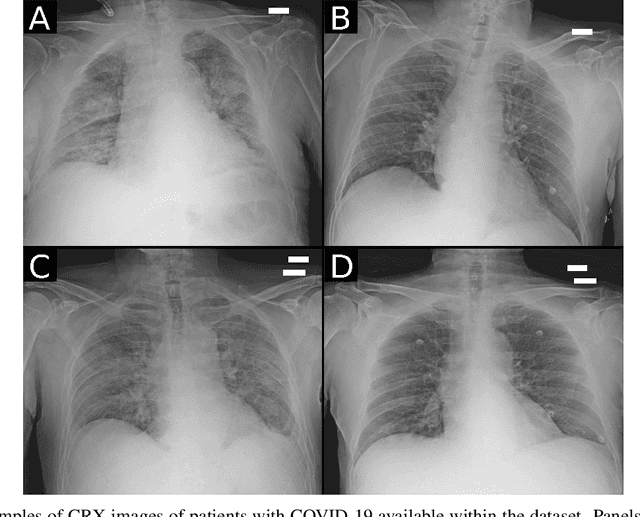

Abstract: Recent epidemiological data report that more than 53 million people worldwide have been infected by SARS-CoV-2, resulting in 1.3 million deaths. The disease has spread very rapidly, and a few months after the first infection was identified, a shortage of hospital resources became a problem. In this work we investigate whether chest X-ray (CXR) can be used as a tool for the early identification of patients at risk of a severe outcome, such as intensive care or death. Compared to computed tomography (CT), CXR is a simpler, faster, and more widespread radiological technique, and it delivers a lower radiation dose. We present a dataset including data collected from 820 patients by six Italian hospitals in spring 2020 during the first COVID-19 emergency. The dataset includes CXR images, several clinical attributes, and clinical outcomes. We investigate the potential of artificial intelligence to predict the prognosis of such patients, distinguishing between severe and mild cases, thus offering a baseline reference for other researchers and practitioners. To this end, we present three approaches that use features extracted from CXR images, either handcrafted or learned automatically by convolutional neural networks, which are then integrated with the clinical data. Exhaustive evaluation shows promising performance in both 10-fold and leave-one-centre-out cross-validation, implying that clinical data and images have the potential to provide useful information for the management of patients and hospital resources.
