Manuel Oriol

ReqFusion: A Multi-Provider Framework for Automated PEGS Analysis Across Software Domains

Mar 24, 2026

Abstract:Requirements engineering is a vital, yet labor-intensive, stage in the software development process. This article introduces ReqFusion: an AI-enhanced system that automates the extraction, classification, and analysis of software requirements using multiple Large Language Model (LLM) providers. The architecture of ReqFusion integrates OpenAI GPT, Anthropic Claude, and Groq models to extract functional and non-functional requirements from various documentation formats (PDF, DOCX, and PPTX) in academic, industrial, and tender proposal contexts. The system uses a domain-independent extraction method and generates requirements following the Project, Environment, Goal, and System (PEGS) approach introduced by Bertrand Meyer. The main idea is that, because the PEGS format is detailed, LLMs have more information and cues about the requirements, producing better results than a simple generic request. An ablation study confirms this hypothesis: PEGS-guided prompting achieves an F1 score of 0.88, compared to 0.71 for generic prompting under the same multi-provider configuration. The evaluation used 18 real-world documents to generate 226 requirements through automated classification, with 54.9% functional and 45.1% non-functional across academic, business, and technical domains. An extended evaluation on five projects with 1,050 requirements demonstrated significant improvements in extraction accuracy and a 78% reduction in analysis time compared to manual methods. The multi-provider architecture enhances reliability through model consensus and fallback mechanisms, while the PEGS-based approach ensures comprehensive coverage of all requirement categories.
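The abstract does not say how model consensus and fallback are implemented. As a rough illustration only, the sketch below assumes a simple majority vote over providers and skips any provider that raises an error. The names `classify_with_consensus`, `stub_a`, `stub_b`, and `stub_c` are hypothetical, and the stubs are keyword rules standing in for real LLM calls.

```python
from collections import Counter
from typing import Callable, Optional, Sequence

# Hypothetical provider interface: takes a requirement sentence, returns a label.
Classifier = Callable[[str], str]


def classify_with_consensus(text: str, providers: Sequence[Classifier]) -> Optional[str]:
    """Return the majority label across providers, ignoring providers that fail."""
    votes = []
    for provider in providers:
        try:
            votes.append(provider(text))
        except Exception:
            # Fallback (assumed behaviour): a failing provider is skipped.
            continue
    if not votes:
        return None  # every provider failed
    # Ties resolve to the label that was voted first.
    label, _count = Counter(votes).most_common(1)[0]
    return label


# Stub providers for illustration; real ones would call an LLM API.
def stub_a(text: str) -> str:
    return "functional" if "shall" in text.lower() else "non-functional"


def stub_b(text: str) -> str:
    raise TimeoutError("provider unavailable")


def stub_c(text: str) -> str:
    return "non-functional" if "within" in text.lower() else "functional"


if __name__ == "__main__":
    sentence = "The system shall export reports as PDF."
    print(classify_with_consensus(sentence, [stub_a, stub_b, stub_c]))
```

Here the second stub always fails, so the label is decided by the two remaining providers. A production system would presumably also log the failure and weigh providers differently, which this sketch does not attempt.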

Architecture for a Trustworthy Quantum Chatbot

Mar 06, 2025

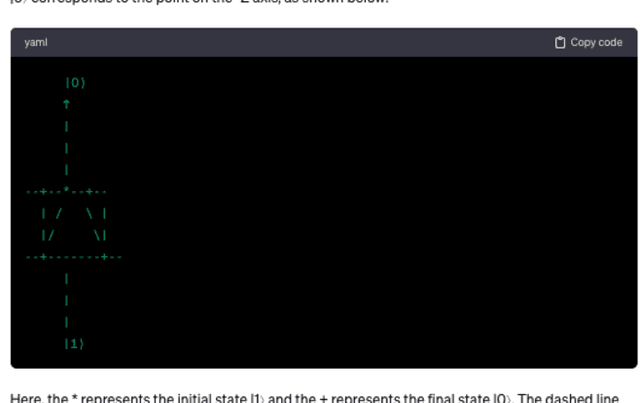

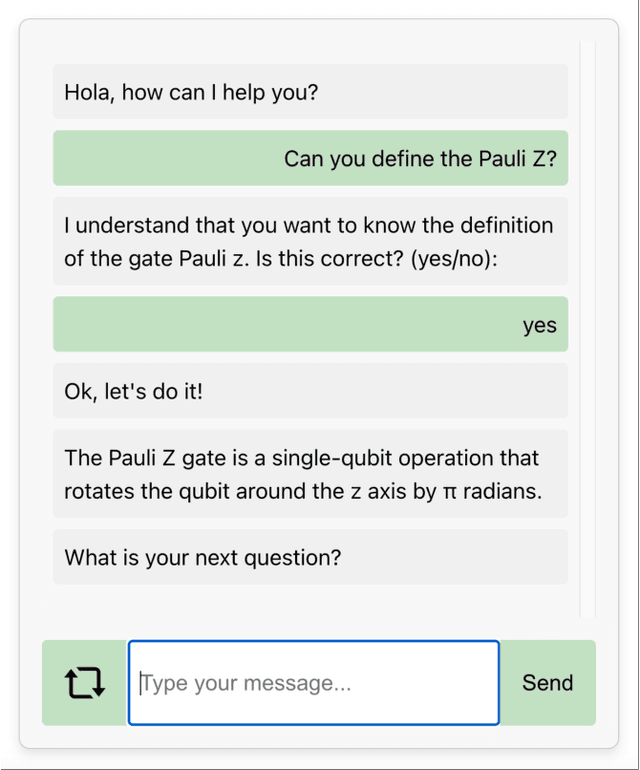

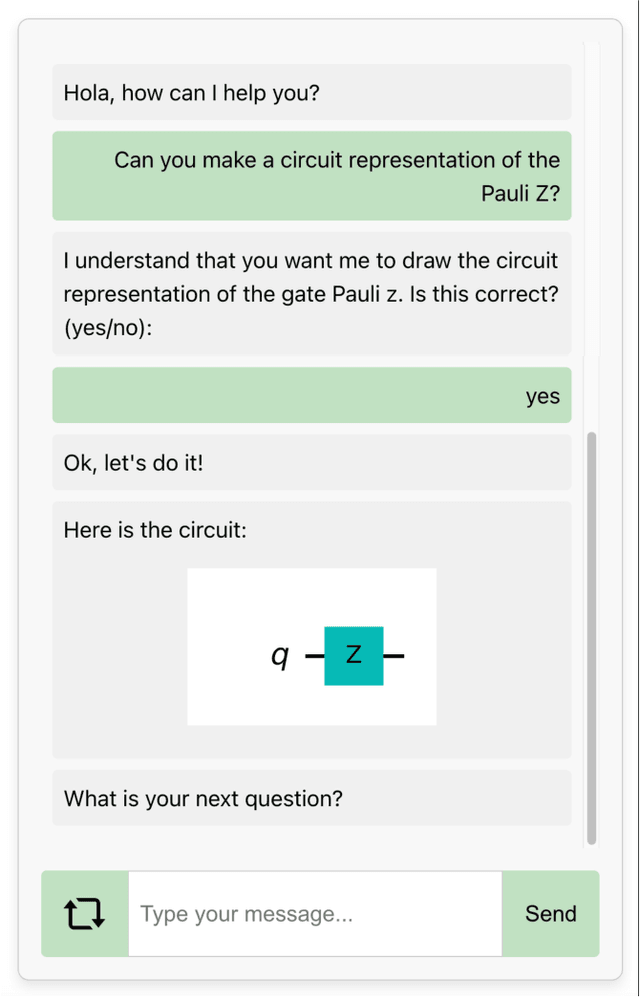

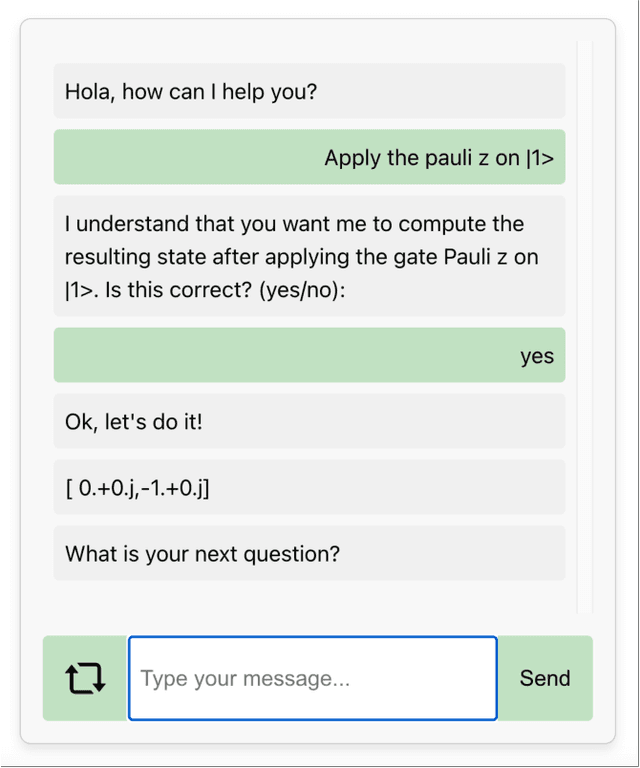

Abstract:Large language model (LLM)-based tools such as ChatGPT seem useful for classical programming assignments. The more specialized the field, however, the less reliable they tend to be, because less data is available to train them. In the case of quantum computing, the quality of the answers given by generic chatbots is low. C4Q is a chatbot focused on quantum programs that addresses this challenge through a software architecture that integrates specialized LLMs to classify requests and specialized question answering modules with a deterministic logical engine to provide trustworthy quantum computing support. This article describes the latest version (2.0) of C4Q, which delivers several enhancements: ready-to-run Qiskit code for gate definitions and circuit operations, expanded features to solve software engineering tasks such as the travelling salesperson problem and the knapsack problem, and a feedback mechanism for iterative improvement. Extensive testing of the backend confirms the system's reliability, while empirical evaluations show that C4Q 2.0's classification LLM reaches near-perfect accuracy. The evaluation consists of a comparative study with three existing chatbots that highlights C4Q 2.0's maintainability and correctness and reflects on how software architecture decisions, such as separating deterministic logic from probabilistic text generation, impact the quality of the results.

Benefits and Risks of Using ChatGPT4 as a Teaching Assistant for Computer Science Students

Nov 08, 2024

Abstract:Upon release, ChatGPT3.5 shocked the software engineering community with its ability to generate answers to specialized questions about coding. Immediately, many educators wondered whether the chatbot could be used as a support tool that helps students answer their programming questions. This article evaluates this possibility at three levels: fundamental Computer Science knowledge (basic algorithms and data structures), core competency (design patterns), and advanced knowledge (quantum computing). In each case, we ask ChatGPT3.5 normalized questions several times, then look at the correctness of the answers, and finally check whether this creates issues. The main result is that the performance of ChatGPT3.5 degrades drastically as the specialization of the domain increases: for basic algorithms it returns answers that are almost always correct; for design patterns the generated code contains many code smells and is generally of low quality, although it is sometimes able to fix the code if asked; and for quantum computing it is often blatantly wrong.

C4Q: A Chatbot for Quantum

Jan 29, 2024

Abstract:Quantum computing is a growing field that promises many real-world applications such as quantum cryptography or quantum finance. The number of people able to use quantum computing is, however, still very small. This limitation comes from the difficulty of understanding the concepts and of knowing how to start coding. Therefore, there is a need for tools that can assist non-experts in overcoming this complexity. One possibility would be to use existing conversational agents. Unfortunately, ChatGPT and other Large Language Models produce inaccurate results. This article presents C4Q, a chatbot that accurately answers basic questions and guides users when they try to code quantum programs. Contrary to other approaches, C4Q uses a pre-trained large language model only to discover and classify user requests. It then generates an accurate answer using its own engine. Thanks to this architectural design, C4Q's answers are always correct, and thus C4Q can become a support tool that makes quantum computing more available to non-experts.
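The split between request classification and answer generation might look roughly like the sketch below. It is not C4Q's code: a keyword lookup stands in for the classification LLM, the gate table stands in for the deterministic engine, and all names (`classify_request`, `answer`, `GATES`, `ALIASES`) are hypothetical.

```python
import math
from typing import Dict, List, Tuple

Matrix = List[List[float]]

S = 1 / math.sqrt(2)

# Deterministic knowledge base: answers come from here, never from generated text.
GATES: Dict[str, Matrix] = {
    "x": [[0.0, 1.0], [1.0, 0.0]],
    "z": [[1.0, 0.0], [0.0, -1.0]],
    "h": [[S, S], [S, -S]],
}

# Words the stand-in classifier recognises, mapped to gate identifiers.
ALIASES: Dict[str, str] = {"hadamard": "h", "pauli-x": "x", "pauli-z": "z"}


def classify_request(question: str) -> Tuple[str, str]:
    """Stand-in for the classification LLM: returns (intent, gate)."""
    for word in question.lower().replace("?", " ").split():
        if word in ALIASES:
            return ("define_gate", ALIASES[word])
    return ("unknown", "")


def answer(question: str) -> str:
    """Route the classified request to the deterministic engine."""
    intent, gate = classify_request(question)
    if intent == "define_gate":
        return f"The {gate.upper()} gate has matrix {GATES[gate]}."
    # Refuse rather than guess when the request is not recognised.
    return "Sorry, I cannot answer that reliably."


if __name__ == "__main__":
    print(answer("What is the Hadamard gate?"))
    print(answer("Will quantum computers break RSA next year?"))
```

The point of the design is that the probabilistic component can only pick a category, so a misclassification yields a wrong topic or a refusal, never an invented matrix.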
