Manoranjan Majji

Magnetic Field Aided Vehicle Localization with Acceleration Correction

Nov 10, 2024

Abstract: This paper presents a novel approach for vehicle localization by leveraging the ambient magnetic field within a given environment. Our approach introduces a global mathematical function for magnetic field mapping, combined with a Euclidean distance-based matching technique for accurately estimating vehicle position in suburban settings. The function-based map structure ensures efficiency and scalability of the magnetic field map, while the batch-processing-based localization provides continuity in pose estimation. Additionally, we establish a bias estimation pipeline for an onboard accelerometer by utilizing the updated poses obtained through magnetic field matching. Our work aims to showcase the potential utility of magnetic fields as supplementary aids to existing localization methods, particularly in scenarios where the Global Positioning System (GPS) signal is restricted or where cost-effective navigation systems are required.
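
The Euclidean distance-based matching step can be illustrated with a minimal sketch. It is not the authors' implementation: the one-dimensional map function `field_map`, the sample spacing, and the candidate grid are all hypothetical, and the measurements are noise-free values generated from the same map.

```python
import numpy as np

def field_map(x):
    """Hypothetical 1-D magnetic map: field magnitude (uT) as a smooth function of position (m)."""
    return 45.0 + 3.0 * np.sin(0.3 * x) + 1.5 * np.cos(0.11 * x)

def match_batch(measurements, spacing, candidates):
    """Return the candidate start position whose predicted batch is closest
    (in Euclidean distance) to the measured batch, plus that distance."""
    offsets = spacing * np.arange(len(measurements))
    # predicted[i, j] = map value at candidate i advanced by offset j
    predicted = field_map(candidates[:, None] + offsets[None, :])
    distances = np.linalg.norm(predicted - measurements[None, :], axis=1)
    best = int(np.argmin(distances))
    return candidates[best], distances[best]

# Assumed scenario: 20 samples taken every 0.5 m, starting at x = 12 m.
true_start = 12.0
spacing = 0.5
meas = field_map(true_start + spacing * np.arange(20))
candidates = np.arange(0.0, 30.0, 0.25)

estimate, dist = match_batch(meas, spacing, candidates)
print(estimate, dist)
```

Matching a whole batch rather than a single reading is what makes the estimate unambiguous here, since many positions can share one field value but few share a full sequence of them.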

A Rapid Trajectory Optimization and Control Framework for Resource-Constrained Applications

Oct 09, 2024

Abstract: This paper presents a computationally efficient model predictive control formulation that uses an integral Chebyshev collocation method to enable rapid operations of autonomous agents. By posing the finite-horizon optimal control problem with recursive re-evaluation of the optimal trajectories, the minimization of the L2 norms of the state and control errors is transcribed into a quadratic program. Control and state variable constraints are parameterized using Chebyshev polynomials and are accommodated in the optimal trajectory generation programs to incorporate the actuator limits and keep-out constraints. Differentiable collision detection of polytopes is leveraged for optimal collision avoidance. Results obtained from the collocation methods are benchmarked against existing approaches on an edge computer to outline the performance improvements. Finally, collaborative control scenarios involving multi-agent space systems are considered to demonstrate the technical merits of the proposed work.

Robust Proximity Operations using Probabilistic Markov Models

Sep 27, 2024

Abstract: Markov decision process-based state switching is devised, implemented, and analyzed for proximity operations of various autonomous vehicles. The framework contains a pose estimator along with a multi-state guidance algorithm. The unified pose estimator leverages the extended Kalman filter to fuse measurements from rate gyroscopes, monocular vision, and ultra-wideband radar sensors. It is also equipped with Mahalanobis distance-based outlier rejection and under-weighting of measurements for robust performance. The use of probabilistic Markov models to transition between various guidance modes is proposed to enable robust and efficient proximity operations. Finally, the framework is validated through an experimental analysis of the docking of two small satellites and the precision landing of an aerial vehicle.
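
Mahalanobis distance-based outlier rejection is commonly implemented as a gate on the filter innovation. The sketch below shows that generic idea only and is not taken from the paper: the innovation covariance `S`, the three-dimensional measurement, and the gate threshold (roughly the 99% chi-square quantile for three degrees of freedom) are assumptions chosen for illustration.

```python
import numpy as np

def mahalanobis_gate(residual, S, threshold):
    """Return (accept, d2), where d2 is the squared Mahalanobis distance
    of the innovation `residual` under innovation covariance `S`."""
    d2 = float(residual @ np.linalg.solve(S, residual))
    return d2 <= threshold, d2

# Assumed innovation covariance for a 3-D measurement (illustrative values).
S = np.diag([0.04, 0.04, 0.09])
# Assumed gate: approximately the 99% chi-square quantile for 3 degrees of freedom.
threshold = 11.34

ok_small, d2_small = mahalanobis_gate(np.array([0.1, 0.1, 0.1]), S, threshold)
ok_large, d2_large = mahalanobis_gate(np.array([1.0, 0.0, 0.0]), S, threshold)
print(ok_small, d2_small)
print(ok_large, d2_large)
```

A measurement that fails the gate would simply be skipped in the Kalman update, so a single bad vision or radar reading does not corrupt the pose estimate.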

A Scalable Tabletop Satellite Automation Testbed: Design And Experiments

Sep 15, 2024

Abstract: This paper presents a detailed system design and component selection for the Transforming Proximity Operations and Docking Service (TPODS) module, designed to gain custody of uncontrolled resident space objects (RSOs) via rendezvous and proximity operations (RPO). In addition to serving as a free-flying robotic manipulator to work with cooperative and uncooperative RSOs, the TPODS modules are engineered to cooperate with one another to build scaffolding for more complex satellite servicing activities. The structural design of the prototype module is inspired by tensegrity principles, minimizing the structural mass of the module's frame. The prototype TPODS module is fabricated using lightweight polycarbonate with an aluminum or carbon fiber frame. The inner shell that houses various electronic and pneumatic components is 3-D printed using ABS material. Four OpenMV H7 R1 cameras are used for the pose estimation of RSOs, including other TPODS modules. Compressed air supplied by an external source is used for the initial testing and can later be replaced by module-mounted nitrogen pressure vessels for full on-board propulsion. A Teensy 4.1 single-board computer is used as a central command unit that receives data from the four OpenMV cameras and commands the module's thrusters based on the control logic.

Disturbance-Robust Backup Control Barrier Functions: Safety Under Uncertain Dynamics

Sep 12, 2024

Abstract: Obtaining a controlled invariant set is crucial for safety-critical control with control barrier functions (CBFs) but is non-trivial for complex nonlinear systems and constraints. Backup control barrier functions allow such sets to be constructed online in a computationally tractable manner by examining the evolution (or flow) of the system under a known backup control law. However, for systems with unmodeled disturbances, this flow cannot be directly computed, making the current methods inadequate for assuring safety in these scenarios. To address this gap, we leverage bounds on the nominal and disturbed flow to compute a forward invariant set online by ensuring safety of an expanding norm ball tube centered around the nominal system evolution. We prove that this set results in robust control constraints which guarantee safety of the disturbed system via our Disturbance-Robust Backup Control Barrier Function (DR-BCBF) solution. Additionally, the efficacy of the proposed framework is demonstrated in simulation, applied to a double integrator problem and a rigid body spacecraft rotation problem with rate constraints.

Icy Moon Surface Simulation and Stereo Depth Estimation for Sampling Autonomy

Jan 23, 2024

Abstract: Sampling autonomy for icy moon lander missions requires understanding of the topographic and photometric properties of the sampling terrain. The unavailability of high-resolution visual datasets (either bird's-eye view or point-of-view from a lander) is an obstacle for the selection, verification, or development of perception systems. We attempt to alleviate this problem by: 1) proposing the Graphical Utility for Icy moon Surface Simulations (GUISS) framework, for versatile stereo dataset generation that spans the spectrum of bulk photometric properties, and 2) focusing on a stereo-based visual perception system and evaluating both traditional and deep learning-based algorithms for depth estimation from stereo matching. The surface reflectance properties of icy moon terrains (Enceladus and Europa) are inferred from multispectral datasets of previous missions. With procedural terrain generation and physically valid illumination sources, our framework can fit a wide range of hypotheses with respect to visual representations of icy moon terrains. This is followed by a study of the performance of stereo matching algorithms under different visual hypotheses. Finally, we emphasize the standing challenges to be addressed for simulating perception data assets for icy moons such as Enceladus and Europa. Our code can be found here: https://github.com/nasa-jpl/guiss.

Deep Reinforcement Learning for Autonomous Spacecraft Inspection using Illumination

Aug 04, 2023

Abstract: This paper investigates the problem of on-orbit spacecraft inspection using a single free-flying deputy spacecraft, equipped with an optical sensor, whose controller is a neural network control system trained with Reinforcement Learning (RL). This work considers the illumination of the inspected spacecraft (chief) by the Sun in order to incentivize acquisition of well-illuminated optical data. The agent's performance is evaluated through statistically efficient metrics. Results demonstrate that the RL agent is able to inspect all points on the chief successfully, while maximizing illumination on inspected points in a simulated environment, using only low-level actions. Due to the stochastic nature of RL, 10 policies were trained using 10 random seeds to obtain a more holistic measure of agent performance. Over these 10 seeds, the interquartile mean (IQM) percentage of inspected points for the finalized model was 98.82%.
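
The interquartile mean averages only the middle 50% of the per-seed scores, discarding the lowest and highest quarters. The sketch below shows one common way to compute it, as a 25% trimmed mean that truncates fractional counts. The scores are placeholder values made up for illustration and are not results from the paper.

```python
import numpy as np

def interquartile_mean(values):
    """Mean of the middle 50% of the values: drop the lowest and highest
    25% (fractional counts truncated), then average what remains."""
    x = np.sort(np.asarray(values, dtype=float))
    cut = int(0.25 * x.size)
    return float(x[cut:x.size - cut].mean())

# Placeholder per-seed scores (percent of points inspected); NOT data from the paper.
scores = [90, 95, 96, 97, 98, 98, 99, 99, 100, 100]
iqm = interquartile_mean(scores)
print(iqm)
```

With 10 seeds, two scores are dropped from each end and the remaining six are averaged, which makes the metric less sensitive to a single unusually good or bad seed than the plain mean.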

Differentiable Rendering for Pose Estimation in Proximity Operations

Dec 24, 2022

Abstract: Differentiable rendering aims to compute the derivative of the image rendering function with respect to the rendering parameters. This paper presents a novel algorithm for 6-DoF pose estimation through gradient-based optimization using a differentiable rendering pipeline. We emphasize two key contributions: (1) instead of solving the conventional 2D-to-3D correspondence problem and computing reprojection errors, images (rendered using the 3D model) are compared only in the 2D feature space via sparse 2D feature correspondences; and (2) instead of an analytical image formation model, we compute an approximate local gradient of the rendering process through online learning. The learning data consists of image features extracted from multi-viewpoint renders at small perturbations in the pose neighborhood. The gradients are propagated through the rendering pipeline for the 6-DoF pose estimation using nonlinear least squares. This gradient-based optimization regresses directly upon the pose parameters by aligning the 3D model to reproduce a reference image shape. Using representative experiments, we demonstrate the application of our approach to pose estimation in proximity operations.

NaRPA: Navigation and Rendering Pipeline for Astronautics

Nov 03, 2022

Abstract: This paper presents the Navigation and Rendering Pipeline for Astronautics (NaRPA), a novel ray-tracing-based computer graphics engine to model and simulate light transport for space-borne imaging. NaRPA incorporates lighting models with attention to atmospheric and shading effects for the synthesis of space-to-space and ground-to-space virtual observations. In addition to image rendering, the engine also possesses point cloud, depth, and contour map generation capabilities to simulate passive and active vision-based sensors and to facilitate the design, testing, or verification of visual navigation algorithms. Physically based rendering capabilities of NaRPA and the efficacy of the proposed rendering algorithm are demonstrated using applications in representative space-based environments. A key demonstration uses NaRPA as a tool for generating stereo imagery and applies it to 3D coordinate estimation using triangulation. Another prominent application is a novel differentiable rendering approach for image-based attitude estimation, proposed to highlight the efficacy of the NaRPA engine for simulating vision-based navigation and guidance operations.

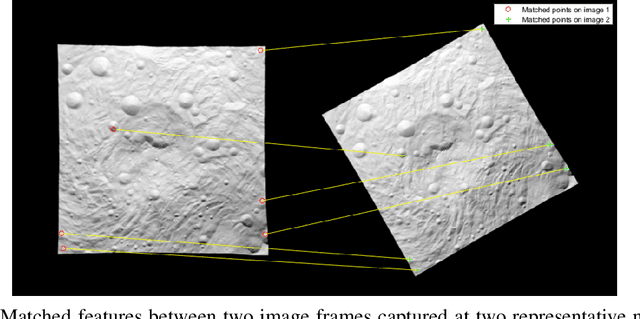

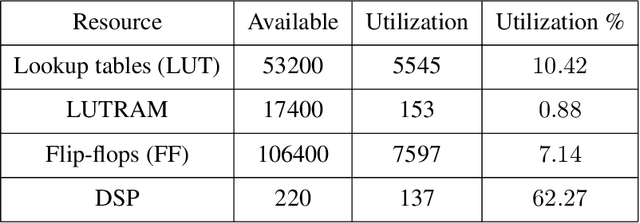

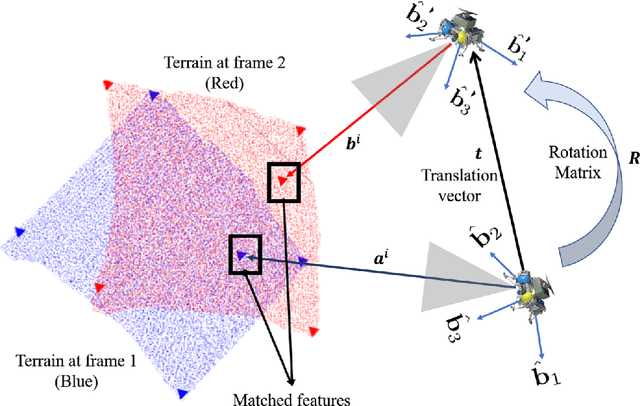

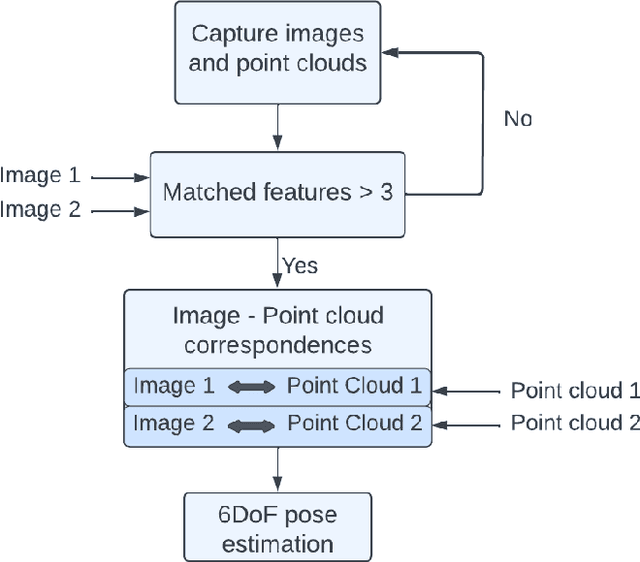

FPGA Hardware Acceleration for Feature-Based Relative Navigation Applications

Oct 18, 2022

Abstract: Estimation of the rigid transformation between two point clouds is a computationally challenging problem in vision-based relative navigation. Targeting a real-time navigation solution utilizing point-cloud and image registration algorithms, this paper develops high-performance avionics for a power- and resource-constrained pose estimation framework. A Field-Programmable Gate Array (FPGA) based embedded architecture is developed to accelerate estimation of the relative pose between the point clouds, aided by image features that correspond to the individual point sets. At the algorithmic level, the pose estimation method is an adaptation of the Optimal Linear Attitude and Translation Estimator (OLTAE) for relative attitude and translation estimation. At the architecture level, the proposed embedded solution is a hardware/software co-design that evaluates the OLTAE computations on the bare-metal hardware for high-speed state estimation. The finite-precision FPGA evaluation of the OLTAE algorithm is compared with a double-precision evaluation in MATLAB for performance analysis and error quantification. Implementation results of the proposed finite-precision OLTAE accelerator demonstrate the high-performance compute capabilities of the FPGA-based pose estimation while offering relative numerical errors below 7%.
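
The kind of comparison described, a finite-precision result measured against a double-precision reference, can be sketched generically. This is not the OLTAE algorithm or the paper's number format: the fixed-point model (8 fractional bits, rounding to nearest), the matrix `A`, and the vector `b` are assumptions chosen only to show how a relative numerical error is computed.

```python
import numpy as np

def to_fixed(x, frac_bits):
    """Round values to a fixed-point grid with `frac_bits` fractional bits
    (a simplified model: no saturation or word-length limit)."""
    scale = 2.0 ** frac_bits
    return np.round(np.asarray(x, dtype=np.float64) * scale) / scale

def relative_error(reference, approx):
    """Norm of the difference relative to the norm of the reference."""
    return float(np.linalg.norm(approx - reference) / np.linalg.norm(reference))

# Illustrative operands; not data from the paper.
A = np.array([[0.1, 0.2, 0.3],
              [0.4, 0.5, 0.6],
              [0.7, 0.8, 0.9]])
b = np.array([1.1, -0.7, 0.3])

reference = A @ b                                          # double precision
approx = to_fixed(to_fixed(A, 8) @ to_fixed(b, 8), 8)      # quantized inputs and output
err = relative_error(reference, approx)
print(err)
```

Reducing `frac_bits` increases the error, which is the trade-off a finite-precision hardware design has to balance against resource usage.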
