Maja R. Rudolph

Exponential Family Embeddings

Nov 21, 2016

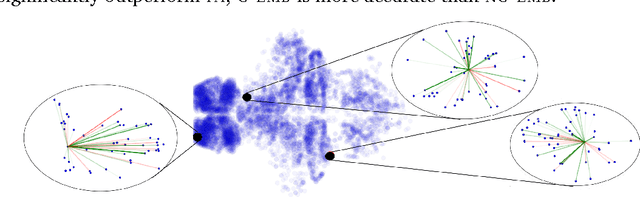

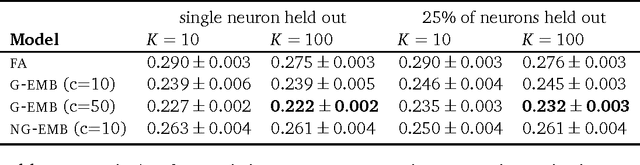

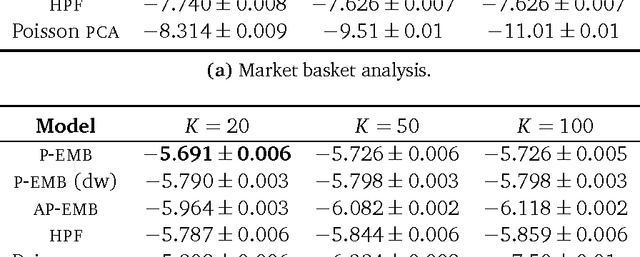

Abstract: Word embeddings are a powerful approach for capturing semantic similarity among terms in a vocabulary. In this paper, we develop exponential family embeddings, a class of methods that extends the idea of word embeddings to other types of high-dimensional data. As examples, we studied neural data with real-valued observations, count data from a market basket analysis, and ratings data from a movie recommendation system. The main idea is to model each observation conditioned on a set of other observations. This set is called the context, and the way the context is defined is a modeling choice that depends on the problem. In language the context is the surrounding words; in neuroscience the context is close-by neurons; in market basket data the context is other items in the shopping cart. Each type of embedding model defines the context, the exponential family of conditional distributions, and how the latent embedding vectors are shared across data. We infer the embeddings with a scalable algorithm based on stochastic gradient descent. On all three applications (neural activity of zebrafish, users' shopping behavior, and movie ratings), we found exponential family embedding models to be more effective than other types of dimension reduction. They better reconstruct held-out data and find interesting qualitative structure.
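As a rough illustration of "model each observation conditioned on its context", the sketch below uses a Gaussian conditional with unit variance, which is one possible choice for real-valued data. The abstract gives no formulas, so everything here is an assumption made for illustration: the names `rho` (embedding vectors), `alpha` (context vectors), `context_sum`, `gaussian_log_lik`, and `sgd_step`, the identity link, and the particular form of the mean (an inner product between the embedding of observation `i` and a weighted sum of context vectors).

```python
import numpy as np

def context_sum(alpha, x, context):
    """Sum of context vectors, each weighted by its observed value."""
    return (alpha[context] * x[context][:, None]).sum(axis=0)

def gaussian_log_lik(rho, alpha, x, i, context):
    """Log-likelihood (up to a constant) of x[i] given its context,
    assuming x[i] ~ Normal(rho[i] . context_sum, 1)."""
    eta = rho[i] @ context_sum(alpha, x, context)
    return -0.5 * (x[i] - eta) ** 2

def sgd_step(rho, alpha, x, i, context, lr=0.01):
    """One stochastic gradient ascent step on the conditional
    log-likelihood of observation i. Updates rho and alpha in place.
    `context` is assumed to hold distinct indices."""
    s = context_sum(alpha, x, context)
    resid = x[i] - rho[i] @ s
    # Compute both gradients before changing any parameter.
    grad_rho = resid * s
    grad_alpha = resid * np.outer(x[context], rho[i])
    rho[i] += lr * grad_rho
    alpha[context] += lr * grad_alpha
```

Looping `sgd_step` over randomly chosen observations and their contexts gives a simple stochastic gradient procedure. Swapping the Gaussian for another exponential family (for example, Poisson for counts) would change only the log-likelihood and its gradient.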

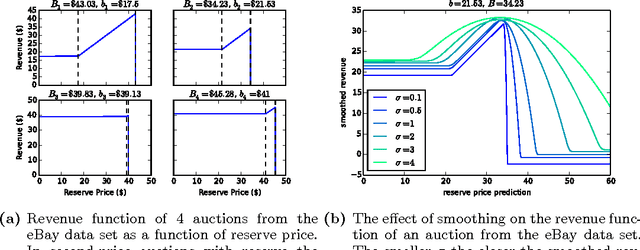

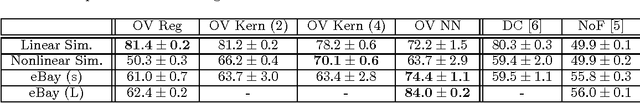

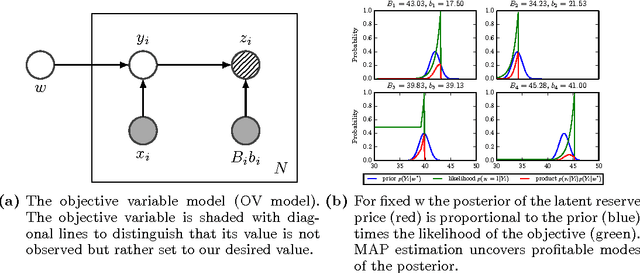

Objective Variables for Probabilistic Revenue Maximization in Second-Price Auctions with Reserve

Jun 24, 2015

Abstract: Many online companies sell advertisement space in second-price auctions with reserve. In this paper, we develop a probabilistic method to learn a profitable strategy to set the reserve price. We use historical auction data with features to fit a predictor of the best reserve price. This problem is delicate: the structure of the auction is such that a reserve price set too high is much worse than a reserve price set too low. To address this we develop objective variables, a new framework for combining probabilistic modeling with optimal decision-making. Objective variables are "hallucinated observations" that transform the revenue maximization task into a regularized maximum likelihood estimation problem, which we solve with an EM algorithm. This framework enables a variety of prediction mechanisms to set the reserve price. As examples, we study objective variable methods with regression, kernelized regression, and neural networks on simulated and real data. Our methods outperform previous approaches both in terms of scalability and profit.
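The asymmetry mentioned in the abstract can be seen from the seller's revenue in a single second-price auction with reserve. The sketch below follows the standard textbook rule, not code from the paper. The function name `revenue` and the tie-breaking convention (the item still sells when the reserve equals the highest bid) are assumptions made for illustration.

```python
def revenue(reserve, highest_bid, second_bid):
    """Seller revenue in one second-price auction with a reserve price.

    Assumes highest_bid >= second_bid. If the reserve exceeds the highest
    bid, the item goes unsold. Otherwise the winner pays the larger of the
    reserve and the second-highest bid.
    """
    if reserve > highest_bid:
        return 0.0
    return max(reserve, second_bid)

# Hypothetical bids used only to show the shape of the revenue function.
highest, second = 1.0, 0.4
too_low = revenue(0.2, highest, second)     # reserve below the second bid
well_set = revenue(0.9, highest, second)    # reserve just under the top bid
too_high = revenue(1.1, highest, second)    # reserve just over the top bid
```

With these bids, a reserve that is too low still earns the second-highest bid, while a reserve slightly above the highest bid earns nothing. This is why overshooting costs more than undershooting.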
