Maciej Smołka

Automatic Design of Optimization Test Problems with Large Language Models

Feb 02, 2026

Abstract: The development of black-box optimization algorithms depends on the availability of benchmark suites that are both diverse and representative of real-world problem landscapes. Widely used collections such as BBOB and CEC remain dominated by hand-crafted synthetic functions and provide limited coverage of the high-dimensional space of Exploratory Landscape Analysis (ELA) features, which in turn biases evaluation and hinders training of meta-black-box optimizers. We introduce Evolution of Test Functions (EoTF), a framework that automatically generates continuous optimization test functions whose landscapes match a specified target ELA feature vector. EoTF adapts LLM-driven evolutionary search, originally proposed for heuristic discovery, to evolve interpretable, self-contained NumPy implementations of objective functions by minimizing the distance between sampled ELA features of generated candidates and a target profile. In experiments on 24 noiseless BBOB functions and a contamination-mitigating suite of 24 MA-BBOB hybrid functions, EoTF reliably produces non-trivial functions with closely matching ELA characteristics and preserves optimizer performance rankings under fixed evaluation budgets, supporting their validity as surrogate benchmarks. While a baseline neural-network-based generator achieves higher accuracy in 2D, EoTF substantially outperforms it in 3D and exhibits stable solution quality as dimensionality increases, highlighting favorable scalability. Overall, EoTF offers a practical route to scalable, portable, and interpretable benchmark generation targeted to desired landscape properties.
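The fitness signal described in the abstract, the distance between sampled landscape features of a candidate function and a target profile, can be illustrated with a minimal sketch. This is not the EoTF implementation: the three stand-in features (skewness, excess kurtosis, and linear-fit R² of the sampled objective values), the sampling box [-5, 5]^dim, and the names `sample_features` and `feature_distance` are all assumptions made for illustration. A real setup would compute a full ELA feature vector instead.

```python
import numpy as np

def sample_features(func, dim, n_samples=500, seed=0):
    """Compute a small stand-in feature vector (NOT real ELA features)
    from a uniform random sample in the assumed box [-5, 5]^dim."""
    rng = np.random.default_rng(seed)
    X = rng.uniform(-5.0, 5.0, size=(n_samples, dim))
    y = np.apply_along_axis(func, 1, X)

    # Distribution features of the objective values.
    std = y.std()
    z = (y - y.mean()) / (std if std > 0 else 1.0)
    skewness = np.mean(z ** 3)
    excess_kurtosis = np.mean(z ** 4) - 3.0

    # Quality of a linear model fit (R^2), a crude meta-model feature.
    A = np.column_stack([X, np.ones(n_samples)])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    ss_res = np.sum((y - A @ coef) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else 0.0

    return np.array([skewness, excess_kurtosis, r2])

def feature_distance(candidate, target_features, dim):
    """Euclidean distance between candidate features and the target profile."""
    return float(np.linalg.norm(sample_features(candidate, dim) - target_features))

# Hypothetical candidates; in the framework these would be generated code.
def sphere(x):
    return float(np.sum(x ** 2))

def linear_slope(x):
    return float(np.sum(x))

target = sample_features(sphere, dim=2)
print(feature_distance(sphere, target, dim=2))        # identical function, same sample
print(feature_distance(linear_slope, target, dim=2))  # a clearly different landscape
```

An evolutionary loop would rank candidates by `feature_distance` and keep those closest to the target. The fixed seed makes the comparison deterministic, which is a design choice of this sketch rather than something stated in the abstract.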

Approximation of the objective insensitivity regions using Hierarchic Memetic Strategy coupled with Covariance Matrix Adaptation Evolutionary Strategy

May 17, 2019

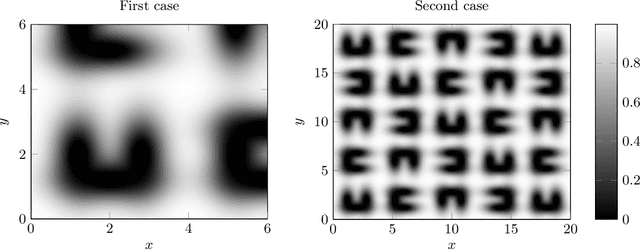

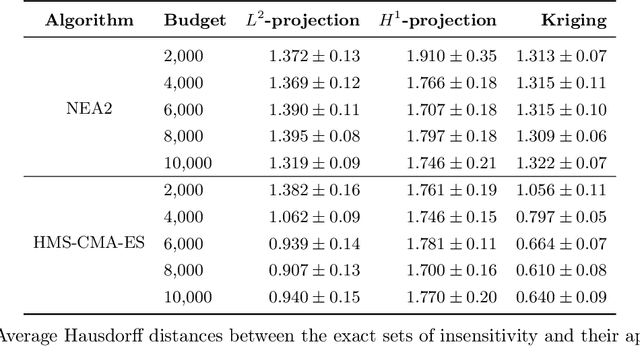

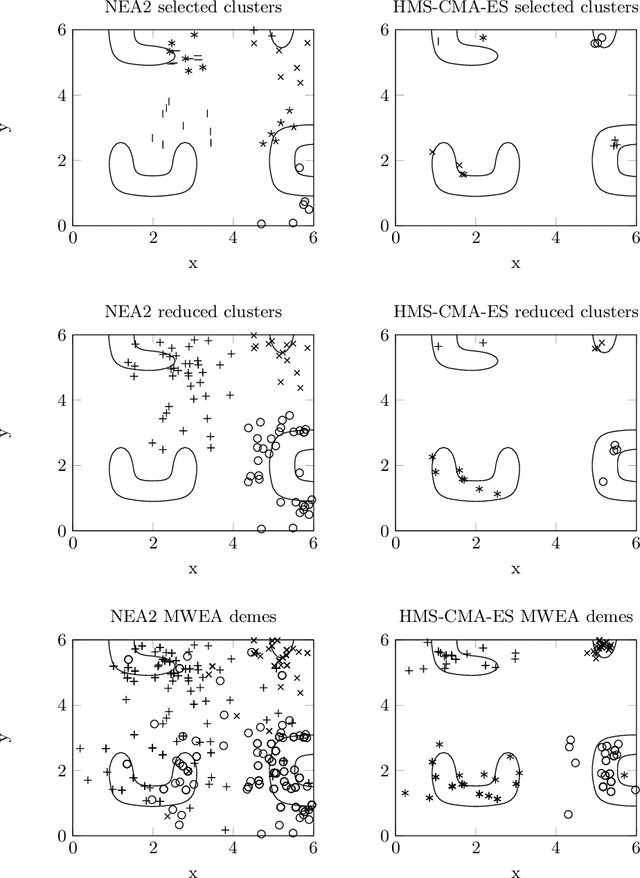

Abstract: One of the most challenging types of ill-posedness in global optimization is the presence of insensitivity regions in the design parameter space, so identifying their shape is crucial when the ill-posedness is irrecoverable. Such problems may be solved using a global stochastic search followed by post-processing of a local sample and a local objective approximation. We propose a new approach of this type, composed of the Hierarchic Memetic Strategy (HMS) powered by the Covariance Matrix Adaptation Evolutionary Strategy (CMA-ES), which is well known as an effective, self-adaptable stochastic optimization algorithm, and we leverage the distribution density knowledge it accumulates to better identify and separate insensitivity regions. Benchmark results show that the improved HMS-CMA-ES strategy is effective in terms of both total computational cost and accuracy of the insensitivity region approximation. The reference data for the tests was obtained with the Niching Evolutionary Algorithm 2 (NEA2), a well-known and effective multimodal stochastic optimization strategy that also uses CMA-ES as a component.
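To make the notion of an insensitivity region concrete, the following minimal sketch post-processes a sample of evaluated points. It is not the HMS-CMA-ES method. It assumes, for illustration only, that the region can be approximated by the sampled points whose objective value lies within a tolerance `tol` of the best sampled value, and that its shape can be summarized by the principal axes of those points. The objective `valley` and the function names are hypothetical.

```python
import numpy as np

def insensitivity_sample(X, y, tol):
    """Keep points whose objective is within tol of the best sampled value."""
    return X[y <= y.min() + tol]

def principal_axes(points):
    """Return the mean, principal directions (as columns), and standard
    deviations along them, sorted from longest to shortest axis."""
    mean = points.mean(axis=0)
    cov = np.cov(points, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)
    order = np.argsort(eigvals)[::-1]
    stds = np.sqrt(np.maximum(eigvals[order], 0.0))
    return mean, eigvecs[:, order], stds

def valley(X):
    """Hypothetical objective: almost flat along x0, steep along x1."""
    return 1e-4 * X[:, 0] ** 2 + X[:, 1] ** 2

rng = np.random.default_rng(0)
X = rng.uniform(-5.0, 5.0, size=(5000, 2))  # stand-in for a search sample
y = valley(X)

region = insensitivity_sample(X, y, tol=0.01)
mean, axes, stds = principal_axes(region)
print(axes[:, 0], stds)  # longest axis should align with x0
```

The ratio between the standard deviations indicates how elongated the approximated region is. A strategy that uses CMA-ES could draw comparable shape information from the covariance matrix the algorithm adapts, whereas this sketch estimates it from a plain uniform sample.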
