Lakshmish Ramaswamy

Fast and Generalizable NeRF Architecture Selection for Satellite Scene Reconstruction

Mar 18, 2026Abstract:Neural Radiance Fields (NeRF) have emerged as a powerful approach for photorealistic 3D reconstruction from multi-view images. However, deploying NeRF for satellite imagery remains challenging. Each scene requires individual training, and optimizing architectures via Neural Architecture Search (NAS) demands hours to days of GPU time. While existing approaches focus on architectural improvements, our SHAP analysis reveals that multi-view consistency, rather than model architecture, determines reconstruction quality. Based on this insight, we develop PreSCAN, a predictive framework that estimates NeRF quality prior to training using lightweight geometric and photometric descriptors. PreSCAN selects suitable architectures in < 30 seconds with < 1 dB prediction error, achieving 1000$\times$ speedup over NAS. We further demonstrate PreSCAN's deployment utility on edge platforms (Jetson Orin), where combining its predictions with offline cost profiling reduces inference power by 26% and latency by 43% with minimal quality loss. Experiments on DFC2019 datasets confirm that PreSCAN generalizes across diverse satellite scenes without retraining.

An Energy-Efficient Ensemble Approach for Mitigating Data Incompleteness in IoT Applications

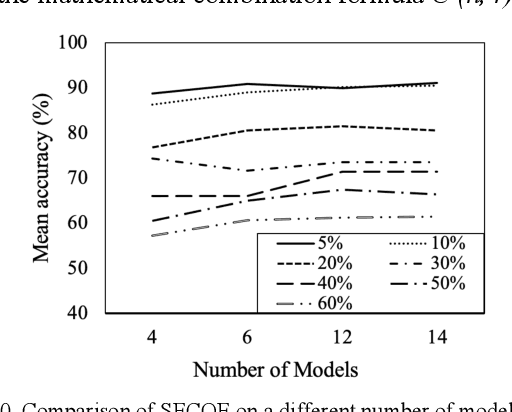

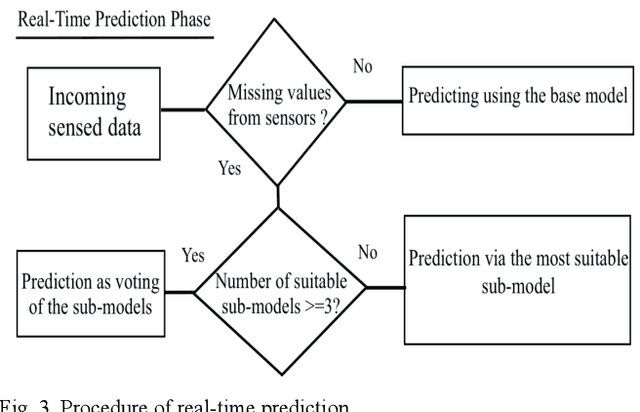

Mar 15, 2024Abstract:Machine Learning (ML) is becoming increasingly important for IoT-based applications. However, the dynamic and ad-hoc nature of many IoT ecosystems poses unique challenges to the efficacy of ML algorithms. One such challenge is data incompleteness, which is manifested as missing sensor readings. Many factors, including sensor failures and/or network disruption, can cause data incompleteness. Furthermore, most IoT systems are severely power-constrained. It is important that we build IoT-based ML systems that are robust against data incompleteness while simultaneously being energy efficient. This paper presents an empirical study of SECOE - a recent technique for alleviating data incompleteness in IoT - with respect to its energy bottlenecks. Towards addressing the energy bottlenecks of SECOE, we propose ENAMLE - a proactive, energy-aware technique for mitigating the impact of concurrent missing data. ENAMLE is unique in the sense that it builds an energy-aware ensemble of sub-models, each trained with a subset of sensors chosen carefully based on their correlations. Furthermore, at inference time, ENAMLE adaptively alters the number of the ensemble of models based on the amount of missing data rate and the energy-accuracy trade-off. ENAMLE's design includes several novel mechanisms for minimizing energy consumption while maintaining accuracy. We present extensive experimental studies on two distinct datasets that demonstrate the energy efficiency of ENAMLE and its ability to alleviate sensor failures.

Towards Modular Machine Learning Solution Development: Benefits and Trade-offs

Jan 23, 2023

Abstract:Machine learning technologies have demonstrated immense capabilities in various domains. They play a key role in the success of modern businesses. However, adoption of machine learning technologies has a lot of untouched potential. Cost of developing custom machine learning solutions that solve unique business problems is a major inhibitor to far-reaching adoption of machine learning technologies. We recognize that the monolithic nature prevalent in today's machine learning applications stands in the way of efficient and cost effective customized machine learning solution development. In this work we explore the benefits of modular machine learning solutions and discuss how modular machine learning solutions can overcome some of the major solution engineering limitations of monolithic machine learning solutions. We analyze the trade-offs between modular and monolithic machine learning solutions through three deep learning problems; one text based and the two image based. Our experimental results show that modular machine learning solutions have a promising potential to reap the solution engineering advantages of modularity while gaining performance and data advantages in a way the monolithic machine learning solutions do not permit.

SECOE: Alleviating Sensors Failure in Machine Learning-Coupled IoT Systems

Oct 05, 2022

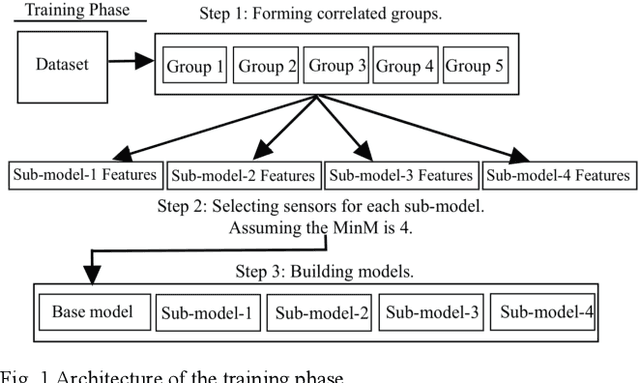

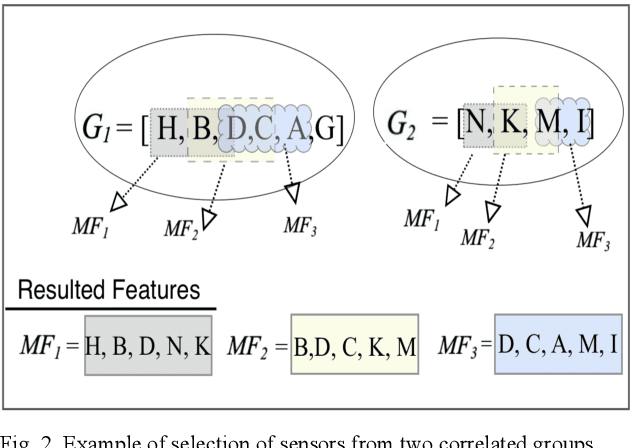

Abstract:Machine learning (ML) applications continue to revolutionize many domains. In recent years, there has been considerable research interest in building novel ML applications for a variety of Internet of Things (IoT) domains, such as precision agriculture, smart cities, and smart manufacturing. IoT domains are characterized by continuous streams of data originating from diverse, geographically distributed sensors, and they often require a real-time or semi-real-time response. IoT characteristics pose several fundamental challenges to designing and implementing effective ML applications. Sensor/network failures that result in data stream interruptions is one such challenge. Unfortunately, the performance of many ML applications quickly degrades when faced with data incompleteness. Current techniques to handle data incompleteness are based upon data imputation ( i.e., they try to fill-in missing data). Unfortunately, these techniques may fail, especially when multiple sensors' data streams become concurrently unavailable (due to simultaneous sensor failures). With the aim of building robust IoT-coupled ML applications, this paper proposes SECOE, a unique, proactive approach for alleviating potentially simultaneous sensor failures. The fundamental idea behind SECOE is to create a carefully chosen ensemble of ML models in which each model is trained assuming a set of failed sensors (i.e., the training set omits corresponding values). SECOE includes a novel technique to minimize the number of models in the ensemble by harnessing the correlations among sensors. We demonstrate the efficacy of the SECOE approach through a series of experiments involving three distinct datasets. The experimental findings reveal that SECOE effectively preserves prediction accuracy in the presence of sensor failures.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge