Julian Ahrens

From Channel Measurement to Training Data for PHY Layer AI Applications

Mar 13, 2024

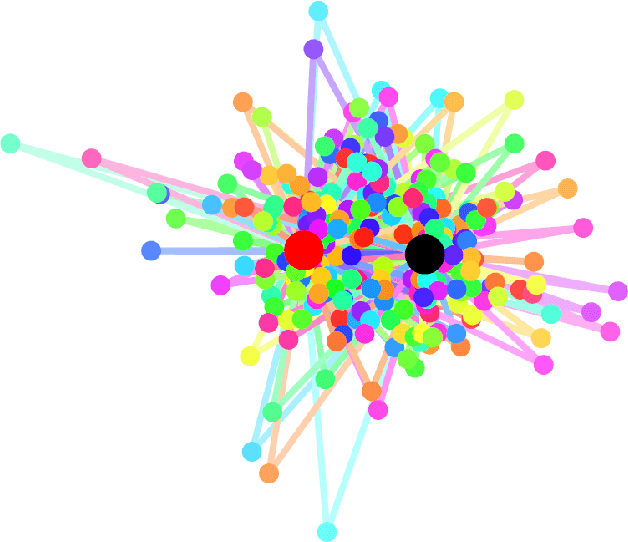

Abstract: Learning-based techniques such as artificial intelligence (AI) and machine learning (ML) play an increasingly important role in the development of future communication networks. The success of a learning algorithm depends on the quality and quantity of the available training data. In the physical layer (PHY), channel information data can be obtained either through measurement campaigns or through simulations based on predefined channel models. Performing measurements can be time-consuming while yielding information about only one specific position or scenario. Simulated data, on the other hand, are more generalized and in most cases reflect not a real environment but a statistical approximation based on a mathematical model. This paper presents a procedure for acquiring channel data by means of fast and flexible software-defined radio (SDR) based channel measurements, along with a parameter extraction method that provides configuration input to the simulator. The procedure from the measurement to the simulated channel data is demonstrated in two exemplary propagation scenarios. In both cases, the simulated data are in good agreement with the measurements.

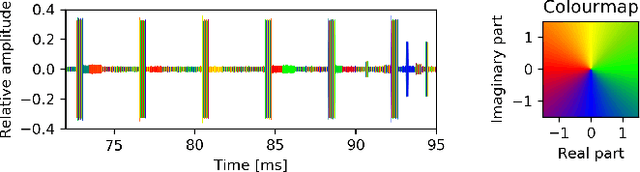

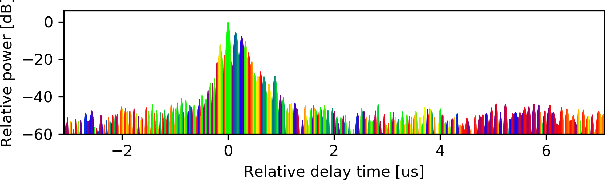

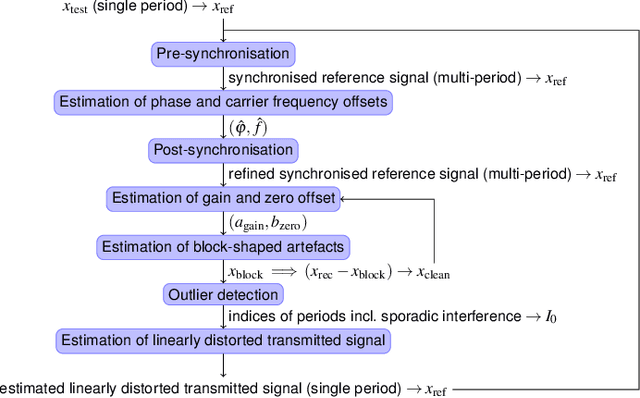

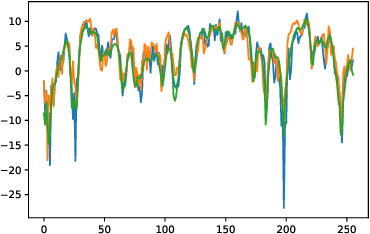

Signal Restoration and Channel Estimation for Channel Sounding with SDRs

May 23, 2022

Abstract: In this paper, the task of channel sounding using software defined radios (SDRs) is considered. In contrast to classical channel sounding equipment, SDRs are general purpose devices and require additional steps to be implemented when employed for this task. On top of this, SDRs may exhibit quirks causing signal artefacts that obstruct the effective collection of channel estimation data. Based on these considerations, in this work, a practical algorithm is devised to compensate for the drawbacks of using SDRs for channel sounding encountered in a concrete setup. The proposed approach utilises concepts from time series and Fourier analysis and comprises a signal restoration routine for mitigating artefacts within the recorded signals and an encompassing channel sounding process. The efficacy of the algorithm is evaluated on real measurements generated within the given setup. The empirical results show that the proposed method is able to counteract the shortcomings of the equipment and deliver reasonable channel estimates.

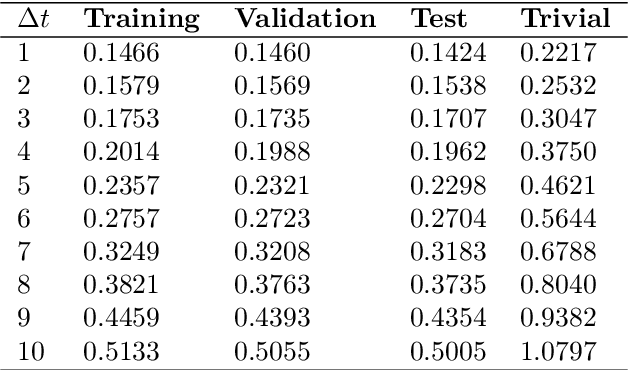

A Machine Learning Method for Prediction of Multipath Channels

Sep 10, 2019

Abstract: In this paper, a machine learning method for predicting the evolution of a mobile communication channel based on a specific type of convolutional neural network is developed and evaluated in a simulated multipath transmission scenario. The simulation and channel estimation are designed to replicate real-world scenarios and common measurements supported by reference signals in modern cellular networks. The predictor's capability meets the requirements posed by deploying the developed method in the radio resource scheduler of a base station. Possible applications of the method are discussed.

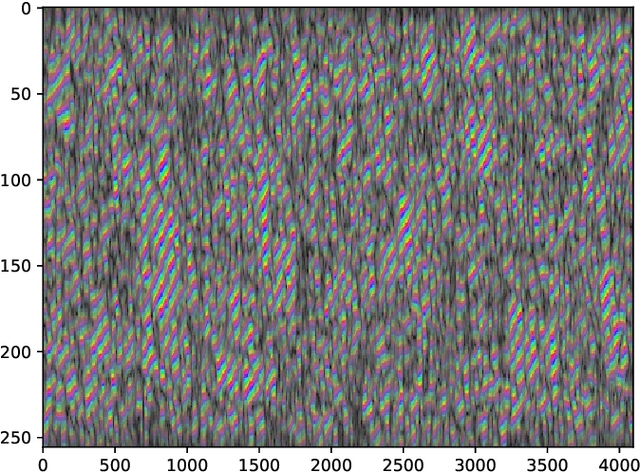

A Machine-Learning Phase Classification Scheme for Anomaly Detection in Signals with Periodic Characteristics

Nov 29, 2018

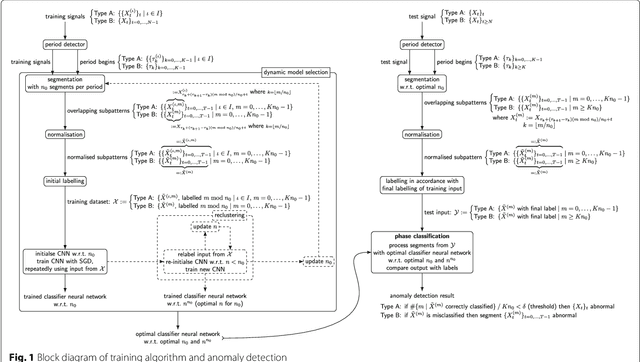

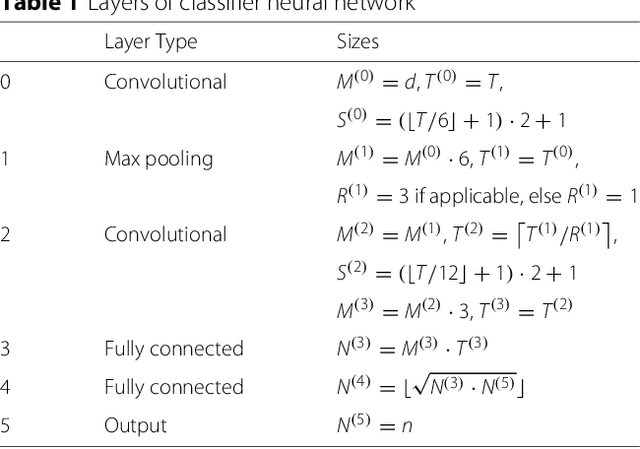

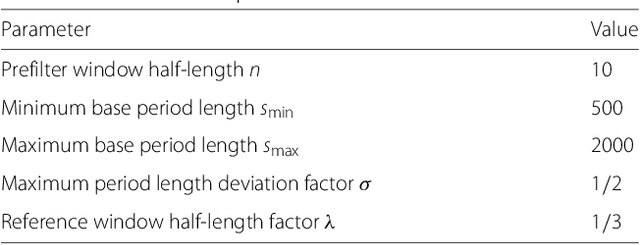

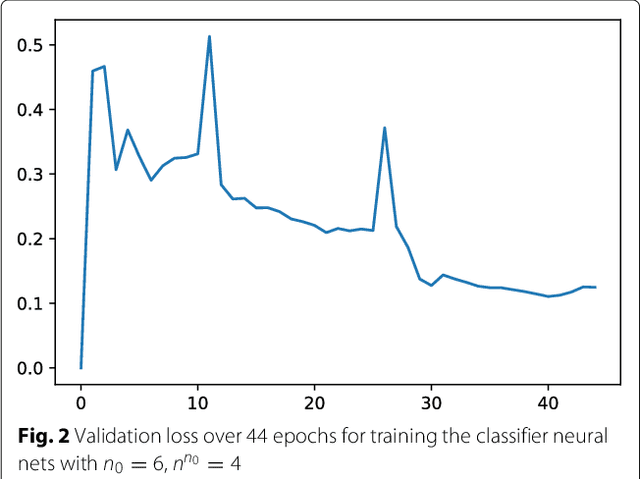

Abstract: In this paper, we propose a novel machine-learning method for anomaly detection. Focusing on data with periodic characteristics, where randomly varying period lengths are explicitly allowed, a multi-dimensional time series analysis is conducted by training a data-adapted classifier consisting of deep convolutional neural networks performing phase classification. The entire algorithm, including data pre-processing, period detection, segmentation, and even dynamic adjustment of the neural nets, is implemented for fully automatic execution. The proposed method is evaluated on three example datasets from the areas of cardiology, intrusion detection, and signal processing, where it shows reasonable performance.

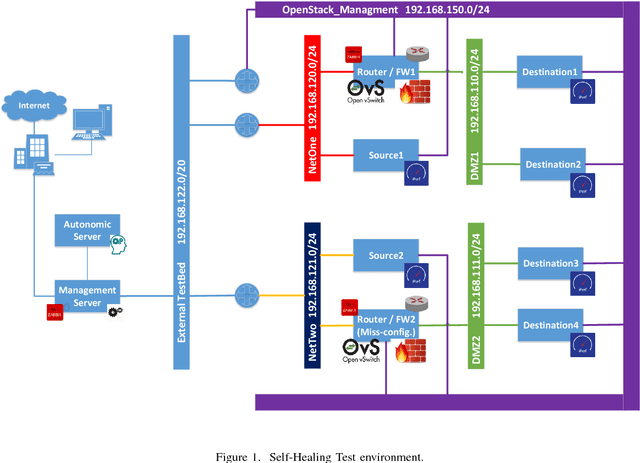

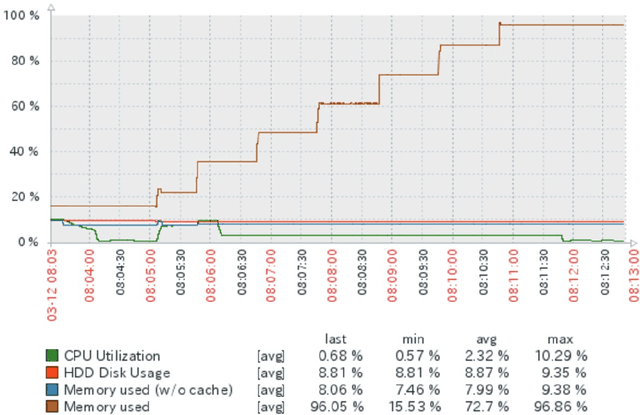

An AI-driven Malfunction Detection Concept for NFV Instances in 5G

Apr 16, 2018

Abstract: Efficient network management is one of the key challenges of constantly growing and increasingly complex wide area networks (WANs). The paradigm shift towards virtualized (NFV) and software-defined networks (SDN) in the next generation of mobile networks (5G), as well as the latest scientific insights in the field of Artificial Intelligence (AI), enable the transition from today's manually managed networks to fully autonomic and dynamic self-organized networks (SON). This helps to meet the KPIs while reducing operational costs (OPEX). In this paper, an AI-driven concept is presented for malfunction detection in NFV applications with the help of semi-supervised learning. For this purpose, a profile of the application under test is created. This profile is then used as a reference to detect abnormal behaviour. For example, if there is a bug in the updated version of the app, it is now possible to react autonomously and roll back the NFV app to a previous version in order to avoid network outages.
