Juan José García-Ripoll

High-Resolution Tensor-Network Fourier Methods for Exponentially Compressed Non-Gaussian Aggregate Distributions

Mar 24, 2026

Abstract: Characteristic functions of weighted sums of independent random variables exhibit low-rank structure in the quantized tensor train (QTT) representation, also known as matrix product states (MPS), enabling up to exponential compression of their fully non-Gaussian probability distributions. Under variable independence, the global characteristic function factorizes into local terms. Its low-rank QTT structure arises from intrinsic spectral smoothness in continuous models, or from spectral energy concentration as the number of components $D$ grows in discrete models. We demonstrate this on weighted sums of Bernoulli and lognormal random variables. In the former, despite an adversarial, incompressible small-$D$ regime, the characteristic function undergoes a sharp bond-dimension collapse for $D \gtrsim 300$ components, enabling polylogarithmic time and memory scaling. In the latter, the approach reaches high-resolution discretizations of $N = 2^{30}$ frequency modes on standard hardware, far beyond the $N = 2^{24}$ ceiling of dense implementations. These compressed representations enable efficient computation of Value at Risk (VaR) and Expected Shortfall (ES), supporting applications in quantitative finance and beyond.
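The factorization under independence, $\phi_S(t) = \prod_{d=1}^{D} \phi_{X_d}(w_d t)$ for $S = \sum_d w_d X_d$, can be illustrated with a small dense baseline. This is a minimal sketch under stated assumptions, not the QTT/MPS method of the paper: it assumes integer, nonnegative weights so that $S$ lives on an integer grid, evaluates $\phi$ as a product of local Bernoulli factors on $N$ frequency modes, inverts it with NumPy's FFT, and reads off VaR and ES. The helper names `bernoulli_sum_pmf` and `var_es` are hypothetical, and ES is taken here as the tail conditional expectation $E[S \mid S \ge \mathrm{VaR}_\alpha]$, which is one of several conventions for discrete losses.

```python
import numpy as np

def bernoulli_sum_pmf(weights, probs, n_grid):
    """PMF of S = sum_d w_d * B_d, with independent B_d ~ Bernoulli(p_d) and
    integer weights w_d >= 0 (an assumption of this sketch), recovered from
    its characteristic function sampled on n_grid frequency modes."""
    weights = np.asarray(weights, dtype=np.int64)
    probs = np.asarray(probs, dtype=float)
    if n_grid <= weights.sum():
        raise ValueError("n_grid must exceed sum(weights) to avoid aliasing")
    k = np.arange(n_grid)
    # Independence: the global characteristic function is a product of local
    # factors, phi(k) = prod_d (1 - p_d + p_d * exp(-2*pi*i*k*w_d/N)).
    phase = np.exp(-2j * np.pi * np.outer(k, weights) / n_grid)
    phi = np.prod(1.0 - probs + probs * phase, axis=1)
    # With NumPy's FFT sign convention phi = fft(pmf), so ifft inverts it.
    pmf = np.fft.ifft(phi).real
    return np.clip(pmf, 0.0, None)  # remove tiny negative round-off

def var_es(pmf, alpha):
    """VaR as the smallest loss s with CDF(s) >= alpha, and ES as the
    conditional mean of the losses at or above that VaR."""
    losses = np.arange(len(pmf))
    cdf = np.cumsum(pmf)
    var = min(int(np.searchsorted(cdf, alpha)), len(pmf) - 1)
    tail = losses >= var
    es = float(np.sum(losses[tail] * pmf[tail]) / np.sum(pmf[tail]))
    return var, es

# Example: S = 1*B1 + 2*B2 + 3*B3 with fair Bernoulli variables, N = 8 > 6.
pmf = bernoulli_sum_pmf([1, 2, 3], [0.5, 0.5, 0.5], n_grid=8)
print(var_es(pmf, alpha=0.5))  # VaR = 3, ES ≈ 4.2
```

The dense array `phi` grows linearly with $N$, which is the memory wall that the QTT representation described above is meant to avoid.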

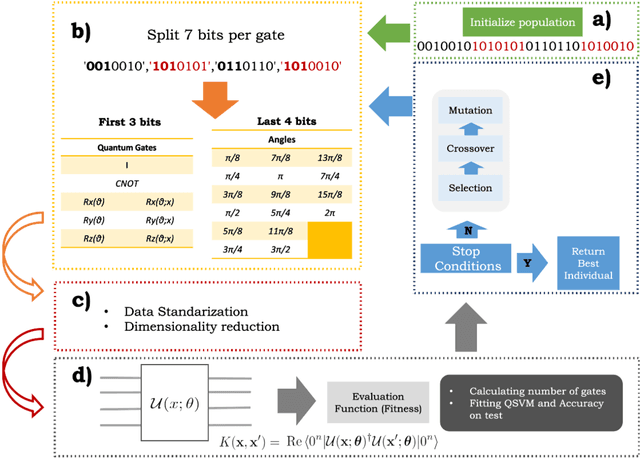

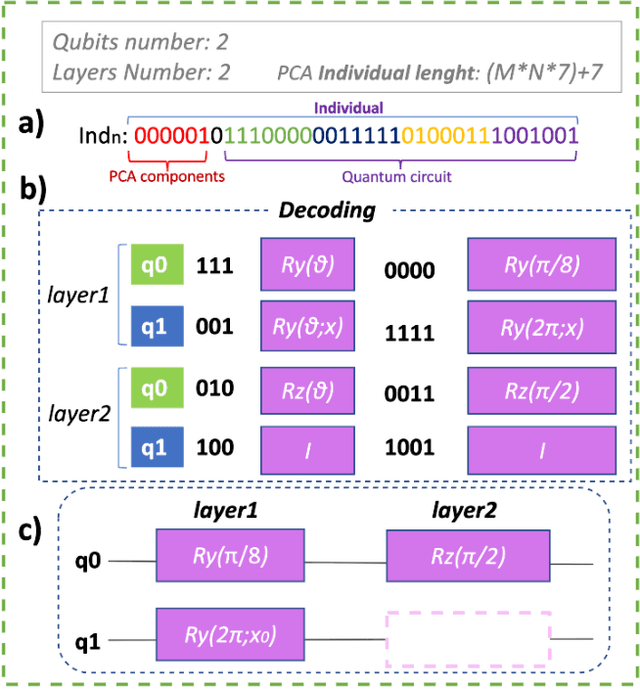

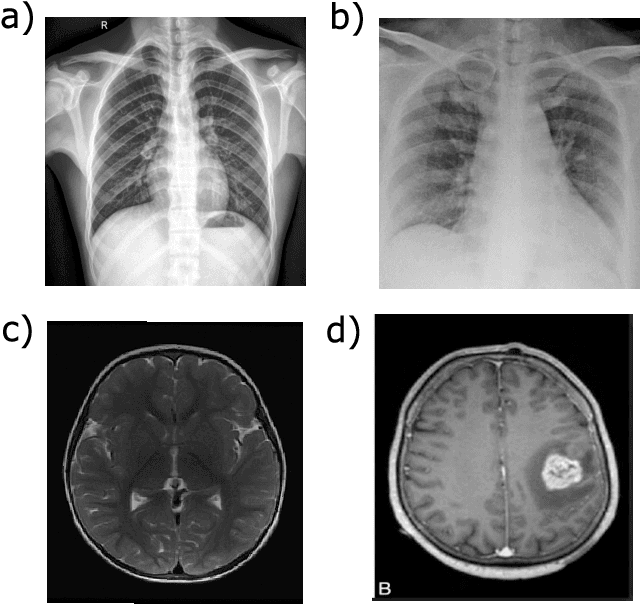

AutoQML: Automatic Generation and Training of Robust Quantum-Inspired Classifiers by Using Genetic Algorithms on Grayscale Images

Aug 28, 2022

Abstract: We propose a new hybrid system for automatically generating and training quantum-inspired classifiers on grayscale images by using multiobjective genetic algorithms. We define a dynamic fitness function to obtain the smallest possible circuit and the highest accuracy on unseen data, ensuring that the proposed technique is generalizable and robust. We minimize the complexity of the generated circuits, in terms of the number of entangling gates, by penalizing their appearance. We reduce the size of the images with two dimensionality reduction approaches: principal component analysis (PCA), which is encoded in the individual for optimization purposes, and a small convolutional autoencoder (CAE). These two methods are compared with each other and with a classical nonlinear approach, both to understand their behavior and to ensure that the classification ability is due to the quantum circuit rather than to the preprocessing technique used for dimensionality reduction.
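The PCA reduction step can be sketched with a plain SVD of the centered, flattened images. This is a minimal sketch, not the paper's pipeline: the helper names are hypothetical, the rescaling of each reduced feature to a rotation angle in $[0, \pi]$ is an assumed encoding (the abstract does not say how features enter the circuit), and the sketch does not model the PCA being encoded in the genetic individual.

```python
import numpy as np

def pca_reduce(images, n_components):
    """Project flattened grayscale images onto their top principal components."""
    X = images.reshape(len(images), -1).astype(float)
    X_centered = X - X.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing singular value.
    _, _, vt = np.linalg.svd(X_centered, full_matrices=False)
    components = vt[:n_components]
    return X_centered @ components.T, components

def to_rotation_angles(features):
    """Hypothetical encoding step: rescale each feature column to [0, pi]."""
    lo = features.min(axis=0)
    hi = features.max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)  # avoid dividing by zero for constant columns
    return np.pi * (features - lo) / span

# Example: reduce 20 random 8x8 "images" to 4 features each.
rng = np.random.default_rng(0)
features, components = pca_reduce(rng.random((20, 8, 8)), n_components=4)
angles = to_rotation_angles(features)
```

Keeping only a handful of components keeps the number of encoded features, and hence the circuit width, small.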

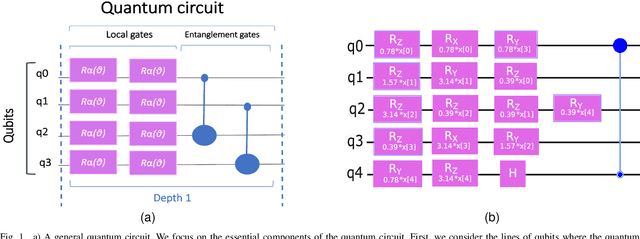

Automatic design of quantum feature maps

May 26, 2021

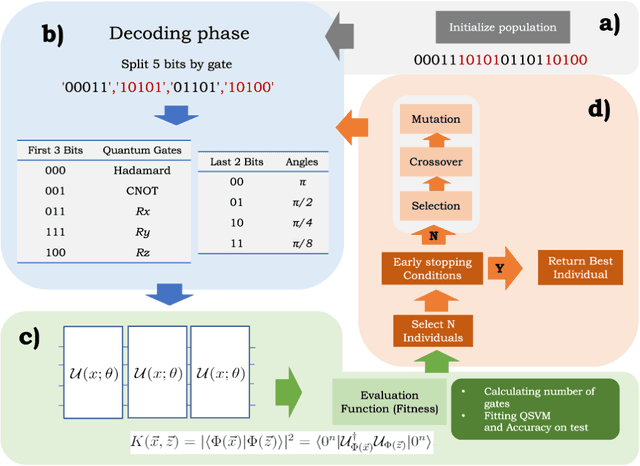

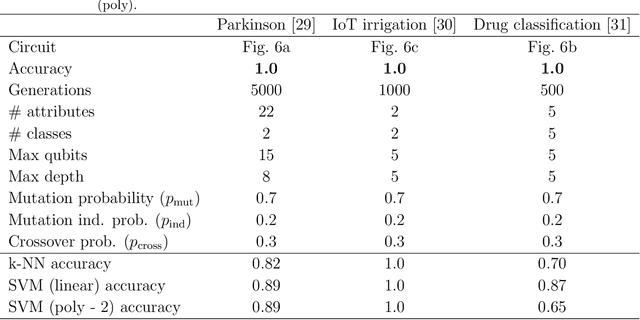

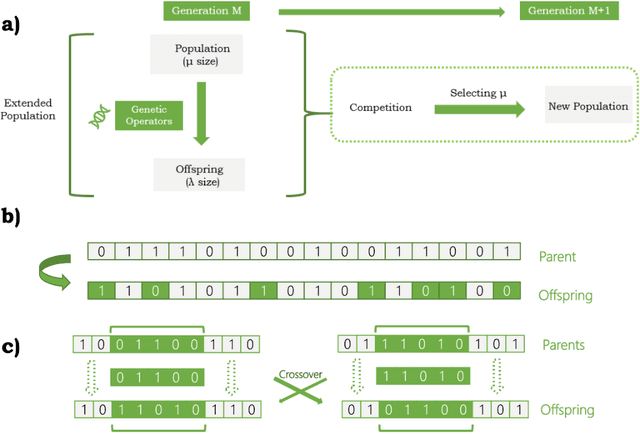

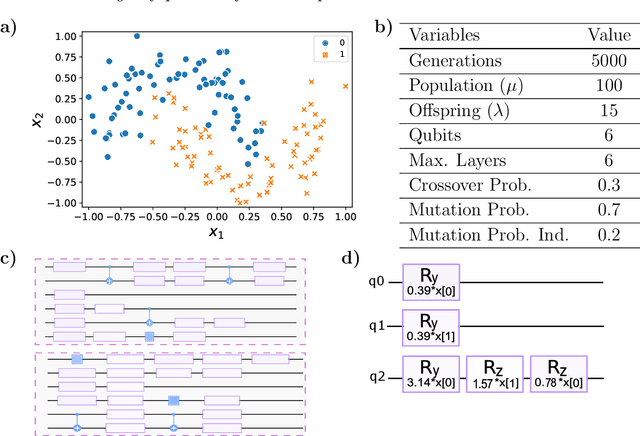

Abstract: We propose a new technique for the automatic generation of optimal ad hoc ansätze for classification with quantum support vector machines (QSVMs). This efficient method is based on the NSGA-II multiobjective genetic algorithm, which allows us to both maximize the accuracy and minimize the ansatz size. We demonstrate the validity of the technique with a practical example on a nonlinear dataset, interpreting the resulting circuit and its outputs. We also show other fields of application of the technique that reinforce the validity of the method, and a comparison with classical classifiers in order to understand the advantages of using quantum machine learning.
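The trade-off between accuracy and ansatz size is resolved through Pareto dominance, which is the basis of NSGA-II's non-dominated sorting. The sketch below extracts only the first (non-dominated) front for candidates scored as `(accuracy, size)`; it does not implement the rest of NSGA-II (crowding distance, selection, crossover, mutation), and the function name and example scores are hypothetical.

```python
def pareto_front(candidates):
    """Return the candidates not dominated by any other candidate.

    Each candidate is a pair (accuracy, size): accuracy is maximized and
    ansatz size is minimized."""
    def dominates(a, b):
        # a dominates b if it is no worse in both objectives and strictly
        # better in at least one.
        no_worse = a[0] >= b[0] and a[1] <= b[1]
        better = a[0] > b[0] or a[1] < b[1]
        return no_worse and better

    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]

# Example with hypothetical (accuracy, number of gates) scores.
scores = [(0.90, 12), (0.88, 6), (0.85, 6), (0.95, 20), (0.80, 3), (0.90, 15)]
print(pareto_front(scores))  # [(0.9, 12), (0.88, 6), (0.95, 20), (0.8, 3)]
```

Every point on the front is a defensible choice; picking one among them is left to the user's preference between accuracy and circuit size.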

Hybrid quantum-classical optimization for financial index tracking

Aug 27, 2020

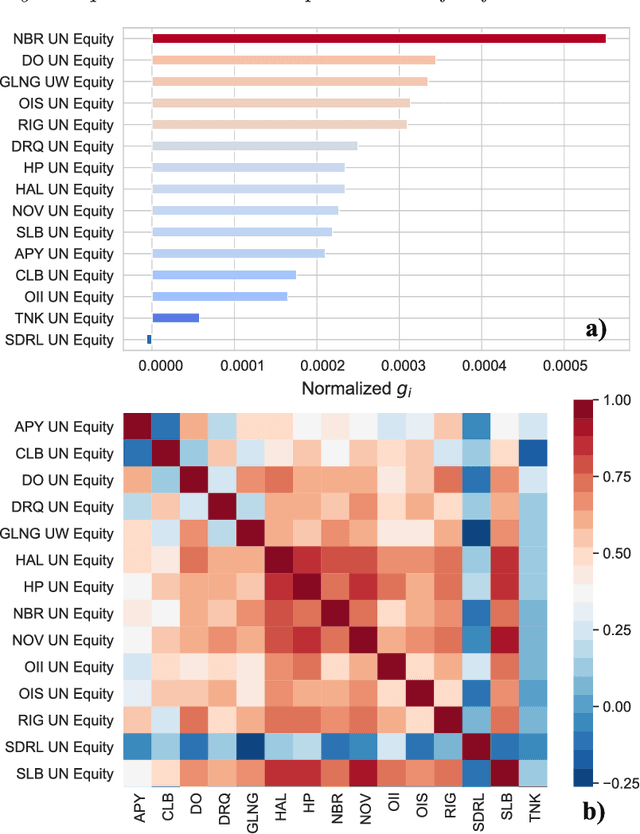

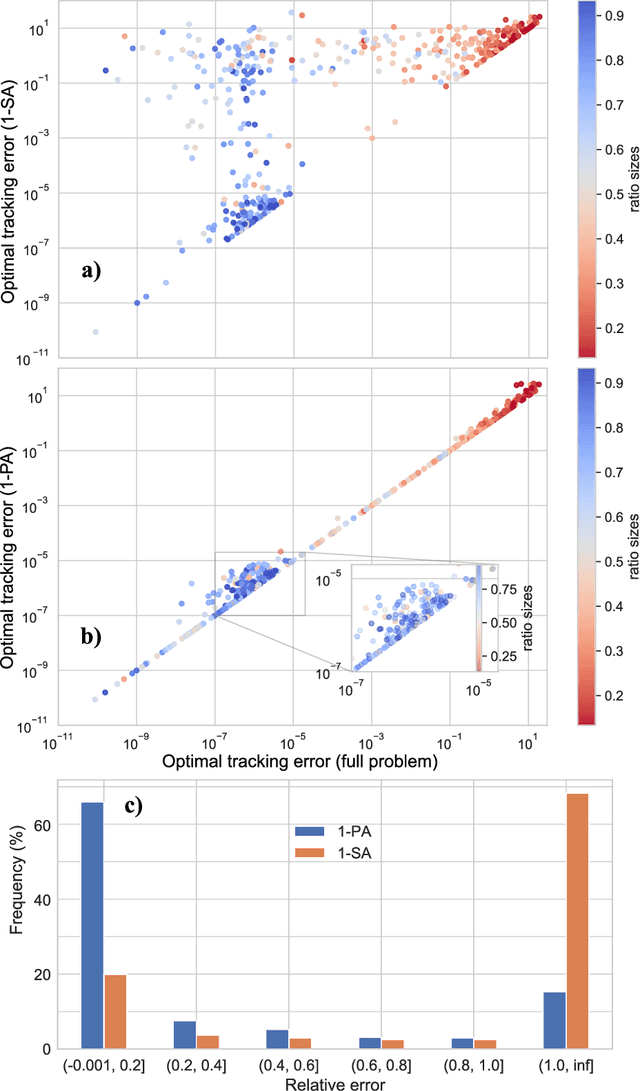

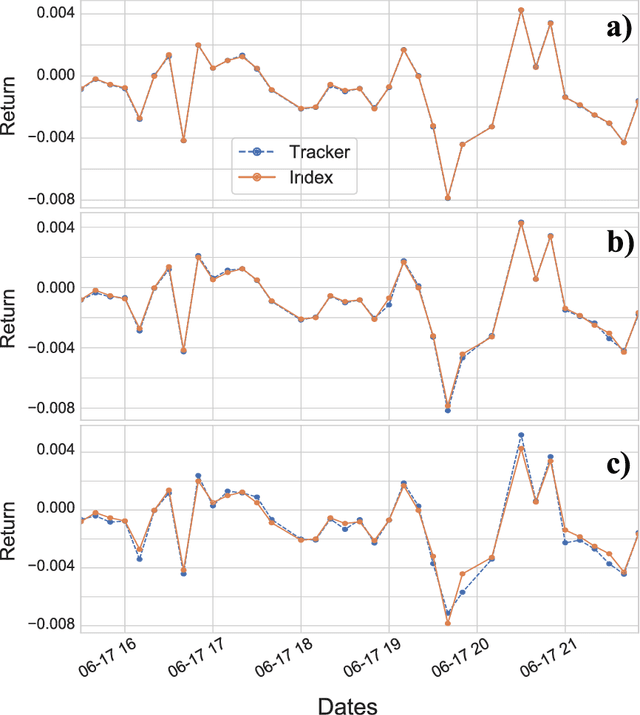

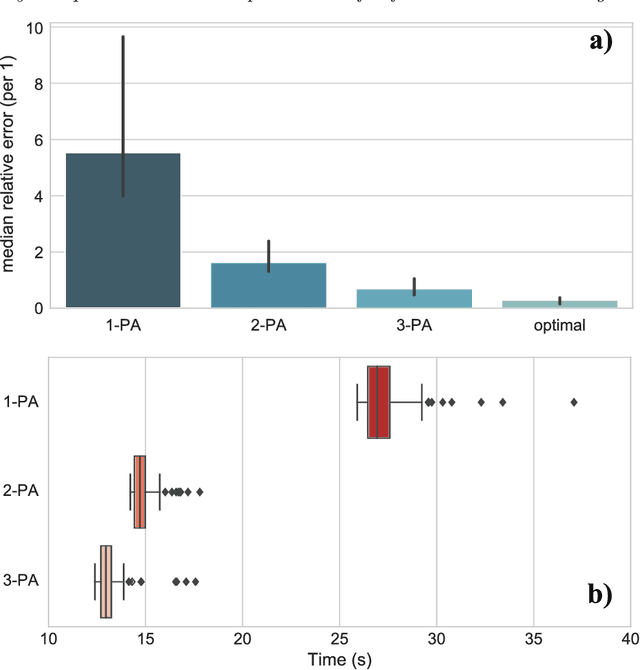

Abstract: Tracking a financial index boils down to replicating its trajectory of returns for a well-defined time span by investing in a weighted subset of the securities included in the benchmark. Picking the optimal combination of assets becomes a challenging NP-hard problem even for moderately large indices consisting of dozens or hundreds of assets, thereby requiring heuristic methods to find approximate solutions. Hybrid quantum-classical optimization with variational gate-based quantum circuits arises as a plausible method to improve the performance of current schemes. In this work we introduce a heuristic pruning algorithm to find weighted combinations of assets subject to cardinality constraints. We further consider different strategies to respect such constraints and compare the performance of relevant quantum ansätze and classical optimizers through numerical simulations.
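To see why the cardinality constraint makes the problem combinatorial, consider a brute-force baseline that tries every subset of exactly $k$ assets and fits weights by least squares. This is a minimal sketch, not the paper's pruning heuristic or its quantum-classical method: the function name is hypothetical, the objective is assumed to be the squared tracking error $\lVert R_S w - r \rVert^2$, and the weights are unconstrained (no budget or nonnegativity constraints).

```python
import itertools

import numpy as np

def best_tracking_subset(asset_returns, index_returns, k):
    """Exhaustively search all k-asset subsets; for each, fit weights by least
    squares and keep the subset with the smallest squared tracking error."""
    n_assets = asset_returns.shape[1]
    best = (np.inf, None, None)  # (error, subset, weights)
    for subset in itertools.combinations(range(n_assets), k):
        R = asset_returns[:, list(subset)]
        w, *_ = np.linalg.lstsq(R, index_returns, rcond=None)
        err = float(np.sum((R @ w - index_returns) ** 2))
        if err < best[0]:
            best = (err, subset, w)
    return best

# Example: a synthetic "index" built from assets 0 and 3 out of 6.
rng = np.random.default_rng(1)
returns = rng.normal(size=(60, 6))
index = 0.6 * returns[:, 0] + 0.4 * returns[:, 3]
err, subset, weights = best_tracking_subset(returns, index, k=2)
```

The loop visits $\binom{n}{k}$ subsets, which is hopeless for indices with hundreds of assets and motivates the heuristic approaches discussed in the abstract.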