Joseph F. Fitzsimons

A note on state preparation for quantum machine learning

Apr 01, 2018

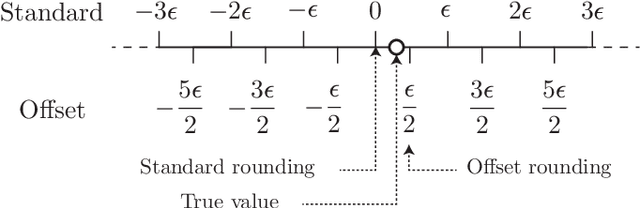

Abstract: The intersection between the fields of machine learning and quantum information processing is proving to be a fruitful field for the discovery of new quantum algorithms, which potentially offer an exponential speed-up over their classical counterparts. However, many such algorithms require the ability to produce states proportional to vectors stored in quantum memory. Even given access to quantum databases which store exponentially long vectors, the construction of which is considered a one-off overhead, it has been argued that the cost of preparing such amplitude-encoded states may offset any exponential quantum advantage. Here we argue that specifically in the context of machine learning applications it suffices to prepare a state close to the ideal state only in the $\infty$-norm, and that this can be achieved with only a constant number of memory queries.

Quantum algorithms for training Gaussian Processes

Mar 28, 2018

Abstract: Gaussian processes (GPs) are important models in supervised machine learning. Training in Gaussian processes refers to selecting the covariance functions and the associated parameters in order to improve the outcome of predictions, the core of which amounts to evaluating the logarithm of the marginal likelihood (LML) of a given model. LML gives a concrete measure of the quality of prediction that a GP model is expected to achieve. The classical computation of LML typically carries a polynomial time overhead with respect to the input size. We propose a quantum algorithm that computes the logarithm of the determinant of a Hermitian matrix, which runs in logarithmic time for sparse matrices. This is applied in conjunction with a variant of the quantum linear system algorithm that allows for logarithmic time computation of the form $\mathbf{y}^TA^{-1}\mathbf{y}$, where $\mathbf{y}$ is a dense vector and $A$ is the covariance matrix. We hence show that quantum computing can be used to estimate the LML of a GP with exponentially improved efficiency under certain conditions.
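
For reference, the LML of a GP with Gaussian noise has a standard closed form. The following is a sketch in the abstract's notation, assuming a zero prior mean, with $A$ taken to be the $n \times n$ covariance matrix of the training outputs (noise included) and $\mathbf{y}$ the vector of training targets; the symbol $n$ is introduced here for illustration and does not appear in the abstract.

$$\log p(\mathbf{y}) = -\tfrac{1}{2}\,\mathbf{y}^T A^{-1}\mathbf{y} \;-\; \tfrac{1}{2}\log\det A \;-\; \tfrac{n}{2}\log 2\pi$$

The first two terms are the two quantities the abstract targets: the quadratic form $\mathbf{y}^TA^{-1}\mathbf{y}$ and the logarithm of the determinant of $A$.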

Quantum assisted Gaussian process regression

Dec 12, 2015

Abstract: Gaussian processes (GP) are a widely used model for regression problems in supervised machine learning. Implementation of GP regression typically requires $O(n^3)$ logic gates. We show that the quantum linear systems algorithm [Harrow et al., Phys. Rev. Lett. 103, 150502 (2009)] can be applied to Gaussian process regression (GPR), leading to an exponential reduction in computation time in some instances. We show that even in some cases not ideally suited to the quantum linear systems algorithm, a polynomial increase in efficiency still occurs.
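
To make the classical $O(n^3)$ cost concrete, here is a minimal sketch of the classical GP regression mean prediction (not the quantum algorithm from the paper). The squared-exponential kernel, the function names `rbf_kernel` and `gp_predict_mean`, and the `noise` and `length_scale` parameters are all assumptions chosen for illustration; the cubic cost comes from the Cholesky factorization of the $n \times n$ covariance matrix.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0):
    """Squared-exponential kernel between two 1-D arrays of inputs (illustrative choice)."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return np.exp(-0.5 * d2 / length_scale**2)

def gp_predict_mean(x_train, y_train, x_test, noise=1e-2):
    """Classical GP posterior mean at x_test, assuming a zero prior mean."""
    n = len(x_train)
    # Covariance of the training outputs, including observation noise.
    K = rbf_kernel(x_train, x_train) + noise * np.eye(n)
    # Cholesky factorization: this is the O(n^3) step.
    L = np.linalg.cholesky(K)
    # Solve K alpha = y using the factor (two solves against L and L^T).
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    # Posterior mean: k(x_test, x_train) @ K^{-1} y.
    return rbf_kernel(x_test, x_train) @ alpha

# Example usage on synthetic data.
x_train = np.linspace(0.0, 5.0, 20)
y_train = np.sin(x_train)
x_test = np.linspace(0.0, 5.0, 7)
mean = gp_predict_mean(x_train, y_train, x_test)
```

The linear solve against the covariance matrix is the step that the quantum linear systems algorithm is meant to replace in the settings the abstract describes.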
