Joon-Hyuk Ko

Watch your neighbors: Training statistically accurate chaotic systems with local phase space information

May 14, 2026

Abstract:Chaotic systems pose fundamental challenges for data-driven dynamics discovery, as small modeling errors lead to exponentially growing trajectory discrepancies. Since exact long-term prediction is unattainable, it is natural to ask what a good surrogate model for chaotic dynamics is. Prior work has largely focused either on reproducing the Jacobian of the underlying dynamics, which governs local expansion and contraction rates, or on training surrogate models that reproduce the ground-truth dynamics' long-term statistical behavior. In this work, we propose a new framework that aims to bridge these two paradigms by training surrogate dynamics models with accurate Jacobians and long-term statistical properties. Our method constructs a local covering of a chaotic attractor in phase space and analyzes the expansion and contraction of these coverings under the dynamics. The surrogate model is trained by minimizing the maximum mean discrepancy between the pushforward distributions of the coverings under the surrogate and ground-truth dynamics. Experiments show that our method significantly improves Jacobian accuracy while remaining competitive with state-of-the-art statistically accurate dynamics learning methods. Our code is fully available at https://anonymous.4open.science/r/neighborwatch.
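The training objective described in the abstract above, a maximum mean discrepancy (MMD) between pushforward distributions of a local neighborhood, can be illustrated with a small toy computation. The following is a minimal sketch and not the authors' implementation: the Gaussian kernel, the bandwidth, the Hénon map as stand-in dynamics, the "surrogate" obtained by perturbing one map parameter, and all function names are assumptions made for illustration only.

```python
import numpy as np

def gaussian_kernel(a, b, bandwidth):
    # Pairwise Gaussian kernel matrix between point sets a (n, d) and b (m, d).
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return np.exp(-sq_dist / (2.0 * bandwidth ** 2))

def mmd_squared(x, y, bandwidth=0.1):
    # Biased (V-statistic) estimate of the squared MMD between samples x and y.
    return (gaussian_kernel(x, x, bandwidth).mean()
            + gaussian_kernel(y, y, bandwidth).mean()
            - 2.0 * gaussian_kernel(x, y, bandwidth).mean())

def henon_step(points, a, b=0.3):
    # One step of the Henon map, applied to every row of points (n, 2).
    x, y = points[:, 0], points[:, 1]
    return np.stack([1.0 - a * x ** 2 + y, b * x], axis=1)

# Hypothetical local neighborhood: a small cloud around an arbitrary point.
rng = np.random.default_rng(0)
center = np.array([0.6, 0.2])
neighborhood = center + 0.05 * rng.standard_normal((200, 2))

# Push the same neighborhood through the "true" map and a mismatched surrogate.
pushed_true = henon_step(neighborhood, a=1.4)
pushed_surrogate = henon_step(neighborhood, a=1.3)

loss_mismatch = mmd_squared(pushed_true, pushed_surrogate)
loss_matched = mmd_squared(pushed_true, pushed_true)
print(f"MMD^2 (mismatched surrogate): {loss_mismatch:.4e}")
print(f"MMD^2 (identical dynamics):   {loss_matched:.4e}")
```

Because the pushed-forward cloud is stretched and contracted according to the local Jacobian of the map, a loss of this kind is sensitive to how the surrogate deforms the neighborhood, not only to where it sends the center point. In an actual training loop the surrogate would be a differentiable model and the loss would be minimized over its parameters.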

Homotopy-based training of NeuralODEs for accurate dynamics discovery

Oct 04, 2022

Abstract:Conceptually, Neural Ordinary Differential Equations (NeuralODEs) pose an attractive way to extract dynamical laws from time series data, as they are natural extensions of the traditional differential equation-based modeling paradigm of the physical sciences. In practice, NeuralODEs display long training times and suboptimal results, especially for longer-duration data, where they may fail to fit the data altogether. While methods have been proposed to stabilize NeuralODE training, many of these place strong constraints on the functional form of the trained NeuralODE, constraints that the actual underlying governing equation is not guaranteed to satisfy. In this work, we present a novel NeuralODE training algorithm that leverages tools from the chaos and mathematical optimization communities, namely synchronization and homotopy optimization, to tackle the NeuralODE training obstacle. We demonstrate that architectural changes are unnecessary for effective NeuralODE training. Compared to conventional training methods, our algorithm achieves drastically lower loss values without any changes to the model architectures. Experiments on both simulated and real systems with complex temporal behaviors demonstrate that NeuralODEs trained with our algorithm are able to accurately capture true long-term behaviors and correctly extrapolate into the future.
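The combination of synchronization and homotopy optimization mentioned in the abstract above can be sketched on a toy problem. This is an illustrative sketch under stated assumptions, not the authors' algorithm: the model is a harmonic oscillator with a single unknown frequency rather than a NeuralODE, the coupling schedule is arbitrary, and a local grid search stands in for gradient-based training. A coupling term pulls the simulated state toward the data, and the coupling strength is lowered stage by stage to zero, with each stage starting from the previous stage's estimate.

```python
import numpy as np

def simulate(omega, data, dt, coupling=0.0):
    # Euler-integrate x' = v, v' = -omega^2 x, with an added term
    # coupling * (data - state) that pulls the state toward the data.
    traj = np.empty_like(data)
    traj[0] = data[0]
    for i in range(len(data) - 1):
        x, v = traj[i]
        dx = v + coupling * (data[i, 0] - x)
        dv = -omega ** 2 * x + coupling * (data[i, 1] - v)
        traj[i + 1] = (x + dt * dx, v + dt * dv)
    return traj

def loss(omega, data, dt, coupling):
    # Mean squared error between the (coupled) simulation and the data.
    return np.mean((simulate(omega, data, dt, coupling) - data) ** 2)

# Synthetic data from the same integrator with a hypothetical true frequency.
dt, n_steps, omega_true = 0.02, 500, 2.0
seed = np.zeros((n_steps, 2))
seed[0] = (1.0, 0.0)
data = simulate(omega_true, seed, dt)

# Coupling makes the loss far less sensitive to a wrong initial guess.
omega = 3.0
loss_uncoupled = loss(omega, data, dt, coupling=0.0)
loss_coupled = loss(omega, data, dt, coupling=20.0)

# Homotopy: lower the coupling to zero, warm-starting each stage.
for coupling in [20.0, 10.0, 5.0, 2.0, 0.0]:
    candidates = np.linspace(omega - 0.5, omega + 0.5, 41)
    stage_losses = [loss(c, data, dt, coupling) for c in candidates]
    omega = candidates[int(np.argmin(stage_losses))]

print(f"initial loss, uncoupled: {loss_uncoupled:.3f}, coupled: {loss_coupled:.3f}")
print(f"estimated omega after homotopy: {omega:.3f}")
```

The final stage uses zero coupling, so the last estimate is a minimizer of the original, uncoupled loss over its local grid. The earlier stages only serve to move the estimate into a region where that final problem is easy.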

Bayesian neural network with pretrained protein embedding enhances prediction accuracy of drug-protein interaction

Dec 21, 2020

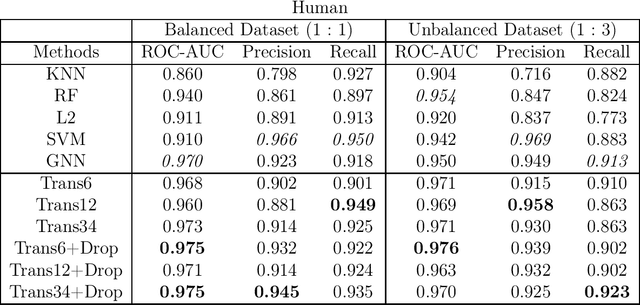

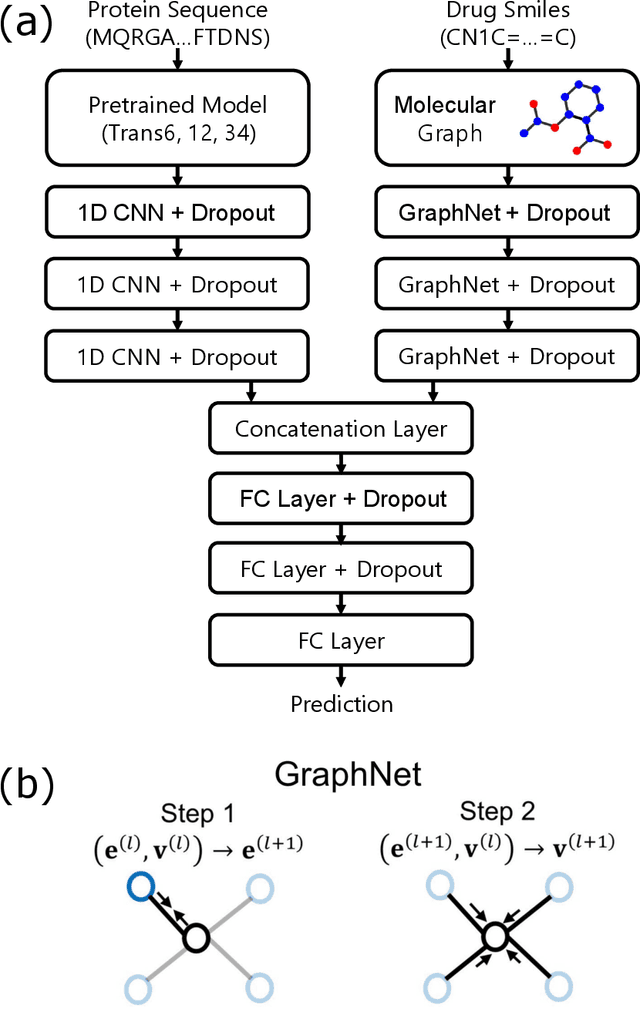

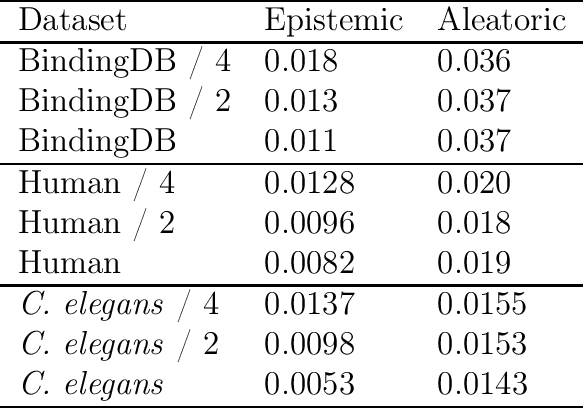

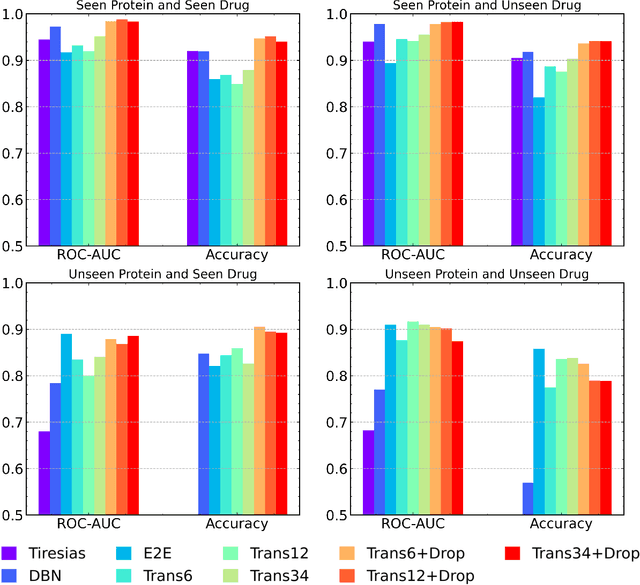

Abstract:The characterization of drug-protein interactions (DPIs) is crucial in high-throughput screening for drug discovery. Deep learning-based approaches have attracted attention because they can predict drug-protein interactions without trial-and-error by humans. However, because data labeling requires significant resources, the available protein data size is relatively small, which consequently decreases model performance. Here we propose two methods to construct a deep learning framework that exhibits superior performance with a small labeled dataset. First, we use transfer learning to encode protein sequences with a pretrained model, which learns general sequence representations in an unsupervised manner. Second, we use a Bayesian neural network to make the model robust by estimating the data uncertainty. As a result, our model performs better than the previous baselines for predicting drug-protein interactions. We also show that the quantified uncertainty from the Bayesian inference is related to the confidence and can be used for screening DPI data points.
