John Morgan

Interpretability without actionability: mechanistic methods cannot correct language model errors despite near-perfect internal representations

Mar 18, 2026

Abstract: Language models encode task-relevant knowledge in their internal representations that far exceeds their output performance, but whether mechanistic interpretability methods can bridge this knowledge-action gap has not been systematically tested. We compared four mechanistic interpretability methods -- concept bottleneck steering (Steerling-8B), sparse autoencoder (SAE) feature steering, logit lens with activation patching, and linear probing with truthfulness separator vector (TSV) steering (Qwen 2.5 7B Instruct) -- for correcting false-negative triage errors, using 400 physician-adjudicated clinical vignettes (144 hazards, 256 benign). Linear probes discriminated hazardous from benign cases with 98.2% AUROC, yet the model's output sensitivity was only 45.1%, a 53-percentage-point knowledge-action gap. Concept bottleneck steering corrected 20% of missed hazards but disrupted 53% of correct detections, an effect indistinguishable from random perturbation (p=0.84). SAE feature steering produced no effect despite 3,695 significant features. TSV steering at high strength corrected 24% of missed hazards while disrupting 6% of correct detections, but left 76% of errors uncorrected. Current mechanistic interpretability methods cannot reliably translate internal knowledge into corrected outputs, with implications for AI safety frameworks that assume interpretability enables effective error correction.
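The abstract contrasts a probe's AUROC with the model's output sensitivity and reports steering outcomes as correction and disruption rates. A minimal sketch of how such metrics could be computed, assuming binary labels (1 = hazard, 0 = benign), binary output decisions before and after a steering intervention, and that disruption is measured over hazard cases the unsteered model already caught. The function names and toy data are hypothetical and are not taken from the study.

```python
# Toy illustration only: labels, probe scores and decisions are invented.

def auroc(scores, labels):
    """Rank-based AUROC: probability that a randomly chosen positive (hazard)
    scores higher than a randomly chosen negative (benign); ties count 1/2."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def sensitivity(preds, labels):
    """Fraction of true hazards (label 1) that the output flags (pred 1)."""
    hazard_preds = [p for p, y in zip(preds, labels) if y == 1]
    return sum(hazard_preds) / len(hazard_preds)


def correction_and_disruption(before, after, labels):
    """Among hazard cases: share of misses fixed by steering (correction) and
    share of correct detections lost after steering (disruption)."""
    missed = [a for b, a, y in zip(before, after, labels) if y == 1 and b == 0]
    caught = [a for b, a, y in zip(before, after, labels) if y == 1 and b == 1]
    corrected = sum(1 for a in missed if a == 1) / len(missed)
    disrupted = sum(1 for a in caught if a == 0) / len(caught)
    return corrected, disrupted


labels = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0]                    # 1 = hazard, 0 = benign
probe_scores = [0.9, 0.8, 0.85, 0.7, 0.95, 0.75, 0.1, 0.2, 0.3, 0.4]
before = [1, 0, 1, 0, 0, 1, 0, 0, 0, 0]                    # unsteered output flags
after = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]                     # flags after steering

probe_auroc = auroc(probe_scores, labels)
sens = sensitivity(before, labels)
corrected, disrupted = correction_and_disruption(before, after, labels)
print(f"probe AUROC {probe_auroc:.2f}, output sensitivity {sens:.2f}, gap {probe_auroc - sens:.2f}")
print(f"corrected {corrected:.2f} of misses, disrupted {disrupted:.2f} of detections")
```

In the toy data the probe ranks every hazard above every benign case (AUROC 1.00) while the output catches only half of the hazards. The 53-point figure in the abstract is the same subtraction (98.2 minus 45.1); note that the first term is a threshold-free ranking measure and the second is a rate at the model's actual decision.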

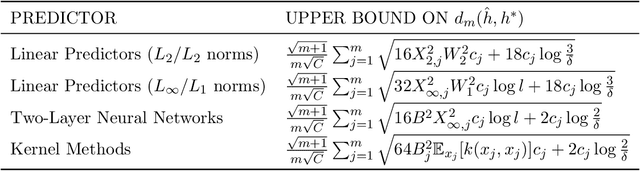

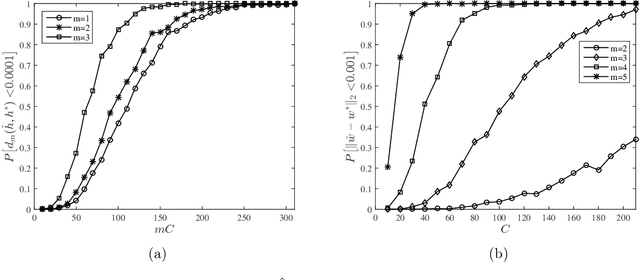

Cost-Aware Rademacher Complexity of Multiple Experiments

Oct 05, 2018

Abstract: We analyze the sample complexity of learning from multiple experiments when the experimenter has a total budget for obtaining samples. In this problem, the learner should choose a hypothesis that performs well with respect to multiple experiments and their related data distributions. Each collected sample has a cost that depends on the particular experiment. In our setup, a learner performs m experiments while incurring a total cost C. Using a Rademacher complexity approach, we show that the gap between the training and generalization error is O(C^{-1/2}). We also provide examples for linear prediction, two-layer neural networks, and kernel methods.
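For a norm-bounded linear class, the empirical Rademacher complexity has a closed-form supremum, which makes it easy to see numerically why a complexity term shrinks like C^{-1/2} when the number of samples each experiment can afford grows linearly with the budget. This is an illustration under stated assumptions, not the paper's construction: the equal split of the budget across experiments, the per-sample costs, the synthetic unit-norm inputs, and all function names are hypothetical.

```python
import math
import random


def empirical_rademacher_linear(xs, norm_bound, n_draws=100, seed=0):
    """Monte Carlo estimate of the empirical Rademacher complexity of
    {x -> <w, x> : ||w||_2 <= norm_bound} on the sample xs.

    The supremum over w has a closed form,
        sup_w (1/n) sum_i sigma_i <w, x_i> = (norm_bound / n) * ||sum_i sigma_i x_i||_2,
    so only the expectation over the random signs sigma is sampled."""
    rng = random.Random(seed)
    n, dim = len(xs), len(xs[0])
    total = 0.0
    for _ in range(n_draws):
        acc = [0.0] * dim
        for x in xs:
            sign = 1.0 if rng.random() < 0.5 else -1.0
            for k in range(dim):
                acc[k] += sign * x[k]
        total += norm_bound * math.sqrt(sum(a * a for a in acc)) / n
    return total / n_draws


def budget_to_samples(budget, costs):
    """Hypothetical allocation rule: split the budget equally across the m
    experiments, so experiment j gets floor(budget / (m * c_j)) samples."""
    m = len(costs)
    return [int(budget // (m * c)) for c in costs]


def cost_aware_complexity(budget, costs, dim=5, norm_bound=1.0, seed=0):
    """Average of the per-experiment Rademacher estimates under a total budget."""
    rng = random.Random(seed)
    estimates = []
    for n_j in budget_to_samples(budget, costs):
        if n_j == 0:
            raise ValueError("budget too small to buy one sample per experiment")
        # Synthetic unit-norm inputs for each experiment (illustration only).
        xs = []
        for _ in range(n_j):
            v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
            norm = math.sqrt(sum(a * a for a in v))
            xs.append([a / norm for a in v])
        estimates.append(empirical_rademacher_linear(xs, norm_bound, seed=seed))
    return sum(estimates) / len(estimates)


costs = [1.0, 2.0, 4.0]  # hypothetical per-sample costs for m = 3 experiments
for budget in (60, 600, 6000):
    r = cost_aware_complexity(budget, costs)
    # If r scales as C^(-1/2), then r * sqrt(C) stays roughly constant.
    print(budget, round(r, 4), round(r * math.sqrt(budget), 3))
```

A different allocation rule would change the constant but not the rate in this sketch, as long as every experiment's sample count grows in proportion to C.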
