Jittat Fakcharoenphol

Kasetsart University, Bangkok, Thailand

Stochastic Contextual Bandits with Graph-based Contexts

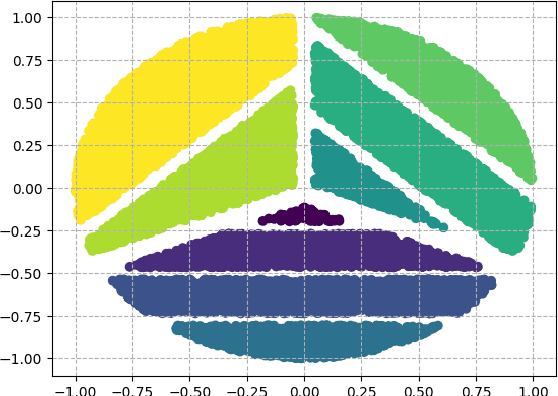

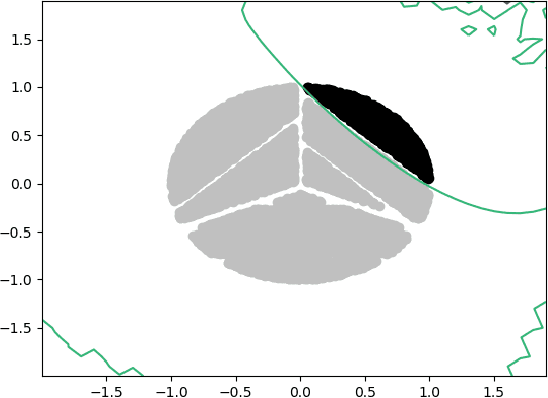

May 02, 2023

Abstract: We naturally generalize the on-line graph prediction problem to a version of stochastic contextual bandit problems where contexts are vertices in a graph and the structure of the graph provides information on the similarity of contexts. More specifically, we are given a graph $G=(V,E)$, whose vertex set $V$ represents contexts with {\em unknown} vertex label $y$. In our stochastic contextual bandit setting, vertices with the same label share the same reward distribution. The standard notion of instance difficulty in graph label prediction is the cutsize $f$, defined as the number of edges whose endpoints have different labels. For line graphs and trees we present an algorithm with a regret bound of $\tilde{O}(T^{2/3}K^{1/3}f^{1/3})$, where $K$ is the number of arms. Our algorithm relies on the optimal stochastic bandit algorithm by Zimmert and Seldin~[AISTATS'19, JMLR'21]. When the best arm outperforms the other arms, the regret improves to $\tilde{O}(\sqrt{KT\cdot f})$. The regret bound in the latter case is comparable to other optimal contextual bandit results in more general cases, but our algorithm is easy to analyze, runs very efficiently, and does not require an i.i.d. assumption on the input context sequence. The algorithm also works with general graphs using a standard random spanning tree reduction.
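The cutsize $f$ defined in the abstract can be illustrated with a minimal sketch. The function name `cutsize`, the edge-list representation, and the example labels are assumptions made for illustration only; they are not taken from the paper.

```python
def cutsize(edges, labels):
    """Count the edges whose two endpoints carry different labels."""
    return sum(1 for u, v in edges if labels[u] != labels[v])


# Hypothetical example: a path on five vertices, 0 - 1 - 2 - 3 - 4.
edges = [(0, 1), (1, 2), (2, 3), (3, 4)]
labels = {0: "a", 1: "a", 2: "b", 3: "b", 4: "a"}

# Edges (1, 2) and (3, 4) join differently labeled vertices.
print(cutsize(edges, labels))  # 2
```

A small cutsize means the labeling changes at few places along the graph, which is the sense in which the graph structure carries information about context similarity.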

Fair Resource Allocation for Demands with Sharp Lower Tail Inequalities

Jan 29, 2021

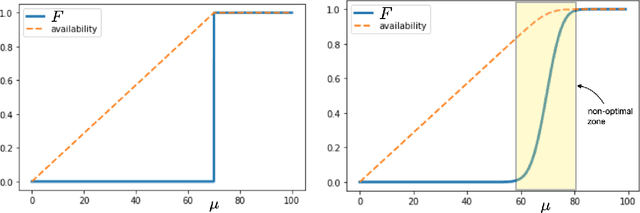

Abstract: We consider a fairness problem in resource allocation where multiple groups demand resources from a common source with a fixed total amount. The general model was introduced by Elzayn et al. [FAT*'19]. We follow Donahue and Kleinberg [FAT*'20], who considered the case when the demand distribution is known. We show that for many common demand distributions that satisfy sharp lower tail inequalities, a natural allocation that provides resources proportional to each group's average demand performs very well. More specifically, this natural allocation is approximately fair and efficient (i.e., it provides near maximum utilization). We also show that, when a small amount of unfairness is allowed, the Price of Fairness (PoF) in this case is close to 1.
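The natural allocation described in the abstract, which gives each group a share proportional to its average demand, might be sketched as follows. The function name `proportional_allocation` and the example numbers are hypothetical, and the sketch does not model the fairness or utilization measures analyzed in the paper.

```python
def proportional_allocation(mean_demands, total):
    """Split `total` among groups in proportion to their mean demands."""
    demand_sum = sum(mean_demands)
    if demand_sum <= 0:
        raise ValueError("mean demands must sum to a positive value")
    return [total * m / demand_sum for m in mean_demands]


# Hypothetical example: three groups sharing 50 units of a resource.
print(proportional_allocation([10, 30, 60], 50))  # [5.0, 15.0, 30.0]
```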

Bandit Multiclass Linear Classification for the Group Linear Separable Case

Dec 21, 2019

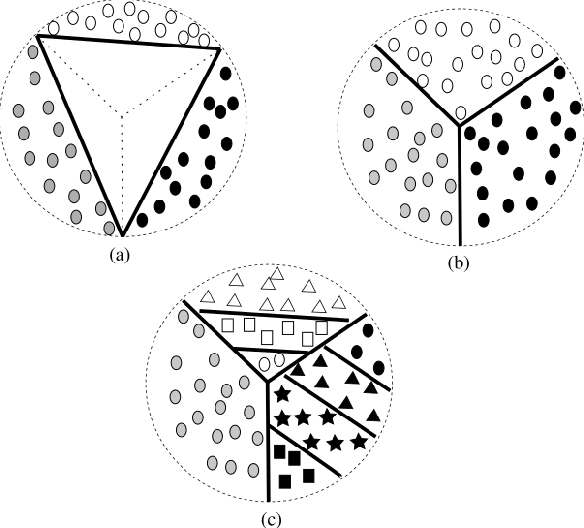

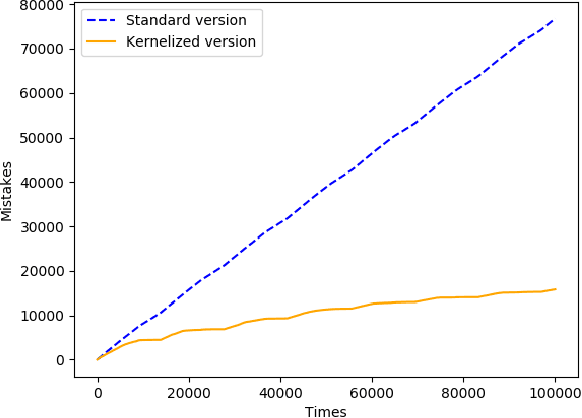

Abstract: We consider online multiclass linear classification under the bandit feedback setting. Beygelzimer, P\'{a}l, Sz\"{o}r\'{e}nyi, Thiruvenkatachari, Wei, and Zhang [ICML'19] considered two notions of linear separability, weak and strong linear separability. When examples are strongly linearly separable with margin $\gamma$, they presented an algorithm based on Multiclass Perceptron with mistake bound $O(K/\gamma^2)$, where $K$ is the number of classes. They employed the rational kernel to deal with examples under the weakly linearly separable condition, and obtained the mistake bound of $\min(K\cdot 2^{\tilde{O}(K\log^2(1/\gamma))},K\cdot 2^{\tilde{O}(\sqrt{1/\gamma}\log K)})$. In this paper, we refine the notion of weak linear separability to support the notion of class grouping, called the group weakly linearly separable condition. This situation may arise from the fact that class structures contain inherent grouping. We show that under this condition, we can also use the rational kernel and obtain the mistake bound of $K\cdot 2^{\tilde{O}(\sqrt{1/\gamma}\log L)}$, where $L\leq K$ represents the number of groups.

Learning Network Structures from Contagion

May 29, 2017

Abstract: In 2014, Amin, Heidari, and Kearns proved that tree networks can be learned by observing only the infected set of vertices of the contagion process under the independent cascade model, in both the active and passive query models. They also showed empirically that simple extensions of their algorithms work on sparse networks. In this work, we focus on the active model. We prove that a simple modification of Amin et al.'s algorithm works on more general classes of networks, namely (i) networks with large girth and low path growth rate, and (ii) networks with bounded degree. This also provides a partial theoretical explanation for Amin et al.'s experiments on sparse networks.
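For readers unfamiliar with the independent cascade model, the following is a minimal simulation sketch that returns only the final infected set, which is the observation the abstract refers to. The function name `independent_cascade`, the adjacency-dictionary representation, and the single uniform transmission probability `p` are assumptions made for illustration; this is not the learning algorithm of Amin et al. or of this paper.

```python
import random
from collections import deque


def independent_cascade(adj, seeds, p, rng=None):
    """Simulate one independent cascade and return the final infected set.

    Each newly infected vertex gets a single chance to infect each of its
    still-uninfected neighbors, succeeding independently with probability p.
    """
    rng = rng or random.Random()
    infected = set(seeds)
    frontier = deque(seeds)
    while frontier:
        u = frontier.popleft()
        for v in adj.get(u, []):
            if v not in infected and rng.random() < p:
                infected.add(v)
                frontier.append(v)
    return infected


# Hypothetical tree network: 0 is adjacent to 1 and 2, and 1 is adjacent to 3.
adj = {0: [1, 2], 1: [0, 3], 2: [0], 3: [1]}
print(independent_cascade(adj, seeds=[0], p=1.0))  # every vertex is infected
print(independent_cascade(adj, seeds=[0], p=0.0))  # only the seed is infected
```

In the active query model, the learner would choose the seed set for each run and observe the returned infected set.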

Low congestion online routing and an improved mistake bound for online prediction of graph labeling

Sep 12, 2008

Abstract: In this paper, we show a connection between a certain online low-congestion routing problem and online prediction of graph labeling. More specifically, we prove that if there exists a routing scheme that guarantees a congestion of $\alpha$ on any edge, there exists an online prediction algorithm with mistake bound $\alpha$ times the cut size, which is the size of the cut induced by the label partitioning of graph vertices. With the previously known bound of $O(\log n)$ for $\alpha$ for the routing problem on trees with $n$ vertices, we obtain an improved prediction algorithm for graphs with high effective resistance. In contrast to previous approaches that move the graph problem into problems in vector space using the graph Laplacian and rely on the analysis of the perceptron algorithm, our proofs are purely combinatorial. Furthermore, our approach directly generalizes to the case where labels are not binary.
