Chayutpong Prompak

Stochastic Contextual Bandits with Graph-based Contexts

May 02, 2023

Abstract: We naturally generalize the on-line graph prediction problem to a version of stochastic contextual bandit problems where contexts are vertices in a graph and the structure of the graph provides information on the similarity of contexts. More specifically, we are given a graph $G=(V,E)$, whose vertex set $V$ represents contexts with {\em unknown} vertex labels $y$. In our stochastic contextual bandit setting, vertices with the same label share the same reward distribution. The standard notion of instance difficulty in graph label prediction is the cutsize $f$, defined as the number of edges whose endpoints have different labels. For line graphs and trees we present an algorithm with a regret bound of $\tilde{O}(T^{2/3}K^{1/3}f^{1/3})$, where $K$ is the number of arms. Our algorithm relies on the optimal stochastic bandit algorithm by Zimmert and Seldin~[AISTATS'19, JMLR'21]. When the best arm outperforms the other arms, the regret improves to $\tilde{O}(\sqrt{KT\cdot f})$. The regret bound in the latter case is comparable to other optimal contextual bandit results in more general cases, but our algorithm is easy to analyze, runs very efficiently, and does not require an i.i.d. assumption on the input context sequence. The algorithm also works with general graphs using a standard random spanning tree reduction.
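The cutsize $f$ defined in the abstract can be illustrated with a minimal Python sketch. The function name `cutsize`, the edge-list representation, and the toy line graph are illustrative assumptions, not taken from the paper; in the bandit setting the labels are unknown to the learner, so this only shows how the quantity is defined.

```python
from typing import Hashable, Iterable, Mapping, Tuple


def cutsize(
    edges: Iterable[Tuple[Hashable, Hashable]],
    labels: Mapping[Hashable, Hashable],
) -> int:
    """Count the edges whose two endpoints carry different labels."""
    return sum(1 for u, v in edges if labels[u] != labels[v])


# Hypothetical toy instance: the line graph 0-1-2-3-4.
line_edges = [(i, i + 1) for i in range(4)]
# Hypothetical labels; vertices with the same label would share
# the same reward distribution in the setting described above.
line_labels = {0: "a", 1: "a", 2: "b", 3: "b", 4: "a"}

f = cutsize(line_edges, line_labels)
print(f)  # edges (1, 2) and (3, 4) are cut, so this prints 2
```

A small $f$ means the labels change rarely along the graph, which is the regime where the stated regret bounds are strongest.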

Bandit Multiclass Linear Classification for the Group Linear Separable Case

Dec 21, 2019

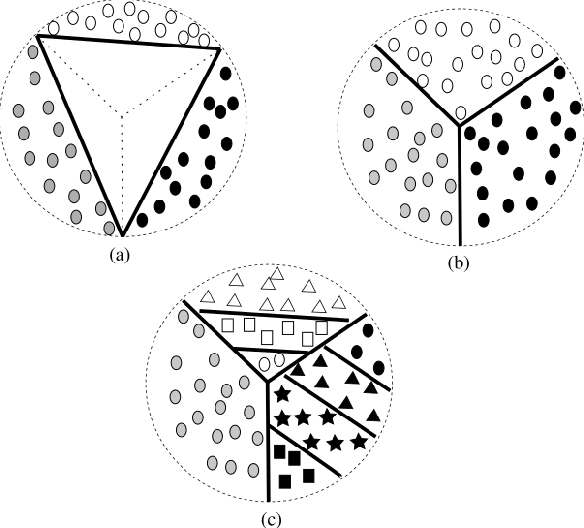

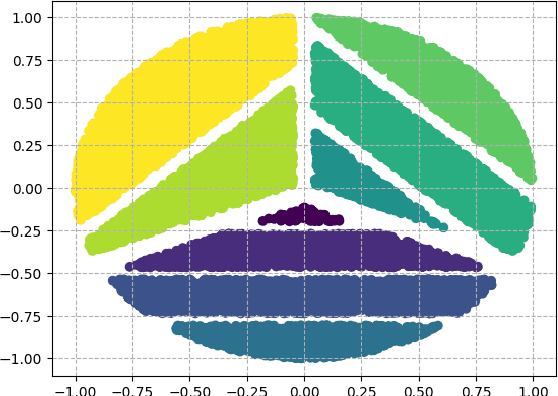

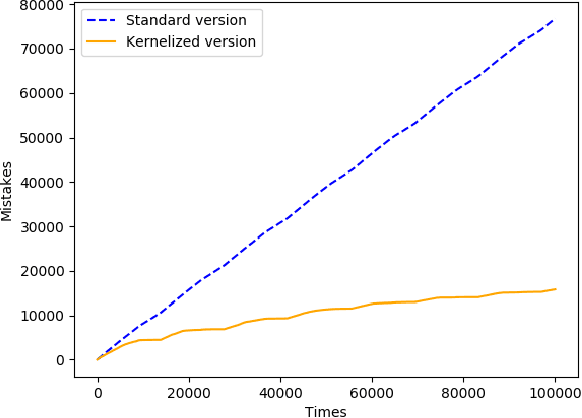

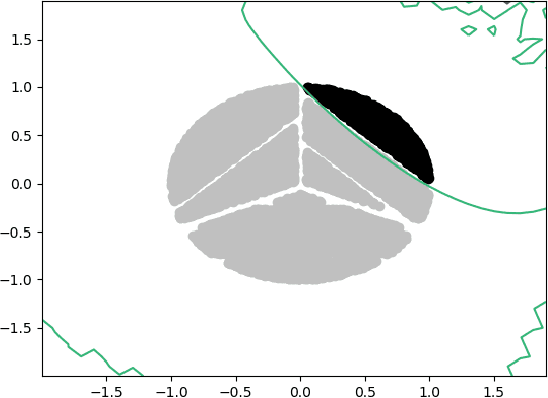

Abstract: We consider online multiclass linear classification under the bandit feedback setting. Beygelzimer, P\'{a}l, Sz\"{o}r\'{e}nyi, Thiruvenkatachari, Wei, and Zhang [ICML'19] considered two notions of linear separability: weak and strong linear separability. When examples are strongly linearly separable with margin $\gamma$, they presented an algorithm based on Multiclass Perceptron with mistake bound $O(K/\gamma^2)$, where $K$ is the number of classes. They employed a rational kernel to deal with examples under the weakly linearly separable condition, and obtained a mistake bound of $\min(K\cdot 2^{\tilde{O}(K\log^2(1/\gamma))},K\cdot 2^{\tilde{O}(\sqrt{1/\gamma}\log K)})$. In this paper, we refine the notion of weak linear separability to support class grouping, which we call the group weakly linearly separable condition. This situation may arise when the class structure contains inherent grouping. We show that under this condition, we can also use the rational kernel and obtain a mistake bound of $K\cdot 2^{\tilde{O}(\sqrt{1/\gamma}\log L)}$, where $L\leq K$ represents the number of groups.
