Javier Velez

Structural Return Maximization for Reinforcement Learning

May 12, 2014

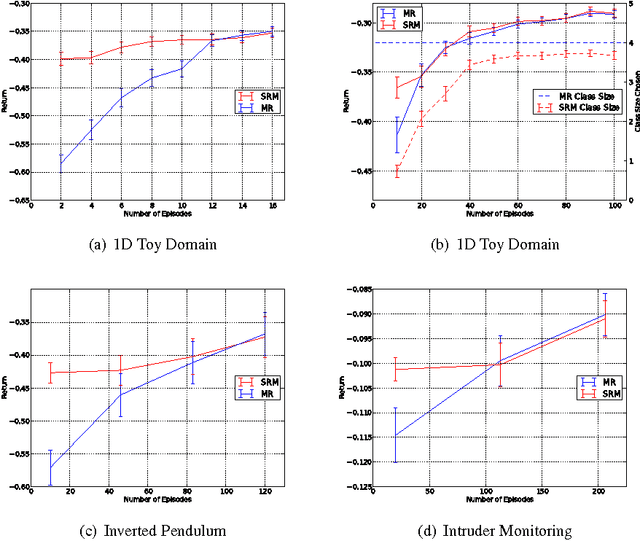

Abstract:Batch Reinforcement Learning (RL) algorithms attempt to choose a policy from a designer-provided class of policies given a fixed set of training data. Choosing the policy which maximizes an estimate of return often leads to over-fitting when only limited data is available, due to the size of the policy class in relation to the amount of data available. In this work, we focus on learning policy classes that are appropriately sized to the amount of data available. We accomplish this by using the principle of Structural Risk Minimization, from Statistical Learning Theory, which uses Rademacher complexity to identify a policy class that maximizes a bound on the return of the best policy in the chosen policy class, given the available data. Unlike similar batch RL approaches, our bound on return requires only extremely weak assumptions on the true system.

Modelling Observation Correlations for Active Exploration and Robust Object Detection

Jan 18, 2014

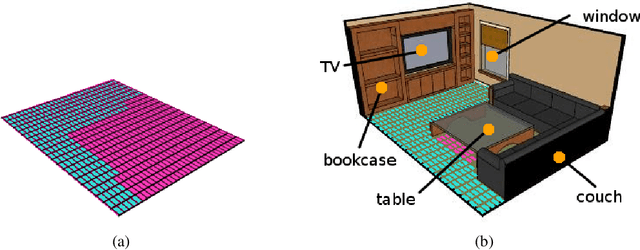

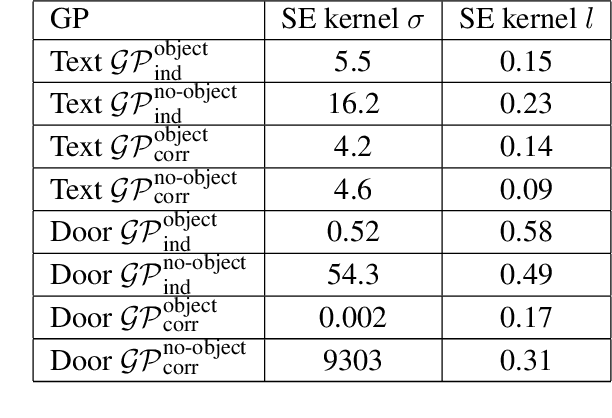

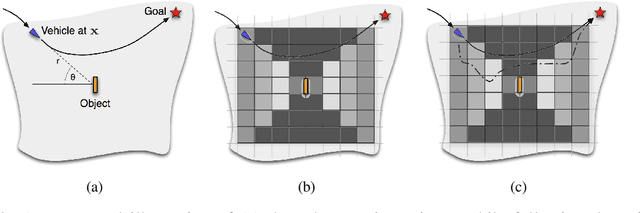

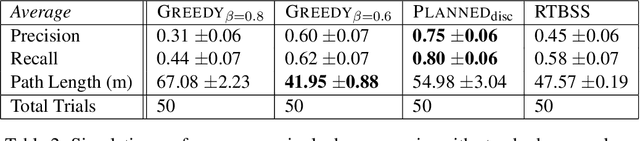

Abstract:Today, mobile robots are expected to carry out increasingly complex tasks in multifarious, real-world environments. Often, the tasks require a certain semantic understanding of the workspace. Consider, for example, spoken instructions from a human collaborator referring to objects of interest; the robot must be able to accurately detect these objects to correctly understand the instructions. However, existing object detection, while competent, is not perfect. In particular, the performance of detection algorithms is commonly sensitive to the position of the sensor relative to the objects in the scene. This paper presents an online planning algorithm which learns an explicit model of the spatial dependence of object detection and generates plans which maximize the expected performance of the detection, and by extension the overall plan performance. Crucially, the learned sensor model incorporates spatial correlations between measurements, capturing the fact that successive measurements taken at the same or nearby locations are not independent. We show how this sensor model can be incorporated into an efficient forward search algorithm in the information space of detected objects, allowing the robot to generate motion plans efficiently. We investigate the performance of our approach by addressing the tasks of door and text detection in indoor environments and demonstrate significant improvement in detection performance during task execution over alternative methods in simulated and real robot experiments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge