Jaime Carbonell

Self-Paced Multitask Learning with Shared Knowledge

Jun 19, 2017

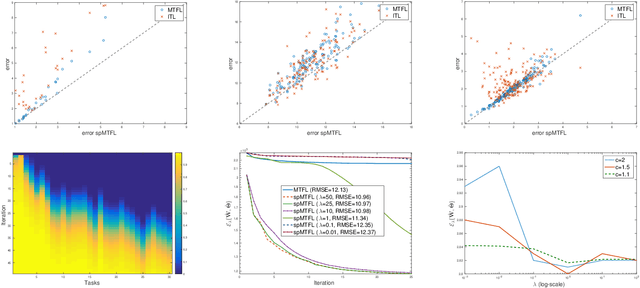

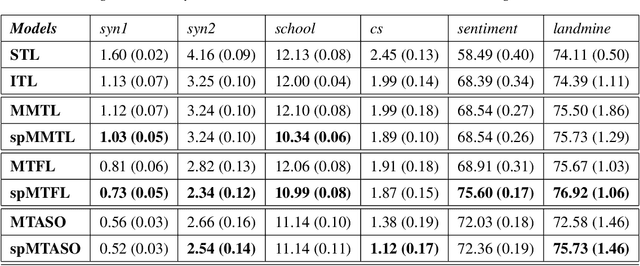

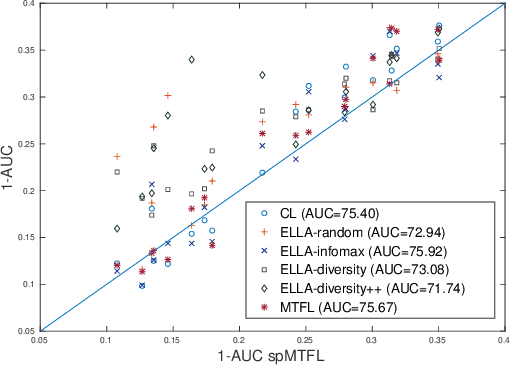

Abstract: This paper introduces self-paced task selection to multitask learning: instances from more closely related tasks are selected in a progression from easier to harder tasks, emulating an effective human education strategy in multitask machine learning. We develop the mathematical foundation for the approach, which iteratively selects the most appropriate task, learns the task parameters, and updates the shared knowledge by optimizing a new bi-convex loss function. The proposed method applies quite generally, including to multitask feature learning and multitask learning with alternating structure optimization. Results show that, in each of these formulations, self-paced (easier-to-harder) task selection outperforms the baseline version of the method in all experiments.
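
The easier-to-harder schedule can be illustrated with a small sketch. It assumes a hypothetical toy setting in which every task is a one-dimensional least-squares problem sharing a single parameter `w`, and it uses a hard loss threshold that grows each round; the paper's bi-convex loss and its multitask feature-learning variants are not reproduced here. All function names and constants are illustrative.

```python
# Sketch of an easier-to-harder task schedule (hypothetical toy setup):
# each task is a 1-D least-squares problem y ~ w * x sharing one parameter w.
# This is NOT the paper's bi-convex objective.


def task_loss(w, task):
    """Mean squared error of the shared parameter w on one task."""
    xs, ys = task
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def fit_shared(tasks, selected):
    """Closed-form least-squares w fitted on the selected tasks only."""
    num = sum(x * y for t in selected for x, y in zip(*tasks[t]))
    den = sum(x * x for t in selected for x in tasks[t][0])
    return num / den


def self_paced_schedule(tasks, w0=1.0, lam=0.5, growth=10.0, rounds=3):
    """Return the list of selected task indices for each round."""
    w, history = w0, []
    for _ in range(rounds):
        losses = [task_loss(w, t) for t in tasks]
        # "Easy" tasks are those whose current loss is below the threshold.
        selected = [i for i, loss in enumerate(losses) if loss < lam]
        if not selected:  # always keep at least the easiest task
            selected = [min(range(len(tasks)), key=losses.__getitem__)]
        w = fit_shared(tasks, selected)  # update the shared knowledge
        history.append(selected)
        lam *= growth  # admit harder tasks in later rounds
    return history


tasks = [([1, 2, 3], [1, 2, 3]),          # slope 1
         ([1, 2, 3], [1.2, 2.4, 3.6]),    # slope 1.2
         ([1, 2, 3], [3, 6, 9])]          # slope 3, the "hard" task
print(self_paced_schedule(tasks))  # -> [[0, 1], [0, 1], [0, 1, 2]]
```

The third task is admitted only once the threshold has grown, so the shared parameter is first fitted on the two closely related tasks.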

Co-Clustering for Multitask Learning

Mar 03, 2017

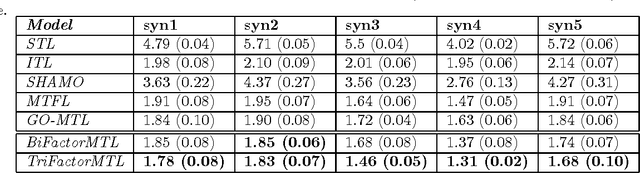

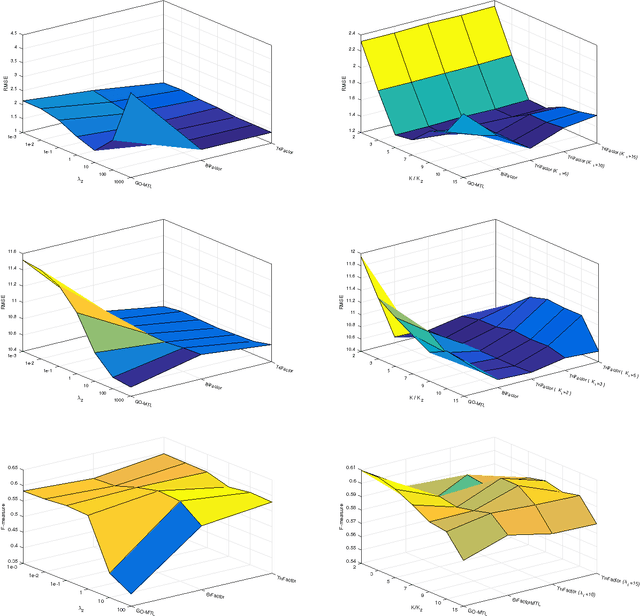

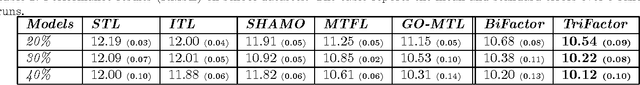

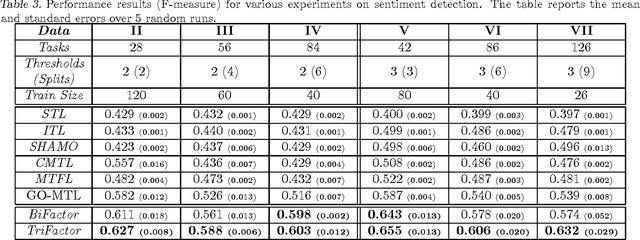

Abstract: This paper presents a new multitask learning framework that learns a shared representation among the tasks, incorporating both task and feature clusters. The jointly induced clusters yield a shared latent subspace in which task relationships are learned more effectively and more generally than in state-of-the-art multitask learning methods. The proposed general framework enables the derivation of more specific or restricted state-of-the-art multitask methods. The paper also proposes a highly scalable multitask learning algorithm, based on the new framework, using conjugate gradient descent and generalized Sylvester equations. Experimental results on synthetic and benchmark datasets show that the proposed method systematically outperforms several state-of-the-art multitask learning methods.
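
The abstract does not spell out its generalized Sylvester equations, so the following is only a sketch of the standard building block: solving A X + X B = C with conjugate gradient, under the assumption that A and B are symmetric positive definite (which makes the operator X -> A X + X B self-adjoint and positive definite). The matrices and helper names are illustrative, not taken from the paper.

```python
# Matrix-free conjugate gradient for the Sylvester equation A X + X B = C,
# assuming A and B are symmetric positive definite.


def matmul(P, Q):
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]


def sylvester_op(A, B, X):
    """Apply the linear operator L(X) = A X + X B."""
    AX, XB = matmul(A, X), matmul(X, B)
    return [[AX[i][j] + XB[i][j] for j in range(len(X[0]))] for i in range(len(X))]


def inner(P, Q):
    """Frobenius inner product <P, Q> = sum_ij P_ij * Q_ij."""
    return sum(p * q for rp, rq in zip(P, Q) for p, q in zip(rp, rq))


def axpy(alpha, P, Q):
    """Return alpha * P + Q elementwise."""
    return [[alpha * p + q for p, q in zip(rp, rq)] for rp, rq in zip(P, Q)]


def solve_sylvester_cg(A, B, C, tol=1e-10, max_iter=200):
    X = [[0.0] * len(C[0]) for _ in C]
    R = axpy(-1.0, sylvester_op(A, B, X), C)  # residual R = C - L(X)
    P = [row[:] for row in R]                 # first search direction
    rs = inner(R, R)
    for _ in range(max_iter):
        if rs < tol ** 2:
            break
        LP = sylvester_op(A, B, P)
        alpha = rs / inner(P, LP)             # exact line search along P
        X = axpy(alpha, P, X)
        R = axpy(-alpha, LP, R)
        rs_new = inner(R, R)
        P = axpy(rs_new / rs, P, R)           # P = R + beta * P
        rs = rs_new
    return X
```

In practice a library routine would normally be used (for example SciPy's `scipy.linalg.solve_sylvester`, which uses the Bartels-Stewart algorithm); the plain-Python version only makes the iteration visible.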

Multi-Task Multiple Kernel Relationship Learning

Mar 02, 2017

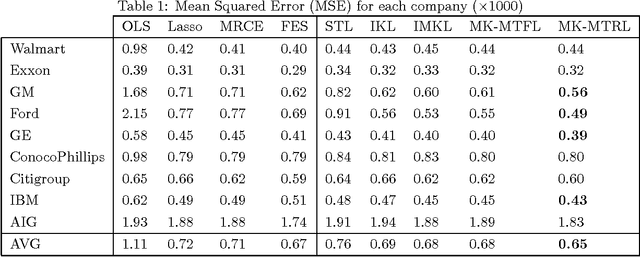

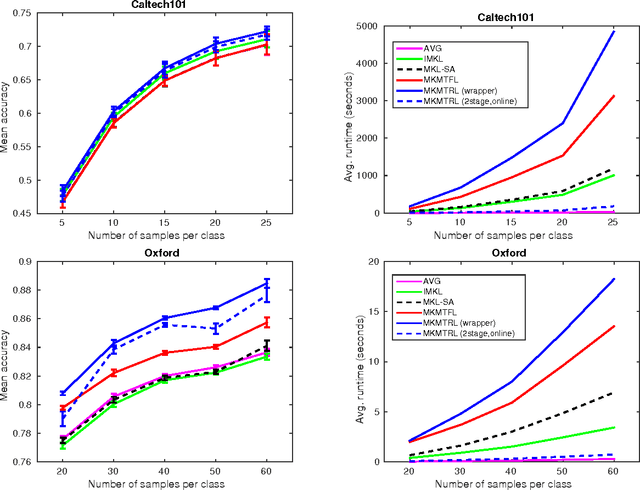

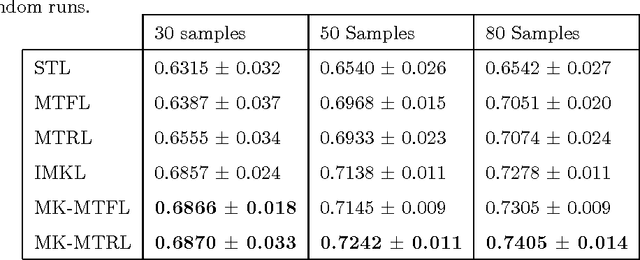

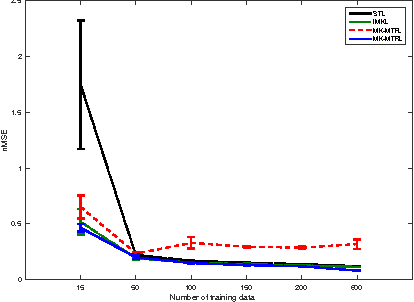

Abstract: This paper presents a novel multitask multiple kernel learning framework that efficiently learns the kernel weights by leveraging the relationship across multiple tasks. The idea is to automatically infer this task relationship in the reproducing kernel Hilbert space (RKHS) corresponding to the given base kernels. The problem is formulated as a regularization-based approach called Multi-Task Multiple Kernel Relationship Learning (MK-MTRL), which models the task relationship matrix from the weights learned from latent feature spaces of task-specific base kernels. Unlike previous work, the proposed formulation allows one to incorporate prior knowledge for simultaneously learning several related tasks. We propose an alternating minimization algorithm to learn the model parameters, kernel weights, and task relationship matrix. To tackle large-scale problems, we further propose a two-stage MK-MTRL online learning algorithm and show that it significantly reduces computational time while achieving performance comparable to that of the joint learning framework. Experimental results on benchmark datasets show that the proposed formulations outperform several state-of-the-art multitask learning methods.
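
Multiple kernel learning works with a weighted combination of base kernels. The sketch below shows only that combination step for a single task, using two hypothetical base kernels (linear and Gaussian RBF) and fixed weights projected onto the simplex; learning the weights and the task relationship matrix, which is what MK-MTRL contributes, is not shown.

```python
import math


def linear_kernel(X):
    """Gram matrix of the linear kernel k(x, y) = <x, y>."""
    return [[sum(a * b for a, b in zip(x, y)) for y in X] for x in X]


def rbf_kernel(X, gamma=1.0):
    """Gram matrix of the Gaussian kernel k(x, y) = exp(-gamma * ||x - y||^2)."""
    return [[math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y))) for y in X]
            for x in X]


def combine_kernels(kernels, weights):
    """Convex combination K = sum_m beta_m * K_m with beta on the simplex."""
    if any(w < 0 for w in weights) or sum(weights) == 0:
        raise ValueError("weights must be non-negative and not all zero")
    total = sum(weights)
    betas = [w / total for w in weights]  # normalize so the betas sum to 1
    n = len(kernels[0])
    return [[sum(b * K[i][j] for b, K in zip(betas, kernels)) for j in range(n)]
            for i in range(n)]


X = [[0.0, 0.0], [1.0, 0.0]]
K = combine_kernels([linear_kernel(X), rbf_kernel(X, gamma=1.0)], [1.0, 1.0])
```

A non-negative combination of positive semidefinite Gram matrices is itself positive semidefinite, which is why the weights are constrained to be non-negative.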

Privacy-Preserving Multi-Document Summarization

Aug 06, 2015

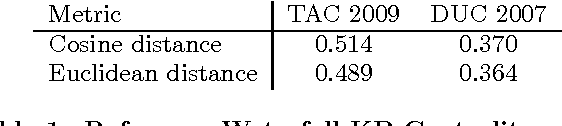

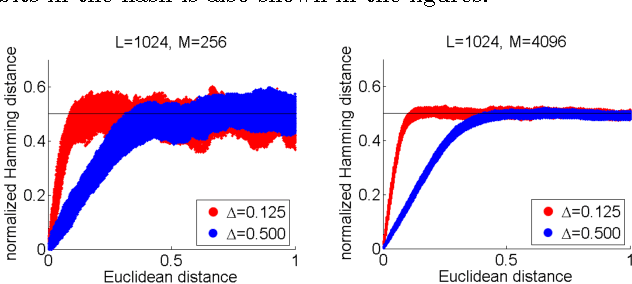

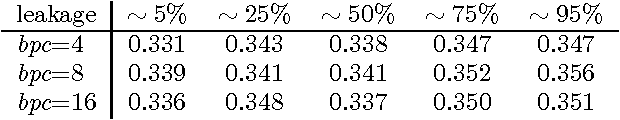

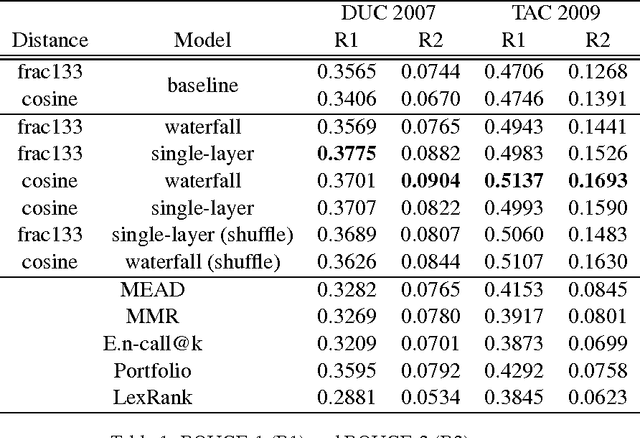

Abstract: State-of-the-art extractive multi-document summarization systems are usually designed without any concern for privacy, meaning that all documents are open to third parties. In this paper we propose a privacy-preserving approach to multi-document summarization. Our approach enables other parties to obtain summaries without learning anything else about the original documents' content. We use a hashing scheme known as Secure Binary Embeddings to convert document representations containing key phrases and bag-of-words into bit strings, allowing the computation of approximate, rather than exact, distances. Our experiments indicate that our system yields results similar to those of its non-private counterpart on standard multi-document evaluation datasets.
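
A secure binary embedding is commonly described as q(x) = Q((A x + w) / Δ), with a secret random Gaussian matrix A, a secret dither w drawn uniformly from [0, Δ), and a quantizer Q that keeps the parity of floor(·). The sketch below follows that form; the dimensions, Δ, σ, and seed are illustrative assumptions, and the feature vectors stand in for the key-phrase and bag-of-words representations used in the paper.

```python
import math
import random


def make_sbe(dim, n_bits, delta=1.0, sigma=1.0, seed=0):
    """Draw the secret parameters and return an embedding function."""
    rng = random.Random(seed)
    A = [[rng.gauss(0.0, sigma) for _ in range(dim)] for _ in range(n_bits)]
    w = [rng.uniform(0.0, delta) for _ in range(n_bits)]

    def embed(x):
        # q(x) = Q((A x + w) / delta) with Q(t) = floor(t) mod 2
        return [math.floor((sum(a * xi for a, xi in zip(row, x)) + wi) / delta) % 2
                for row, wi in zip(A, w)]

    return embed


def hamming(p, q):
    """Normalized Hamming distance between two equal-length bit lists."""
    return sum(a != b for a, b in zip(p, q)) / len(p)


embed = make_sbe(dim=4, n_bits=2000, seed=42)
x = [0.5, -1.0, 2.0, 0.0]
near = [v + 0.05 for v in x]  # Euclidean distance 0.1 from x
far = [v + 5.0 for v in x]    # Euclidean distance 10 from x
```

The normalized Hamming distance grows with the Euclidean distance only for nearby points and saturates around 0.5 for distant ones, which is why the distances computed on the bit strings are approximate.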

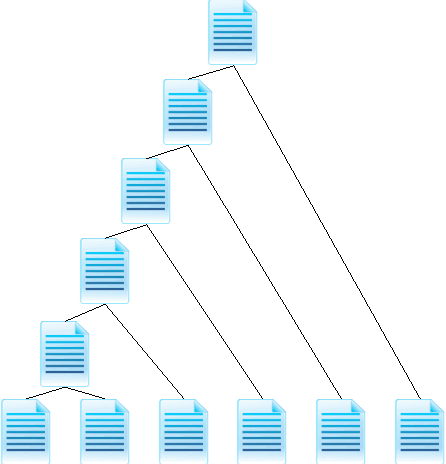

Extending a Single-Document Summarizer to Multi-Document: a Hierarchical Approach

Jul 10, 2015

Abstract: The increasing amount of online content has motivated the development of multi-document summarization methods. In this work, we explore straightforward approaches to extending single-document summarization methods to multi-document summarization. The proposed methods are based on the hierarchical combination of single-document summaries and achieve state-of-the-art results.
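
The hierarchical idea (summarize each document, then summarize the combination of those summaries) can be sketched with any single-document summarizer. The frequency-based summarizer and the simple pooling step below are hypothetical stand-ins chosen for brevity, not the methods evaluated in the paper; documents are assumed to arrive already split into sentences.

```python
from collections import Counter


def summarize(sentences, k=2):
    """Toy extractive summarizer (hypothetical).

    Scores each sentence by the average frequency of its words within the
    input and keeps the top-k sentences in their original order.
    """
    freq = Counter(w for s in sentences for w in s.lower().split())

    def score(s):
        words = s.lower().split()
        return sum(freq[w] for w in words) / max(len(words), 1)

    top = sorted(range(len(sentences)), key=lambda i: score(sentences[i]),
                 reverse=True)[:k]
    return [sentences[i] for i in sorted(top)]


def hierarchical_summarize(documents, k=2):
    """First level: one summary per document; second level: summarize the pool."""
    first_level = [s for doc in documents for s in summarize(doc, k)]
    return summarize(first_level, k)


docs = [["the cat sat", "the cat ran away", "dogs bark"],
        ["a cat slept", "the sun rose", "cats and the cat"]]
summary = hierarchical_summarize(docs, k=2)
```

Because each level only calls the single-document summarizer, any extractive method can be swapped in without changing the hierarchical wrapper.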

Bounds on the Minimax Rate for Estimating a Prior over a VC Class from Independent Learning Tasks

May 20, 2015

Abstract: We study the optimal rates of convergence for estimating a prior distribution over a VC class from a sequence of independent data sets, each labeled by an independent target function sampled from the prior. Specifically, we derive upper and lower bounds on the optimal rates under a smoothness condition on the correct prior, with the number of samples per data set equal to the VC dimension. These results have implications for the improvements achievable via transfer learning. We additionally extend this setting to real-valued functions, where we establish consistency of an estimator for the prior, and discuss an additional application to a preference elicitation problem in algorithmic economics.

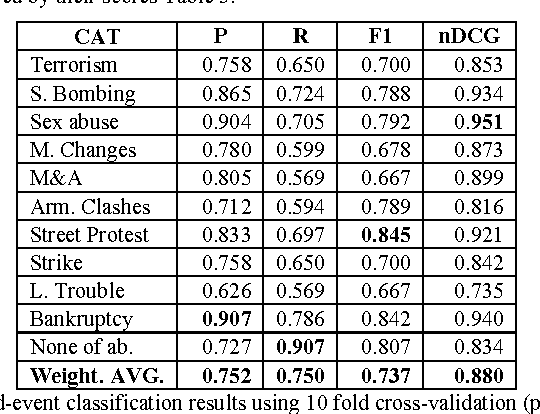

Ensemble Detection of Single & Multiple Events at Sentence-Level

Mar 24, 2014

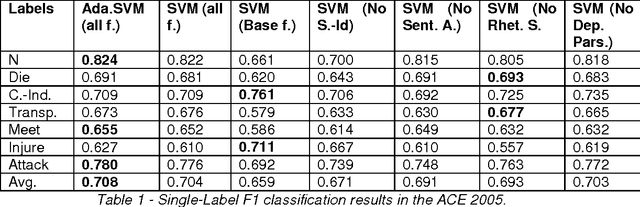

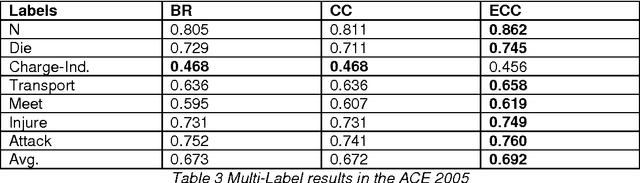

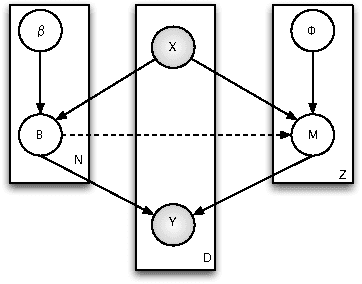

Abstract: Event classification at the sentence level is an important Information Extraction task with applications in several NLP, IR, and personalization systems. Multi-label binary relevance (BR) methods are the state of the art. In this work, we explore new multi-label methods known for capturing relations between event types. These new methods, such as the ensemble Chain of Classifiers, improve the average F1 across the 6 labels by 2.8% over Binary Relevance. The low occurrence of multi-label sentences motivated reducing the hard, imbalanced multi-label classification problem, in which few instances carry multiple labels, to a more tractable imbalanced multiclass problem, which gave better results (+4.6%). We report the results of adding new features, such as sentiment strength, rhetorical signals, domain-id (source-id and date), and key phrases, in both single-label and multi-label event classification scenarios.
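
For orientation, the sketch below contrasts two standard problem transformations: binary relevance (one binary problem per label) and label powerset (each observed label combination becomes one class of a multiclass problem). The abstract does not say exactly how the reduction to a multiclass problem was performed, so label powerset is an assumption here, and the event labels are made up.

```python
def binary_relevance(label_sets, labels):
    """One 0/1 target column per label; each column trains its own classifier."""
    return {lab: [int(lab in s) for s in label_sets] for lab in labels}


def label_powerset(label_sets):
    """Map each distinct label combination to a single multiclass target id."""
    classes, targets = {}, []
    for s in label_sets:
        key = frozenset(s)
        if key not in classes:
            classes[key] = len(classes)  # new combination -> new class id
        targets.append(classes[key])
    decode = {cid: set(key) for key, cid in classes.items()}
    return targets, decode


# Hypothetical sentence-level event labels (the empty set means "no event").
y = [{"attack"}, {"attack", "death"}, set(), {"attack"}]
br = binary_relevance(y, ["attack", "death"])
targets, decode = label_powerset(y)
```

When multi-label sentences are rare, label powerset produces only a few extra classes, which is one reason such a reduction can be more tractable than modeling every label independently.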

Co-Multistage of Multiple Classifiers for Imbalanced Multiclass Learning

Jan 24, 2014

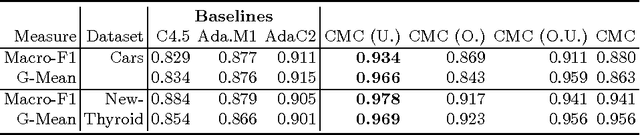

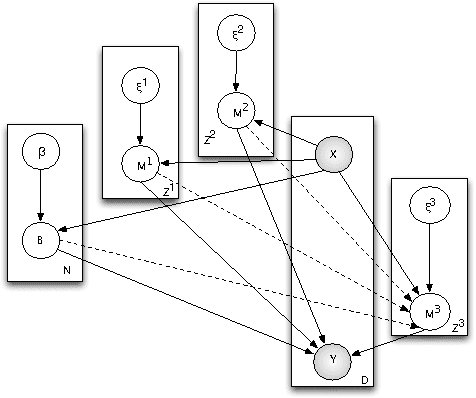

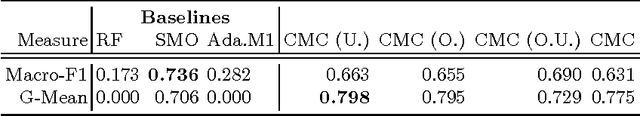

Abstract: In this work, we propose two stochastic architectural models (CMC and CMC-M) with two layers of classifiers, applicable to datasets with one or multiple skewed classes. This distinction becomes important when datasets have a large number of classes. We therefore present a novel solution to imbalanced multiclass learning with several skewed majority classes, which improves the identification of minority classes. This is particularly important for text classification tasks such as event detection. Combined with pre-processing sampling techniques, our models improved the classification results on six well-known datasets. Finally, we also introduce a new metric, SG-Mean, to overcome the multiplication-by-zero limitation of G-Mean.
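
The multiplication-by-zero limitation can be seen directly: G-Mean is the geometric mean of per-class recalls, so a single class with zero recall makes it zero regardless of the other classes. The abstract does not define SG-Mean, so the additive-smoothing variant below is a hypothetical illustration of one way around the problem, not the paper's formula.

```python
def per_class_recall(y_true, y_pred):
    """Recall of each class present in y_true, in sorted class order."""
    recalls = []
    for c in sorted(set(y_true)):
        support = sum(1 for t in y_true if t == c)
        hits = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        recalls.append(hits / support)
    return recalls


def g_mean(recalls):
    """Geometric mean of per-class recalls; zero if any recall is zero."""
    prod = 1.0
    for r in recalls:
        prod *= r
    return prod ** (1.0 / len(recalls))


def smoothed_g_mean(recalls, delta=0.01):
    """Hypothetical additive smoothing; NOT necessarily the paper's SG-Mean."""
    prod = 1.0
    for r in recalls:
        prod *= r + delta
    return prod ** (1.0 / len(recalls)) - delta


y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 0, 1, 0, 1, 1]            # class 2 is never predicted correctly
recalls = per_class_recall(y_true, y_pred)  # -> [1.0, 0.5, 0.0]
```

Here `g_mean(recalls)` is 0.0 even though two of the three classes are recognized, while the smoothed variant still distinguishes this classifier from one that fails on every class.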

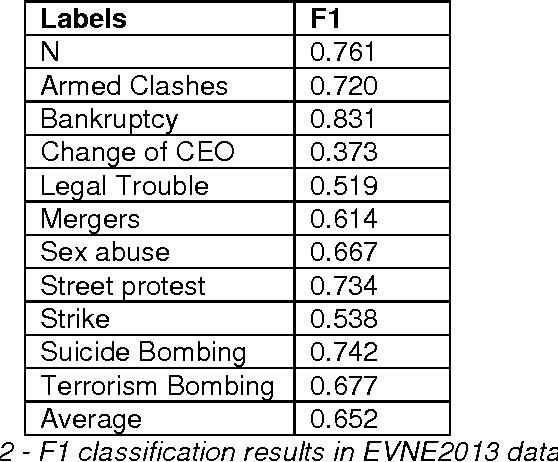

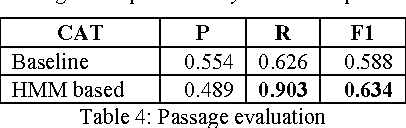

Recognition of Named-Event Passages in News Articles

Jun 20, 2013

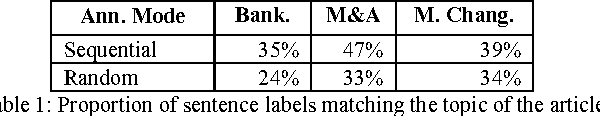

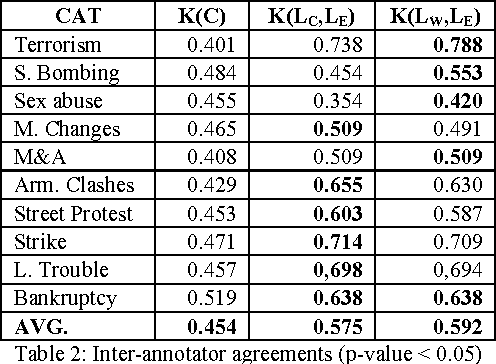

Abstract: We extend the concept of Named Entities to Named Events: commonly occurring events such as battles and earthquakes. We propose a method for finding specific passages in news articles that contain information about such events, and we report preliminary evaluation results. Collecting "Gold Standard" data presents many problems, both practical and conceptual. We present a method for obtaining such data using the Amazon Mechanical Turk service.

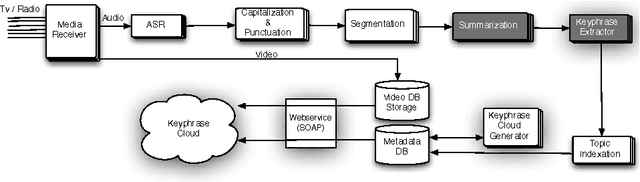

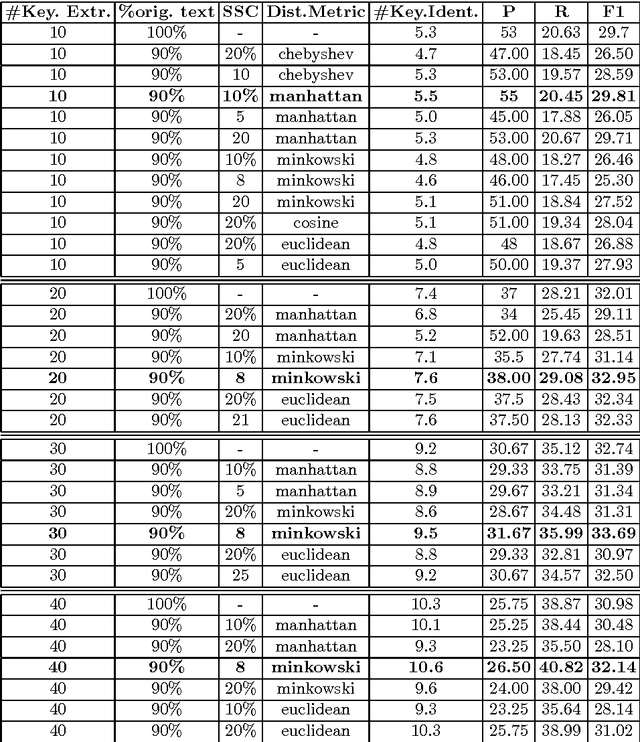

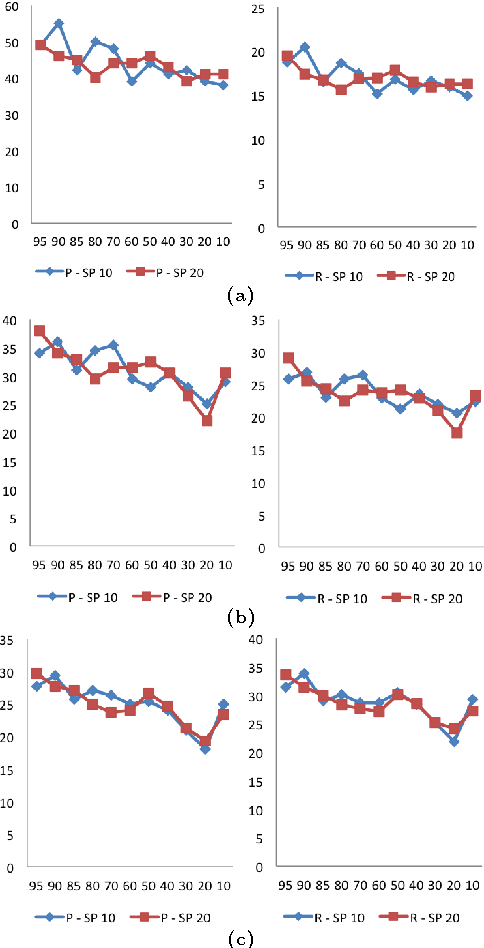

Key Phrase Extraction of Lightly Filtered Broadcast News

Jun 20, 2013

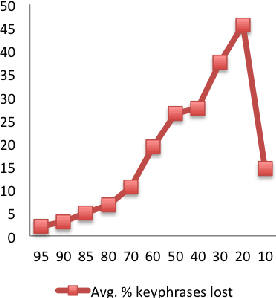

Abstract: This paper explores the impact of light filtering on automatic key phrase extraction (AKE) applied to Broadcast News (BN). Key phrases are words and expressions that best characterize the content of a document. They are often used to index the document or as features in further processing, which makes improvements in AKE accuracy particularly important. We hypothesized that filtering out marginally relevant sentences from a document would improve AKE accuracy, and our experiments confirmed this hypothesis: eliminating as little as 10% of the document sentences led to a 2% improvement in AKE precision and recall. Our AKE system is built on the MAUI toolkit, which follows a supervised learning approach. We trained and tested our AKE method on a gold standard made of 8 BN programs containing 110 manually annotated news stories. The experiments were conducted within a Multimedia Monitoring Solution (MMS) system for TV and radio news and programs that runs daily and monitors 12 TV and 4 radio channels.
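
The abstract does not say how marginal relevance is scored, so the sketch below takes per-sentence relevance scores as a given, hypothetical input and shows only the light-filtering step (dropping the lowest-scoring 10% of sentences) and a set-based precision and recall for extracted key phrases. The MAUI-based extraction itself is not reproduced.

```python
def light_filter(sentences, scores, drop_fraction=0.1):
    """Drop the lowest-scoring fraction of sentences, keeping original order."""
    n_drop = int(len(sentences) * drop_fraction)
    if n_drop == 0:
        return list(sentences)
    dropped = set(sorted(range(len(sentences)), key=lambda i: scores[i])[:n_drop])
    return [s for i, s in enumerate(sentences) if i not in dropped]


def precision_recall(predicted, gold):
    """Set-based precision and recall of extracted key phrases."""
    pred, ref = set(predicted), set(gold)
    tp = len(pred & ref)
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(ref) if ref else 0.0
    return precision, recall


sentences = [f"s{i}" for i in range(10)]
scores = [0.9, 0.8, 0.1, 0.7, 0.6, 0.5, 0.95, 0.4, 0.3, 0.85]  # hypothetical
kept = light_filter(sentences, scores)  # drops "s2", the least relevant sentence
p, r = precision_recall(["election", "weather", "news"],
                        ["election", "weather", "sports", "economy"])
```

In the paper's setting, the filtered sentences would be passed to the key phrase extractor, and the precision and recall would be compared against the unfiltered baseline.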
