Jack O. Burns

How to Deploy a 10-km Interferometric Radio Telescope on the Moon with Just Four Tethered Robots

Sep 06, 2022

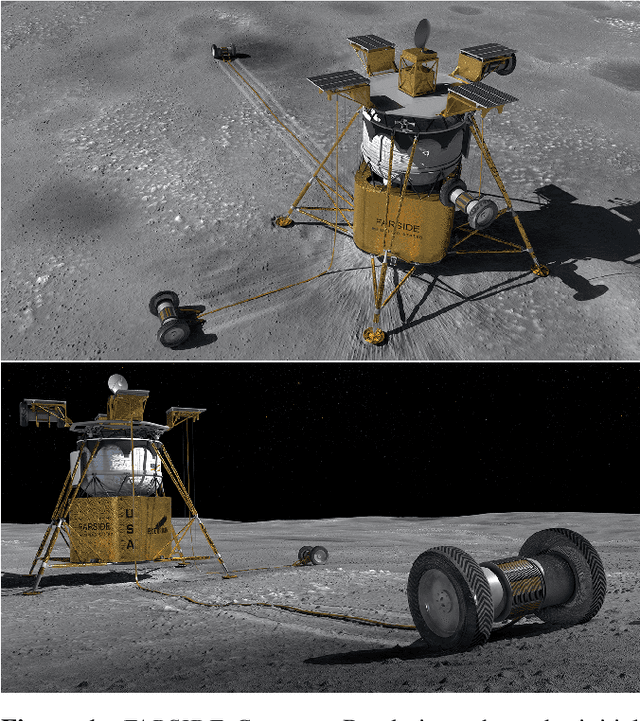

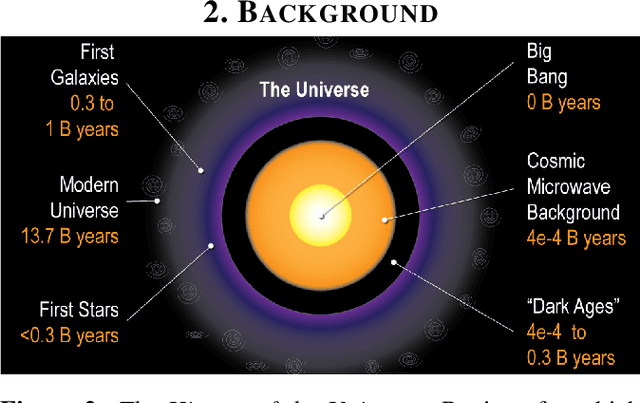

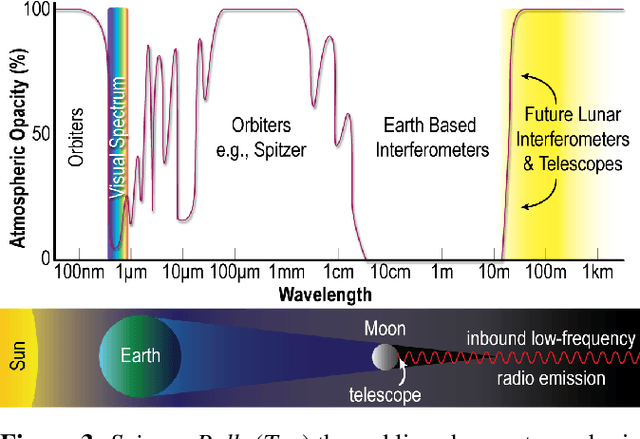

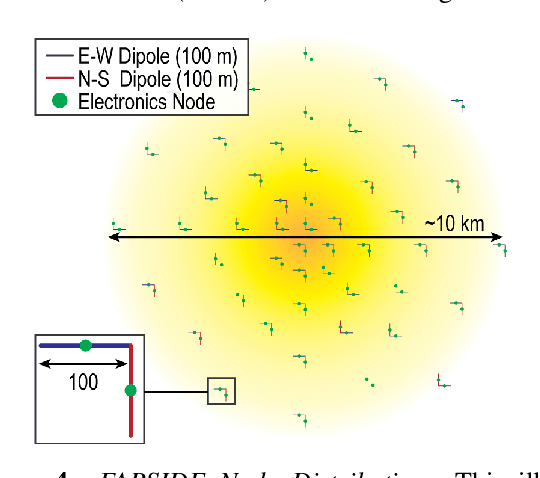

Abstract: The Far-side Array for Radio Science Investigations of the Dark ages and Exoplanets (FARSIDE) is a proposed mission concept to the lunar far side that seeks to deploy and operate an array of 128 dual-polarization dipole antennas over a region of 100 square kilometers. The resulting interferometric radio telescope would provide unprecedented radio images of distant star systems, enabling investigation of faint radio signatures of coronal mass ejections and energetic particle events, and could also lead to the detection of magnetospheres around exoplanets within their parent stars' habitable zones. Simultaneously, FARSIDE would probe the "Dark Ages" of the early Universe by measuring the global 21-cm signal across a range of redshifts (z approximately 50-100). Each discrete antenna node in the array is connected to a central hub (located at the lander) via a communication and power tether. Nodes are driven by cold-operable electronics that continuously monitor an extremely wide band of frequencies (200 kHz to 40 MHz), surpassing the capabilities of Earth-based telescopes by two orders of magnitude. Achieving this ground-breaking capability requires a robust deployment strategy on the lunar surface that is feasible with existing, high-TRL technologies (demonstrated or under active development) and that can be delivered to the surface on next-generation commercial landers, such as Blue Origin's Blue Moon Lander. This paper presents an antenna packaging, placement, and surface deployment trade study that leverages recent advances in tethered mobile robots under development at NASA's Jet Propulsion Laboratory, which are used to deploy a flat, antenna-embedded tape tether with optical communication and power transmission capabilities.

* 8 pages, 17 figures, IEEE Aerospace Conference Proceedings, 2021
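The quoted band can be tied to the Dark Ages target with the standard redshift relation f_obs = f_rest / (1 + z), where f_rest is about 1420.406 MHz, the rest frequency of the neutral-hydrogen 21-cm line. The short Python sketch below assumes only that relation and the figures stated in the abstract (128 antennas, 200 kHz to 40 MHz). It checks that z = 50 to 100 falls inside the band and counts the distinct antenna pairs (baselines) such an array would correlate, ignoring autocorrelations and treating each polarization separately. It is an illustrative back-of-the-envelope check, not the paper's own analysis.

```python
# Rest-frame frequency of the neutral-hydrogen 21-cm hyperfine line, in MHz.
HI_REST_FREQ_MHZ = 1420.405751768

# Band edges quoted in the FARSIDE abstract, in MHz.
BAND_LOW_MHZ = 0.2    # 200 kHz
BAND_HIGH_MHZ = 40.0  # 40 MHz


def observed_freq_mhz(z: float) -> float:
    """Frequency at which a 21-cm photon emitted at redshift z is observed."""
    return HI_REST_FREQ_MHZ / (1.0 + z)


def in_band(freq_mhz: float) -> bool:
    """True if freq_mhz lies within the quoted 200 kHz to 40 MHz band."""
    return BAND_LOW_MHZ <= freq_mhz <= BAND_HIGH_MHZ


def num_baselines(n_antennas: int) -> int:
    """Number of distinct antenna pairs, i.e. cross-correlation baselines."""
    return n_antennas * (n_antennas - 1) // 2


if __name__ == "__main__":
    for z in (50, 100):
        f = observed_freq_mhz(z)
        print(f"z = {z:3d}: {f:.2f} MHz, in band: {in_band(f)}")
    print("baselines for 128 antennas:", num_baselines(128))
```

Running it reports about 27.85 MHz for z = 50 and 14.06 MHz for z = 100, both inside the band, and 8128 baselines for 128 antennas.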

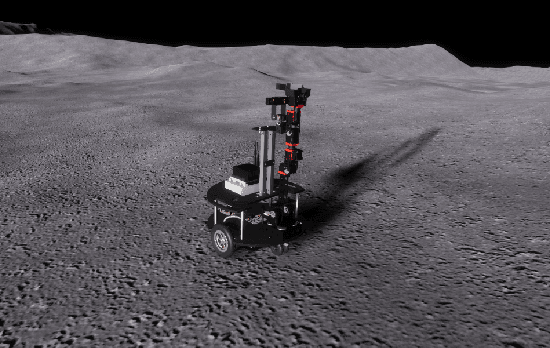

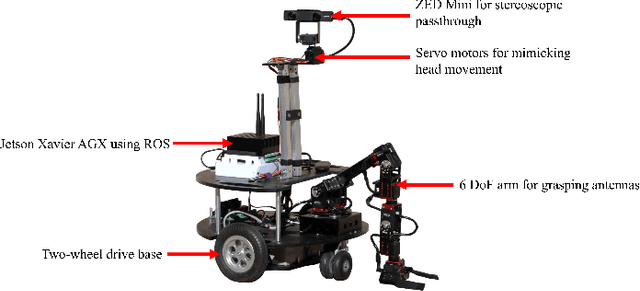

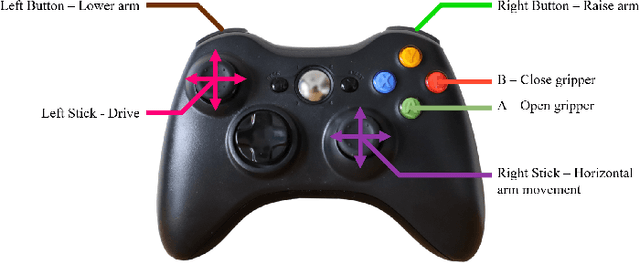

Virtual Reality Digital Twin and Environment for Troubleshooting Lunar-based Infrastructure Assembly Failures

Mar 05, 2022

Abstract: Humans and robots will need to collaborate to create a sustainable human lunar presence by the end of the 2020s. This includes cases in which a human must teleoperate an autonomous rover that has encountered an instrument assembly failure. To aid teleoperators in the troubleshooting process, we propose a virtual reality digital twin placed in a simulated environment. Here, the operator can virtually interact with a digital version of the rover and its mechanical arm that uses the same controls and kinematic model as the physical system. The user can also adopt the egocentric view (a first-person view using stereoscopic passthrough) and the exocentric view (a third-person view in which the operator can virtually walk around the environment and rover as if on site). We also discuss our metrics for evaluating the differences between our digital and physical robots, the experimental concept based on real and applicable missions, and future work that would compare our platform to traditional troubleshooting methods.
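The abstract does not specify the arm's geometry or the exact metrics used. As a purely hypothetical illustration of one way to quantify twin-versus-hardware differences when both share a kinematic model, the Python sketch below assumes a planar two-link arm with made-up link lengths (`LINK_LENGTHS`). It runs the same forward-kinematics function on joint angles logged from the digital twin and from the physical arm, then reports the root-mean-square end-effector position error. All names and numbers here are assumptions for illustration, not the authors' metric.

```python
import math
from typing import Sequence, Tuple

# Hypothetical link lengths in meters for a planar two-link arm; the real
# rover arm's geometry is not given in the abstract.
LINK_LENGTHS = (0.5, 0.4)


def forward_kinematics(joint_angles: Sequence[float],
                       links: Sequence[float] = LINK_LENGTHS) -> Tuple[float, float]:
    """End-effector (x, y) of a planar serial arm.

    Angles are in radians, each measured relative to the previous link.
    """
    x = y = 0.0
    theta = 0.0
    for angle, length in zip(joint_angles, links):
        theta += angle  # accumulate absolute link orientation
        x += length * math.cos(theta)
        y += length * math.sin(theta)
    return x, y


def rms_position_error(twin_traj: Sequence[Sequence[float]],
                       physical_traj: Sequence[Sequence[float]]) -> float:
    """RMS end-effector distance between paired twin and physical joint samples."""
    if len(twin_traj) != len(physical_traj) or not twin_traj:
        raise ValueError("trajectories must be non-empty and the same length")
    total_sq = 0.0
    for q_twin, q_phys in zip(twin_traj, physical_traj):
        xt, yt = forward_kinematics(q_twin)
        xp, yp = forward_kinematics(q_phys)
        total_sq += (xt - xp) ** 2 + (yt - yp) ** 2
    return math.sqrt(total_sq / len(twin_traj))
```

For example, `rms_position_error([(0.0, 0.0)], [(math.pi / 2, 0.0)])` compares an arm fully extended along x with one rotated 90 degrees and returns about 1.273 m (0.9 * sqrt(2)). Because twin and hardware share one kinematic function here, any nonzero error reflects differences in the joint data rather than in the model.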