J. Webster Stayman

Volumetric Material Decomposition Using Spectral Diffusion Posterior Sampling with a Compressed Polychromatic Forward Model

Mar 28, 2025

Abstract: We have previously introduced Spectral Diffusion Posterior Sampling (Spectral DPS) as a framework for accurate one-step material decomposition by integrating analytic spectral system models with priors learned from large datasets. This work extends the 2D Spectral DPS algorithm to 3D by addressing potentially limiting large-memory requirements with a pre-trained 2D diffusion model for slice-by-slice processing and a compressed polychromatic forward model to ensure accurate physical modeling. Simulation studies demonstrate that the proposed memory-efficient 3D Spectral DPS enables material decomposition of clinically significant volume sizes. Quantitative analysis reveals that Spectral DPS outperforms other deep-learning algorithms, such as InceptNet and conditional DDPM, in contrast quantification, inter-slice continuity, and resolution preservation. This study establishes a foundation for advancing one-step material decomposition in volumetric spectral CT.
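
As a rough illustration of what a polychromatic forward model computes, the sketch below evaluates the mean signal for a single ray as a spectrum-weighted sum of exponentials over two basis materials. The `rebin` helper shows one simple way to shrink the energy dimension (merging adjacent energy bins); this is an assumption chosen for illustration and is not necessarily the compression scheme used in the paper. All function names and numeric values are hypothetical.

```python
import math

def mean_signal(spectrum, atten, path_lengths):
    """Mean signal for one ray: sum_E S(E) * exp(-sum_k mu_k(E) * l_k)."""
    total = 0.0
    for e, weight in enumerate(spectrum):
        line_integral = sum(mu[e] * l for mu, l in zip(atten, path_lengths))
        total += weight * math.exp(-line_integral)
    return total

def rebin(spectrum, atten, bin_size):
    """Merge adjacent energy bins, using spectrum-weighted mean attenuation."""
    coarse_spectrum = []
    coarse_atten = [[] for _ in atten]
    for start in range(0, len(spectrum), bin_size):
        idx = range(start, min(start + bin_size, len(spectrum)))
        weight = sum(spectrum[i] for i in idx)
        coarse_spectrum.append(weight)
        for k, mu in enumerate(atten):
            coarse_atten[k].append(sum(spectrum[i] * mu[i] for i in idx) / weight)
    return coarse_spectrum, coarse_atten

# Made-up illustrative values, not measured data.
spectrum = [0.1, 0.3, 0.4, 0.2]      # normalized spectral weight per energy bin
atten = [
    [0.30, 0.23, 0.20, 0.18],        # "water" attenuation per energy bin
    [12.0, 6.0, 3.5, 2.2],           # "iodine" attenuation per energy bin
]
path_lengths = [10.0, 0.02]          # material line integrals along the ray

fine = mean_signal(spectrum, atten, path_lengths)
coarse_spectrum, coarse_atten = rebin(spectrum, atten, bin_size=2)
coarse = mean_signal(coarse_spectrum, coarse_atten, path_lengths)
print(f"4-bin model: {fine:.5f}   2-bin model: {coarse:.5f}")
```

Fewer energy bins mean fewer exponentials per ray and less memory, at the cost of a small modeling error that grows with the attenuation spread inside each merged bin.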

CTorch: PyTorch-Compatible GPU-Accelerated Auto-Differentiable Projector Toolbox for Computed Tomography

Mar 20, 2025

Abstract: This work introduces CTorch, a PyTorch-compatible, GPU-accelerated, and auto-differentiable projector toolbox designed to handle various CT geometries with configurable projector algorithms. CTorch provides flexible scanner geometry definition, supporting 2D fan-beam, 3D circular cone-beam, and 3D non-circular cone-beam geometries. Each geometry allows view-specific definitions to accommodate variations during scanning. Both flat- and curved-detector models may be specified to accommodate various clinical devices. CTorch implements four projector algorithms: voxel-driven, ray-driven, distance-driven (DD), and separable footprint (SF), allowing users to balance accuracy and computational efficiency based on their needs. All the projectors are primarily built using CUDA C for GPU acceleration, then compiled as Python-callable functions, and wrapped as PyTorch network modules. This design allows direct use of PyTorch tensors, enabling seamless integration into PyTorch's auto-differentiation framework. These features make CTorch a flexible and efficient tool for CT imaging research, with potential applications in accurate CT simulations, efficient iterative reconstruction, and advanced deep-learning-based CT reconstruction.
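
The idea behind an auto-differentiable projector can be sketched without any CT library: for a linear projector, the gradient of a data-fit loss with respect to the image is the backprojection of the residual. The toy example below uses a small hand-written system matrix and checks that gradient against finite differences. It does not use or reproduce CTorch's API; every name and value here is hypothetical.

```python
def forward_project(A, x):
    """Apply the system matrix: one weighted sum of pixels per ray."""
    return [sum(a * v for a, v in zip(row, x)) for row in A]

def back_project(A, r):
    """Apply the transpose of the system matrix to ray-domain data."""
    return [sum(A[i][j] * r[i] for i in range(len(A))) for j in range(len(A[0]))]

def data_loss(A, x, y):
    """Least-squares data fit 0.5 * ||A x - y||^2."""
    return 0.5 * sum((p - m) ** 2 for p, m in zip(forward_project(A, x), y))

def data_loss_grad(A, x, y):
    """Gradient of the data fit: A^T (A x - y)."""
    residual = [p - m for p, m in zip(forward_project(A, x), y)]
    return back_project(A, residual)

# Toy 2x2 image (flattened) seen by five rays; values are illustrative only.
A = [[1.0, 1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0, 1.0],
     [1.0, 0.0, 1.0, 0.0],
     [0.0, 1.0, 0.0, 1.0],
     [1.4, 0.0, 0.0, 1.4]]
x = [0.2, 0.5, 0.1, 0.9]
y = [1.0, 0.8, 0.4, 1.5, 1.2]

analytic = data_loss_grad(A, x, y)

# Central finite differences as an independent check of the gradient.
eps = 1e-6
numeric = []
for j in range(len(x)):
    hi = x[:]
    lo = x[:]
    hi[j] += eps
    lo[j] -= eps
    numeric.append((data_loss(A, hi, y) - data_loss(A, lo, y)) / (2 * eps))

print(max(abs(a - n) for a, n in zip(analytic, numeric)))
```

A framework-integrated projector would typically register the forward and transpose operations as a matched pair so that automatic differentiation can propagate gradients through them.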

Deep Learning CT Image Restoration using System Blur and Noise Models

Jul 20, 2024

Abstract: The restoration of images affected by blur and noise has been widely studied and has broad potential for applications, including in medical imaging modalities like computed tomography (CT). Although the blur and noise in CT images can be attributed to a variety of system factors, these image properties can often be modeled and predicted accurately and used in classical restoration approaches for deconvolution and denoising. In classical approaches, simultaneous deconvolution and denoising can be challenging and often represent competing goals. Recently, deep learning approaches have demonstrated the potential to enhance image quality beyond classic limits; however, most deep learning models attempt a blind restoration problem and base their restoration on image inputs alone without direct knowledge of the image noise and blur properties. In this work, we present a method that leverages both degraded image inputs and a characterization of the system blur and noise to combine modeling and deep learning approaches. Different methods to integrate these auxiliary inputs are presented, namely an input-variant and a weight-variant approach, wherein the auxiliary inputs are incorporated as a parameter vector before and after the convolutional block, respectively, allowing easy integration into any CNN architecture. The proposed model shows superior performance compared to baseline models lacking auxiliary inputs. Evaluations are based on the average Peak Signal-to-Noise Ratio (PSNR), selected examples of good and poor performance for varying approaches, and an input space analysis to assess the effect of different noise and blur on performance. Results demonstrate the efficacy of providing a deep learning model with auxiliary inputs, representing system blur and noise characteristics, to enhance the performance of the model in image restoration tasks.
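
For reference, the PSNR used in the evaluation follows the standard definition, 10 log10(range^2 / MSE). A minimal sketch is given below; the function name, the flattened-list image representation, and the sample values are assumptions for illustration.

```python
import math

def psnr(reference, estimate, data_range):
    """Peak signal-to-noise ratio in dB between two equally sized pixel lists."""
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0.0:
        return math.inf  # identical images
    return 10.0 * math.log10(data_range ** 2 / mse)

# Illustrative 4-pixel "images" with intensities in [0, 1].
reference = [0.0, 0.5, 1.0, 0.5]
restored = [0.1, 0.5, 0.9, 0.5]
print(f"{psnr(reference, restored, data_range=1.0):.2f} dB")
```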

Strategies for CT Reconstruction using Diffusion Posterior Sampling with a Nonlinear Model

Jul 17, 2024

Abstract: Diffusion Posterior Sampling (DPS) is a novel framework that permits nonlinear CT reconstruction by integrating a diffusion prior and an analytic physical system model, allowing for one-time training for different applications. However, baseline DPS can struggle with large variability, hallucinations, and slow reconstruction. This work introduces a number of strategies designed to enhance the stability and efficiency of DPS CT reconstruction. Specifically, jumpstart sampling allows one to skip many reverse time steps, significantly reducing the reconstruction time as well as the sampling variability. Additionally, the likelihood update is modified to simplify the Jacobian computation and improve data consistency more efficiently. Finally, a hyperparameter sweep is conducted to investigate the effects of parameter tuning and to optimize the overall reconstruction performance. Simulation studies demonstrated that the proposed DPS technique achieves up to 46.72% PSNR and 51.50% SSIM enhancement in a low-mAs setting, and a variability reduction of over 31.43% in a sparse-view setting. Moreover, reconstruction time is reduced from >23.5 s/slice to <1.5 s/slice. In a physical data study, the proposed DPS exhibits robustness on an anthropomorphic phantom reconstruction which does not strictly follow the prior distribution. Quantitative analysis demonstrates that the proposed DPS can accommodate various dose levels and numbers of views. With 10% dose, reductions of only 5.60% in PSNR and 4.84% in SSIM were observed for the proposed approach. Both simulation and phantom studies demonstrate that the proposed method can significantly improve reconstruction accuracy and reduce computational costs, greatly enhancing the practicality of DPS CT reconstruction.
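
One plausible reading of jumpstart sampling is sketched below: instead of starting the reverse process from pure noise at the final time step, an initial reconstruction is forward-diffused to an intermediate step and the reverse process starts from there. The linear noise schedule, the step counts, and all names are assumptions for illustration and are not taken from the paper.

```python
import math
import random

def alpha_bar(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factors for a linear beta schedule."""
    out, prod = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        prod *= 1.0 - beta
        out.append(prod)
    return out

def jumpstart_init(initial_image, abar_t, rng):
    """Forward-diffuse an initial image: sqrt(abar) * x0 + sqrt(1 - abar) * noise."""
    scale, sigma = math.sqrt(abar_t), math.sqrt(1.0 - abar_t)
    return [scale * v + sigma * rng.gauss(0.0, 1.0) for v in initial_image]

num_steps = 1000                      # hypothetical full schedule length
t_start = 200                         # hypothetical jumpstart step
abar = alpha_bar(num_steps)

initial_recon = [0.2, 0.4, 0.6, 0.8]  # stand-in for a quick initial reconstruction
x_t = jumpstart_init(initial_recon, abar[t_start], random.Random(0))

# The reverse process would then visit only these steps instead of all of them.
reverse_steps = range(t_start, -1, -1)
print(len(reverse_steps), "of", num_steps, "reverse steps")
```

Starting closer to the data both shortens the reverse trajectory and limits how far samples can drift from the initial estimate, which is consistent with the reported reductions in time and variability.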

CT Material Decomposition using Spectral Diffusion Posterior Sampling

Feb 05, 2024

Abstract: In this work, we introduce a new deep learning approach based on diffusion posterior sampling (DPS) to perform material decomposition from spectral CT measurements. This approach combines sophisticated prior knowledge from unsupervised training with a rigorous physical model of the measurements. A faster and more stable variant is proposed that uses a jumpstarted process to reduce the number of time steps required in the reverse process and a gradient approximation to reduce the computational cost. Performance is investigated for two spectral CT systems: dual-kVp and dual-layer detector CT. On both systems, DPS achieves a high Structural Similarity Index Measure (SSIM) with only 10% of the iterations used in model-based material decomposition (MBMD). Jumpstarted DPS (JSDPS) further reduces computational time by over 85% and achieves the highest accuracy, the lowest uncertainty, and the lowest computational costs compared to classic DPS and MBMD. The results demonstrate the potential of JSDPS for providing relatively fast and accurate material decomposition based on spectral CT data.

Diffusion Posterior Sampling for Nonlinear CT Reconstruction

Dec 03, 2023

Abstract: Diffusion models have been demonstrated as powerful deep learning tools for image generation in CT reconstruction and restoration. Recently, diffusion posterior sampling, where a score-based diffusion prior is combined with a likelihood model, has been used to produce high-quality CT images given low-quality measurements. This technique is attractive since it permits a one-time, unsupervised training of a CT prior, which can then be incorporated with an arbitrary data model. However, current methods only rely on a linear model of x-ray CT physics to reconstruct or restore images. While it is common to linearize the transmission tomography reconstruction problem, this is an approximation to the true and inherently nonlinear forward model. We propose a new method that solves the inverse problem of nonlinear CT image reconstruction via diffusion posterior sampling. We implement a traditional unconditional diffusion model by training a prior score function estimator, and apply Bayes' rule to combine this prior with a measurement likelihood score function derived from the nonlinear physical model to arrive at a posterior score function that can be used to sample the reverse-time diffusion process. This plug-and-play method allows incorporation of a diffusion-based prior with generalized nonlinear CT image reconstruction into multiple CT system designs with different forward models, without the need for any additional training. We develop the algorithm that performs this reconstruction, including an ordered-subsets variant for accelerated processing, and demonstrate the technique in both fully sampled low-dose data and sparse-view geometries using a single unsupervised training of the prior.
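
The Bayes' rule step described above can be written out as follows. The first line is the generic score decomposition; the remaining lines show what the likelihood score looks like under an assumed Poisson transmission model with system matrix A and unattenuated intensity I_0. That noise model and the symbols A, I_0, and \bar{y} are assumptions for illustration; the paper's exact likelihood may differ.

```latex
% Posterior score = prior score (learned network) + likelihood score (physics)
\nabla_{x_t} \log p_t(x_t \mid y)
  = \nabla_{x_t} \log p_t(x_t) + \nabla_{x_t} \log p_t(y \mid x_t)

% Assumed nonlinear measurement model and Poisson log-likelihood
\bar{y}(x) = I_0 \exp(-A x), \qquad
\log p(y \mid x) = \sum_i \left[ y_i \log \bar{y}_i(x) - \bar{y}_i(x) \right] + \text{const}

% Resulting likelihood score with respect to the image
\nabla_x \log p(y \mid x) = A^{\top} \left( \bar{y}(x) - y \right)
```

Because only the likelihood term depends on the system, changing the scanner model changes A and I_0 but leaves the trained prior untouched.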

High-Sensitivity Iodine Imaging by Combining Spectral CT Technologies

Mar 29, 2021

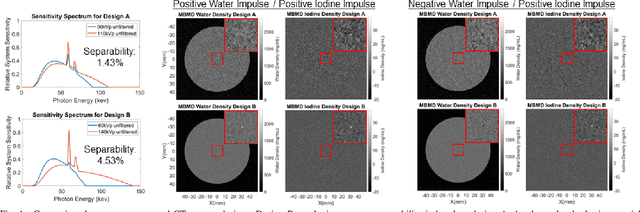

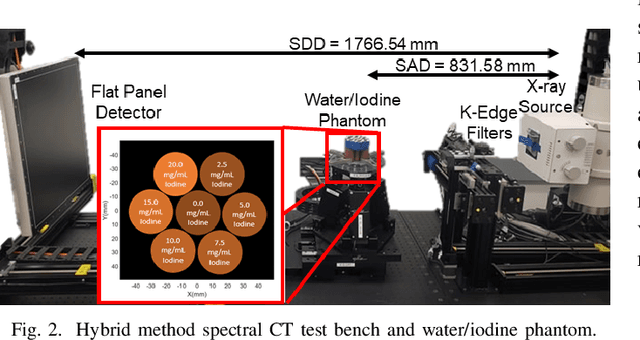

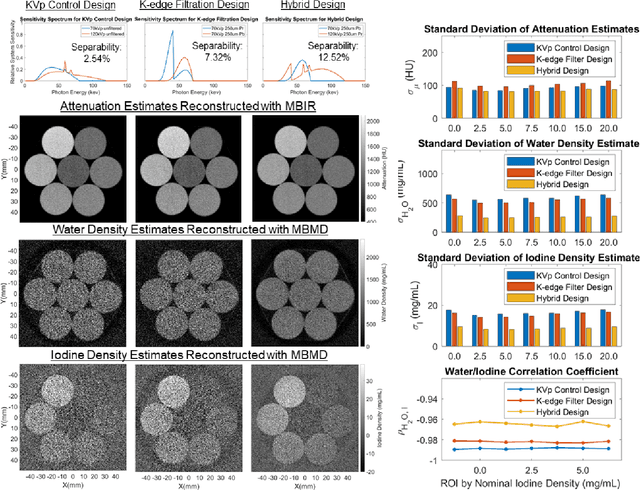

Abstract: Spectral CT offers enhanced material discrimination over single-energy systems and enables quantitative estimation of basis material density images. Water/iodine decomposition in contrast-enhanced CT is one of the most widespread applications of this technology in the clinic. However, low concentrations of iodine can be difficult to estimate accurately, limiting potential clinical applications and/or raising injected contrast agent requirements. We seek high-sensitivity spectral CT system designs which minimize noise in water/iodine density estimates. In this work, we present a model-driven framework for spectral CT system design optimization to maximize material separability. We apply this tool to optimize the sensitivity spectra on a spectral CT test bench using a hybrid design which combines source kVp control and k-edge filtration. Following design optimization, we scanned a water/iodine phantom with the hybrid spectral CT system and performed dose-normalized comparisons to two single-technique designs which use only kVp control or only k-edge filtration. The material decomposition results show that the hybrid system reduces both standard deviation and cross-material noise correlations compared to the designs where the constituent technologies are used individually.
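
The link between spectral separation and decomposition noise can be illustrated with a linearized two-channel, two-material model: measurement noise propagates through the inverse of the sensitivity matrix, so better-separated channels give lower variance and weaker water/iodine noise correlation. The sketch below is a toy calculation with made-up sensitivities and is not the design framework from the paper.

```python
import math

def decomposition_covariance(J, sigma):
    """Covariance of [water, iodine] estimates for a 2x2 linearized model.

    J[i][k] is the sensitivity of log-measurement i to material k, and
    sigma[i] is the noise standard deviation of log-measurement i
    (channels assumed uncorrelated).
    """
    (a, b), (c, d) = J
    det = a * d - b * c
    inv = [[d / det, -b / det], [-c / det, a / det]]
    var = [s ** 2 for s in sigma]
    return [[sum(inv[r][i] * var[i] * inv[q][i] for i in range(2)) for q in range(2)]
            for r in range(2)]

def correlation(cov):
    """Noise correlation coefficient between the two material estimates."""
    return cov[0][1] / math.sqrt(cov[0][0] * cov[1][1])

# Hypothetical sensitivity matrices: rows are spectral channels,
# columns are [water, iodine]. The second design separates iodine better.
similar_channels = [[1.0, 5.0], [1.0, 3.0]]
separated_channels = [[1.0, 8.0], [1.0, 2.0]]
sigma = [1.0, 1.0]

for name, J in [("similar", similar_channels), ("separated", separated_channels)]:
    cov = decomposition_covariance(J, sigma)
    print(f"{name}: iodine std {math.sqrt(cov[1][1]):.3f}, correlation {correlation(cov):.3f}")
```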
