Ivan Selesnick

Adaptive Iterative Soft-Thresholding Algorithm with the Median Absolute Deviation

Jul 02, 2025

Abstract: The adaptive Iterative Soft-Thresholding Algorithm (ISTA) has been a popular algorithm for finding a desirable solution to the LASSO problem without explicitly tuning the regularization parameter $\lambda$. Although adaptive ISTA is a successful practical algorithm, few theoretical results exist. In this paper, we present a theoretical analysis of adaptive ISTA with the thresholding strategy of estimating the noise level by the median absolute deviation. We show properties of the fixed points of the algorithm, including scale equivariance, non-uniqueness, and local stability, prove a local linear convergence guarantee, and show its global convergence behavior.
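As a rough illustration of the idea in this abstract, the following is a minimal sketch of an ISTA loop whose threshold is reset at every iteration from a median-absolute-deviation noise estimate. It is not taken from the paper: the function names, the multiplier `c`, the iteration count, and the choice to apply the MAD to the pre-threshold vector are all assumptions made for illustration. The constant 0.6745 is the usual factor that makes the MAD consistent with the standard deviation for Gaussian data.

```python
import numpy as np

def soft(v, t):
    """Soft-thresholding: shrink each entry of v toward zero by t."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def mad_sigma(v):
    """Noise-level estimate from the median absolute deviation (Gaussian scaling)."""
    return np.median(np.abs(v - np.median(v))) / 0.6745

def adaptive_ista(A, y, c=3.0, n_iter=200):
    """Hypothetical adaptive ISTA: the threshold is c times a MAD estimate.

    c and n_iter are illustrative defaults, not values from the paper.
    """
    mu = 1.0 / np.linalg.norm(A, 2) ** 2   # step size from the spectral norm of A
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        r = x + mu * A.T @ (y - A @ x)     # gradient step on the data-fidelity term
        x = soft(r, c * mad_sigma(r))      # threshold adapts to the current iterate
    return x
```

Because the threshold in this sketch scales with the data, multiplying `y` by a positive constant multiplies every iterate by the same constant, which is one way to see the scale equivariance the abstract mentions; no regularization parameter $\lambda$ is supplied by the user.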

Bregman Plug-and-Play Priors

Feb 04, 2022

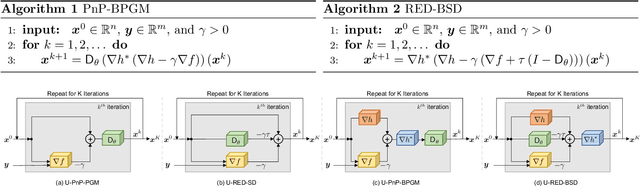

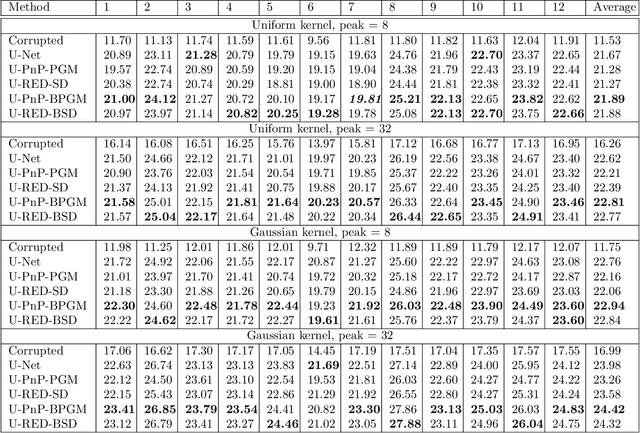

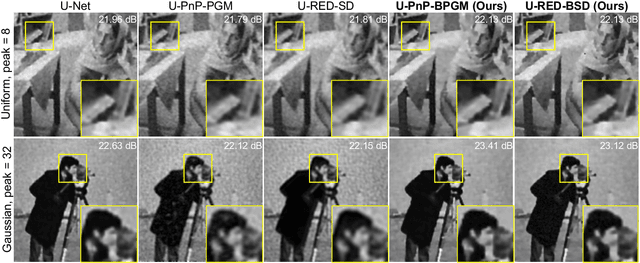

Abstract: The past few years have seen a surge of activity around the integration of deep learning networks and optimization algorithms for solving inverse problems. Recent work on plug-and-play priors (PnP), regularization by denoising (RED), and deep unfolding has shown the state-of-the-art performance of such integration in a variety of applications. However, the current paradigm for designing such algorithms is inherently Euclidean, due to the use of the quadratic norm within the projection and proximal operators. We propose to broaden this perspective by considering a non-Euclidean setting based on the more general Bregman distance. Our new Bregman Proximal Gradient Method variant of PnP (PnP-BPGM) and Bregman Steepest Descent variant of RED (RED-BSD) replace the quadratic norms in the traditional PnP and RED updates with the more general Bregman distance. We present a theoretical convergence result for PnP-BPGM and demonstrate the effectiveness of our algorithms on Poisson linear inverse problems.
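For readers unfamiliar with the term, the standard textbook definition of the Bregman distance and of a Bregman proximal gradient step can be written as follows. The symbols $h$, $f$, $g$, and $\gamma$ are notation assumed here for illustration, not taken from the paper; the paper's PnP variant modifies this kind of update in ways the abstract does not spell out.

```latex
% Bregman distance generated by a differentiable convex function h
D_h(x, y) = h(x) - h(y) - \langle \nabla h(y),\, x - y \rangle

% Euclidean special case: h(x) = \tfrac{1}{2}\|x\|_2^2 recovers the quadratic norm
D_h(x, y) = \tfrac{1}{2}\|x - y\|_2^2

% One Bregman proximal gradient step for minimizing f(x) + g(x), step size \gamma > 0
x^{k+1} = \arg\min_x \left\{ g(x) + \langle \nabla f(x^k),\, x - x^k \rangle
          + \tfrac{1}{\gamma} D_h(x, x^k) \right\}
```

With the Euclidean choice of $h$, the last line reduces to the usual proximal gradient update, which is the sense in which the existing paradigm is described as inherently Euclidean.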

Image Fusion via Sparse Regularization with Non-Convex Penalties

May 23, 2019

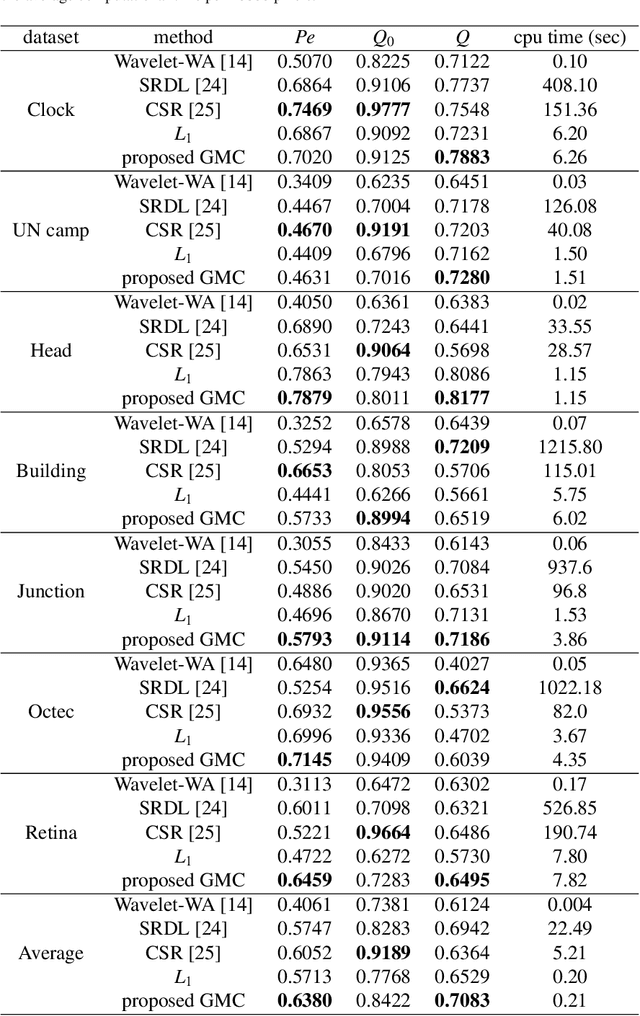

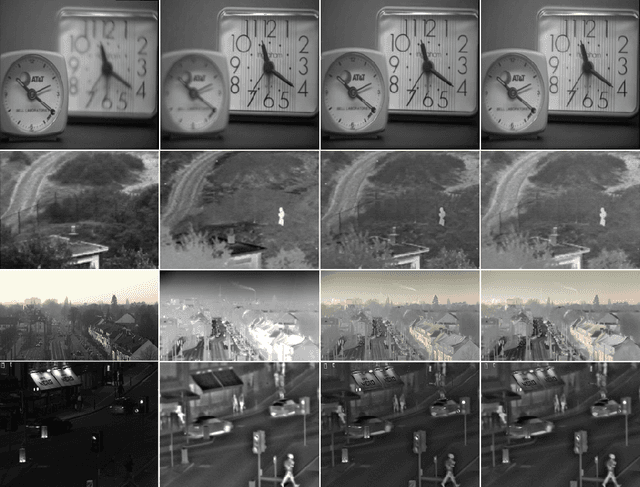

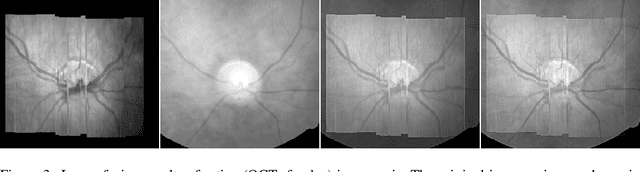

Abstract: The L1 norm regularized least squares method is often used for finding sparse approximate solutions and is widely used in 1-D signal restoration. Basis pursuit denoising (BPD) performs noise reduction in this way. However, the shortcoming of L1 norm regularization is that it underestimates the true solution. Recently, a class of non-convex penalties has been proposed to improve this situation. A penalty function of this kind is itself non-convex, but it preserves the convexity of the whole cost function. This approach has been confirmed to offer good performance in 1-D signal denoising. This paper extends the aforementioned method to 2-D signals (images) and applies it to multisensor image fusion. The problem is posed as an inverse problem, and a corresponding cost function is designed to include two data attachment terms. The whole cost function is proved to be convex when the non-convex penalty is suitably chosen, so that the cost function can be minimized by convex optimization approaches, which involve only simple computations. The performance of the proposed method is benchmarked against a number of state-of-the-art image fusion techniques, and superior performance is demonstrated both visually and in terms of various assessment measures.
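The underestimation mentioned in this abstract can be seen in the scalar case by comparing soft thresholding (associated with the L1 penalty) against firm thresholding, which is commonly associated with the minimax-concave penalty. The abstract does not name the penalty used in the paper, so the firm threshold and the parameter names `lam` and `mu` below are assumptions chosen only to illustrate the contrast.

```python
import numpy as np

def soft(y, lam):
    """Soft threshold: every surviving value is shrunk by lam."""
    return np.sign(y) * np.maximum(np.abs(y) - lam, 0.0)

def firm(y, lam, mu):
    """Firm threshold (requires mu > lam): values above mu pass through unchanged."""
    a = np.abs(y)
    ramp = mu * (a - lam) / (mu - lam)   # linear ramp between lam and mu
    return np.sign(y) * np.where(a <= lam, 0.0, np.where(a <= mu, ramp, a))

y = np.array([0.5, 1.5, 4.0])
print(soft(y, 1.0))        # large value 4.0 is biased down to 3.0
print(firm(y, 1.0, 2.0))   # large value 4.0 is left at 4.0
```

In this sketch both rules set small values to zero, but only the soft threshold subtracts `lam` from large values, which is the bias that the non-convex penalties are meant to reduce.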
