Inna Skarga-Bandurova

MR-GNF: Multi-Resolution Graph Neural Forecasting on Ellipsoidal Meshes for Efficient Regional Weather Prediction

Mar 13, 2026

Abstract: Weather forecasting offers an ideal testbed for artificial intelligence (AI) to learn complex, multi-scale physical systems. Traditional numerical weather prediction (NWP) remains computationally costly for frequent regional updates, as high-resolution nests require intensive boundary coupling. We introduce Multi-Resolution Graph Neural Forecasting (MR-GNF), a lightweight, physics-aware model that performs short-term regional forecasts directly on an ellipsoidal, multi-scale graph of the Earth. The framework couples a 0.25° region of interest with a 0.5° context belt and 1.0° outer domain, enabling continuous cross-scale message passing without explicit nested boundaries. Its axial graph-attention network alternates vertical self-attention across pressure levels with horizontal graph attention across surface nodes, capturing implicit 3-D structure in just 1.6 M parameters. Trained on 40 years of ERA5 reanalysis (1980-2024), MR-GNF delivers stable +6 h to +24 h forecasts for near-surface temperature, wind, and precipitation over the UK-Ireland sector. Despite a total compute cost below 80 GPU-hours on a single RTX 6000 Ada, the model matches or exceeds heavier regional AI systems while preserving physical consistency across scales. These results demonstrate that graph-based neural operators can achieve trustworthy, high-resolution weather prediction at a fraction of NWP cost, opening a practical path toward AI-driven early-warning and renewable-energy forecasting systems. Project page and code: https://github.com/AndriiShchur/MR-GNF
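
The nested 0.25° / 0.5° / 1.0° layout described in the abstract can be illustrated with a minimal sketch. It is not taken from the MR-GNF code: the box extents, the names `ROI`, `BELT`, `OUTER`, and the helper functions are all hypothetical, and the sketch covers only node placement, not the ellipsoidal geometry, the edges, or the attention layers.

```python
from typing import List, Tuple

# Coordinates are integers in units of 0.25 degrees, which avoids float drift.
# Box = (lat_min, lat_max, lon_min, lon_max), inclusive on all sides.
Box = Tuple[int, int, int, int]

# Hypothetical extents; the abstract does not state the actual domain bounds.
ROI: Box = (200, 240, -44, 8)       # 50N..60N, 11W..2E
BELT: Box = (180, 260, -64, 28)     # 45N..65N, 16W..7E
OUTER: Box = (140, 300, -104, 68)   # 35N..75N, 26W..17E


def inside(lat: int, lon: int, box: Box) -> bool:
    """Return True if the point lies within the box (bounds inclusive)."""
    lat_min, lat_max, lon_min, lon_max = box
    return lat_min <= lat <= lat_max and lon_min <= lon <= lon_max


def grid(box: Box, step: int) -> List[Tuple[int, int]]:
    """Regular lat/lon grid over the box; step is in 0.25-degree units."""
    lat_min, lat_max, lon_min, lon_max = box
    return [
        (lat, lon)
        for lat in range(lat_min, lat_max + 1, step)
        for lon in range(lon_min, lon_max + 1, step)
    ]


def build_nodes() -> List[Tuple[int, int, float]]:
    """Collect (lat, lon, resolution_deg) nodes, finest zone first.

    Coarser zones skip points already covered by a finer zone, so the
    three resolutions form one node set without duplicates.
    """
    nodes = [(lat, lon, 0.25) for lat, lon in grid(ROI, 1)]
    nodes += [
        (lat, lon, 0.5)
        for lat, lon in grid(BELT, 2)
        if not inside(lat, lon, ROI)
    ]
    nodes += [
        (lat, lon, 1.0)
        for lat, lon in grid(OUTER, 4)
        if not inside(lat, lon, BELT)
    ]
    return nodes


if __name__ == "__main__":
    nodes = build_nodes()
    for res in (0.25, 0.5, 1.0):
        count = sum(1 for n in nodes if n[2] == res)
        print(f"{res} deg nodes: {count}")
```

In a layout like this, most nodes fall inside the region of interest while the coarser zones add comparatively few, which is one plausible reading of how a single graph can carry wide spatial context at low cost.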

The SARAS Endoscopic Surgeon Action Detection (ESAD) dataset: Challenges and methods

Apr 07, 2021

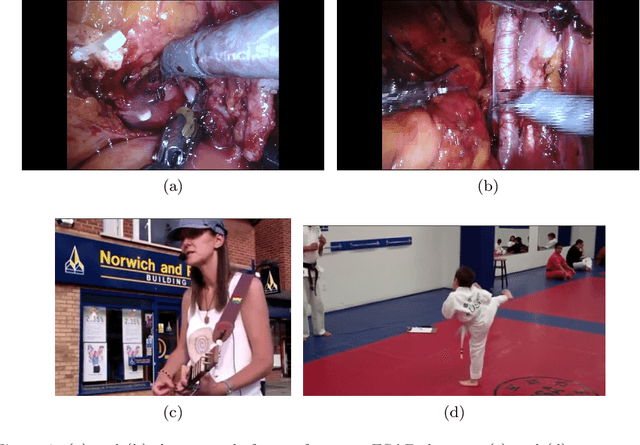

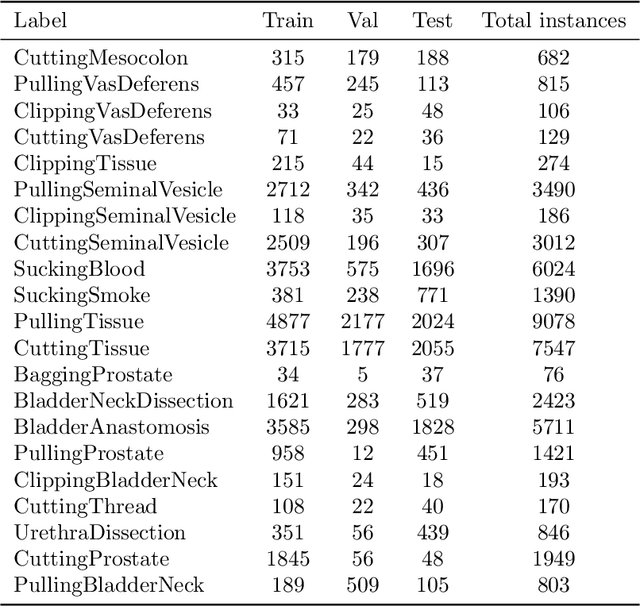

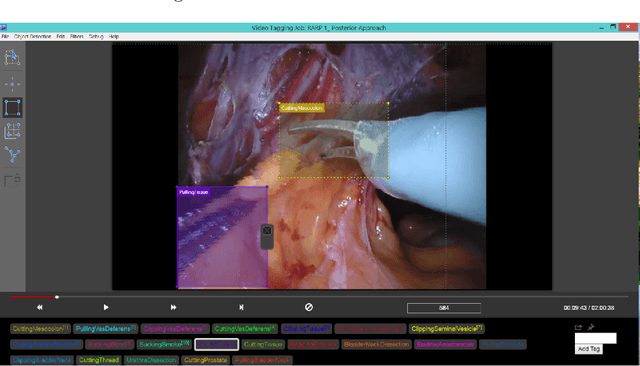

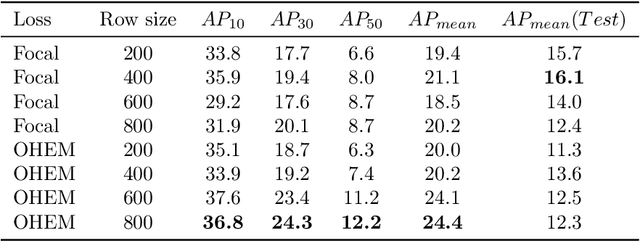

Abstract: For an autonomous robotic system, monitoring surgeon actions and assisting the main surgeon during a procedure can be very challenging. The challenges come from the peculiar structure of the surgical scene, from the greater similarity in appearance of actions performed via tools in a cavity compared to, say, human actions in unconstrained environments, and from the motion of the endoscopic camera. This paper presents ESAD, the first large-scale dataset designed to tackle the problem of surgeon action detection in endoscopic minimally invasive surgery. ESAD aims to help increase the effectiveness and reliability of surgical assistant robots by realistically testing their awareness of the actions performed by a surgeon. The dataset provides bounding box annotations for 21 action classes on real endoscopic video frames captured during prostatectomy, and was used as the basis of a recent MIDL 2020 challenge. We also present an analysis of the dataset conducted using the baseline model released as part of the challenge, and a description of the top-performing models submitted to the challenge together with the results they obtained. This study provides significant insight into which approaches can be effective and can be extended further. We believe that ESAD will serve in the future as a useful benchmark for all researchers active in surgeon action detection and assistive robotics at large.
