I. M. Ross

Hessians in Birkhoff-Theoretic Trajectory Optimization

Nov 17, 2025

Abstract: This paper derives various Hessians associated with Birkhoff-theoretic methods for trajectory optimization. According to a theorem proved in this paper, approximately 80% of the eigenvalues are contained in the narrow interval [-2, 4] for all Birkhoff-discretized optimal control problems. A preliminary analysis of computational complexity is also presented with further discussions on the grand challenge of solving a million-point trajectory optimization problem.

* This paper appeared as an Engineering Note in the J. Guid. Control & Dynamics

Derivation of Coordinate Descent Algorithms from Optimal Control Theory

Sep 07, 2023

Abstract: Recently, it was posited that disparate optimization algorithms may be coalesced in terms of a central source emanating from optimal control theory. Here we further this proposition by showing how coordinate descent algorithms may be derived from this emerging new principle. In particular, we show that basic coordinate descent algorithms can be derived using a maximum principle and a collection of max functions as "control" Lyapunov functions. The convergence of the resulting coordinate descent algorithms is thus connected to the controlled dissipation of their corresponding Lyapunov functions. The operational metric for the search vector in all cases is given by the Hessian of the convex objective function.
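For readers unfamiliar with the algorithm family named in this abstract, the following is a minimal textbook sketch of a greedy coordinate descent step on a convex quadratic. It is not the paper's optimal-control derivation. The function name `coordinate_descent`, the quadratic test objective, and the choice of the largest-gradient coordinate rule are assumptions made for illustration; the step is scaled by the diagonal of the Hessian `A`.

```python
def coordinate_descent(A, b, x0, iters=200, tol=1e-12):
    """Greedy coordinate descent on f(x) = 0.5 x^T A x - b^T x.

    Assumes A is symmetric positive definite (so A is the Hessian of f).
    Illustrative sketch only; names and stopping rule are hypothetical.
    """
    n = len(b)
    x = list(x0)
    for _ in range(iters):
        # Gradient of the quadratic: g = A x - b
        g = [sum(A[i][j] * x[j] for j in range(n)) - b[i] for i in range(n)]
        # Pick the coordinate with the largest gradient magnitude
        i = max(range(n), key=lambda k: abs(g[k]))
        if abs(g[i]) < tol:
            break
        # Exact minimization along coordinate i, scaled by the Hessian diagonal
        x[i] -= g[i] / A[i][i]
    return x


A = [[4.0, 1.0], [1.0, 3.0]]
b = [1.0, 2.0]
x = coordinate_descent(A, b, [0.0, 0.0])
print(x)  # approaches the solution of A x = b, i.e. (1/11, 7/11)
```

Each update changes a single coordinate, and the division by `A[i][i]` is where second-derivative information enters this simple variant.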

Implementations of the Universal Birkhoff Theory for Fast Trajectory Optimization

Aug 04, 2023

Abstract: This is part II of a two-part paper. Part I presented a universal Birkhoff theory for fast and accurate trajectory optimization. The theory rested on two main hypotheses. In this paper, it is shown that if the computational grid is selected from any one of the Legendre and Chebyshev family of node points, be it Lobatto, Radau or Gauss, then the resulting collection of trajectory optimization methods satisfies the hypotheses required for the universal Birkhoff theory to hold. All of these grid points can be generated at an $\mathcal{O}(1)$ computational speed. Furthermore, all Birkhoff-generated solutions can be tested for optimality by a joint application of Pontryagin's- and Covector-Mapping Principles, where the latter was developed in Part I. More importantly, the optimality checks can be performed without resorting to an indirect method or even explicitly producing the full differential-algebraic boundary value problem that results from an application of Pontryagin's Principle. Numerical problems are solved to illustrate all these ideas. The examples are chosen to particularly highlight three practically useful features of Birkhoff methods: (1) bang-bang optimal controls can be produced without suffering any Gibbs phenomenon, (2) discontinuous and even Dirac delta covector trajectories can be well approximated, and (3) extremal solutions over dense grids can be computed in a stable and efficient manner.
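As a small illustration of the grids mentioned in this abstract, the sketch below generates two Chebyshev node sets from their well-known closed-form cosine formulas, so each node costs a constant amount of work. This is not the paper's implementation: the function names are hypothetical, only the Chebyshev Lobatto and Gauss cases are shown, and the Legendre nodes (which have no elementary closed form) are not covered.

```python
import math


def chebyshev_lobatto_nodes(N):
    """Chebyshev-Gauss-Lobatto nodes on [-1, 1], in ascending order.

    x_j = -cos(pi * j / N) for j = 0..N; the endpoints -1 and +1 are included.
    """
    return [-math.cos(math.pi * j / N) for j in range(N + 1)]


def chebyshev_gauss_nodes(N):
    """Chebyshev-Gauss nodes on (-1, 1), in ascending order.

    x_j = -cos(pi * (2j + 1) / (2N + 2)) for j = 0..N; endpoints are excluded.
    """
    return [-math.cos(math.pi * (2 * j + 1) / (2 * N + 2)) for j in range(N + 1)]


cgl = chebyshev_lobatto_nodes(4)
cg = chebyshev_gauss_nodes(4)
print(cgl)
print(cg)
```

Both node sets cluster toward the ends of the interval, which is the usual reason such grids are preferred over uniform spacing in pseudospectral-type methods.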

A Derivation of Nesterov's Accelerated Gradient Algorithm from Optimal Control Theory

Mar 29, 2022

Abstract: Nesterov's accelerated gradient algorithm is derived from first principles. The first principles are founded on the recently-developed optimal control theory for optimization. This theory frames an optimization problem as an optimal control problem whose trajectories generate various continuous-time algorithms. The algorithmic trajectories satisfy the necessary conditions for optimal control. The necessary conditions produce a controllable dynamical system for accelerated optimization. Stabilizing this system via a quadratic control Lyapunov function generates an ordinary differential equation. An Euler discretization of the resulting differential equation produces Nesterov's algorithm. In this context, this result solves the purported mystery surrounding the algorithm.
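For reference, the discrete iteration that this abstract refers to can be sketched in its standard textbook form: an extrapolation (momentum) step followed by a gradient step at the extrapolated point. The sketch below is not the paper's derivation. The function name `nesterov`, the quadratic test objective, and the constant-momentum parameter choice for a strongly convex function (with assumed constants `L_const` and `mu`) are all illustrative assumptions.

```python
import math


def nesterov(grad, x0, step, momentum, iters):
    """Nesterov's accelerated gradient method with constant momentum.

    y_k     = x_k + momentum * (x_k - x_{k-1})
    x_{k+1} = y_k - step * grad(y_k)
    Illustrative sketch; parameter names are hypothetical.
    """
    x_prev = list(x0)
    x = list(x0)
    for _ in range(iters):
        # Extrapolate along the previous displacement
        y = [xi + momentum * (xi - xp) for xi, xp in zip(x, x_prev)]
        g = grad(y)
        x_prev = x
        # Gradient step taken from the extrapolated point
        x = [yi - step * gi for yi, gi in zip(y, g)]
    return x


def grad_f(x):
    # Gradient of the assumed test objective f(x) = 0.5 * (x[0]**2 + 10 * x[1]**2)
    return [1.0 * x[0], 10.0 * x[1]]


L_const, mu = 10.0, 1.0  # largest and smallest Hessian eigenvalues of f
beta = (math.sqrt(L_const) - math.sqrt(mu)) / (math.sqrt(L_const) + math.sqrt(mu))
x_min = nesterov(grad_f, [1.0, 1.0], 1.0 / L_const, beta, 200)
print(x_min)  # approaches the minimizer (0, 0)
```

Setting `momentum` to zero reduces the loop to plain gradient descent, which makes the role of the extrapolation step easy to isolate in experiments.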

An Optimal Control Theory for the Traveling Salesman Problem and Its Variants

May 07, 2020

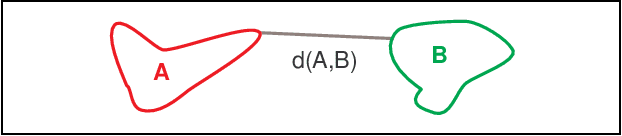

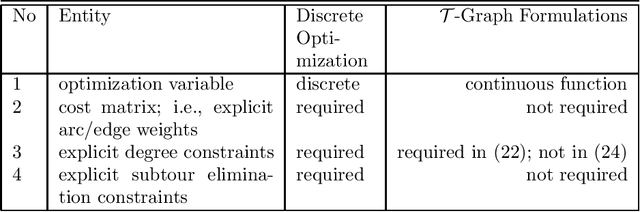

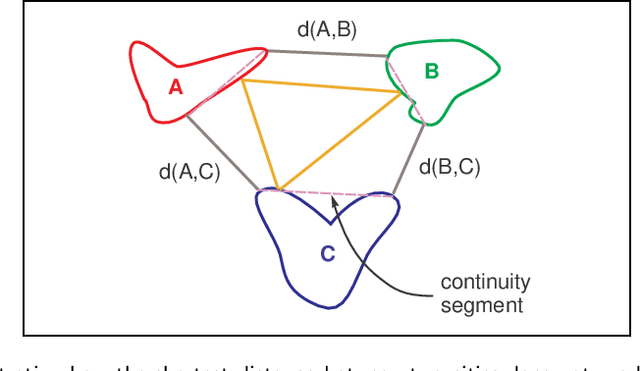

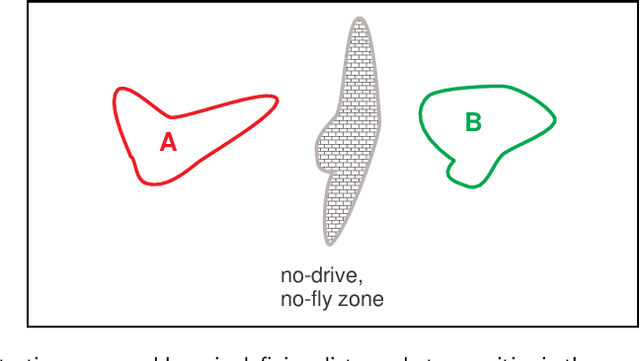

Abstract: We show that the traveling salesman problem (TSP) and its many variants may be modeled as functional optimization problems over a graph. In this formulation, all vertices and arcs of the graph are functionals, i.e., mappings from a space of measurable functions to the field of real numbers. Many variants of the TSP, such as those with neighborhoods, with forbidden neighborhoods, with time-windows and with profits, can all be framed under this construct. In sharp contrast to their discrete-optimization counterparts, the modeling constructs presented in this paper represent a fundamentally new domain of analysis and computation for TSPs and their variants. Beyond its apparent mathematical unification of a class of problems in graph theory, the main advantage of the new approach is that it facilitates the modeling of certain application-specific problems in their home space of measurable functions. Consequently, certain elements of economic system theory such as dynamical models and continuous-time cost/profit functionals can be directly incorporated in the new optimization problem formulation. Furthermore, subtour elimination constraints, prevalent in discrete optimization formulations, are naturally enforced through continuity requirements. The price for the new modeling framework is nonsmooth functionals. Although a number of theoretical issues remain open in the proposed mathematical framework, we demonstrate the computational viability of the new modeling constructs over a sample set of problems to illustrate the rapid production of end-to-end TSP solutions to extensively-constrained practical problems.

Enhancements to the DIDO Optimal Control Toolbox

Apr 27, 2020

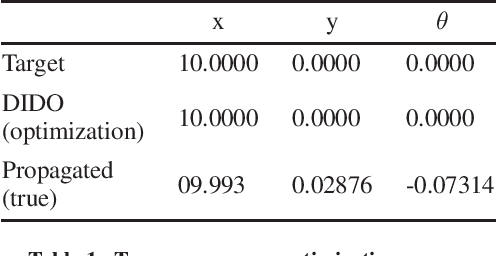

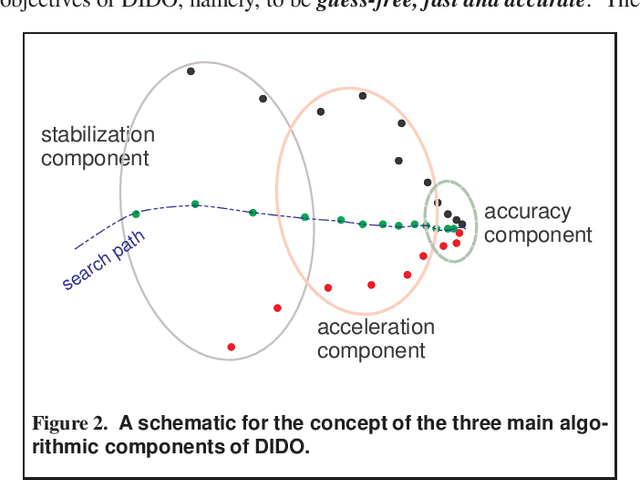

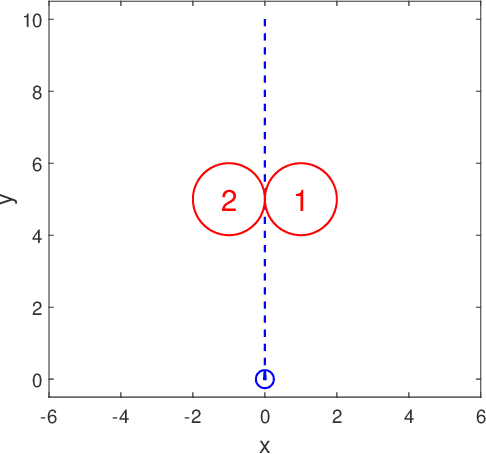

Abstract: In 2020, DIDO turned 20! The software package emerged in 2001 as a basic, user-friendly MATLAB teaching-tool to illustrate the various nuances of Pontryagin's Principle but quickly rose to prominence in 2007 after NASA announced it had executed a globally optimal maneuver using DIDO. Since then, the toolbox has grown in applications well beyond its aerospace roots: from solving problems in quantum control to ushering rapid, nonlinear sensitivity-analysis in designing high-performance automobiles. Most recently, it has been used to solve continuous-time traveling-salesman problems. Over the last two decades, DIDO's algorithms have evolved from their simple use of generic nonlinear programming solvers to a more sophisticated employment of fast spectral Hamiltonian programming techniques. The internal enhancements to DIDO that define its mathematics and algorithms are described in this paper. A challenge example problem from robotics is included to showcase how the latest version of DIDO is capable of escaping the trappings of a "local minimum" that ensnare many other trajectory optimization methods.
