Hugo de Vos

Political corpus creation through automatic speech recognition on EU debates

Apr 17, 2023

Abstract: In this paper, we present a transcribed corpus of the LIBE committee of the EU parliament, totalling 3.6 million running words. The meetings of parliamentary committees of the EU are a potentially valuable source of information for political scientists, but the data is not readily available because it is only disclosed as speech recordings together with limited metadata. The meetings are in English, partly spoken by non-native speakers, and partly spoken by interpreters. We investigated the most appropriate Automatic Speech Recognition (ASR) model to create an accurate text transcription of the audio recordings of the meetings in order to make their content available for research and analysis. We focused on the unsupervised domain adaptation of the ASR pipeline. Building on the transformer-based Wav2vec2.0 model, we experimented with multiple acoustic models, language models and the addition of domain-specific terms. We found that a domain-specific acoustic model and a domain-specific language model give substantial improvements to the ASR output, reducing the word error rate (WER) from 28.22 to 17.95. The use of domain-specific terms in the decoding stage did not have a positive effect on the quality of the ASR in terms of WER. Initial topic modelling results indicated that the corpus is useful for downstream analysis tasks. We release the resulting corpus and our analysis pipeline for future research.
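For readers unfamiliar with the metric, the sketch below shows one common way to compute WER: the word-level edit distance between a reference transcript and the ASR output, divided by the number of reference words. It is an illustration only and not the paper's evaluation code; the function name `word_error_rate` and the example sentences are assumptions, and no text normalisation (casing, punctuation) is applied.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER as a percentage, using word-level Levenshtein distance."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr

    return 100.0 * prev[-1] / len(ref)


# Hypothetical example: one deletion, one substitution, one insertion.
print(word_error_rate("the committee will meet today",
                      "the committee meet to day"))  # 60.0
```

Because the denominator is the reference length, insertions can push WER above 100, which is one reason scores are usually reported alongside the test set they were measured on.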

Small data problems in political research: a critical replication study

Sep 27, 2021

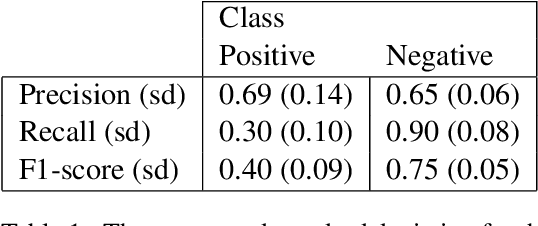

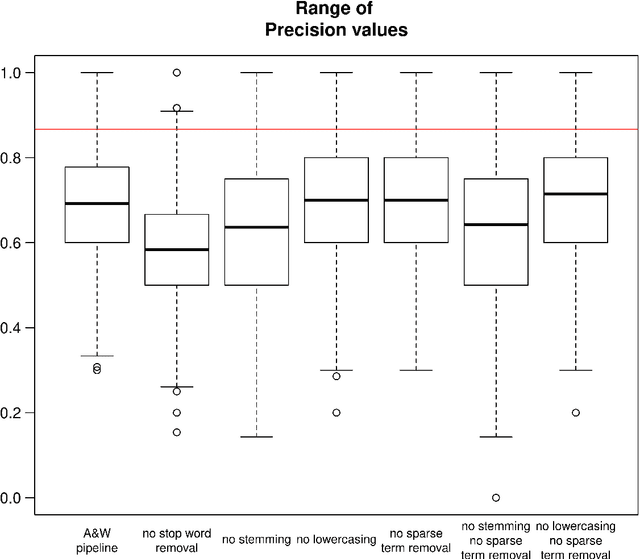

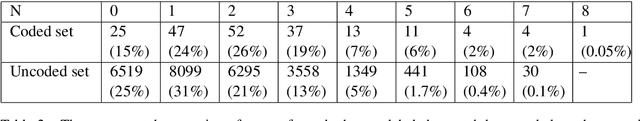

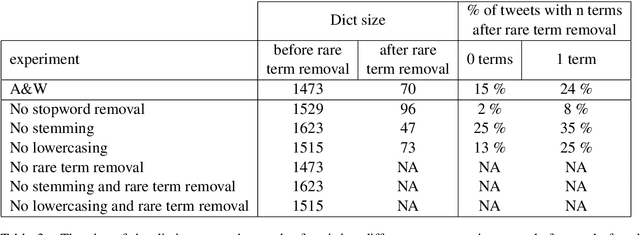

Abstract: In an often-cited 2019 paper on the use of machine learning in political research, Anastasopoulos & Whitford (A&W) propose a text classification method for tweets related to organizational reputation. The aim of their paper was to provide a 'guide to practice' for public administration scholars and practitioners on the use of machine learning. In the current paper we follow up on that work with a replication of A&W's experiments and additional analyses on model stability and the effects of preprocessing, both in relation to the small data size. We show that (1) the small data size makes the classification model highly sensitive to variations in the random train-test split, and that (2) the applied preprocessing makes the data extremely sparse, with the majority of items in the data having at most two non-zero lexical features. With additional experiments in which we vary the steps of the preprocessing pipeline, we show that the small data size keeps causing problems, irrespective of the preprocessing choices. Based on our findings, we argue that A&W's conclusions regarding the automated classification of organizational reputation tweets, whether substantive or methodological, cannot be maintained and require a larger data set for training and more careful validation.
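The split-sensitivity point can be illustrated with a small simulation. The sketch below is not A&W's model or data: it uses synthetic one-feature data and a hypothetical midpoint-threshold classifier, and all names (`make_data`, `accuracy_on_split`, `split_spread`) are assumptions made for illustration. It keeps the data set fixed, varies only the random seed of the train-test split, and reports how much test accuracy moves.

```python
import random
import statistics


def make_data(n, rng):
    """Synthetic binary data with one noisy numeric feature (illustrative only)."""
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        feature = rng.gauss(1.0 if label else 0.0, 1.0)
        data.append((feature, label))
    return data


def accuracy_on_split(data, seed, test_fraction=0.3):
    """Shuffle with the given seed, fit a midpoint threshold on train, score on test."""
    rng = random.Random(seed)
    items = data[:]
    rng.shuffle(items)
    n_test = int(len(items) * test_fraction)
    test, train = items[:n_test], items[n_test:]
    pos = [x for x, y in train if y == 1]
    neg = [x for x, y in train if y == 0]
    # Classify as positive when the feature exceeds the midpoint of the class means.
    threshold = (statistics.mean(pos) + statistics.mean(neg)) / 2
    correct = sum((x > threshold) == (y == 1) for x, y in test)
    return correct / n_test


def split_spread(n, n_splits=30):
    """Min, max and standard deviation of test accuracy over n_splits random splits."""
    data = make_data(n, random.Random(0))
    accs = [accuracy_on_split(data, seed) for seed in range(n_splits)]
    return min(accs), max(accs), statistics.stdev(accs)


for size in (100, 5000):
    low, high, spread = split_spread(size)
    print(f"n={size}: min={low:.3f} max={high:.3f} stdev={spread:.3f}")
```

With the small data set, accuracy typically swings by many percentage points from one split to the next, so a result reported from a single split says little about the model. Repeating the split, or using cross-validation, makes that variance visible.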
