Hector J. Levesque

University of Toronto

Toward a New Science of Common Sense

Dec 23, 2021

Abstract: Common sense has always been of interest in AI, but has rarely taken center stage. Despite its mention in one of John McCarthy's earliest papers and years of work by dedicated researchers, arguably no AI system with a serious amount of general common sense has ever emerged. Why is that? What's missing? Examples of AI systems' failures of common sense abound, and they point to AI's frequent focus on expertise as the cause. Those attempting to break the brittleness barrier, even in the context of modern deep learning, have tended to invest their energy in large numbers of small bits of commonsense knowledge. But all the commonsense knowledge fragments in the world don't add up to a system that actually demonstrates common sense in a human-like way. We advocate examining common sense from a broader perspective than in the past. Common sense is more complex than it has been taken to be and is worthy of its own scientific exploration.

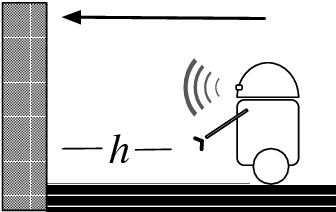

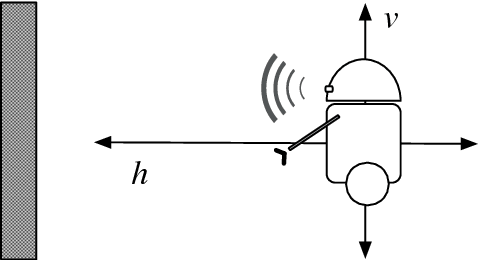

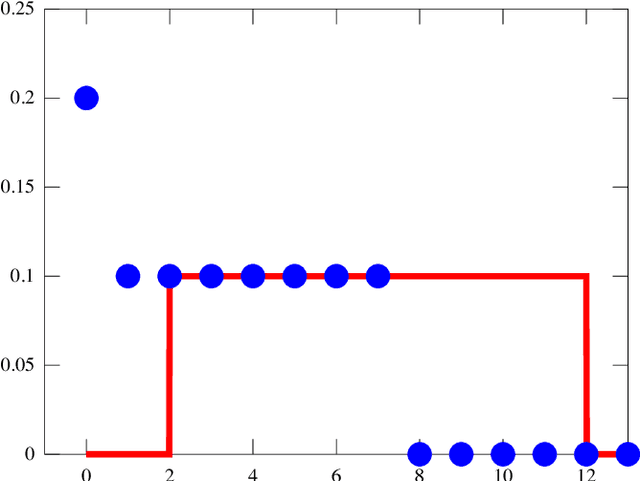

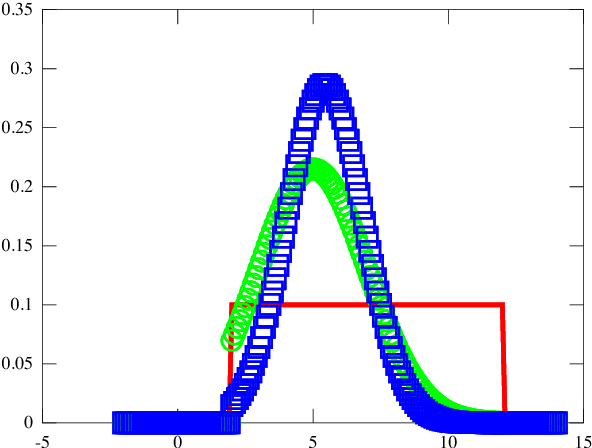

Reasoning about Discrete and Continuous Noisy Sensors and Effectors in Dynamical Systems

Sep 14, 2018

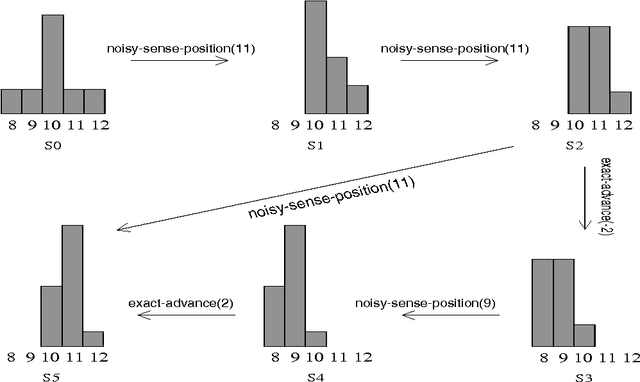

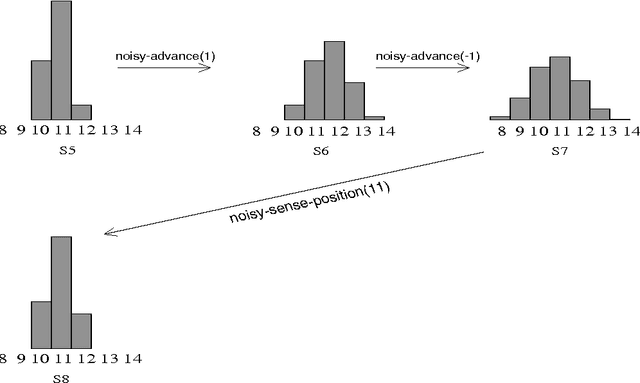

Abstract: Among the many approaches for reasoning about degrees of belief in the presence of noisy sensing and acting, the logical account proposed by Bacchus, Halpern, and Levesque is perhaps the most expressive. While their formalism is quite general, it is restricted to fluents whose values are drawn from discrete finite domains, as opposed to the continuous domains seen in many robotic applications. In this work, we show how this limitation in that approach can be lifted. By dealing seamlessly with both discrete distributions and continuous densities within a rich theory of action, we provide a very general logical specification of how belief should change after acting and sensing in complex noisy domains.

Reasoning about Noisy Sensors and Effectors in the Situation Calculus

Sep 09, 1998

Abstract: Agents interacting with an incompletely known world need to be able to reason about the effects of their actions, and to gain further information about that world they need to use sensors of some sort. Unfortunately, both the effects of actions and the information returned from sensors are subject to error. To cope with such uncertainties, the agent can maintain probabilistic beliefs about the state of the world. With probabilistic beliefs the agent will be able to quantify the likelihood of the various outcomes of its actions and is better able to utilize the information gathered from its error-prone actions and sensors. In this paper, we present a model in which we can reason about an agent's probabilistic degrees of belief and the manner in which these beliefs change as various actions are executed. We build on a general logical theory of action developed by Reiter and others, formalized in the situation calculus. We propose a simple axiomatization that captures an agent's state of belief and the manner in which these beliefs change when actions are executed. Our model displays a number of intuitively reasonable properties.
