Hajime Shimao

Chaotic Dynamics in Multi-LLM Deliberation

Mar 10, 2026

Abstract:Collective AI systems increasingly rely on multi-LLM deliberation, but their stability under repeated execution remains poorly characterized. We model five-agent LLM committees as random dynamical systems and quantify inter-run sensitivity using an empirical Lyapunov exponent ($\hat{\lambda}$) derived from trajectory divergence in committee mean preferences. Across 12 policy scenarios, a factorial design at $T=0$ identifies two independent routes to instability: role differentiation in homogeneous committees and model heterogeneity in no-role committees. Critically, these effects appear even in the $T=0$ regime where practitioners often expect deterministic behavior. In the HL-01 benchmark, both routes produce elevated divergence ($\hat{\lambda}=0.0541$ and $0.0947$, respectively), while homogeneous no-role committees also remain in a positive-divergence regime ($\hat{\lambda}=0.0221$). The combined mixed+roles condition is less unstable than mixed+no-role ($\hat{\lambda}=0.0519$ vs $0.0947$), showing non-additive interaction. Mechanistically, Chair-role ablation reduces $\hat{\lambda}$ most strongly, and targeted protocol variants that shorten memory windows further attenuate divergence. These results support stability auditing as a core design requirement for multi-LLM governance systems.
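As a rough illustration of how an empirical Lyapunov exponent could be estimated from repeated runs, the sketch below fits the slope of the log mean pairwise distance between runs against the deliberation round. This is a common finite-time divergence estimate, not necessarily the estimator used in the paper; the function name `empirical_lyapunov`, the input layout (one list of committee mean preferences per run), and the `eps` floor are all assumptions made for the example.

```python
import math
from itertools import combinations


def empirical_lyapunov(runs, eps=1e-12):
    """Estimate a divergence rate from repeated runs (hypothetical helper).

    runs: list of trajectories, each a list of committee mean preferences,
          one value per deliberation round (assumed layout).
    Returns the least-squares slope of log(mean pairwise distance) vs. round.
    """
    n_rounds = len(runs[0])
    if len(runs) < 2 or n_rounds < 2:
        raise ValueError("need at least two runs and two rounds")

    # Log of the mean absolute distance between every pair of runs, per round.
    log_dist = []
    for t in range(n_rounds):
        dists = [abs(a[t] - b[t]) for a, b in combinations(runs, 2)]
        log_dist.append(math.log(sum(dists) / len(dists) + eps))

    # Ordinary least-squares slope of log distance against round index.
    t_mean = (n_rounds - 1) / 2
    y_mean = sum(log_dist) / n_rounds
    num = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(log_dist))
    den = sum((t - t_mean) ** 2 for t in range(n_rounds))
    return num / den


# Synthetic check: two runs whose gap grows like exp(0.1 * round).
run_a = [0.0] * 10
run_b = [1e-3 * math.exp(0.1 * t) for t in range(10)]
estimate = empirical_lyapunov([run_a, run_b])
```

A positive slope indicates that nominally identical runs drift apart over rounds; a slope near zero or below indicates that they stay close or converge.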

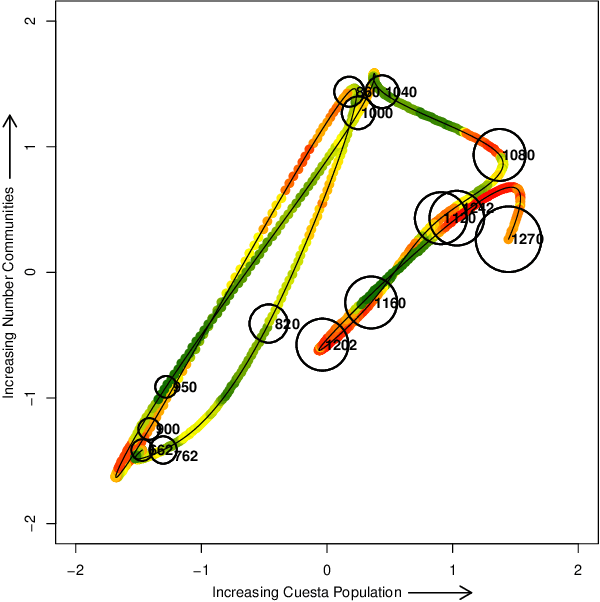

The Past as a Stochastic Process

Dec 11, 2021

Abstract:Historical processes manifest remarkable diversity. Nevertheless, scholars have long attempted to identify patterns and categorize historical actors and influences with some success. A stochastic process framework provides a structured approach for the analysis of large historical datasets that allows for detection of sometimes surprising patterns, identification of relevant causal actors both endogenous and exogenous to the process, and comparison between different historical cases. The combination of data, analytical tools and the organizing theoretical framework of stochastic processes complements traditional narrative approaches in history and archaeology.

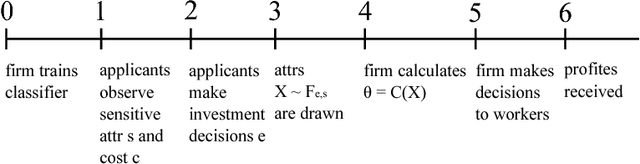

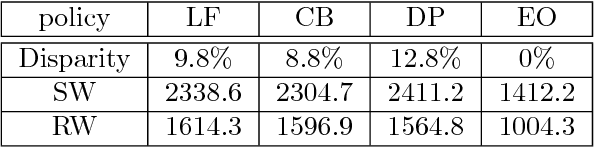

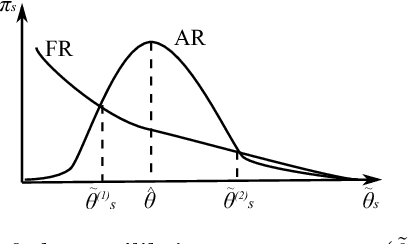

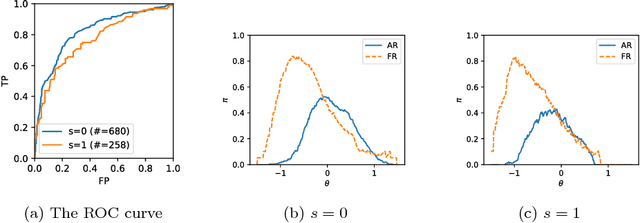

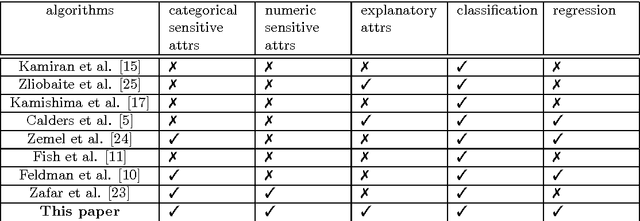

Comparing Fairness Criteria Based on Social Outcome

Jun 13, 2018

Abstract:Fairness in algorithmic decision-making processes is attracting increasing concern. When an algorithm is applied to human-related decision-making, an estimator that solely optimizes predictive power can learn biases present in the existing data, which motivates the notion of fairness in machine learning. While several different notions have been studied in the literature, few studies have examined how these notions affect individuals. We present such a comparison between several policies induced by well-known fairness criteria, including color-blind (CB), demographic parity (DP), and equalized odds (EO). We show that EO is the only criterion among them that removes group-level disparity. Empirical studies on the social welfare and disparity of these policies are conducted.
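To make two of the named criteria concrete, the sketch below computes the standard gap measures for demographic parity (difference in positive-decision rates between groups) and equalized odds (differences in true-positive and false-positive rates). It assumes binary decisions and a binary group label coded 0/1; the function names and the toy data are illustrative only and are not taken from the paper.

```python
def rate(values):
    """Mean of a list of 0/1 values; NaN if the list is empty."""
    return sum(values) / len(values) if values else float("nan")


def demographic_parity_gap(y_pred, group):
    """Absolute difference in positive-decision rates between groups 0 and 1."""
    g0 = [p for p, g in zip(y_pred, group) if g == 0]
    g1 = [p for p, g in zip(y_pred, group) if g == 1]
    return abs(rate(g0) - rate(g1))


def equalized_odds_gaps(y_true, y_pred, group):
    """Absolute between-group differences in TPR and FPR."""
    gaps = {}
    for label, name in ((1, "tpr_gap"), (0, "fpr_gap")):
        per_group = []
        for g in (0, 1):
            # Decisions for members of group g whose true outcome equals `label`.
            preds = [p for t, p, s in zip(y_true, y_pred, group)
                     if t == label and s == g]
            per_group.append(rate(preds))
        gaps[name] = abs(per_group[0] - per_group[1])
    return gaps


# Toy data (made up for illustration).
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
group = [0, 0, 0, 0, 1, 1, 1, 1]
y_pred = [1, 0, 0, 0, 1, 1, 1, 0]

dp_gap = demographic_parity_gap(y_pred, group)
eo_gaps = equalized_odds_gaps(y_true, y_pred, group)
```

A policy satisfies demographic parity when `dp_gap` is zero, and equalized odds when both entries of `eo_gaps` are zero.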

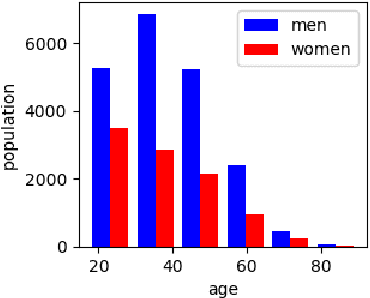

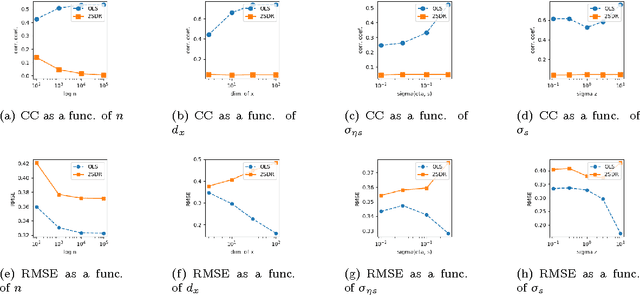

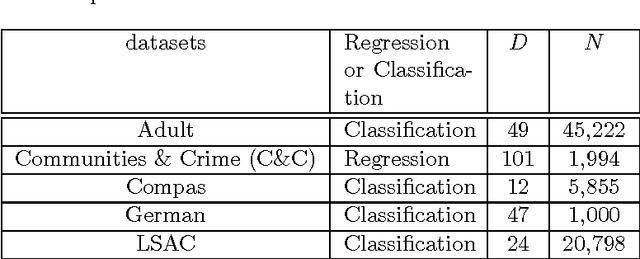

Two-stage Algorithm for Fairness-aware Machine Learning

Oct 13, 2017

Abstract:Algorithmic decision-making processes now affect many aspects of our lives. Standard tools for machine learning, such as classification and regression, are subject to the bias in data, and thus direct application of such off-the-shelf tools could lead to a specific group being unfairly discriminated against. Removing sensitive attributes from the data does not solve this problem because a *disparate impact* can arise when non-sensitive attributes and sensitive attributes are correlated. Here, we study a fair machine learning algorithm that avoids such a disparate impact when making a decision. Inspired by the two-stage least squares method that is widely used in the field of economics, we propose a two-stage algorithm that removes bias in the training data. The proposed algorithm is conceptually simple. Unlike most existing fair algorithms, which are designed for classification tasks, the proposed method is able to (i) deal with regression tasks, (ii) combine explanatory attributes to remove reverse discrimination, and (iii) deal with numerical sensitive attributes. The performance and fairness of the proposed algorithm are evaluated in simulations with synthetic and real-world datasets.
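The two-stage idea can be sketched in a simplified form: first regress a non-sensitive attribute on the sensitive attribute and keep the residual, then regress the outcome on that residual. The version below is a minimal illustration with one numerical sensitive attribute `s` and one non-sensitive attribute `x`; it is not the paper's full algorithm (it omits explanatory attributes, for instance), and all function names and data are assumptions made for the example.

```python
def ols_fit(x, y):
    """Univariate least squares with intercept; returns (intercept, slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - slope * mx, slope


def two_stage_fit(s, x, y):
    """Stage 1: x ~ s, keep residuals. Stage 2: y ~ residuals."""
    a, b = ols_fit(s, x)
    residuals = [xi - (a + b * si) for si, xi in zip(s, x)]
    c, d = ols_fit(residuals, y)
    return (a, b), (c, d)


def two_stage_predict(model, s, x):
    """Predict from the part of x that the sensitive attribute does not explain."""
    (a, b), (c, d) = model
    return [c + d * (xi - (a + b * si)) for si, xi in zip(s, x)]


def sample_cov(u, v):
    """Sample covariance (population normalization) of two equal-length lists."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    return sum((ui - mu) * (vi - mv) for ui, vi in zip(u, v)) / n


# Toy data (made up for illustration): x and y both correlate with s.
s = [0.0, 0.0, 1.0, 1.0, 2.0, 2.0]
x = [1.0, 2.0, 2.5, 3.5, 4.0, 5.5]
y = [1.0, 2.0, 3.0, 4.0, 5.0, 7.0]

model = two_stage_fit(s, x, y)
preds = two_stage_predict(model, s, x)
cov_with_s = sample_cov(preds, s)
```

Because least-squares residuals are uncorrelated with the regressor in the training sample, the resulting predictions have zero sample covariance with `s` on the training data, which is the sense in which this sketch removes linear dependence on the sensitive attribute.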
