Guy Engelhard

A Multisite, Report-Based, Centralized Infrastructure for Feedback and Monitoring of Radiology AI/ML Development and Clinical Deployment

Aug 31, 2020

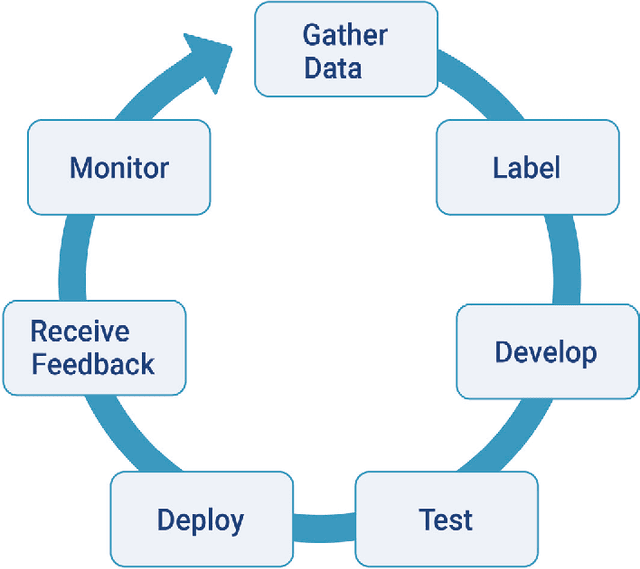

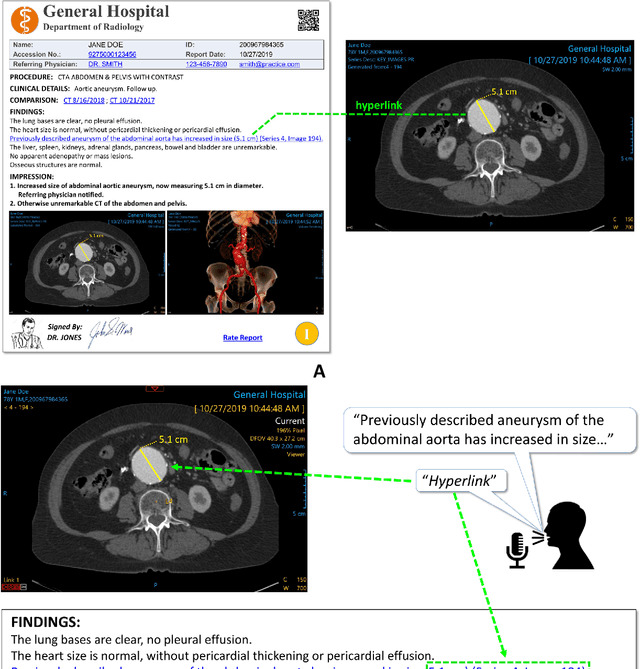

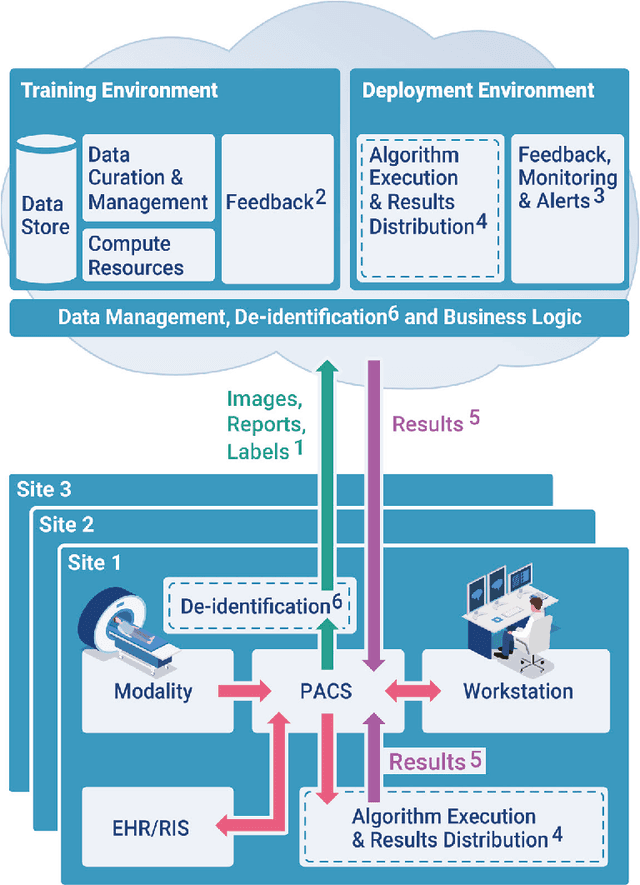

Abstract:An infrastructure for multisite, geographically-distributed creation and collection of diverse, high-quality, curated and labeled radiology image data is crucial for the successful automated development, deployment, monitoring and continuous improvement of Artificial Intelligence (AI)/Machine Learning (ML) solutions in the real world. An interactive radiology reporting approach that integrates image viewing, dictation, natural language processing (NLP) and creation of hyperlinks between image findings and the report, provides localized labels during routine interpretation. These images and labels can be captured and centralized in a cloud-based system. This method provides a practical and efficient mechanism with which to monitor algorithm performance. It also supplies feedback for iterative development and quality improvement of new and existing algorithmic models. Both feedback and monitoring are achieved without burdening the radiologist. The method addresses proposed regulatory requirements for post-marketing surveillance and external data. Comprehensive multi-site data collection assists in reducing bias. Resource requirements are greatly reduced compared to dedicated retrospective expert labeling.

Lung Nodules Detection and Segmentation Using 3D Mask-RCNN

Jul 17, 2019

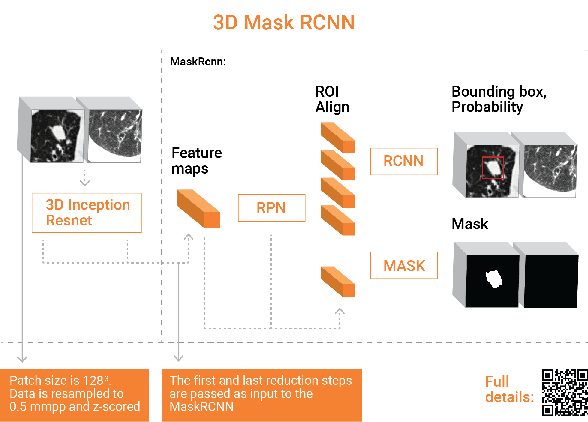

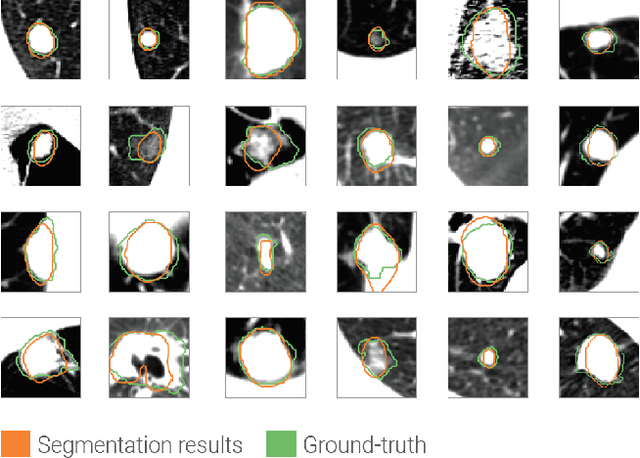

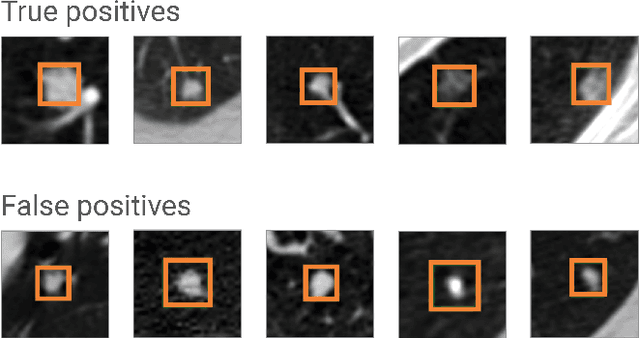

Abstract:Accurate assessment of Lung nodules is a time consuming and error prone ingredient of the radiologist interpretation work. Automating 3D volume detection and segmentation can improve workflow as well as patient care. Previous works have focused either on detecting lung nodules from a full CT scan or on segmenting them from a small ROI. We adapt the state of the art architecture for 2D object detection and segmentation, MaskRCNN, to handle 3D images and employ it to detect and segment lung nodules from CT scans. We report on competitive results for the lung nodule detection on LUNA16 data set. The added value of our method is that in addition to lung nodule detection, our framework produces 3D segmentations of the detected nodules.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge