Gianluca Palermo

EnergyLens: Interpretable Closed-Form Energy Models for Multimodal LLM Inference Serving

May 11, 2026

Abstract: As large language models span dense, mixture-of-experts, and state-space architectures and are deployed on heterogeneous accelerators under increasingly diverse multimodal workloads, optimizing inference energy has become as critical as optimizing latency and throughput. Existing approaches either treat latency as an energy proxy or rely on data-hungry black-box surrogates. Both fail under varying parallelism strategies: latency and energy optima diverge in over 20% of configurations we tested, and black-box surrogates require hundreds of profiling samples to generalize across model families and hardware. We present EnergyLens, which uses symbolic regression as a structure-discovery tool over profiling data to derive a single twelve-parameter closed-form energy model expressed in terms of system properties such as degree of parallelism, batch size, and sequence length. Unlike black-box surrogates, EnergyLens decouples tensor and pipeline parallelism contributions and separates prefill from decode energy, making its predictions physically interpretable and actionable. Fitted from as few as 50 profiling measurements, EnergyLens achieves 88.2% Top-1 configuration selection accuracy across many evaluation scenarios, compared to 60.9% for the closest prior analytical baseline. It matches the predictive accuracy of ensemble ML methods with 10x fewer profiling samples and extrapolates reliably to unseen batch sizes and hardware platforms without structural modification, making it a practical, interpretable tool for energy-optimal LLM deployment.

Dynamic Network selection for the Object Detection task: why it matters and what we (didn't) achieve

May 27, 2021

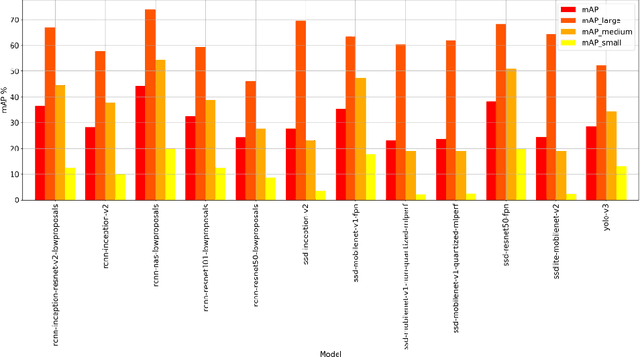

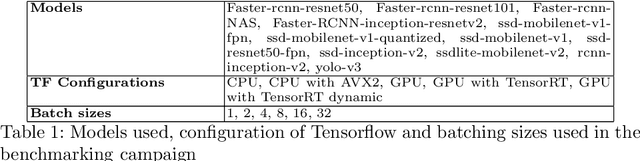

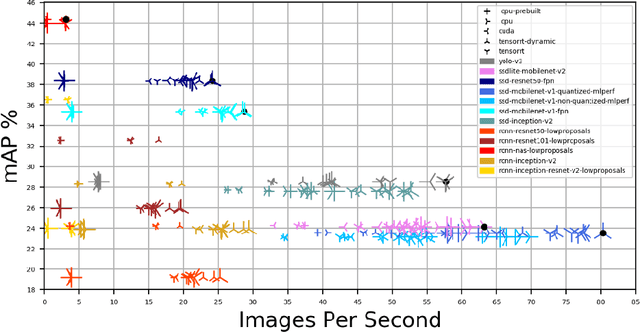

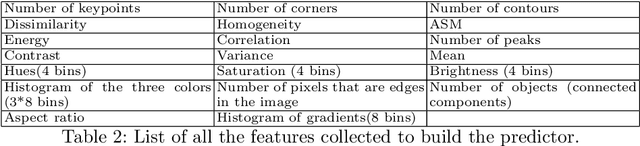

Abstract: In this paper, we show the potential benefit of a dynamic auto-tuning approach for the inference process in the Deep Neural Network (DNN) context, tackling the object detection challenge. We benchmarked different neural networks to find the optimal detector for the well-known COCO 17 database, and we demonstrate that, even if we consider only the quality of the prediction, there is no single optimal network. This is even more evident if we also consider the time to solution as a metric to evaluate, and then select, the most suitable network. This opens the possibility of an adaptive methodology that switches among different object detection networks according to run-time requirements (e.g. maximum quality subject to a time-to-solution constraint). Moreover, by developing an ad hoc oracle, we demonstrated that an additional proactive methodology could provide even greater benefits, allowing us to select the best network among the available ones given some characteristics of the processed image. To exploit this method, we need to identify image features that can be used to steer the decision toward the most promising network. Despite the optimization opportunity that has been identified, we were not able to identify a predictor function that validates this attempt, either by adopting classical image features or by using a DNN classifier.

A Survey on Compiler Autotuning using Machine Learning

Sep 03, 2018

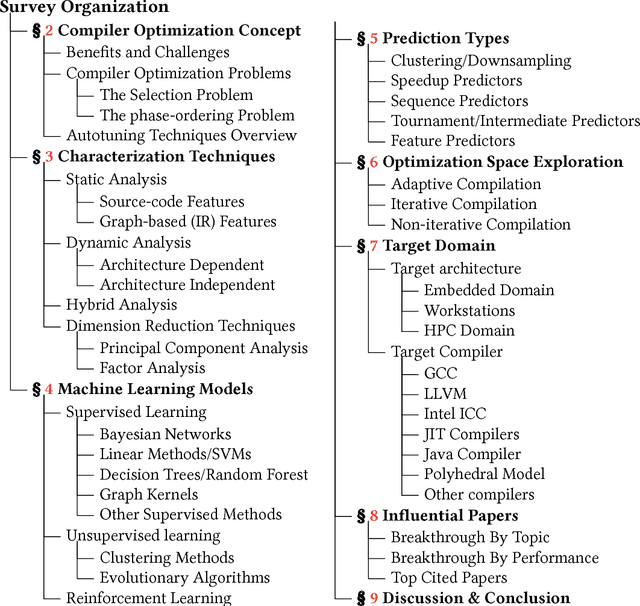

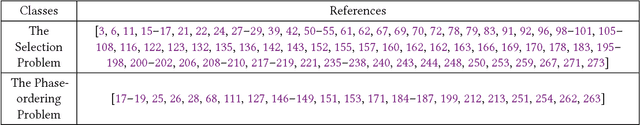

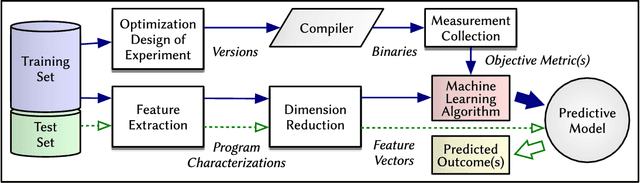

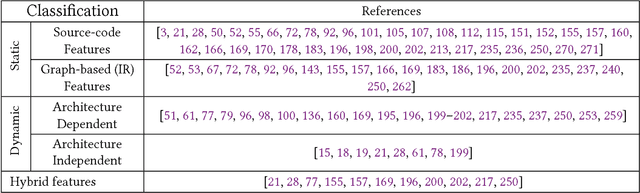

Abstract: Since the mid-1990s, researchers have been trying to use machine-learning-based approaches to solve a number of different compiler optimization problems. These techniques primarily enhance the quality of the obtained results and, more importantly, make it feasible to tackle two main compiler optimization problems: optimization selection (choosing which optimizations to apply) and phase-ordering (choosing the order of applying optimizations). The compiler optimization space continues to grow due to the advancement of applications, the increasing number of compiler optimizations, and new target architectures. Generic optimization passes in compilers cannot fully leverage newly introduced optimizations and, therefore, cannot keep up with the pace of increasing options. This survey summarizes and classifies the recent advances in using machine learning for the compiler optimization field, particularly on the two major problems of (1) selecting the best optimizations and (2) the phase-ordering of optimizations. The survey highlights the approaches taken so far, the obtained results, the fine-grained classification among different approaches, and, finally, the influential papers of the field.
