Gary Tan

Distribution-aware Online Continual Learning for Urban Spatio-Temporal Forecasting

Nov 24, 2024

Abstract: Urban spatio-temporal (ST) forecasting is crucial for various urban applications such as intelligent scheduling and trip planning. Previous studies focus on modeling ST correlations among urban locations in offline settings and often neglect the non-stationary nature of urban ST data, particularly distribution shifts over time. This oversight can lead to degraded performance in real-world scenarios. In this paper, we first analyze the distribution shifts in urban ST data, and then introduce DOST, a novel online continual learning framework tailored for ST data characteristics. DOST employs an adaptive ST network equipped with a variable-independent adapter to dynamically address the unique distribution shifts at each urban location. Further, to accommodate the gradual nature of these shifts, we develop an awake-hibernate learning strategy that intermittently fine-tunes the adapter during the online phase to reduce computational overhead. This strategy integrates a streaming memory update mechanism designed for urban ST sequential data, enabling effective network adaptation to new patterns while preventing catastrophic forgetting. Experimental results confirm DOST's superiority over state-of-the-art models on four real-world datasets, providing online forecasts within an average of 0.1 seconds and achieving a 12.89% reduction in forecast errors compared to baseline models.
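
The awake-hibernate idea described in the abstract can be illustrated with a toy loop. This is only a minimal sketch under stated assumptions, not DOST itself: the per-location additive bias (`BiasAdapter`), the FIFO memory buffer, the fixed cycle lengths, and all names and parameters below are hypothetical stand-ins for the adapter, the streaming memory update, and the schedule that the paper defines.

```python
from collections import deque


class BiasAdapter:
    """Hypothetical per-location additive correction on a frozen base forecast."""

    def __init__(self, num_locations):
        self.bias = [0.0] * num_locations

    def predict(self, base_forecast):
        return [b + f for b, f in zip(self.bias, base_forecast)]

    def fine_tune(self, memory, lr=0.5):
        # Move each location's bias toward the mean base-forecast error in memory.
        if not memory:
            return
        for i in range(len(self.bias)):
            mean_err = sum(obs[i] - base[i] for base, obs in memory) / len(memory)
            self.bias[i] += lr * (mean_err - self.bias[i])


def run_online(stream, num_locations, awake_steps=2, hibernate_steps=3, memory_size=4):
    """Forecast at every step, but fine-tune the adapter only while 'awake'."""
    adapter = BiasAdapter(num_locations)
    memory = deque(maxlen=memory_size)  # assumed FIFO stand-in for streaming memory
    cycle = awake_steps + hibernate_steps
    predictions = []
    for step, (base_forecast, observed) in enumerate(stream):
        predictions.append(adapter.predict(base_forecast))  # forecast first
        memory.append((base_forecast, observed))            # then store the new sample
        if step % cycle < awake_steps:                       # awake phase: adapt
            adapter.fine_tune(memory)
        # hibernate phase: the adapter stays frozen, only memory is updated
    return adapter, predictions


# Toy stream: location 0 is consistently under-forecast, location 1 over-forecast.
stream = [([1.0, 1.0], [2.0, 0.0]) for _ in range(10)]
adapter, predictions = run_online(stream, num_locations=2)
```

In this toy setting the adapter is updated on only 4 of the 10 steps, yet the memory buffer keeps collecting samples throughout, which is the cost-saving trade-off the abstract attributes to intermittent fine-tuning.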

PRNet: A Periodic Residual Learning Network for Crowd Flow Forecasting

Dec 08, 2021

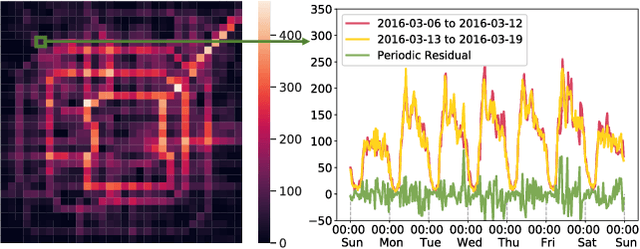

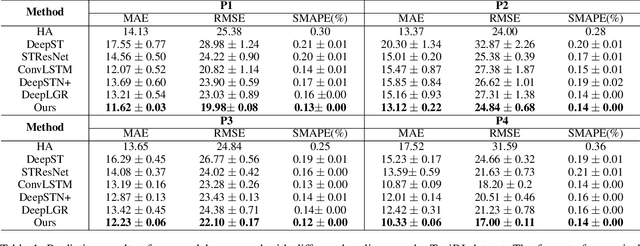

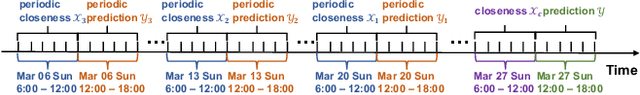

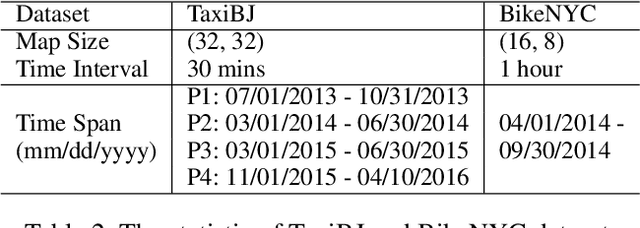

Abstract: Crowd flow forecasting, e.g., predicting the crowds entering or leaving certain regions, is of great importance to real-world urban applications. One of the key properties of crowd flow data is periodicity: a pattern that occurs at regular time intervals, such as a weekly pattern. To capture such periodicity, existing studies either explicitly model it based on the periodic hidden states or implicitly learn it by feeding all periodic segments into neural networks. In this paper, we devise a novel periodic residual learning network (PRNet) for better modeling the periodicity in crowd flow data. Differing from existing methods, PRNet frames crowd flow forecasting as a periodic residual learning problem by modeling the deviation between the input (the previous time period) and the output (the future time period). Compared to predicting highly dynamic crowd flows directly, learning such a stationary deviation is much easier, which facilitates model training. Besides, the learned deviation enables the network to produce the residual between future conditions and their corresponding weekly observations at each time interval, and therefore contributes to substantially better predictions. We further propose a lightweight Spatial-Channel Enhanced Encoder to build more powerful region representations by jointly capturing global spatial correlations and temporal dependencies. Experimental results on two real-world datasets demonstrate that PRNet outperforms the state-of-the-art methods in terms of both accuracy and robustness.
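
The periodic residual framing in the abstract can be shown on a single series. The following is a minimal sketch, assuming a fixed period and a plain mean of recent residuals as a stand-in for the learned network; the function names, the `window` parameter, and the toy data are hypothetical and are not taken from PRNet.

```python
def periodic_residuals(series, period):
    """Deviation of each value from the value observed one period earlier."""
    return [series[t] - series[t - period] for t in range(period, len(series))]


def forecast_next(series, period, horizon, window=3):
    """Forecast `horizon` steps as 'value one period ago + estimated residual'.

    The residual estimate is a mean over the last `window` residuals, a simple
    placeholder for the neural network that would learn this deviation.
    """
    if len(series) <= period:
        raise ValueError("need more than one full period of history")
    if horizon > period:
        raise ValueError("horizon must not exceed period in this sketch")
    recent = periodic_residuals(series, period)[-window:]
    estimated_residual = sum(recent) / len(recent)
    n = len(series)
    return [series[n + h - period] + estimated_residual for h in range(horizon)]


# Toy series with period 3 and a steady upward drift of 2 per period.
series = [10, 20, 30, 12, 22, 32, 14, 24, 34]
residuals = periodic_residuals(series, period=3)
forecast = forecast_next(series, period=3, horizon=3)
```

Here the raw series swings between roughly 10 and 34, while the residuals are constant, which illustrates why the deviation can be an easier learning target than the flow itself.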

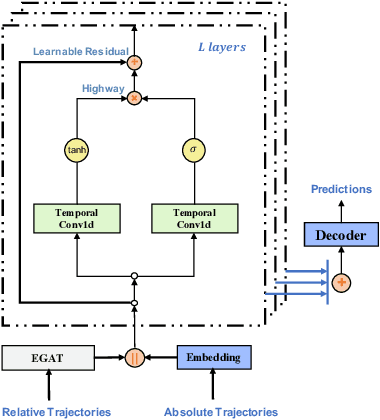

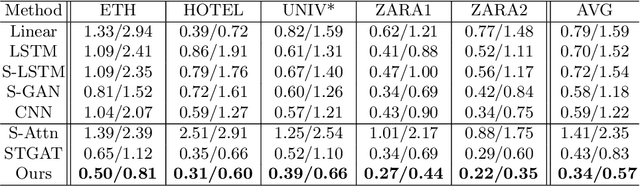

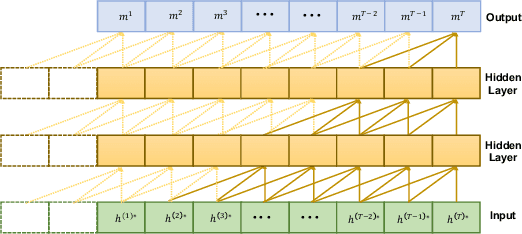

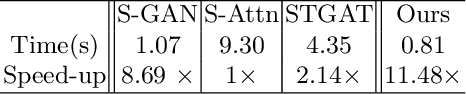

GraphTCN: Spatio-Temporal Interaction Modeling for Human Trajectory Prediction

Mar 26, 2020

Abstract: Trajectory prediction is a fundamental and challenging task to forecast the future path of the agents in autonomous applications with multi-agent interaction, where the agents need to predict the future movements of their neighbors to avoid collisions. To respond timely and precisely to the environment, high efficiency and accuracy are required in the prediction. Conventional approaches, e.g., LSTM-based models, incur considerable computation costs in the prediction, especially for long sequence prediction. To support more efficient and accurate trajectory prediction, we instead propose a novel CNN-based spatial-temporal graph framework, GraphTCN, which captures the spatial and temporal interactions in an input-aware manner. The spatial interaction between agents at each time step is captured with an edge graph attention network (EGAT), and the temporal interaction across time steps is modeled with a modified gated convolutional network (CNN). In contrast to conventional models, both the spatial and temporal modeling in GraphTCN are computed within each local time window. Therefore, GraphTCN can be executed in parallel for much higher efficiency, while achieving accuracy comparable to best-performing approaches. Experimental results confirm that GraphTCN achieves noticeably better performance in terms of both efficiency and accuracy compared with state-of-the-art methods on various trajectory prediction benchmark datasets.
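
To make the "local time window" point concrete, here is a minimal sketch of a generic gated 1D temporal convolution. It assumes the common tanh-filter times sigmoid-gate form, which may differ from the modified gating GraphTCN actually uses; the function names and kernels are hypothetical, and the graph attention part is not shown.

```python
import math


def conv1d_valid(x, kernel):
    """Plain 1D convolution without padding: each output sees len(kernel) inputs."""
    k = len(kernel)
    return [sum(kernel[j] * x[t + j] for j in range(k)) for t in range(len(x) - k + 1)]


def gated_temporal_conv(x, filter_kernel, gate_kernel):
    """Gated activation: tanh(filter response) scaled by sigmoid(gate response)."""
    f = conv1d_valid(x, filter_kernel)
    g = conv1d_valid(x, gate_kernel)
    return [math.tanh(a) * (1.0 / (1.0 + math.exp(-b))) for a, b in zip(f, g)]


# Each output position depends only on its own window of inputs, so all
# positions could be computed independently, unlike a recurrent update.
out = gated_temporal_conv([1.0, 2.0, 3.0], filter_kernel=[1.0, 0.0], gate_kernel=[0.0, 0.0])
```

With a zero gate kernel the sigmoid evaluates to 0.5 everywhere, so the output is half of `tanh` applied to the filter response at each position.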
