Fiaz Ahmed

ClimSim: An open large-scale dataset for training high-resolution physics emulators in hybrid multi-scale climate simulators

Jun 16, 2023Abstract:Modern climate projections lack adequate spatial and temporal resolution due to computational constraints. A consequence is inaccurate and imprecise prediction of critical processes such as storms. Hybrid methods that combine physics with machine learning (ML) have introduced a new generation of higher fidelity climate simulators that can sidestep Moore's Law by outsourcing compute-hungry, short, high-resolution simulations to ML emulators. However, this hybrid ML-physics simulation approach requires domain-specific treatment and has been inaccessible to ML experts because of lack of training data and relevant, easy-to-use workflows. We present ClimSim, the largest-ever dataset designed for hybrid ML-physics research. It comprises multi-scale climate simulations, developed by a consortium of climate scientists and ML researchers. It consists of 5.7 billion pairs of multivariate input and output vectors that isolate the influence of locally-nested, high-resolution, high-fidelity physics on a host climate simulator's macro-scale physical state. The dataset is global in coverage, spans multiple years at high sampling frequency, and is designed such that resulting emulators are compatible with downstream coupling into operational climate simulators. We implement a range of deterministic and stochastic regression baselines to highlight the ML challenges and their scoring. The data (https://huggingface.co/datasets/LEAP/ClimSim_high-res) and code (https://leap-stc.github.io/ClimSim) are released openly to support the development of hybrid ML-physics and high-fidelity climate simulations for the benefit of science and society.

Climate-Invariant Machine Learning

Dec 14, 2021

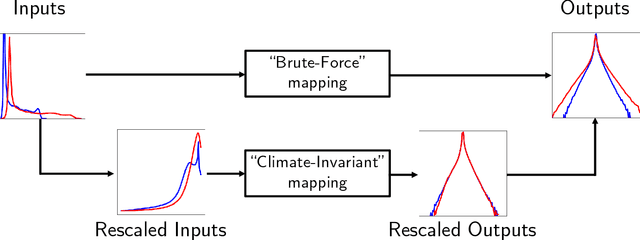

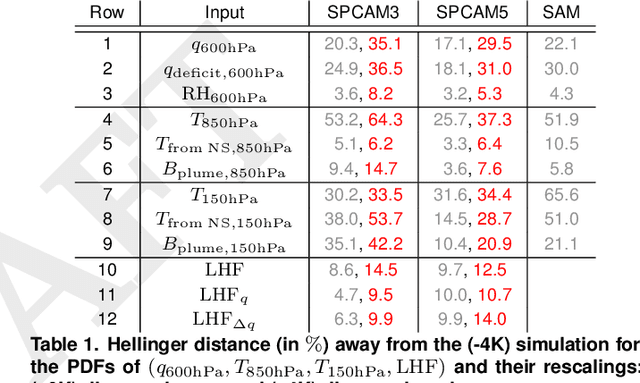

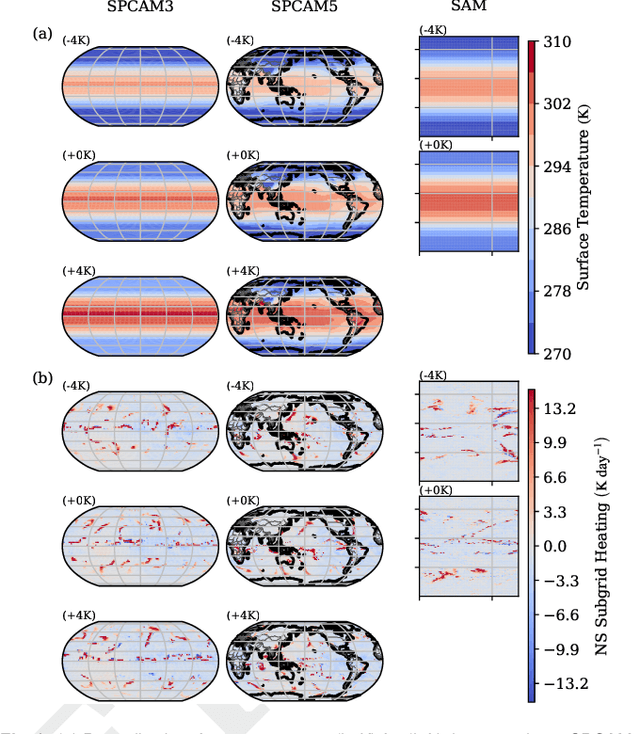

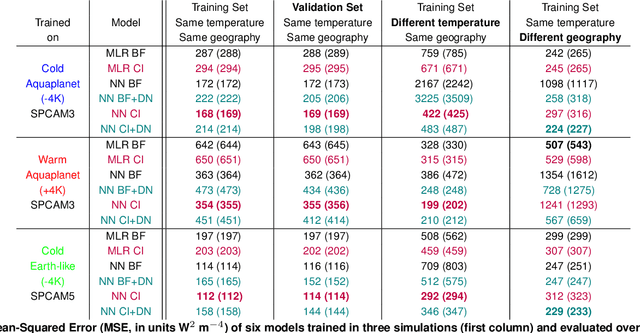

Abstract:Data-driven algorithms, in particular neural networks, can emulate the effects of unresolved processes in coarse-resolution climate models when trained on high-resolution simulation data; however, they often make large generalization errors when evaluated in conditions they were not trained on. Here, we propose to physically rescale the inputs and outputs of machine learning algorithms to help them generalize to unseen climates. Applied to offline parameterizations of subgrid-scale thermodynamics in three distinct climate models, we show that rescaled or "climate-invariant" neural networks make accurate predictions in test climates that are 4K and 8K warmer than their training climates. Additionally, "climate-invariant" neural nets facilitate generalization between Aquaplanet and Earth-like simulations. Through visualization and attribution methods, we show that compared to standard machine learning models, "climate-invariant" algorithms learn more local and robust relations between storm-scale convection, radiation, and their synoptic thermodynamic environment. Overall, these results suggest that explicitly incorporating physical knowledge into data-driven models of Earth system processes can improve their consistency and ability to generalize across climate regimes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge