Fernando L. Teixeira

Parametric Interpolation of Dynamic Mode Decomposition for Predicting Nonlinear Systems

Apr 13, 2026

Abstract: We present parameter-interpolated dynamic mode decomposition (piDMD), a parametric reduced-order modeling framework that embeds known parameter-affine structure directly into the DMD regression step. Unlike existing parametric DMD methods, which interpolate modes, eigenvalues, or reduced operators and can be fragile with sparse training data or multi-dimensional parameter spaces, piDMD learns a single parameter-affine Koopman surrogate reduced-order model (ROM) from multiple training parameter samples and predicts at unseen parameter values without retraining. We validate piDMD on fluid flow past a cylinder, electron beam oscillations in transverse magnetic fields, and virtual cathode oscillations; the latter two are simulated using an electromagnetic particle-in-cell (EMPIC) method. Across all benchmarks, piDMD achieves accurate long-horizon predictions and improved robustness over state-of-the-art interpolation-based parametric DMD baselines, including with fewer training samples and in multi-dimensional parameter spaces.
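To make "embedding parameter-affine structure into the DMD regression step" concrete, here is a minimal sketch of one way such a regression could look. It assumes a linear one-step operator of the form A(μ) = A_0 + Σ_j μ_j A_j acting directly on the full state, with no POD projection, no nonlinear observables, and noise-free synthetic data. The function names `fit_affine_dmd` and `predict_affine` are hypothetical; this is an illustration of the idea, not the authors' piDMD implementation.

```python
import numpy as np

def fit_affine_dmd(trajectories, params):
    """Jointly fit A(mu) = A_0 + sum_j mu_j A_j from several training trajectories.

    trajectories: list of (n_states, n_times) snapshot matrices, one per training parameter.
    params: (n_samples, n_params) array of the matching parameter values.
    Returns [A_0, A_1, ..., A_p].
    """
    params = np.atleast_2d(np.asarray(params, dtype=float))
    lifted, targets = [], []
    for X, mu in zip(trajectories, params):
        X0, X1 = X[:, :-1], X[:, 1:]  # snapshot pairs x_k -> x_{k+1}
        # Stack [x; mu_1 x; ...; mu_p x] so that X1 = [A_0 A_1 ... A_p] @ Z
        lifted.append(np.vstack([X0] + [m * X0 for m in mu]))
        targets.append(X1)
    Z, Y = np.hstack(lifted), np.hstack(targets)
    # One least-squares solve over all training parameters: Z.T @ W.T = Y.T
    W = np.linalg.lstsq(Z.T, Y.T, rcond=None)[0].T
    n = Y.shape[0]
    return [W[:, i * n:(i + 1) * n] for i in range(params.shape[1] + 1)]

def predict_affine(operators, mu, x0, n_steps):
    """Roll out x_{k+1} = A(mu) x_k at any parameter value, seen or unseen."""
    A = operators[0] + sum(m * Ak for m, Ak in zip(np.atleast_1d(mu), operators[1:]))
    states = [np.asarray(x0, dtype=float)]
    for _ in range(n_steps):
        states.append(A @ states[-1])
    return np.column_stack(states)

# Synthetic check: data generated by a known affine linear system (assumption for illustration).
rng = np.random.default_rng(0)
n = 4
A0_true = 0.9 * np.linalg.qr(rng.standard_normal((n, n)))[0]  # scaled orthogonal, stable
A1_true = 0.02 * rng.standard_normal((n, n))                   # small parameter sensitivity
true_ops = [A0_true, A1_true]

train_mu = [[-1.0], [0.0], [1.0]]
trajectories = [predict_affine(true_ops, mu, rng.standard_normal(n), 30) for mu in train_mu]
ops = fit_affine_dmd(trajectories, train_mu)

mu_test, x0 = 0.5, rng.standard_normal(n)  # mu = 0.5 is not among the training samples
err = np.linalg.norm(predict_affine(ops, mu_test, x0, 50) - predict_affine(true_ops, mu_test, x0, 50))
print(f"prediction error at unseen mu: {err:.2e}")
```

Because μ enters linearly, a single least-squares solve covers all training samples, and predicting at a new μ only requires forming A(μ); nothing is interpolated between per-parameter models. A practical version would likely project snapshots onto a reduced basis first and, for nonlinear dynamics, choose suitable observables; both are omitted in this sketch.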

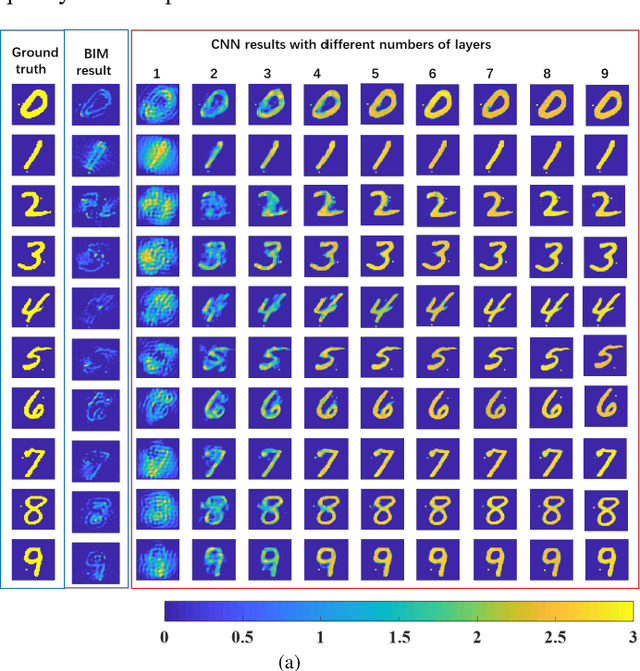

Performance Analysis and Dynamic Evolution of Deep Convolutional Neural Network for Nonlinear Inverse Scattering

Jan 09, 2019

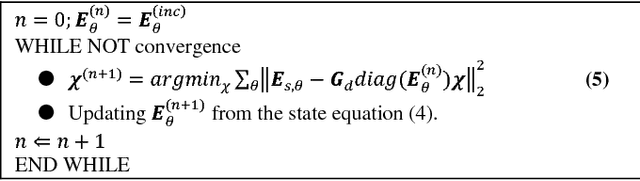

Abstract: The solution of nonlinear electromagnetic (EM) inverse scattering problems is typically hindered by several challenges, such as ill-posedness, strong nonlinearity, and high computational cost. Recently, deep learning has been shown to be a promising tool for addressing these challenges. In particular, a connection can be established between a deep convolutional neural network (CNN) and iterative solution methods for nonlinear EM inverse scattering. This has led to the development of an efficient CNN-based solver for nonlinear EM inverse problems, termed DeepNIS, which has been shown to outperform conventional nonlinear inverse scattering methods in both image quality and computational time. In this work, we quantitatively evaluate the performance of DeepNIS as a function of the number of layers using the structural similarity index measure (SSIM) and mean-square error (MSE) metrics. In addition, we probe the dynamic evolution behavior of DeepNIS by examining its near-isometry property. It is shown that, after proper training, the proposed CNN is near-optimal in terms of stability and generalization ability.
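The two image-quality metrics named above can be sketched as follows, assuming reconstructions and ground truth are 2-D arrays scaled to [0, 1]. For brevity, `global_ssim` (a hypothetical helper name) evaluates the SSIM statistic over a single window covering the whole image; the standard SSIM instead averages this statistic over local, typically 11×11 Gaussian-weighted, windows, as implemented for example by `skimage.metrics.structural_similarity`. The stabilizing constants C1 = (0.01 L)² and C2 = (0.03 L)² follow the original SSIM definition, with L the data range. This is not the evaluation code used for DeepNIS.

```python
import numpy as np

def mse(x, y):
    """Mean-square error between two images of the same shape."""
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    return float(np.mean((x - y) ** 2))

def global_ssim(x, y, data_range=1.0):
    """SSIM statistic computed over one window spanning the whole image (simplified)."""
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    c1 = (0.01 * data_range) ** 2  # stabilizes the luminance term
    c2 = (0.03 * data_range) ** 2  # stabilizes the contrast/structure term
    mx, my = x.mean(), y.mean()
    vx, vy = x.var(), y.var()
    cov = np.mean((x - mx) * (y - my))
    num = (2 * mx * my + c1) * (2 * cov + c2)
    den = (mx ** 2 + my ** 2 + c1) * (vx + vy + c2)
    return float(num / den)

# Illustrative use on a random "ground truth" and a noisy "reconstruction".
rng = np.random.default_rng(0)
truth = rng.random((64, 64))
noisy = np.clip(truth + 0.1 * rng.standard_normal(truth.shape), 0.0, 1.0)
print("identical:", mse(truth, truth), global_ssim(truth, truth))
print("noisy:    ", mse(truth, noisy), global_ssim(truth, noisy))
```

MSE penalizes pixel-wise differences uniformly, whereas SSIM compares means, variances, and covariance, so the two can rank reconstructions differently; reporting both gives a more complete picture.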
