Debdipta Goswami

Parametric Interpolation of Dynamic Mode Decomposition for Predicting Nonlinear Systems

Apr 13, 2026

Abstract:We present parameter-interpolated dynamic mode decomposition (piDMD), a parametric reduced-order modeling framework that embeds known parameter-affine structure directly into the DMD regression step. Unlike existing parametric DMD methods, which interpolate modes, eigenvalues, or reduced operators and can be fragile with sparse training data or multi-dimensional parameter spaces, piDMD learns a single parameter-affine Koopman surrogate reduced-order model (ROM) across multiple training parameter samples and predicts at unseen parameter values without retraining. We validate piDMD on fluid flow past a cylinder, electron beam oscillations in transverse magnetic fields, and virtual cathode oscillations, the latter two simulated using an electromagnetic particle-in-cell (EMPIC) method. Across all benchmarks, piDMD achieves accurate long-horizon predictions and improved robustness over state-of-the-art interpolation-based parametric DMD baselines, with fewer training samples and in multi-dimensional parameter spaces.
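To make the idea of embedding parameter-affine structure in the regression step concrete, here is a minimal sketch. It is not the authors' piDMD algorithm. It assumes a scalar parameter `mu`, a full-state linear model of the hypothetical form x_{k+1} = (A0 + mu * A1) x_k, and synthetic data; the names `simulate`, `fit_affine_dmd`, `A0_true`, and `A1_true` are illustrative only.

```python
import numpy as np

def simulate(A, x0, steps):
    """Roll out x_{k+1} = A x_k and return an array of shape (n_states, steps + 1)."""
    traj = [np.asarray(x0, dtype=float)]
    for _ in range(steps):
        traj.append(A @ traj[-1])
    return np.stack(traj, axis=1)

def fit_affine_dmd(snapshots, params):
    """Jointly fit A0 and A1 in x_{k+1} ~ (A0 + mu * A1) x_k over all trajectories.

    snapshots: list of arrays of shape (n_states, n_times), one per parameter
    params:    list of scalar parameter values (assumed affine dependence)
    """
    inputs, targets = [], []
    for X, mu in zip(snapshots, params):
        X0, X1 = X[:, :-1], X[:, 1:]
        # [A0 A1] @ [x; mu * x] equals (A0 + mu * A1) @ x
        inputs.append(np.vstack([X0, mu * X0]))
        targets.append(X1)
    Z = np.hstack(inputs)
    Y = np.hstack(targets)
    A_stack = Y @ np.linalg.pinv(Z)  # least-squares solution of Y ~ [A0 A1] Z
    n = Y.shape[0]
    return A_stack[:, :n], A_stack[:, n:]

# Synthetic ground truth (assumed for illustration, not from the paper)
A0_true = np.array([[0.9, 0.1], [-0.1, 0.9]])
A1_true = np.array([[0.0, 0.05], [-0.05, 0.0]])

train_params = [0.0, 0.5, 1.0]
train_inits = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
train_data = [
    simulate(A0_true + mu * A1_true, x0, steps=30)
    for mu, x0 in zip(train_params, train_inits)
]

A0_hat, A1_hat = fit_affine_dmd(train_data, train_params)

# Predict at an unseen parameter value without refitting
mu_test = 0.75
pred = simulate(A0_hat + mu_test * A1_hat, [0.5, -0.5], steps=20)
truth = simulate(A0_true + mu_test * A1_true, [0.5, -0.5], steps=20)
```

Because a single regression is solved over all training parameters, prediction at `mu_test` needs only the fitted pair `A0_hat`, `A1_hat`, with no interpolation of modes or eigenvalues.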

On Data-Driven Koopman Representations of Nonlinear Delay Differential Equations

Apr 03, 2026

Abstract:This work establishes a rigorous bridge between infinite-dimensional delay dynamics and finite-dimensional Koopman learning, with explicit and interpretable error guarantees. While Koopman analysis is well-developed for ordinary differential equations (ODEs) and partially for partial differential equations (PDEs), its extension to delay differential equations (DDEs) remains limited due to the infinite-dimensional phase space of DDEs. We propose a finite-dimensional Koopman approximation framework based on history discretization and a suitable reconstruction operator, enabling a tractable representation of the Koopman operator via kernel-based extended dynamic mode decomposition (kEDMD). Deterministic error bounds are derived for the learned predictor, decomposing the total error into contributions from history discretization, kernel interpolation, and data-driven regression. Additionally, we develop a kernel-based reconstruction method to recover discretized states from lifted Koopman coordinates, with provable guarantees. Numerical results demonstrate convergence of the learned predictor with respect to both discretization resolution and training data, supporting reliable prediction and control of delay systems.

Feature-Based Echo-State Networks: A Step Towards Interpretability and Minimalism in Reservoir Computer

Mar 28, 2024

Abstract:This paper proposes a novel and interpretable recurrent neural-network structure using the echo-state network (ESN) paradigm for time-series prediction. While traditional ESNs perform well for dynamical systems prediction, they need a large dynamic reservoir, which increases computational complexity. They also lack the interpretability needed to discern the contributions of different input combinations to the output. Here, a systematic reservoir architecture is developed using smaller parallel reservoirs driven by different input combinations, known as features, which are then nonlinearly combined to produce the output. The resultant feature-based ESN (Feat-ESN) outperforms the traditional single-reservoir ESN with fewer reservoir nodes. The predictive capability of the proposed architecture is demonstrated on three systems: two synthetic datasets from chaotic dynamical systems and a set of real-time traffic data.

Temporally-Consistent Koopman Autoencoders for Forecasting Dynamical Systems

Mar 19, 2024

Abstract:Absence of sufficiently high-quality data often poses a key challenge in data-driven modeling of high-dimensional spatio-temporal dynamical systems. Koopman Autoencoders (KAEs) harness the expressivity of deep neural networks (DNNs), the dimension reduction capabilities of autoencoders, and the spectral properties of the Koopman operator to learn a reduced-order feature space with simpler, linear dynamics. However, the effectiveness of KAEs is hindered by limited and noisy training datasets, leading to poor generalizability. To address this, we introduce the Temporally-Consistent Koopman Autoencoder (tcKAE), designed to generate accurate long-term predictions even with constrained and noisy training data. This is achieved through a consistency regularization term that enforces prediction coherence across different time steps, thus enhancing the robustness and generalizability of tcKAE over existing models. We provide analytical justification for this approach based on Koopman spectral theory and empirically demonstrate tcKAE's superior performance over state-of-the-art KAE models across a variety of test cases, including simple pendulum oscillations, kinetic plasmas, fluid flows, and sea surface temperature data.

Sequential Learning from Noisy Data: Data-Assimilation Meets Echo-State Network

Apr 01, 2023

Abstract:This paper explores the problem of training a recurrent neural network from noisy data. While neural-network-based dynamic predictors perform well with noise-free training data, prediction with noisy inputs during the training phase poses a significant challenge. Here a sequential training algorithm is developed for an echo-state network (ESN) by incorporating noisy observations using an ensemble Kalman filter. The resultant Kalman-trained echo-state network (KalT-ESN) outperforms the ESN trained traditionally with a least-squares algorithm while remaining computationally cheap. The proposed method is demonstrated on noisy observations from three systems: two synthetic datasets from chaotic dynamical systems and a set of real-time traffic data.

Delay Embedded Echo-State Network: A Predictor for Partially Observed Systems

Nov 11, 2022

Abstract:This paper considers the problem of data-driven prediction of partially observed systems using a recurrent neural network. While neural-network-based dynamic predictors perform well with full-state training data, prediction with partial observations during the training phase poses a significant challenge. Here a predictor for partial observations is developed using an echo-state network (ESN) and time-delay embedding of the partially observed state. The proposed method is theoretically justified with Takens' embedding theorem and strong observability of a nonlinear system. The efficacy of the proposed method is demonstrated on three systems: two synthetic datasets from chaotic dynamical systems and a set of real-time traffic data.
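As a small illustration of time-delay embedding, the sketch below stacks lagged copies of a scalar observation into delay vectors that could then serve as network input. The function name `delay_embed` and its `dim` and `lag` parameters are hypothetical choices for this example; the abstract does not specify how the embedding is constructed or how it is fed to the ESN.

```python
def delay_embed(series, dim, lag=1):
    """Build delay vectors [y_t, y_{t-lag}, ..., y_{t-(dim-1)*lag}].

    series: sequence of scalar observations
    dim:    embedding dimension (number of delayed copies)
    lag:    spacing between delayed samples, in time steps
    """
    if dim < 1 or lag < 1:
        raise ValueError("dim and lag must be positive integers")
    start = (dim - 1) * lag  # first index with a full history available
    return [
        [series[t - j * lag] for j in range(dim)]
        for t in range(start, len(series))
    ]

observations = [0, 1, 2, 3, 4, 5]
embedded = delay_embed(observations, dim=3, lag=2)
# embedded == [[4, 2, 0], [5, 3, 1]]
```

Each row pairs the current observation with its own past values, which is the construction that Takens' theorem relates to the unobserved full state under suitable conditions.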

Investigation of A Collective Decision Making System of Different Neighbourhood-Size Based on Hyper-Geometric Distribution

Oct 21, 2014

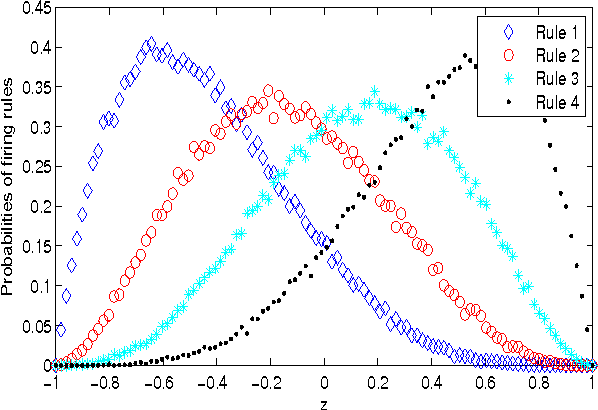

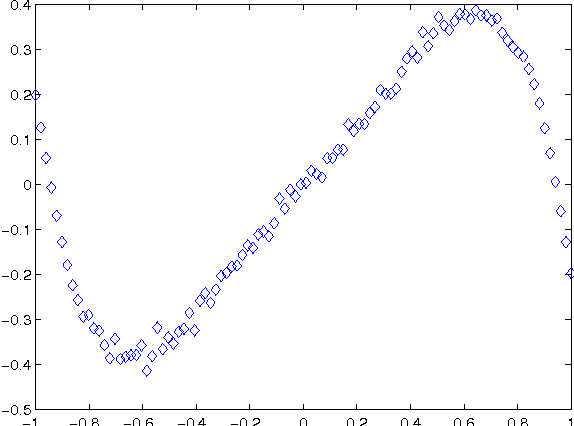

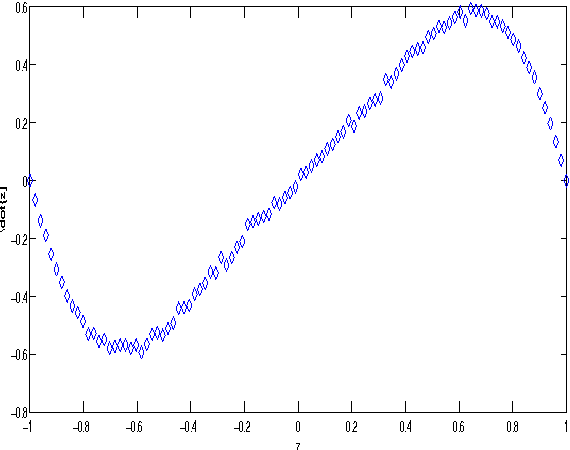

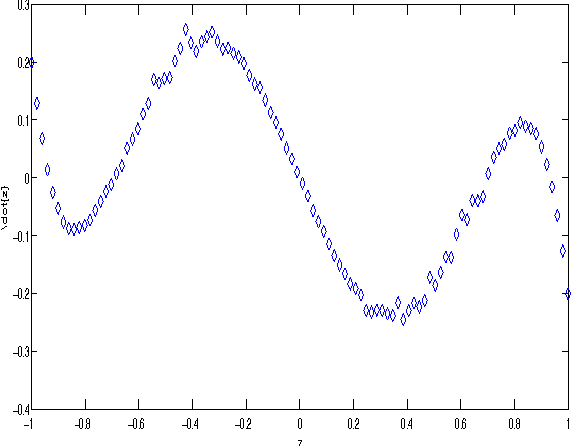

Abstract:The study of collective decision-making systems has become a central part of swarm-intelligence research in recent years. The most challenging task in modelling a collective decision-making system is to derive the macroscopic stochastic equation from its microscopic model. In this report we investigate the behaviour of a collective decision-making system with specified microscopic rules that resemble chemical reactions, using different group sizes. We then derive a generalized analytical model of a collective decision-making system using the hyper-geometric distribution.

Index Terms: swarm; collective decision making; noise; group size; hyper-geometric distribution
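For readers unfamiliar with the hyper-geometric distribution, the following sketch computes its probability mass function. The interpretation used here is an assumption made for illustration, not the report's model: an agent samples a neighbourhood of `draws` agents without replacement from a swarm of `population` agents, of which `successes` hold a given opinion.

```python
from math import comb

def hypergeom_pmf(k, population, successes, draws):
    """P(exactly k of `draws` sampled agents hold the opinion), sampling without replacement."""
    k_min = max(0, draws - (population - successes))
    k_max = min(draws, successes)
    if k < k_min or k > k_max:
        return 0.0
    return (
        comb(successes, k)
        * comb(population - successes, draws - k)
        / comb(population, draws)
    )

# Swarm of 10 agents, 4 holding the opinion, neighbourhood of size 3
p_two = hypergeom_pmf(2, population=10, successes=4, draws=3)   # 6 * 6 / 120 = 0.3
total = sum(hypergeom_pmf(k, 10, 4, 3) for k in range(0, 4))    # probabilities sum to 1
```

Varying `draws` corresponds to changing the neighbourhood size, which is the quantity the title singles out.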

Multi-Agent Shape Formation and Tracking Inspired from a Social Foraging Dynamics

Oct 16, 2014

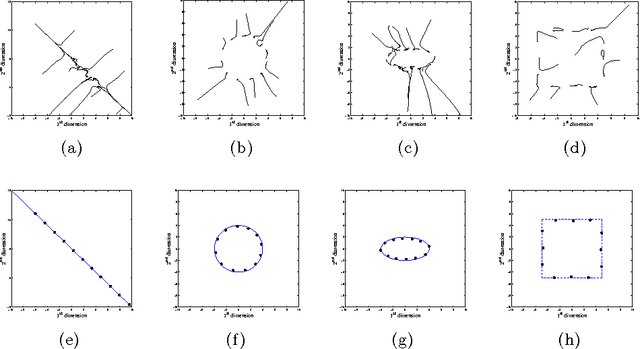

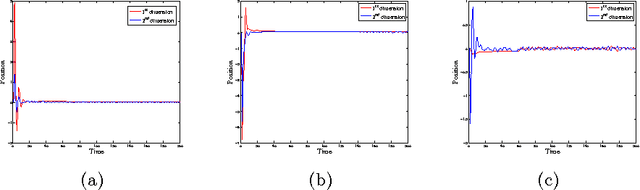

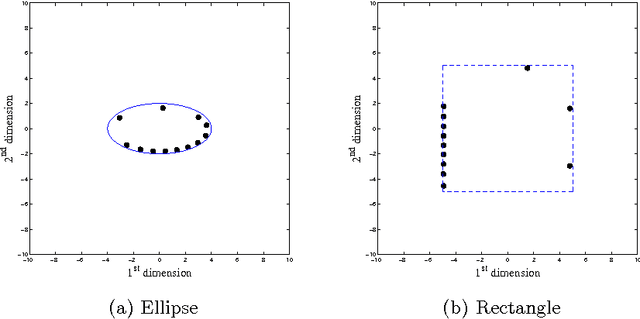

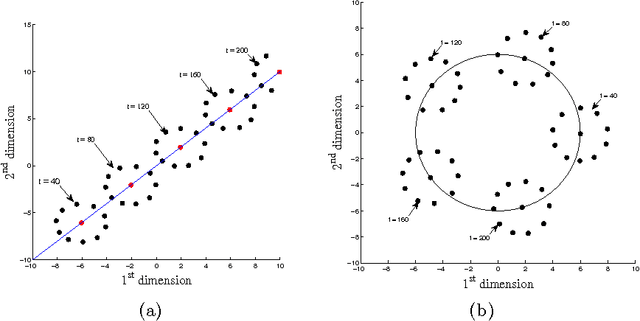

Abstract:The principles of swarm intelligence have recently found widespread application in formation control and automated tracking by multi-agent systems. This article proposes an elegant and effective method, inspired by foraging dynamics, for producing geometric patterns with search agents. Starting from a random initial orientation, we investigate how the foraging dynamics can be modified so that the agents converge on the desired pattern with almost uniform density. Guided by the proposed dynamics, the agents can also track a moving point by continuously circulating around it. An analytical treatment, supported by computer simulation results, is provided to better understand the convergence behaviour of the system.
