Farzad Pourbabaee

Trading off Relevance and Revenue in the Jobs Marketplace: Estimation, Optimization and Auction Design

Apr 04, 2025

Abstract: We study the problem of position allocation in job marketplaces, where the platform determines the ranking of jobs for each seeker. The design of ranking mechanisms is critical to marketplace efficiency, as it influences both short-term revenue from promoted job placements and long-term marketplace health through sustained seeker engagement. Our analysis focuses on the tradeoff between revenue and relevance, as well as on innovations in job auction design. We demonstrate two ways to improve relevance with minimal impact on revenue: incorporating seekers' preferences and applying position-aware auctions.
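The revenue-relevance tradeoff can be illustrated with a toy ranking rule. The Python sketch below is not the paper's estimator or auction design. It assumes a hypothetical separable click model in which the job placed in slot $k$ is engaged with probability $w_k r_j$, a promoted job pays a per-engagement bid $b_j$ (organic jobs bid $0$), and the platform ranks by the weighted-sum score $(1-\lambda) r_j + \lambda r_j b_j$. The names `rank_jobs`, `evaluate`, and `brute_force_best` and all numbers are illustrative only.

```python
from itertools import permutations
from typing import List, Sequence, Tuple


def blended_scores(relevance: Sequence[float], bids: Sequence[float], lam: float) -> List[float]:
    """Per-job score (1 - lam) * r_j + lam * r_j * b_j; lam = 0 is pure relevance, lam = 1 pure revenue."""
    return [(1.0 - lam) * r + lam * r * b for r, b in zip(relevance, bids)]


def rank_jobs(relevance: Sequence[float], bids: Sequence[float], lam: float) -> List[int]:
    """Return job indices sorted by blended score, best slot first."""
    scores = blended_scores(relevance, bids, lam)
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)


def evaluate(order: Sequence[int], relevance: Sequence[float], bids: Sequence[float],
             position_weights: Sequence[float]) -> Tuple[float, float]:
    """Expected relevance and expected revenue of a ranking under the assumed click model.

    Hypothetical model: the job j in slot k is engaged with probability
    position_weights[k] * relevance[j] and pays bids[j] per engagement.
    """
    rel = sum(w * relevance[j] for w, j in zip(position_weights, order))
    rev = sum(w * relevance[j] * bids[j] for w, j in zip(position_weights, order))
    return rel, rev


def brute_force_best(relevance: Sequence[float], bids: Sequence[float],
                     position_weights: Sequence[float], lam: float) -> Tuple[List[int], float]:
    """Exhaustively find the ranking maximizing (1 - lam) * relevance + lam * revenue."""
    def objective(order: Sequence[int]) -> float:
        rel, rev = evaluate(order, relevance, bids, position_weights)
        return (1.0 - lam) * rel + lam * rev

    best = max(permutations(range(len(relevance))), key=objective)
    return list(best), objective(best)


if __name__ == "__main__":
    # Hypothetical toy inputs: two organic jobs (bid 0) and two promoted jobs.
    relevance = [0.30, 0.25, 0.12, 0.04]
    bids = [0.0, 0.5, 2.0, 5.0]
    position_weights = [1.0, 0.6, 0.35, 0.2]  # nonincreasing attention by slot
    for lam in (0.0, 0.5, 1.0):
        order = rank_jobs(relevance, bids, lam)
        rel, rev = evaluate(order, relevance, bids, position_weights)
        print(f"lam={lam:.1f} order={order} relevance={rel:.4f} revenue={rev:.4f}")
```

Because the position weights are nonincreasing and the objective is additive across slots, sorting by the blended score maximizes $(1-\lambda)\cdot\text{relevance} + \lambda\cdot\text{revenue}$ (a rearrangement-inequality argument); `brute_force_best` confirms this on small instances. Sweeping $\lambda$ traces out the frontier along which a platform trades relevance for revenue.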

High Dimensional Decision Making, Upper and Lower Bounds

May 02, 2021

Abstract: A decision maker's utility depends on her action $a\in A \subset \mathbb{R}^d$ and the payoff-relevant state of the world $\theta\in \Theta$. One can define the value of acquiring new information as the difference between the maximum expected utility after and before information acquisition. In this paper, I find asymptotic results on the expected value of information as $d \to \infty$, using tools from the theory of (sub-)Gaussian processes and generic chaining.
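A worked example helps show why dimension matters. Assume, purely for illustration and not as the paper's model, that $u(a,\theta) = \langle a, \theta\rangle$, that $A$ is the Euclidean unit ball, that $\theta \sim N(0, I_d)$, and that the information fully reveals $\theta$. The value of information is then $\mathbb{E}[\max_{a\in A} u(a,\theta)] - \max_{a\in A}\mathbb{E}[u(a,\theta)] = \mathbb{E}\lVert\theta\rVert - 0$, the expected supremum of the Gaussian process $a \mapsto \langle a,\theta\rangle$ over $A$, which is the kind of quantity generic chaining bounds. In this toy case it has the closed form $\sqrt{2}\,\Gamma(\tfrac{d+1}{2})/\Gamma(\tfrac{d}{2})$ and grows like $\sqrt{d}$. The function names below are hypothetical.

```python
import math
import random


def exact_value_of_information(d: int) -> float:
    """E||theta|| for theta ~ N(0, I_d), i.e. E[max over the unit ball of <a, theta>].

    Uses E||theta|| = sqrt(2) * Gamma((d + 1) / 2) / Gamma(d / 2), evaluated in log space.
    """
    return math.sqrt(2.0) * math.exp(math.lgamma((d + 1) / 2) - math.lgamma(d / 2))


def monte_carlo_value_of_information(d: int, n_samples: int = 20_000, seed: int = 0) -> float:
    """Simulated post-information value minus pre-information value in the toy model."""
    rng = random.Random(seed)
    post = 0.0
    for _ in range(n_samples):
        theta = [rng.gauss(0.0, 1.0) for _ in range(d)]
        # Once theta is observed, the best action is a = theta / ||theta||, with utility ||theta||.
        post += math.sqrt(sum(x * x for x in theta))
    post /= n_samples
    # Before observing theta, E[theta] = 0, so every a in the ball has expected utility 0.
    pre = 0.0
    return post - pre


if __name__ == "__main__":
    for d in (1, 10, 100, 1_000, 10_000):
        voi = exact_value_of_information(d)
        print(f"d={d:>6}  VoI={voi:9.4f}  VoI/sqrt(d)={voi / math.sqrt(d):.4f}")
    # Cross-check the closed form by simulation in a moderate dimension.
    print(monte_carlo_value_of_information(50), exact_value_of_information(50))
```

The ratio $\text{VoI}/\sqrt{d}$ approaches $1$ as $d$ grows; other choices of $A$, $u$, or the prior change the rate, which is what general upper and lower bounds must capture.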

Robust Experimentation in the Continuous Time Bandit Problem

Mar 31, 2021

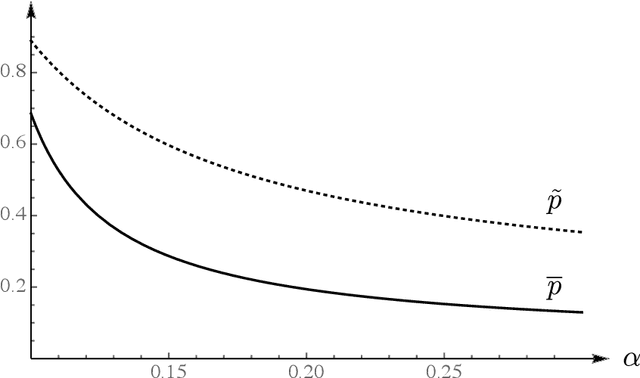

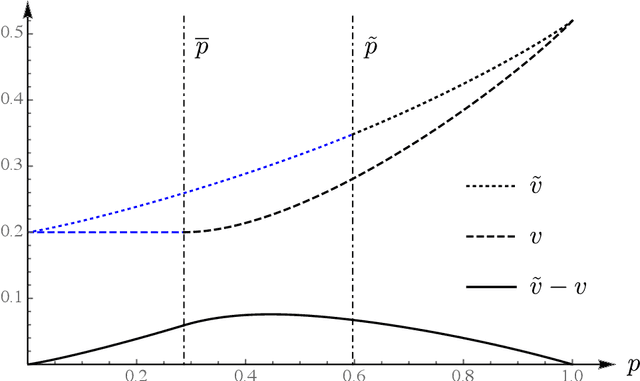

Abstract: We study the experimentation dynamics of a decision maker (DM) in a two-armed bandit setup (Bolton and Harris, 1999), where the agent holds ambiguous beliefs about the distribution of the return process of one arm and is certain about the other. The DM entertains multiplier preferences à la Hansen and Sargent (2001); we therefore frame the decision-making environment as a two-player differential game against nature in continuous time. We characterize the DM's value function and her optimal experimentation strategy, which turns out to follow a cut-off rule with respect to her belief process. The belief threshold for exploring the ambiguous arm is found in closed form and is shown to be increasing in the ambiguity aversion index. We then study the effect of providing an unambiguous information source about the ambiguous arm. Interestingly, we show that the exploration threshold rises unambiguously as a result of this new information source, thereby leading to more conservatism. This analysis also sheds light on the efficient time to seek an expert opinion.
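The paper's closed-form robust threshold is not reproduced here. As a point of reference for the cut-off rule, the Python sketch below computes the ambiguity-free, single-agent benchmark of Bolton and Harris (1999). The notation is assumed here, not taken from the paper: the safe arm pays flow $s$, the risky arm pays Brownian flow with drift $\mu_1$ (good state) or $\mu_0$ (bad state) and volatility $\sigma$, with $\mu_0 < s < \mu_1$, and the DM discounts at rate $r$. Value matching and smooth pasting give $p^* = \frac{\zeta(s-\mu_0)}{(\zeta+1)(\mu_1-s)+\zeta(s-\mu_0)}$, where $\zeta(\zeta+1) = 2r/\Phi$ and $\Phi = ((\mu_1-\mu_0)/\sigma)^2$. The abstract states that the robust threshold increases with the ambiguity aversion index; this code does not model that effect. `BanditParams` and the function names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class BanditParams:
    """Hypothetical parameters for the single-agent Bolton-Harris benchmark (no ambiguity)."""
    s: float      # flow payoff of the safe arm
    mu1: float    # drift of the risky arm in the good state (mu1 > s)
    mu0: float    # drift of the risky arm in the bad state (mu0 < s)
    sigma: float  # volatility of the risky arm's Brownian payoff
    r: float      # discount rate


def zeta(bp: BanditParams) -> float:
    """Positive root of zeta * (zeta + 1) = 2 r / Phi, where Phi = ((mu1 - mu0) / sigma) ** 2."""
    phi = ((bp.mu1 - bp.mu0) / bp.sigma) ** 2
    return 0.5 * (-1.0 + math.sqrt(1.0 + 8.0 * bp.r / phi))


def cutoff_belief(bp: BanditParams) -> float:
    """Belief p* in the good state above which the risky arm is explored."""
    z = zeta(bp)
    return z * (bp.s - bp.mu0) / ((z + 1.0) * (bp.mu1 - bp.s) + z * (bp.s - bp.mu0))


def expected_flow(belief: float, bp: BanditParams) -> float:
    """Expected flow payoff m(q) of the risky arm at belief q."""
    return belief * bp.mu1 + (1.0 - belief) * bp.mu0


def _homogeneous(belief: float, z: float) -> float:
    """Solution (1 - q) * ((1 - q) / q) ** zeta of the homogeneous HJB equation; vanishes at q = 1."""
    return (1.0 - belief) * ((1.0 - belief) / belief) ** z


def value(belief: float, bp: BanditParams) -> float:
    """Normalized value u(q): s at or below p*, m(q) + C * (1 - q) * ((1 - q) / q) ** zeta above it."""
    z = zeta(bp)
    p_star = cutoff_belief(bp)
    if belief <= p_star:
        return bp.s  # stopping region: play safe, belief stays frozen
    c = (bp.s - expected_flow(p_star, bp)) / _homogeneous(p_star, z)  # value matching at p*
    return expected_flow(belief, bp) + c * _homogeneous(belief, z)


def explore_risky(belief: float, bp: BanditParams) -> bool:
    """Cut-off rule: use the risky arm iff the belief in the good state exceeds p*."""
    return belief > cutoff_belief(bp)


if __name__ == "__main__":
    bp = BanditParams(s=1.0, mu1=2.0, mu0=0.0, sigma=1.0, r=1.0)
    myopic = (bp.s - bp.mu0) / (bp.mu1 - bp.mu0)
    print(f"p* = {cutoff_belief(bp):.4f}, myopic cutoff = {myopic:.4f}")
    for q in (0.1, 0.3, 0.5, 0.9):
        print(q, explore_risky(q, bp), round(value(q, bp), 4))
```

As $r$ grows (or the signal-to-noise ratio $\Phi$ falls), $\zeta$ grows and $p^*$ rises toward the myopic cutoff $(s-\mu_0)/(\mu_1-\mu_0)$, at which the risky arm's expected flow equals $s$; the gap between the two reflects the option value of experimentation.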
