Farhad Aghili

Hybrid Visual Servoing of Tendon-driven Continuum Robots

Feb 19, 2025

Abstract: This paper introduces a novel Hybrid Visual Servoing (HVS) approach for controlling tendon-driven continuum robots (TDCRs). The HVS system combines Image-Based Visual Servoing (IBVS) with Deep Learning-Based Visual Servoing (DLBVS) to overcome the limitations of each method and improve overall performance. IBVS offers higher accuracy and faster convergence in feature-rich environments, while DLBVS enhances robustness against disturbances and offers a larger workspace. By enabling smooth transitions between IBVS and DLBVS, the proposed HVS ensures effective control in dynamic, unstructured environments. The effectiveness of this approach is validated through simulations and real-world experiments, demonstrating that HVS achieves reduced iteration time, faster convergence, lower final error, and smoother performance compared to DLBVS alone, while maintaining DLBVS's robustness in challenging conditions such as occlusions, lighting changes, actuator noise, and physical impacts.
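One way to picture a smooth transition between the two controllers is a confidence-weighted blend of their velocity commands. The sketch below is only an illustration under stated assumptions: the feature-count cue, the thresholds `lo` and `hi`, and the function names are hypothetical and are not taken from the paper, whose actual switching rule is not described in the abstract.

```python
def blend_weight(num_features, lo=4, hi=12):
    """Weight given to IBVS, in [0, 1].

    Assumption: the number of tracked image features is used as a
    confidence cue; `lo` and `hi` are hypothetical thresholds.
    """
    if num_features <= lo:
        return 0.0  # too few features: rely on DLBVS only
    if num_features >= hi:
        return 1.0  # feature-rich scene: rely on IBVS only
    s = (num_features - lo) / (hi - lo)
    return s * s * (3.0 - 2.0 * s)  # smoothstep avoids abrupt switching


def hybrid_command(v_ibvs, v_dlbvs, num_features):
    """Blend two velocity commands element by element."""
    w = blend_weight(num_features)
    return [w * a + (1.0 - w) * b for a, b in zip(v_ibvs, v_dlbvs)]
```

With few features the command equals the DLBVS output, with many it equals the IBVS output, and in between the weight varies continuously so the commanded velocity does not jump.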

Impedance Control for Manipulators Handling Heavy Payloads

Sep 23, 2024

Abstract: Attaching a heavy payload to the wrist force/moment (F/M) sensor of a manipulator can cause conventional impedance controllers to fail in establishing the desired impedance due to the presence of non-contact forces; namely, the inertial and gravitational forces of the payload. This paper presents an impedance control scheme designed to accurately shape the force-response of such a manipulator without requiring acceleration measurements. As a result, neither wrist accelerometers nor dynamic estimators for compensating inertial load forces are necessary. The proposed controller employs an inner-outer loop feedback structure, which not only addresses uncertainties in the robot's dynamics but also enables the specification of a general target impedance model, including nonlinear models. Stability and convergence of the controller are analytically proven, with results showing that the control input remains bounded as long as the desired inertia differs from the payload inertia. Experimental results confirm that the proposed impedance controller effectively shapes the impedance of a manipulator carrying a heavy load according to the desired impedance model.
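For orientation, a common linear special case of a target impedance model is written out here. The symbols ($M_d$, $D_d$, $K_d$, $f_c$) are illustrative assumptions rather than the paper's notation, and the abstract states that nonlinear target models are also admitted.

$$
M_d \ddot{x} + D_d \dot{x} + K_d x = f_c
$$

Here $M_d$, $D_d$, and $K_d$ denote the desired inertia, damping, and stiffness, and $f_c$ is the contact force alone. A wrist F/M sensor carrying a heavy payload also registers the payload's inertial and gravitational forces, so feeding the raw sensor reading into such a model would not produce the desired impedance.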

Adaptive Visual Servoing for On-Orbit Servicing

Sep 09, 2024

Abstract: This paper presents an adaptive visual servoing framework for robotic on-orbit servicing (OOS), specifically designed for capturing tumbling satellites. The vision-guided robotic system is capable of selecting optimal control actions in the event of partial or complete vision system failure, particularly in the short term. The autonomous system accounts for physical and operational constraints, executing visual servoing tasks to minimize a cost function. A hierarchical control architecture is developed, integrating a variant of the Iterative Closest Point (ICP) algorithm for image registration, a constrained noise-adaptive Kalman filter, fault detection and recovery logic, and a constrained optimal path planner. The dynamic estimator provides real-time estimates of unknown states and uncertain parameters essential for motion prediction, while ensuring consistency through a set of inequality constraints. It also adjusts the Kalman filter parameters adaptively in response to unexpected vision errors. In the event of vision system faults, a recovery strategy is activated, guided by fault detection logic that monitors the visual feedback via the metric fit error of image registration. The estimated/predicted pose and parameters are subsequently fed into an optimal path planner, which directs the robot's end-effector to the target's grasping point. This process is subject to multiple constraints, including acceleration limits, smooth capture, and line-of-sight maintenance with the target. Experimental results demonstrate that the proposed visual servoing system successfully captured a free-floating object, despite complete occlusion of the vision system.

Seamless Capture and Stabilization of Spinning Satellites By Space Robots with Spinning Base

Feb 02, 2024

Abstract: This paper introduces an innovative guidance and control method for simultaneously capturing and stabilizing a fast-spinning target satellite, such as a spin-stabilized satellite, using a spinning-base servicing satellite equipped with a robotic manipulator, joint locks, and reaction wheels (RWs). The method involves controlling the RWs of the servicing satellite to replicate the spinning motion of the target satellite, while locking the manipulator's joints to achieve spin-matching. This maneuver makes the target stationary with respect to the rotating frame of the servicing satellite located at its center-of-mass (CoM), simplifying the robot capture trajectory planning and eliminating post-capture trajectory planning entirely. In the next phase, the joints are unlocked, and a coordination controller drives the robotic manipulator to capture the target satellite while maintaining zero relative rotation between the servicing and target satellites. The spin stabilization phase begins after completing the capture phase, where the joints are locked to form a single tumbling rigid body consisting of the rigidly connected servicing and target satellites. An optimal controller applies negative control torques to the RWs to dampen out the tumbling motion of the interconnected satellites as quickly as possible, subject to the actuation torque limit of the RWs and the maximum torque exerted by the manipulator's end-effector.

Autonomous Robots for Active Removal of Orbital Debris

Nov 11, 2023

Abstract: This paper presents a vision guidance and control method for autonomous robotic capture and stabilization of orbital objects in a time-critical manner. The method takes into account various operational and physical constraints, including ensuring a smooth capture, handling line-of-sight (LOS) obstructions of the target, and staying within the acceleration, force, and torque limits of the robot. Our approach involves the development of an optimal control framework for an eye-to-hand visual servoing method, which integrates two sequential sub-maneuvers: a pre-capturing maneuver and a post-capturing maneuver, aimed at achieving the shortest possible capture time. Integrating both control strategies enables a seamless transition between them, allowing for real-time switching to the appropriate control system. Moreover, both controllers are adaptively tuned through vision feedback to account for the unknown dynamics of the target. The integrated estimation and control architecture also facilitates fault detection and recovery of the visual feedback in situations where the feedback is temporarily obstructed. The experimental results demonstrate the successful execution of pre- and post-capturing operations on a tumbling and drifting target, despite multiple operational constraints.

Coordination Control of Free-Flyer Manipulators

Mar 20, 2023

Abstract: This paper presents a method for guiding a robot manipulator to capture and bring a tumbling satellite to a state of rest. The proposed approach includes developing a coordination control for the combined system of the space robot and the target satellite, where the satellite acts as the manipulator payload. This control ensures that the robot tracks the optimal path while regulating the attitude of the chase vehicle to a desired value. Two optimal trajectories are then designed for the pre- and post-capture phases. In the pre-capturing phase, the manipulator manoeuvres are optimized by minimizing a cost function that includes the time of travel and the weighted norms of the end-effector velocity and acceleration, subject to the constraint that the robot end-effector and a grapple fixture on the satellite arrive at the rendezvous point with the same velocity. In the post-grasping phase, the manipulator damps the initial velocity of the tumbling satellite in minimum time while ensuring that the magnitude of the torque applied to the satellite remains below a safe value. Overall, this method offers a promising solution for effectively capturing and bringing tumbling satellites to a state of rest.
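The pre-capture optimization described in this abstract can be written schematically as follows. This is a hedged sketch only: the symbols $r$ (end-effector position), $r_g$ (grapple-fixture position), $t_f$ (rendezvous time), and the weighting matrices $W_1$, $W_2$ are assumptions introduced for illustration and may differ from the paper's formulation.

$$
\min_{r(\cdot),\, t_f} \; J = \int_0^{t_f} \left( 1 + \dot{r}^{\top} W_1 \dot{r} + \ddot{r}^{\top} W_2 \ddot{r} \right) dt
\quad \text{subject to} \quad r(t_f) = r_g(t_f), \;\; \dot{r}(t_f) = \dot{r}_g(t_f)
$$

The constant term in the integrand integrates to the travel time $t_f$, the two quadratic terms are the weighted norms of velocity and acceleration, and the terminal constraints express that the end-effector and the grapple fixture meet at the rendezvous point with the same velocity.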

Automated Rendezvous & Docking Using 3D Vision

Nov 06, 2022

Abstract: The robustness and accuracy of a vision system for motion estimation of a tumbling target satellite are enhanced by an adaptive Kalman filter. This allows a vision-guided robot to complete the grasping of the target even if occlusion occurs during the operation. A complete dynamics model, including aspects of orbital mechanics, is incorporated for accurate estimation. Based on the model, an adaptive Kalman filter is developed that estimates not only the system states but also all the model parameters such as the inertia ratio, center-of-mass, and the rotation of the principal axes of the target satellite. An experiment is conducted by using a robotic arm to move a satellite mockup according to orbital mechanics while the satellite pose is measured by a laser camera system. The measurements are sent to the Kalman filter, which, in turn, drives another robotic arm to grasp the target. The results demonstrate successful grasping even if the vision system is blocked for several seconds.
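The reason a filter-driven robot can keep tracking through a short occlusion can be illustrated with a much simpler estimator than the one in the paper. The sketch below is a plain one-dimensional constant-velocity Kalman filter, not the adaptive filter with orbital dynamics and parameter estimation described above; the noise values `q` and `r`, the time step, and all function names are assumptions chosen for illustration. When the measurement is missing (`z is None`), the filter runs the prediction step only.

```python
def kf_step(x, P, z, dt=0.1, q=1e-4, r=1e-2):
    """One predict/update cycle for state x = (position, velocity).

    z is a position measurement, or None when the vision system is
    occluded. q and r are assumed process and measurement noise variances.
    """
    p, v = x
    # Predict with F = [[1, dt], [0, 1]] and Q = q * I
    p = p + dt * v
    P00 = P[0][0] + dt * (P[0][1] + P[1][0]) + dt * dt * P[1][1] + q
    P01 = P[0][1] + dt * P[1][1]
    P10 = P[1][0] + dt * P[1][1]
    P11 = P[1][1] + q
    if z is None:
        # Occlusion: propagate the model and let the uncertainty grow
        return (p, v), [[P00, P01], [P10, P11]]
    # Update with H = [1, 0]
    s = P00 + r
    k0, k1 = P00 / s, P10 / s
    innov = z - p
    p, v = p + k0 * innov, v + k1 * innov
    P_new = [[(1 - k0) * P00, (1 - k0) * P01],
             [P10 - k1 * P00, P11 - k1 * P01]]
    return (p, v), P_new


def run_demo(n_visible=30, n_occluded=20, dt=0.1, speed=1.0):
    """Track a target at constant speed, then lose the measurements."""
    x, P = (0.0, 0.0), [[1.0, 0.0], [0.0, 1.0]]
    truth = 0.0
    for _ in range(n_visible):
        truth += speed * dt
        x, P = kf_step(x, P, truth, dt)
    var_before = P[0][0]
    for _ in range(n_occluded):
        truth += speed * dt
        x, P = kf_step(x, P, None, dt)
    return x[0], truth, var_before, P[0][0]
```

Calling `run_demo()` returns the estimated and true positions after the occlusion together with the position variance before and after it. The estimate keeps following the target because the velocity was learned while measurements were available, while the variance increases to reflect the missing feedback.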

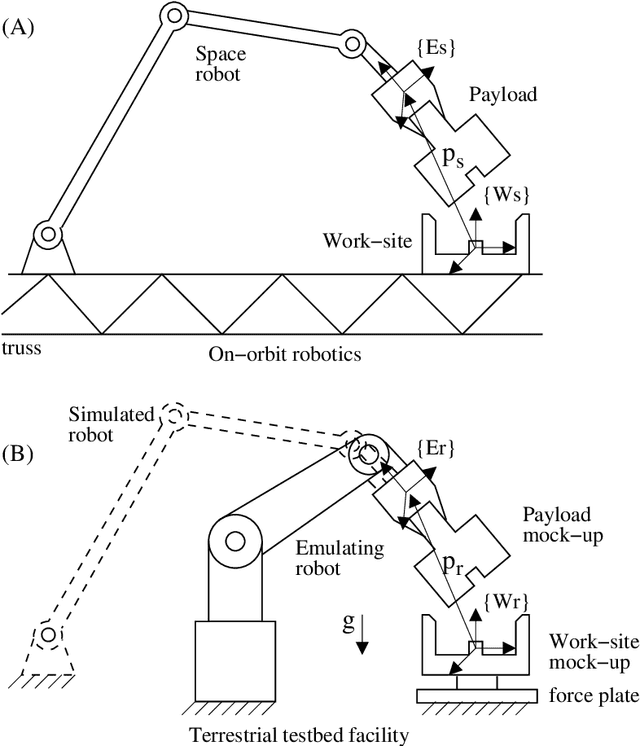

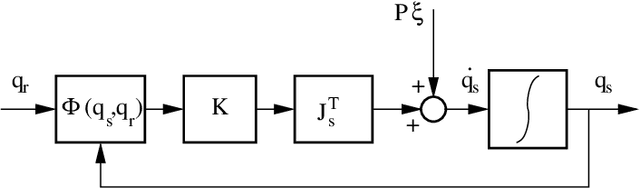

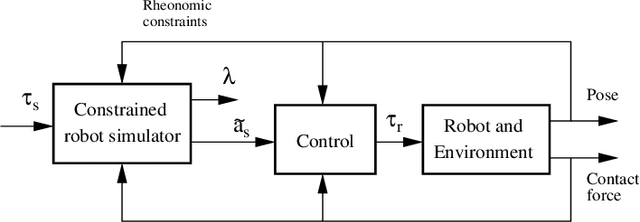

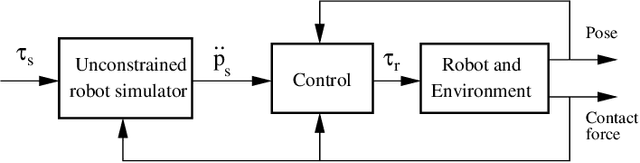

Hybrid Simulator for Space Docking and Robotic Proximity Operations

Oct 08, 2022

Abstract: In this work, we present a hybrid simulation methodology for space docking and robotic proximity operations. This methodology also allows for the emulation of a target robot operating in a complex environment by using an actual robot. The emulation scheme aims to replicate the dynamic behavior of the target robot interacting with the environment, without dealing with a complex calculation of the contact dynamics. This method forms a basis for the task verification of a flexible space robot. The actual emulating robot is structurally rigid, while the target robot can represent any class of robots, e.g., flexible, redundant, or space robots. Although the emulating robot is not dynamically equivalent to the target robot, the dynamical similarity can be achieved by using a control law developed herein. The effect of disturbances and actuator dynamics on the fidelity and the contact stability of the robot emulation is thoroughly analyzed.

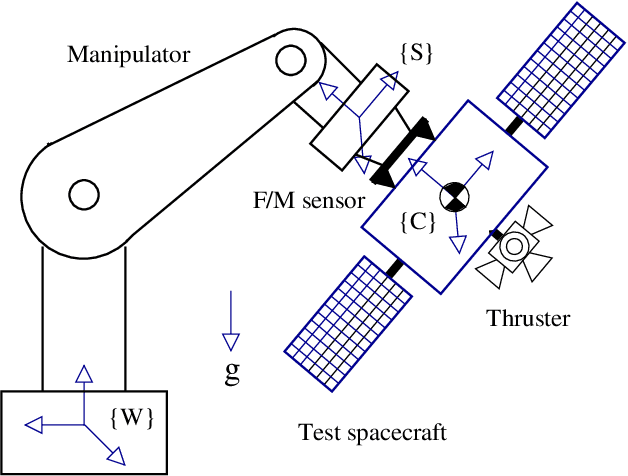

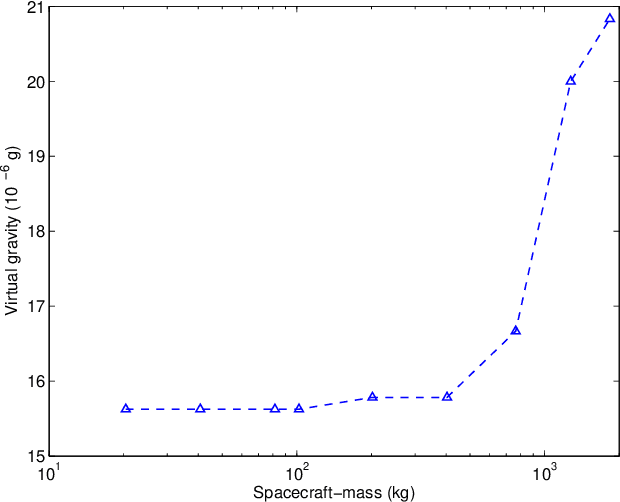

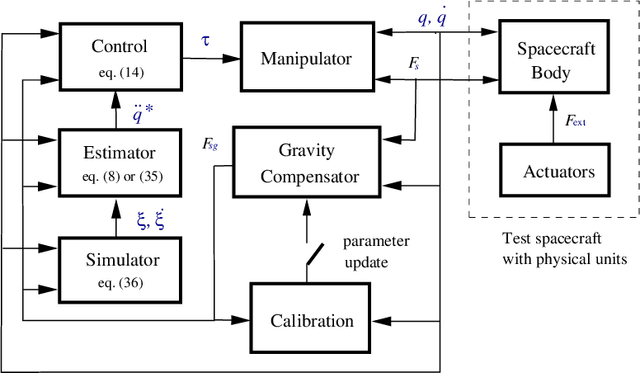

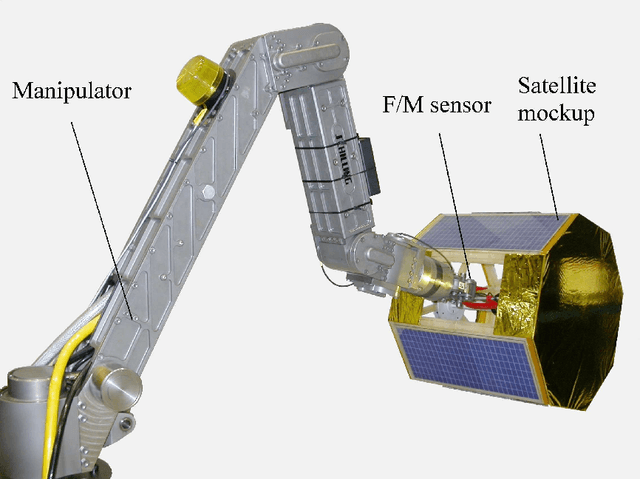

Six-DOF Spacecraft Dynamics Simulator For Testing Translation and Attitude Control

Sep 17, 2022

Abstract: This paper presents a method to control a manipulator system grasping a rigid-body payload so that the motion of the combined system in response to externally applied forces is the same as that of another free-floating rigid body (with different inertial properties). This allows zero-g emulation of a scaled spacecraft prototype under test in a 1-g laboratory environment. The controller, consisting of motion feedback and force/moment feedback, adjusts the motion of the test spacecraft so as to match that of the flight spacecraft, even if the latter has flexible appendages (such as solar panels) and the former is rigid. The stability of the overall system is analytically investigated, and the results show that the system remains stable provided that the inertial properties of the two spacecraft are different and that an upper bound on the norm of the inertia ratio of the payload to the manipulator is respected. Important practical issues such as calibration and sensitivity analysis to sensor noise and quantization are also presented.

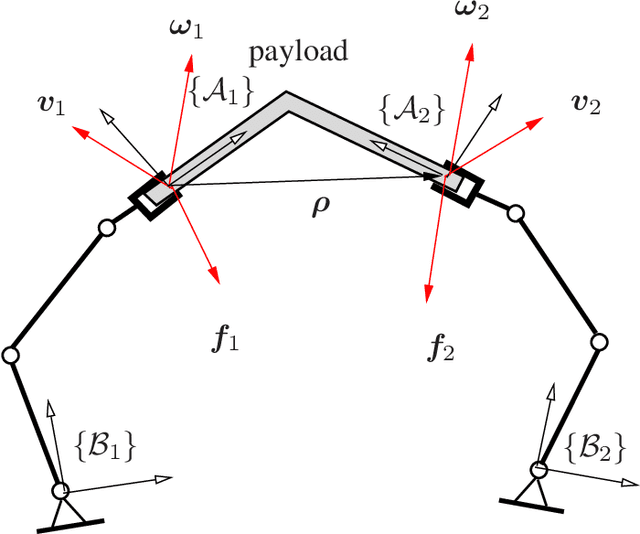

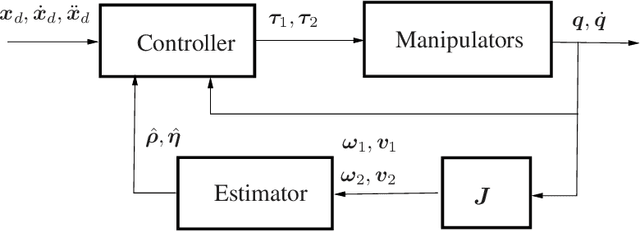

Adaptive Machine Learning for Cooperative Manipulators

Sep 06, 2022

Abstract: The problem of self-tuning control of cooperative manipulators forming a closed kinematic chain in the presence of an inaccurate kinematics model is addressed using adaptive machine learning. The kinematic parameters pertaining to the relative position/orientation uncertainties of the interconnected manipulators are updated online by two cascaded estimators in order to tune a cooperative controller for achieving accurate motion tracking with minimum-norm actuation force. This technique permits accurate calibration of the relative kinematics of the involved manipulators without needing high-precision end-point sensing or force measurements, and hence it is economically justified. Investigating the stability of the entire real-time estimator/controller system reveals that the convergence and stability of the adaptive control process can be ensured if i) the direction of the angular velocity vector does not remain constant over time, and ii) the initial kinematic parameter error is upper bounded by a scalar function of some known parameters. The adaptive controller is proved to be singularity-free even though the control law involves inverting the approximation of a matrix computed at the estimated parameters. Experimental results demonstrate the sensitivity of the tracking performance of the conventional inverse dynamic control scheme to kinematic inaccuracies, while the tracking error is significantly reduced by the self-tuning cooperative controller.
